Official statement

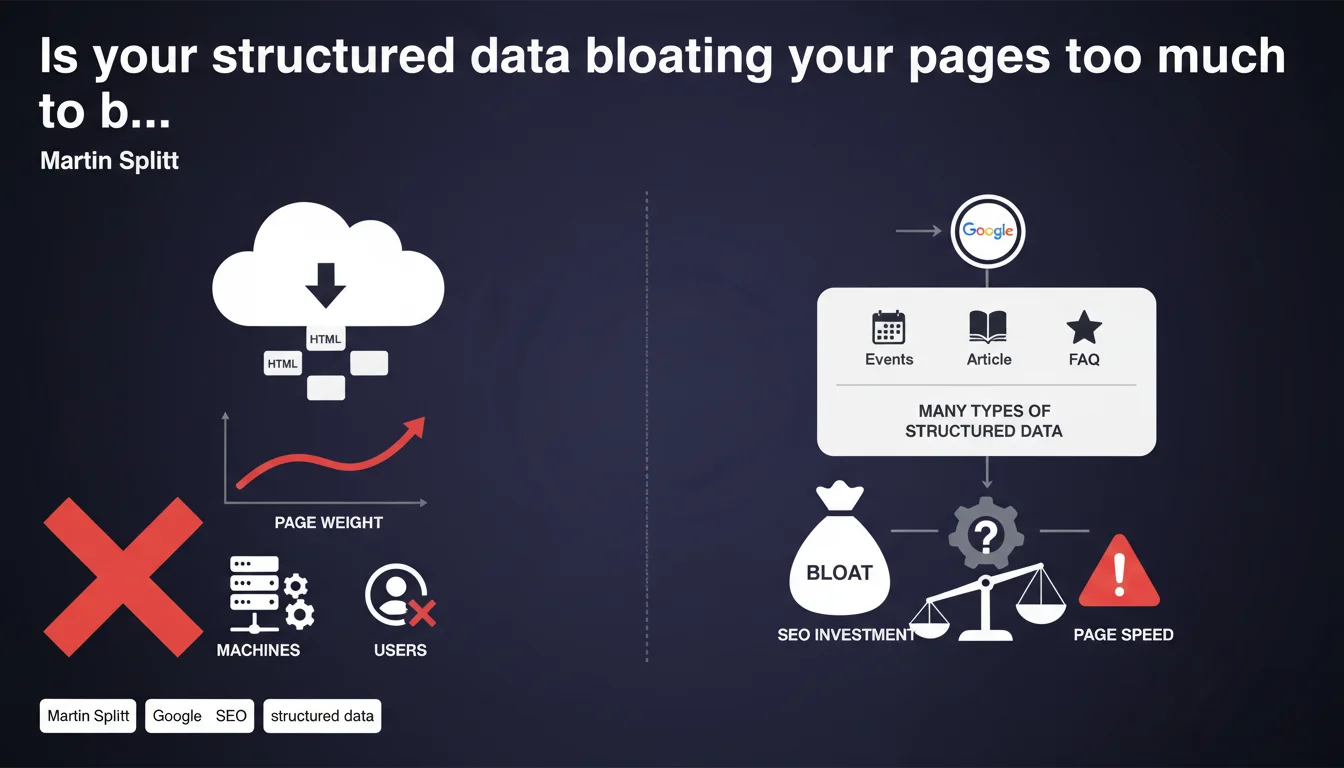

Google acknowledges that structured data can significantly increase a page's HTML weight, since this data is intended for machines rather than users. As the number of supported markup types grows, code bloat has become a real problem that can hurt performance if you're not careful.

What you need to understand

Why is Google raising the HTML weight question now?

Martin Splitt points out a technical paradox: the more Google encourages structured data adoption (and it does so heavily), the more code invisible to the user piles up. SEO professionals stack schemas (Product, FAQ, Review, Breadcrumb, Organization, LocalBusiness, VideoObject, and more) in a race for visibility in rich SERPs.

The problem? This JSON-LD or microdata code can easily represent 30 to 50% of a page's HTML weight. On e-commerce sites with complex product pages, you regularly see documents where structured data outweighs the visible content. And that's starting to raise performance questions.

What exactly do we mean by "intended for machines"?

Structured data is code the end user never sees. It isn't displayed on screen, doesn't contribute to the reading experience, and only has value for the robots parsing the HTML. It's pure markup, redundant with the visible content.

This redundancy is precisely what bloats the page: you describe your product in standard HTML for your visitors, then describe it again in full in JSON-LD for Google. Same information, twice. The signal-to-noise ratio of the HTML inevitably degrades.

At what point does this extra weight become problematic?

In practice, it depends on your weight budget and your Core Web Vitals. If your page already weighs 1.5 MB with optimized images, minified JS, and critical CSS, adding 50 KB of JSON-LD isn't neutral, especially on mobile with a poor connection.

TTFB and LCP can be impacted if the server has to generate and transmit more code. The browser must parse more HTML before displaying anything. It's marginal on lightweight pages, but becomes measurable on already heavy pages.

- Structured data is invisible to users, but it still adds weight that machines have to download and parse

- The growing list of supported schemas encourages unlimited stacking, with no clear guidelines

- Performance impact depends on total page weight and connection quality

- The ratio of user-facing code to machine-only code degrades as semantic markup inflates

SEO Expert opinion

Is this statement consistent with real-world observations?

Yes, absolutely. We regularly see that sites implementing every possible schema end up with pages that are 200-300 KB of pure HTML, half of which is JSON-LD. This is particularly visible on e-commerce sites that stack Product + AggregateRating + Offer + FAQPage + Breadcrumb + Organization.

But, and this is where it gets tricky, Google provides no threshold. At how many KB does the markup stop being worth its weight? No idea. Splitt identifies the problem without proposing guardrails, which leaves SEOs in the dark. [To verify] whether Google actively penalizes pages that are too heavy in structured data, or whether it's just an indirect effect through Core Web Vitals.

Should you reduce your structured data usage then?

No, and that's precisely the trap of this statement. Google says "watch your weight," but at the same time it keeps favoring rich results in SERPs. If you remove markup that currently earns you a rich result just to lighten the page, you lose that rich snippet and your CTR is likely to drop.

The real message to take away: be selective and pragmatic. Implement schemas that have measurable ROI (those that trigger visible rich snippets), not all those Google "supports." Nobody's asking you to mark up every detail of your content if it brings nothing to visibility.

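Which structured data types are truly a priority?

Focus on schemas with high SERP impact: Product/Offer for e-commerce, Recipe for food content, VideoObject for video content. FAQ markup only earns its place if Google still shows the rich result for your site: since 2023, FAQ rich results have been limited to well-known government and health sites, and HowTo rich results have been retired. The rest (Organization, BreadcrumbList, WebSite) is useful but secondary.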

If your page already exceeds 150 KB of HTML and your Core Web Vitals are tight, remove schemas that trigger no differentiated display in Google. That's dead weight. Test with Search Console and the Rich Results Test: if the schema appears nowhere, cut it.

Practical impact and recommendations

How do you identify if your structured data is too heavy?

First reflex: measure your raw HTML weight. Open the inspector, display the full source code, and compare JSON-LD size to the rest of the document. If your structured data represents more than 30% of HTML weight, that's a warning signal.
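
If you'd rather not eyeball the source, the comparison can be scripted. The following is a minimal sketch, assuming you already have the raw HTML as a string (fetched or saved however you like); the names `JsonLdMeter` and `jsonld_share` are made up for this example, and it only counts JSON-LD blocks, not microdata or RDFa attributes.

```python
from html.parser import HTMLParser


class JsonLdMeter(HTMLParser):
    """Collects the text inside <script type="application/ld+json"> tags."""

    def __init__(self):
        super().__init__()
        self._in_jsonld = False
        self.blocks = []

    def handle_starttag(self, tag, attrs):
        script_type = (dict(attrs).get("type") or "").lower()
        if tag == "script" and script_type == "application/ld+json":
            self._in_jsonld = True
            self.blocks.append("")

    def handle_data(self, data):
        # Script content can arrive in several chunks, so append.
        if self._in_jsonld:
            self.blocks[-1] += data

    def handle_endtag(self, tag):
        if tag == "script":
            self._in_jsonld = False


def jsonld_share(html: str) -> tuple[int, int, float]:
    """Return (jsonld_bytes, html_bytes, share) for an HTML document."""
    meter = JsonLdMeter()
    meter.feed(html)
    meter.close()
    jsonld_bytes = sum(len(block.encode("utf-8")) for block in meter.blocks)
    html_bytes = len(html.encode("utf-8"))
    share = jsonld_bytes / html_bytes if html_bytes else 0.0
    return jsonld_bytes, html_bytes, share
```

Run it on the uncompressed source of a few representative templates (product page, category page, article) rather than on a single URL, and compare the share to the 30% warning level mentioned above.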

Then test the performance impact. Temporarily remove all JSON-LD and measure your Core Web Vitals before and after with PageSpeed Insights or WebPageTest. If LCP improves by 200-300 ms once the JSON-LD is gone, the markup is costing you measurable performance.

What concrete actions should you take to optimize?

Prioritize schemas with ROI. Keep only those that trigger rich snippets or features visible in SERPs. Test each schema with the Rich Results Test and verify in Search Console that it actually generates enrichments.

Minify your JSON-LD. Remove unnecessary spaces, line breaks, optional properties without added value. A well-cleaned JSON-LD can lose 20-30% of weight without changing functionality.
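
As an illustration, here is one way to do that cleanup with Python's standard `json` module. It's a sketch under assumptions: the helper name `minify_jsonld` is invented for this example, and "unnecessary" is reduced to the purely mechanical cases (whitespace plus properties whose value is null, an empty string, an empty list, or an empty object). Deciding which filled-in optional properties to drop remains a manual call.

```python
import json


def minify_jsonld(raw: str) -> str:
    """Re-serialize JSON-LD without whitespace and without empty properties."""

    def prune(node):
        # Drop keys whose value is None, "", [] or {}; keep 0 and False.
        if isinstance(node, dict):
            cleaned = {key: prune(value) for key, value in node.items()}
            return {
                key: value
                for key, value in cleaned.items()
                if value not in (None, "", [], {})
            }
        if isinstance(node, list):
            return [prune(item) for item in node]
        return node

    compact = json.dumps(
        prune(json.loads(raw)),
        separators=(",", ":"),  # no spaces after commas and colons
        ensure_ascii=False,     # keep accented characters as-is, not \uXXXX
    )
    # Escape "</" so a value can never close the surrounding <script> tag.
    return compact.replace("</", "<\\/")
```

The output is meant to be dropped back into the `<script type="application/ld+json">` tag as-is; re-validate it with the Rich Results Test afterwards, since a property you considered empty may have been required.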

Avoid duplication between schemas. If you mark up a product with Product + Offer + AggregateRating, verify that you're not repeating the same info (name, description, image) in each object. Factor out as much as possible.
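
One common way to factor things out is to give a repeated entity an `@id`, describe it in full once, and point to it everywhere else with a bare `{"@id": ...}` reference. The sketch below assumes your nodes already carry `@id` values and live in the same JSON-LD document (for example under a single `@graph`); `dedupe_by_id` is a hypothetical helper, and whether Google resolves the reference for a given rich result is something to confirm with the Rich Results Test rather than take for granted.

```python
import json


def dedupe_by_id(doc):
    """Keep the first full definition of each @id; later copies become references."""
    seen = set()

    def walk(node):
        if isinstance(node, list):
            return [walk(item) for item in node]
        if isinstance(node, dict):
            node_id = node.get("@id")
            # A dict with only "@id" is already a reference: leave it alone.
            if node_id is not None and len(node) > 1:
                if node_id in seen:
                    return {"@id": node_id}
                seen.add(node_id)
            return {key: walk(value) for key, value in node.items()}
        return node

    return walk(doc)


if __name__ == "__main__":
    # Illustrative data only: the same Organization is embedded twice.
    org = {"@id": "#org", "@type": "Organization", "name": "Acme"}
    page = {
        "@context": "https://schema.org",
        "@graph": [
            {"@type": "Product", "name": "Shoe", "brand": dict(org)},
            {"@type": "WebPage", "publisher": dict(org)},
        ],
    }
    print(json.dumps(dedupe_by_id(page), separators=(",", ":")))
```

The function returns a new structure and leaves the input untouched, so you can diff the two and measure the saving before shipping anything.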

- Measure total HTML weight and isolate the structured data portion

- Compare Core Web Vitals with and without JSON-LD to quantify impact

- Keep only schemas that trigger visible rich snippets

- Minify JSON-LD: remove spaces, line breaks, empty properties

- Factor common data between schemas to avoid redundancy

- Test regularly with Rich Results Test and Search Console

- Monitor HTML weight evolution across deployments
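
The last item, monitoring HTML weight across deployments, can be turned into a simple budget check that runs after each build. This is a rough sketch: `check_weight_budget` is a made-up name, the default limits reuse the 150 KB and 30% figures from this article (they are not Google thresholds), and 1 KB is assumed to mean 1024 bytes.

```python
def check_weight_budget(
    html_bytes: int,
    jsonld_bytes: int,
    max_html_bytes: int = 150 * 1024,  # article's 150 KB HTML warning level
    max_jsonld_share: float = 0.30,    # article's 30% structured data share
) -> list[str]:
    """Return human-readable warnings; an empty list means within budget."""
    warnings = []
    if html_bytes > max_html_bytes:
        warnings.append(
            f"HTML is {html_bytes} bytes, over the {max_html_bytes} byte budget"
        )
    share = jsonld_bytes / html_bytes if html_bytes else 0.0
    if share > max_jsonld_share:
        warnings.append(
            f"JSON-LD is {share:.0%} of the HTML, over the {max_jsonld_share:.0%} budget"
        )
    return warnings
```

Feed it the byte counts of your key templates on every deployment and fail the build, or just log a warning, when the list is not empty.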

❓ Frequently Asked Questions

Can structured data really hurt my Core Web Vitals?

Should I remove some schemas to lighten my pages?

What is the acceptable weight limit for structured data?

Is JSON-LD heavier than microdata or RDFa?

Can structured data be compressed server-side?

🎥 From the same video 43

Other SEO insights extracted from this same Google Search Central video · published on 30/03/2026
