Official statement

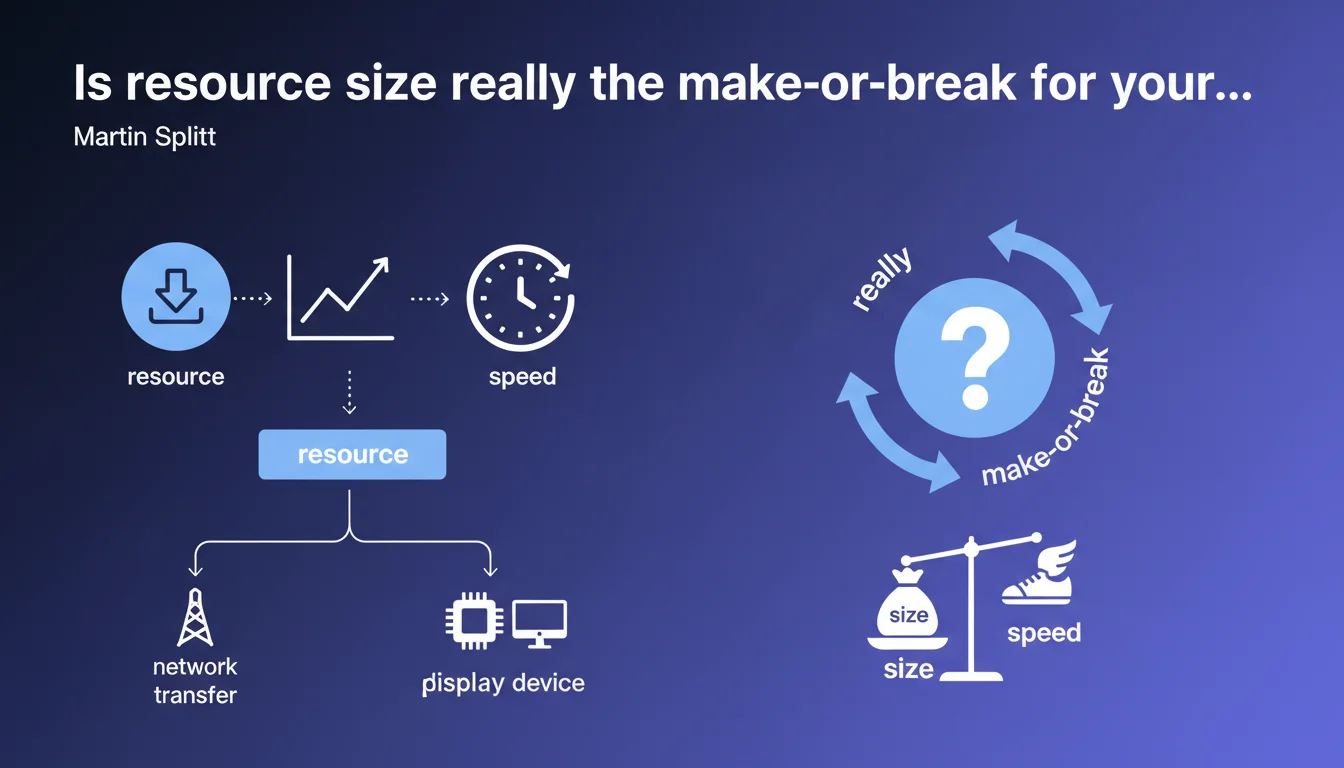

Martin Splitt confirms that a site's speed depends directly on the volume of data being transferred: the heavier the resources, the longer the network transfer takes and the more work the device's processor has to do. It's not groundbreaking news, but it is a timely reminder that optimizing resource weight remains a fundamental lever for improving performance.

What you need to understand

What's the logic behind this claim?

Google establishes a straightforward cause-and-effect relationship: the more data you send, the longer both transfer and processing take. Every extra byte has to travel over the network and then be handled by the device's CPU, which struggles most on mobile.

This statement targets two distinct bottlenecks. The first is network bandwidth: a 2 MB file necessarily takes longer to download than a 200 KB file, especially on unstable 3G or 4G. The second is the device's processing capacity: decompressing, parsing, and rendering heavy JavaScript or unoptimized images strains the processor and delays final display.
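The network half of this reasoning can be sketched with simple arithmetic. The example below is a minimal estimate, not a measurement: the 1.6 Mbps bandwidth and 150 ms round trip are assumed values for a throttled mobile link, and the function name is hypothetical. It ignores CPU processing, TCP slow start, and compression.

```python
def transfer_seconds(size_bytes: int, bandwidth_mbps: float, rtt_ms: float = 0.0) -> float:
    """Estimate download time as one round trip plus payload size over bandwidth."""
    bits = size_bytes * 8
    return rtt_ms / 1000 + bits / (bandwidth_mbps * 1_000_000)

# Assumed link characteristics (hypothetical): 1.6 Mbps down, 150 ms round trip.
small = transfer_seconds(200 * 1000, bandwidth_mbps=1.6, rtt_ms=150)
large = transfer_seconds(2 * 1000 * 1000, bandwidth_mbps=1.6, rtt_ms=150)
print(f"200 KB: {small:.2f} s, 2 MB: {large:.2f} s")
```

Under these assumptions the 2 MB file takes roughly nine seconds longer than the 200 KB one, before the device has parsed or rendered anything.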

Why does Google keep hammering home this obvious point?

Because websites keep ballooning without restraint. WordPress themes bloated with scripts, uncompressed HD images, JavaScript libraries imported entirely when you only use 10% of their functions — it all accumulates and tanks your Core Web Vitals.

Google wants to drive home that resource size isn't a cosmetic detail but a parameter that directly affects LCP (Largest Contentful Paint) and INP (Interaction to Next Paint, which replaced First Input Delay as a Core Web Vital in March 2024). If your hero image weighs 5 MB, your LCP will suffer no matter how good your hosting is.

What does this mean concretely for SEO?

The Core Web Vitals have been a ranking signal since June 2021. A bloated site = degraded metrics = a handicap in the SERPs, especially against competitors who've optimized their resources.

But there's another stake: crawl budget. A site serving 10 MB pages consumes more bandwidth on the Googlebot side. On large sites (e-commerce, catalogs), this can limit the number of pages crawled daily.

- Resource size directly influences the speed perceived by users and measured by Google.

- Network transfer and CPU processing are the two bottlenecks identified by Splitt.

- Optimizing resource weight improves Core Web Vitals (LCP and INP, the successor to FID) and potentially crawl budget.

- This claim primarily targets sites accumulating scripts and media without discernment.

SEO Expert opinion

Does this statement bring anything new to the table?

Let's be honest: no. This is a restatement of principles that have been well known for years. Any professional who has optimized a site knows that compressing images, minifying CSS/JS, and limiting HTTP requests speeds up rendering.

What's interesting is that Splitt chooses to reinforce this publicly. It signals that Google still sees too many sites neglecting these basics — and that the Chrome/Search team views this as a persistent problem.

What nuances should we add to this claim?

The statement deliberately remains generic and vague. It doesn't specify at what threshold size becomes problematic, or which type of resource weighs heaviest in the balance (images? scripts? fonts?).

In practice, not all bytes are created equal. A large JavaScript file blocking rendering is far more penalizing than an image lazy-loaded at the bottom of the page. The timing and criticality of each resource matter just as much as their raw weight.

Another point: the statement ignores the impact of the number of HTTP requests. A 500 KB site spread across 150 requests can be slower than a 1 MB site compressed into 10 well-optimized requests (HTTP/2, CDN, cache). Size is just one variable among many.
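A toy model makes the request-count argument concrete. The sketch below assumes requests are issued in waves, each wave costing one round trip; the 100 ms RTT, 10 Mbps bandwidth, and parallelism values are hypothetical, and the model ignores TLS setup, slow start, and dependency chains between resources.

```python
import math

def load_seconds(total_bytes: int, requests: int, rtt_ms: float,
                 bandwidth_mbps: float, parallel: int) -> float:
    """Toy model: requests go out in waves of `parallel`; each wave costs one RTT."""
    waves = math.ceil(requests / parallel)
    latency = waves * rtt_ms / 1000
    transfer = total_bytes * 8 / (bandwidth_mbps * 1_000_000)
    return latency + transfer

# 500 KB over 150 requests, 6 at a time (typical HTTP/1.1 per-host connection limit).
many_small = load_seconds(500_000, requests=150, rtt_ms=100, bandwidth_mbps=10, parallel=6)
# 1 MB over 10 requests, all multiplexed at once (assumed HTTP/2 behaviour).
few_large = load_seconds(1_000_000, requests=10, rtt_ms=100, bandwidth_mbps=10, parallel=10)
print(f"150 requests: {many_small:.1f} s, 10 requests: {few_large:.1f} s")
```

With these numbers the lighter but more fragmented page comes out slower, because round trips dominate once the payload itself is small.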

In what cases doesn't this rule really apply?

On ultra-fast connections (fiber, 5G), the difference between 500 KB and 1 MB becomes negligible for network transfer. The bottleneck then shifts to CPU processing and code quality (JavaScript parsing, layout shifts).

Similarly, on high-end devices, even voluminous resources are processed quickly. The problem arises mainly on budget smartphones and unstable mobile connections, which account for a significant share of global traffic. [To verify]: Google doesn't publish statistics on the actual distribution of device types among its users.

Practical impact and recommendations

What should you actually do to reduce resource size?

Start with a weight audit: use Lighthouse, WebPageTest, or GTmetrix to identify the heaviest resources. Focus on quick wins: image compression, conversion to WebP/AVIF, CSS/JS minification.
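Once an audit tool has produced a list of resources, ranking them is straightforward. The sketch below assumes the inventory has already been extracted into `(url, type, bytes)` tuples; the page data and the `summarize_weight` helper are hypothetical and not tied to the output format of any particular tool.

```python
from collections import defaultdict

def summarize_weight(resources, top_n=3):
    """Return (bytes per resource type, heaviest resources) from (url, type, bytes) tuples."""
    by_type = defaultdict(int)
    for _url, kind, size in resources:
        by_type[kind] += size
    heaviest = sorted(resources, key=lambda r: r[2], reverse=True)[:top_n]
    return dict(by_type), heaviest

# Hypothetical page inventory, e.g. transcribed from an audit report.
page = [
    ("/hero.jpg", "image", 1_800_000),
    ("/app.js", "script", 650_000),
    ("/vendor.js", "script", 420_000),
    ("/style.css", "stylesheet", 90_000),
    ("/logo.svg", "image", 12_000),
]
by_type, heaviest = summarize_weight(page, top_n=2)
for kind, size in sorted(by_type.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{kind:<10} {size / 1000:>8.0f} KB")
print("Heaviest:", [url for url, _, _ in heaviest])
```

Sorting by type first shows where the budget goes overall; the per-file ranking then points at the individual quick wins.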

For images, prioritize native lazy-loading (loading="lazy" attribute) on everything that's not above-the-fold. Serve modern formats (WebP, AVIF) with a JPEG fallback. Set explicit dimensions to prevent layout shifts.
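These three image rules can be combined in a small template helper. The sketch below is one possible way to generate the markup, assuming each image exists as `.avif`, `.webp`, and `.jpg` files sharing the same base path; that naming scheme and the `picture_html` function are hypothetical.

```python
from html import escape

def picture_html(base: str, alt: str, width: int, height: int,
                 above_fold: bool = False) -> str:
    """Build a <picture> element with AVIF/WebP sources and a JPEG fallback."""
    # Only images outside the initial viewport are lazy-loaded.
    loading = "eager" if above_fold else "lazy"
    base, alt = escape(base, quote=True), escape(alt, quote=True)
    return (
        "<picture>\n"
        f'  <source srcset="{base}.avif" type="image/avif">\n'
        f'  <source srcset="{base}.webp" type="image/webp">\n'
        f'  <img src="{base}.jpg" alt="{alt}" width="{width}" height="{height}" loading="{loading}">\n'
        "</picture>"
    )

print(picture_html("/img/team", "Our team", 800, 600))
```

The browser picks the first `<source>` it supports and falls back to the JPEG otherwise, while the explicit `width` and `height` let it reserve space before the file arrives.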

On the JavaScript side, hunt down unused libraries. Webpack Bundle Analyzer shows what each dependency contributes to the bundle, and the Coverage panel in Chrome DevTools shows what is loaded but never executed. Properly configured tree-shaking can shrink a bundle substantially, in some cases by half.
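Coverage data can also be processed offline. The sketch below assumes a coverage export shaped as a list of objects with `url`, `text`, and non-overlapping executed `ranges` (`start`/`end` character offsets); treat that shape as an assumption and check it against your own export. The sample entry is made up.

```python
def unused_ratio(entry: dict) -> float:
    """Share of a file's characters that fall outside the executed ranges."""
    total = len(entry["text"])
    if total == 0:
        return 0.0
    # Assumes ranges do not overlap, so their lengths can simply be summed.
    used = sum(r["end"] - r["start"] for r in entry["ranges"])
    return 1 - used / total

# Hypothetical entry mimicking one item of a coverage export.
entry = {"url": "/vendor.js", "text": "x" * 1000, "ranges": [{"start": 0, "end": 100}]}
print(f"{entry['url']}: {unused_ratio(entry):.0%} unused")
```

Files with a high unused share on key templates are the first candidates for code splitting or removal.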

What mistakes should you avoid when optimizing?

Don't sacrifice visual quality to the point of pixelated images. A blurry image harms user experience and conversions — slight extra weight beats perceptible degradation.

Also avoid lazy-loading every image thoughtlessly. The hero image (LCP) must load immediately. Lazy-loading this critical resource degrades LCP instead of improving it.

Another pitfall: over-aggressive caching. Setting a very long cache lifetime on every resource can prevent urgent fixes from reaching users. Use cache-busting (a content hash in the filename) so that each new version of an asset gets a new URL.
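Filename hashing is usually handled by the build tool, but the idea fits in a few lines. The sketch below is a minimal illustration, assuming an 8-character SHA-256 prefix is enough to tell versions apart; the `hashed_name` helper and the file paths are hypothetical.

```python
import hashlib
from pathlib import PurePosixPath

def hashed_name(path: str, content: bytes, digest_len: int = 8) -> str:
    """Insert a short content hash before the file extension (name.<hash>.ext)."""
    digest = hashlib.sha256(content).hexdigest()[:digest_len]
    p = PurePosixPath(path)
    return str(p.with_name(f"{p.stem}.{digest}{p.suffix}"))

v1 = hashed_name("static/app.js", b"console.log('v1');")
v2 = hashed_name("static/app.js", b"console.log('v2');")
print(v1)
print(v2)
```

Because the name changes whenever the content does, hashed files can be cached for a long time while a fix still reaches users as soon as the HTML references the new name.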

How do you verify your site complies?

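Regularly measure your Core Web Vitals via Search Console (field data from real users) and PageSpeed Insights (Lighthouse lab data, plus field data when enough is available). Target LCP < 2.5 s, INP < 200 ms (INP replaced FID as a Core Web Vital in March 2024), and CLS < 0.1.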
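A threshold check like this is easy to automate once the numbers are exported. The sketch below is a minimal example with made-up sample values; the metric keys and the `failing_metrics` helper are hypothetical names, not part of any Google API.

```python
# "Good" thresholds: LCP and CLS as quoted above; 200 ms is Google's published
# threshold for INP, the interactivity metric that succeeded FID.
GOOD_THRESHOLDS = {"lcp_s": 2.5, "inp_ms": 200, "cls": 0.1}

def failing_metrics(measured: dict) -> list:
    """Return the names of measured metrics that exceed their 'good' threshold."""
    return [
        name for name, limit in GOOD_THRESHOLDS.items()
        if name in measured and measured[name] > limit
    ]

# Hypothetical 75th-percentile field values for one page.
sample = {"lcp_s": 3.1, "inp_ms": 180, "cls": 0.05}
print(failing_metrics(sample))
```

Metrics missing from the input are skipped rather than reported as failures, which suits pages with too little field data.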

Use continuous monitoring (SpeedCurve, Calibre, Treo) to catch regressions. A plugin addition or theme update can easily push page weight up 30% overnight.
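The same kind of regression can be caught with a simple budget check in a deployment pipeline. The sketch below assumes you record total page weight in bytes for each release; the 10% tolerance and the `weight_regression` helper are illustrative choices, not a recommendation from any of the tools named above.

```python
def weight_regression(baseline_bytes: int, current_bytes: int, max_growth: float = 0.10):
    """Return (relative growth, True if growth exceeds the allowed budget)."""
    growth = (current_bytes - baseline_bytes) / baseline_bytes
    return growth, growth > max_growth

# Hypothetical measurements before and after a theme update.
growth, regressed = weight_regression(1_200_000, 1_560_000)
print(f"Page weight changed by {growth:+.0%}; regression: {regressed}")
```

Failing the build when `regressed` is true turns an overnight 30% jump into something that gets reviewed before it ships.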

- Audit resource weight with Lighthouse or WebPageTest

- Compress and convert images to WebP/AVIF

- Minify CSS/JS and eliminate dead code (tree-shaking)

- Lazy-load non-critical images, but not the hero image

- Use a CDN to reduce network latency

- Implement cache-busting for static assets

- Monitor Core Web Vitals via Search Console and third-party tools

❓ Frequently Asked Questions

Is resource size the only factor that affects a site's speed?

Should you systematically lazy-load all images?

Which image format should you favor to reduce weight without losing quality?

Does reducing resource size improve crawl budget?

How do you measure the real impact of resource optimization on rankings?

🎥 From the same video 43

Other SEO insights extracted from this same Google Search Central video · published on 30/03/2026
