Official statement

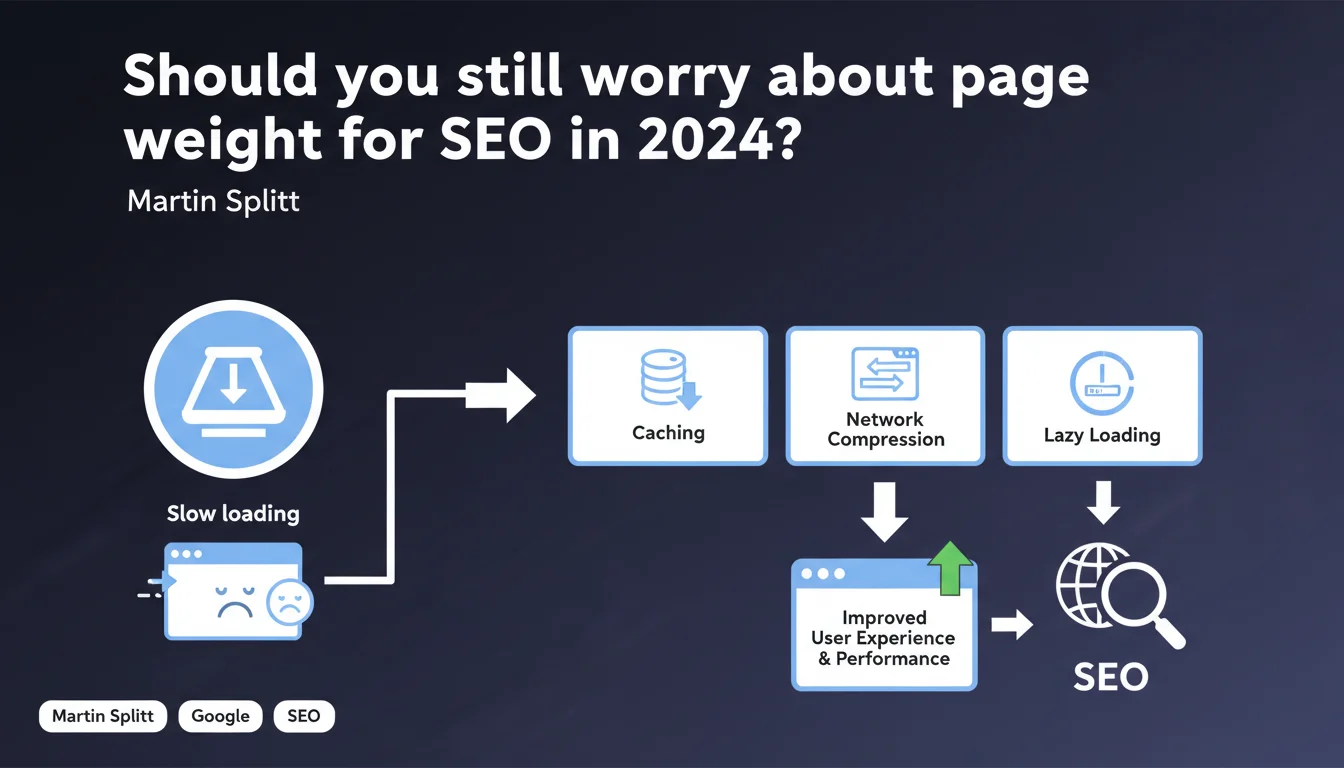

Google recommends three technical levers to neutralize the impact of page weight: caching, network compression, and lazy loading. The goal: maintain a smooth user experience without necessarily reducing the total volume of data. These mechanisms allow you to decouple technical weight from perceived performance.

What you need to understand

Why does Google insist on mechanisms rather than pure weight reduction?

Google's position reflects a reality: the modern web is visually rich, and asking sites to return to 500 KB pages is unrealistic. Users expect high-resolution images, embedded videos, and interactive interfaces.

Rather than fighting this trend, Google recommends managing resource delivery intelligently. Caching avoids repeated downloads, compression sharply reduces the volume transferred, and lazy loading defers whatever isn't immediately visible.

What are the specific technical mechanisms mentioned and their exact role?

Caching (browser cache, CDN) stores static resources locally or on geographically close servers. Result: a resource is downloaded only once, then reused.

Network compression (Gzip, Brotli) compresses text files (HTML, CSS, JS) before transmission. A 200 KB CSS file can drop to 40 KB once compressed, an 80% reduction in transferred bytes.
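To make the ratio concrete, here is a minimal sketch that measures Gzip savings on a text asset using only Python's standard library. The stylesheet is synthetic and deliberately repetitive, so the ratio it produces is illustrative and will differ from real files; `compression_savings` is a hypothetical helper name.

```python
import gzip


def compression_savings(original_bytes: int, compressed_bytes: int) -> float:
    """Return the share of bytes saved by compression, as a percentage."""
    if original_bytes <= 0:
        raise ValueError("original_bytes must be positive")
    return (1 - compressed_bytes / original_bytes) * 100


# Synthetic stylesheet (assumption: invented content, for illustration only).
css = "\n".join(
    f".card-{i} {{ margin: 0 auto; padding: 16px; color: #333333; }}"
    for i in range(2000)
).encode("utf-8")

# Level 6 is gzip's usual default trade-off between speed and ratio.
compressed = gzip.compress(css, compresslevel=6)

print(f"original:   {len(css)} bytes")
print(f"compressed: {len(compressed)} bytes")
print(f"savings:    {compression_savings(len(css), len(compressed)):.1f}%")
```

Running the same measurement on your own CSS and JS files gives a more honest figure than any rule of thumb.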

Lazy loading defers loading images and iframes outside the viewport. If a page contains 50 images but only 5 are visible above the fold, only those 5 load initially.
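The 50-image example can be turned into a quick estimate. This is a sketch under stated assumptions: every image weighs 200 KB (a made-up figure), and the browser fetches exactly the visible images on first render. In practice browsers start fetching lazy images slightly before they enter the viewport, so the real saving is somewhat smaller.

```python
def image_kb_on_first_render(image_sizes_kb, visible_count, lazy):
    """Sum the image weight fetched before any scrolling happens."""
    if lazy:
        # Only the images above the fold are requested up front.
        return sum(image_sizes_kb[:visible_count])
    # Without lazy loading, every image on the page is requested.
    return sum(image_sizes_kb)


sizes = [200] * 50  # assumption: 50 images of 200 KB each
eager_kb = image_kb_on_first_render(sizes, visible_count=5, lazy=False)
lazy_kb = image_kb_on_first_render(sizes, visible_count=5, lazy=True)

print(eager_kb, lazy_kb)  # 10000 1000
```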

Is this approach sufficient to guarantee good performance?

No. These mechanisms mitigate the impact of weight; they don't eliminate it. A 10 MB page remains problematic even with caching and compression, especially on mobile over a 3G connection.

Google isn't saying "ignore total weight". It's saying "use these tools so weight doesn't impact perceived experience". Crucial nuance.

- Caching: reduces repeated requests

- Network compression: decreases transferred volume (text only)

- Lazy loading: prioritizes immediately visible content

- These mechanisms complement but don't replace actual weight optimization

- Impact on Core Web Vitals (LCP, CLS) depends on implementation

SEO Expert opinion

Is this statement coherent with Core Web Vitals?

Yes, but with a major caveat. Core Web Vitals measure actual experience: LCP (time to render the largest visible element), INP (responsiveness to interaction, which replaced FID in March 2024), and CLS (visual stability). The three mechanisms cited can improve these metrics, provided they're properly implemented.

Poorly configured lazy loading can actually degrade LCP if the hero image is deferred. Caching does nothing on first visit. Brotli compression requires server support that not all hosting providers natively offer.

Google remains vague on acceptable thresholds. At what weight do these mechanisms become insufficient? [To verify] — no quantified data accompanies this recommendation.

What risks does this approach present in practice?

The main danger: relying exclusively on these technical crutches and neglecting actual resource optimization. I've audited sites with lazy loading on 200 images at 3 MB each — technically "compliant" with recommendations, catastrophic for mobile experience.

Other pitfalls observed in the field: lazy loading that triggers CLS (Cumulative Layout Shift) because image dimensions aren't reserved, and Brotli compression that overloads the server CPU on undersized infrastructure.

Let's be honest: this statement gives a partial green light to sometimes questionable practices. "My pages are 5 MB but I have lazy loading so it's fine" — no, it probably isn't.

In which contexts are these recommendations insufficient?

On mobile with a slow connection, lazy loading doesn't compensate for a bloated DOM or blocking JavaScript. Caching doesn't help new visitors (often the majority on some sites).

For e-commerce sites with hundreds of product listings, lazy loading alone doesn't solve Time to Interactive. If the main JavaScript bundle weighs 800 KB, even compressed, parsing and executing it blocks the main thread.

Practical impact and recommendations

What needs to be implemented concretely?

Caching: configure HTTP Cache-Control and Expires headers for your static resources (CSS, JS, images, fonts). Target 1-year duration for versioned files. Use a CDN to distribute geographically.
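One way to express this policy is a small function that picks a `Cache-Control` value per file. This is a sketch, not a definitive configuration: the fingerprint pattern (an 8+ character hex hash before the extension, as in `app.3f9a1c2b.js`), the one-hour fallback, and the name `cache_control_for` are all assumptions to adapt to your build tooling.

```python
import re

# Assumed naming convention: versioned files carry a hex hash, e.g. app.3f9a1c2b.js
FINGERPRINT = re.compile(
    r"\.[0-9a-f]{8,}\.(css|js|woff2|png|jpg|jpeg|webp|avif|svg)$"
)

ONE_YEAR = 365 * 24 * 60 * 60  # 31536000 seconds


def cache_control_for(path: str) -> str:
    """Choose a Cache-Control value based on how the file is named."""
    if FINGERPRINT.search(path):
        # The URL changes whenever the content changes, so cache for a year.
        return f"public, max-age={ONE_YEAR}, immutable"
    if path.endswith(".html") or path.endswith("/"):
        # HTML must be revalidated so visitors pick up new asset URLs.
        return "no-cache"
    # Unversioned assets: a short lifetime (assumed value) limits staleness.
    return "public, max-age=3600"


for p in ["/static/app.3f9a1c2b.js", "/index.html", "/img/logo.png"]:
    print(p, "->", cache_control_for(p))
```

The long lifetime is only safe for files whose URL changes with their content, which is why unversioned assets get a shorter one here.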

Network compression: enable Brotli (level 6-8 for CSS/JS) on your server or via your CDN. Fall back to Gzip if Brotli isn't supported. Verify with tools like GiftOfSpeed or DevTools that text files are actually compressed.

Lazy loading: implement the native HTML attribute loading="lazy" on off-viewport images. For older browsers, use a lightweight JS library (Lozad, Lazysizes). Always reserve dimensions (width/height) to avoid CLS.

Which mistakes should you absolutely avoid?

Never lazy-load the LCP image (Largest Contentful Paint) — this must load with absolute priority. Use fetchpriority="high" or preload if needed.
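If your templates are generated server-side, the two rules (lazy-load everything off-screen, prioritize the LCP image) can live in a single helper. A minimal sketch, assuming you know at render time which image is the LCP candidate; `img_tag` and its `is_lcp` parameter are hypothetical names.

```python
from html import escape


def img_tag(src: str, alt: str, width: int, height: int, is_lcp: bool = False) -> str:
    """Render an <img> tag with reserved dimensions and a loading strategy."""
    attrs = {
        "src": src,
        "alt": alt,
        # Explicit dimensions let the browser reserve space and avoid CLS.
        "width": str(width),
        "height": str(height),
    }
    if is_lcp:
        # The LCP image is fetched eagerly and with high priority.
        attrs["fetchpriority"] = "high"
    else:
        # Everything else is deferred until it approaches the viewport.
        attrs["loading"] = "lazy"
    rendered = " ".join(f'{k}="{escape(v, quote=True)}"' for k, v in attrs.items())
    return f"<img {rendered}>"


print(img_tag("/hero.jpg", "Hero", 1200, 600, is_lcp=True))
print(img_tag("/gallery/1.jpg", "Gallery photo", 400, 300))
```

Keeping the decision in one place makes it harder to ship a hero image with `loading="lazy"` by accident.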

Don't enable overly aggressive compression (Brotli level 11) in real-time — marginal gains don't offset CPU load. Pre-compress assets at build time if possible.
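Pre-compression at build time can be sketched as follows. To stay within Python's standard library, this example uses Gzip at its maximum level as a stand-in; Brotli level 11 follows the same pattern but needs a third-party package. The extension list and the `precompress` name are assumptions, and the server or CDN still has to be configured to serve the `.gz` files.

```python
import gzip
import tempfile
from pathlib import Path

# Assumed set of text formats worth compressing ahead of time.
TEXT_EXTENSIONS = {".html", ".css", ".js", ".svg", ".json"}


def precompress(build_dir: Path) -> list[Path]:
    """Write a .gz sibling next to every text asset and return the new paths."""
    written = []
    # sorted() materializes the listing before any new files are created.
    for path in sorted(build_dir.rglob("*")):
        if path.is_file() and path.suffix in TEXT_EXTENSIONS:
            target = path.with_name(path.name + ".gz")
            # The maximum level is affordable here: it runs once per build.
            target.write_bytes(gzip.compress(path.read_bytes(), compresslevel=9))
            written.append(target)
    return written


# Demo on a throwaway directory with one text asset and one binary asset.
with tempfile.TemporaryDirectory() as tmp:
    build = Path(tmp)
    (build / "app.css").write_text("body { margin: 0; }\n" * 500, encoding="utf-8")
    (build / "logo.png").write_bytes(b"\x89PNG placeholder")
    outputs = [p.name for p in precompress(build)]

print(outputs)  # ['app.css.gz']
```

Because the cost is paid once per deploy rather than once per request, the CPU concern raised above no longer applies.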

Don't rely solely on browser cache: users clear their cache, switch devices. Cache should be a bonus, not the primary strategy.

How do you verify these optimizations are working?

Use PageSpeed Insights and WebPageTest to measure actual impact on LCP, TBT (Total Blocking Time), and CLS. Compare before/after with slow connection profiles (3G).

Check HTTP response headers via DevTools (Network tab): presence of content-encoding: br or gzip, correct cache-control headers.
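The same check can be scripted once you have the response headers in hand, for example copied from DevTools or collected by a crawler. This is a sketch: `audit_headers` is a hypothetical helper, and it only covers the two points mentioned above (compression and cache headers).

```python
def audit_headers(headers: dict[str, str], is_text: bool = True) -> list[str]:
    """Return a list of problems found in a response's headers."""
    # Header names are case-insensitive, so normalize before looking them up.
    h = {k.lower(): v.lower() for k, v in headers.items()}
    problems = []

    encoding = h.get("content-encoding", "")
    if is_text and encoding not in ("br", "gzip"):
        problems.append("text response is not compressed with br or gzip")

    cache = h.get("cache-control", "")
    if not cache:
        problems.append("missing cache-control header")
    elif "no-store" in cache:
        problems.append("cache-control forbids caching (no-store)")

    return problems


good = {"Content-Encoding": "br", "Cache-Control": "public, max-age=31536000"}
bad = {"Content-Type": "text/css"}

print(audit_headers(good))  # []
print(audit_headers(bad))
```

Images and other already-compressed formats should be audited with `is_text=False`, since they are not expected to carry a `content-encoding` header.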

Test lazy loading by slowly scrolling the page with network throttling enabled. Images should load just before entering the viewport, not too early, not too late.

- Enable Brotli/Gzip on server for HTML, CSS, JS, SVG, fonts

- Configure Cache-Control with long durations (1 year) for versioned resources

- Implement loading="lazy" on all off-viewport images

- Reserve dimensions (width/height) on all images to avoid CLS

- Never lazy-load the LCP image or critical resources

- Test actual impact on Core Web Vitals with mobile 3G profiles

- Regularly audit HTTP headers and RUM metrics (Real User Monitoring)

❓ Frequently Asked Questions

Is native lazy loading (the HTML attribute) enough, or do you need a JavaScript library?

Is Brotli compression really better than Gzip?

What cache duration should you set for static resources?

Does lazy loading affect how images are indexed in Google Images?

Are these optimizations enough to pass Core Web Vitals?

🎥 From the same video 43

Other SEO insights extracted from this same Google Search Central video · published on 30/03/2026
