Official statement

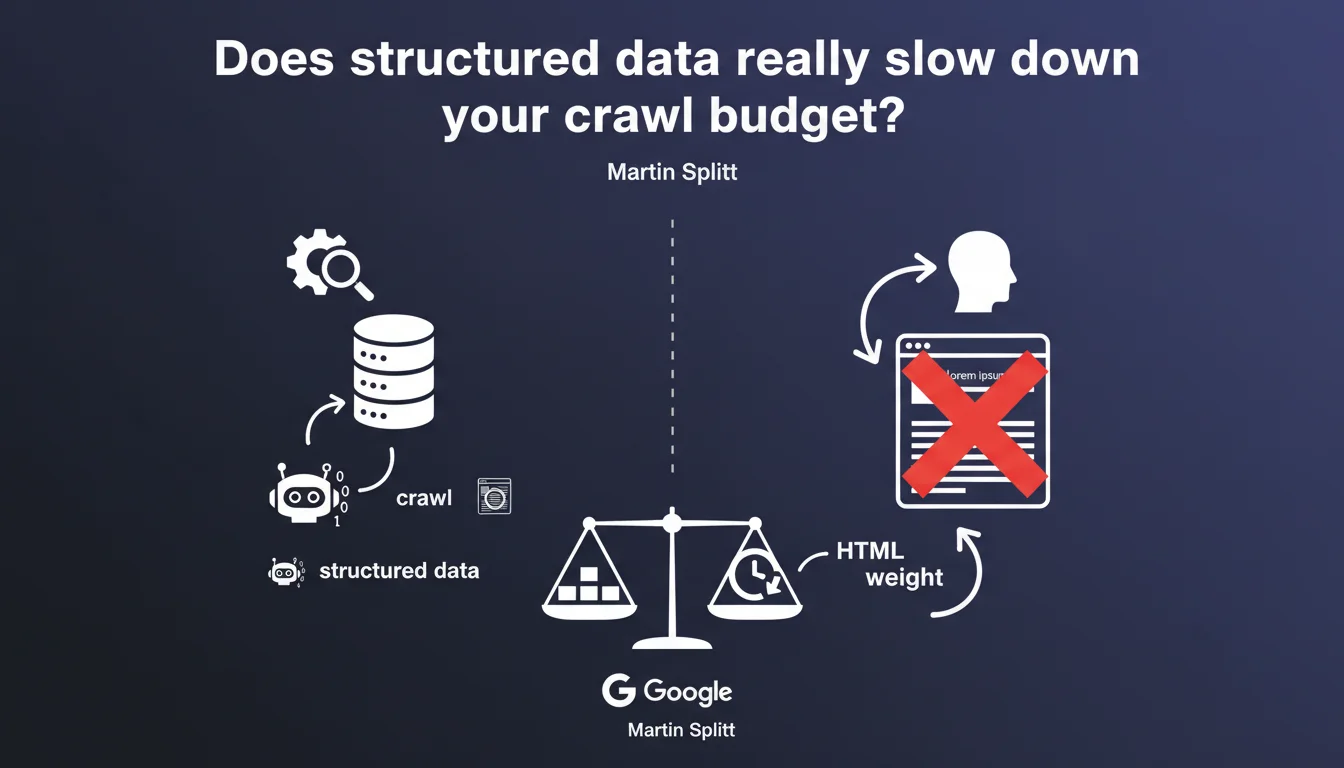

Martin Splitt reminds us that structured data inflates the HTML weight of pages. The more you add, the heavier it gets — which can impact crawl time and bandwidth consumption. Let's be honest: Google supports dozens of schema types, and stacking JSON-LD without discernment comes at a cost.

What you need to understand

Why is Google warning about the weight of structured data?

Structured data is additional code injected into the HTML to help search engines understand content. JSON-LD, microdata, RDFa: they all add kilobytes that the bot must download and parse.

The problem doesn't arise with a single Product or Article schema. But when you multiply types — FAQ, HowTo, BreadcrumbList, Organization, Review, Video, Event — HTML weight explodes. And this code is invisible to users: it only serves machines.

What are the concrete consequences of overly heavy HTML?

Bloated HTML consumes more bandwidth, slows down page downloads, and can hinder crawling. On a site with 10,000 pages carrying 15 KB of structured data each, that adds up to 150 MB of pure JSON-LD (10,000 × 15 KB) that Googlebot must download to crawl every page once.
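The arithmetic behind that figure can be sketched in a few lines of Python. The function name is hypothetical and the calculation assumes decimal units (1 MB = 1,000 KB), as the figures in this article do.

```python
def total_structured_data_mb(pages: int, kb_per_page: float) -> float:
    """Total structured data volume in MB, using decimal units (1 MB = 1000 KB)."""
    return pages * kb_per_page / 1000


# The example from the text: 10,000 pages with 15 KB of structured data each.
print(total_structured_data_mb(10_000, 15))  # 150.0
```

Plugging in your own page count and average JSON-LD size gives a quick order of magnitude before you open the logs.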

Processing time on the bot side also increases. The larger the HTML, the longer parsing and indexing take. On large-scale sites, this can eat into crawl budget and delay the discovery of new pages.

Are all schema types equal in terms of weight?

No. A basic Article schema weighs roughly 1-2 KB. An enriched Product schema with reviews, offers, and aggregateRating runs 5-7 KB, and a FAQPage schema with 10 detailed questions can reach 8-12 KB.

Some sites stack 4-5 schemas per page without questioning it. Total weight can exceed 20 KB of structured data — more than the visible text content itself. That's where the problem lies.
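To see where a given page stands, you can measure how many bytes of its HTML sit inside JSON-LD script blocks. The following is a minimal sketch using only Python's standard library; the class and function names are hypothetical, and it counts only JSON-LD, not microdata or RDFa.

```python
from html.parser import HTMLParser


class JsonLdExtractor(HTMLParser):
    """Collects the raw text of every <script type="application/ld+json"> block."""

    def __init__(self) -> None:
        super().__init__()
        self.blocks: list[str] = []
        self._in_jsonld = False

    def handle_starttag(self, tag, attrs):
        script_type = (dict(attrs).get("type") or "").lower()
        if tag == "script" and script_type == "application/ld+json":
            self._in_jsonld = True
            self.blocks.append("")

    def handle_data(self, data):
        if self._in_jsonld:
            self.blocks[-1] += data

    def handle_endtag(self, tag):
        if tag == "script":
            self._in_jsonld = False


def jsonld_weight(html: str) -> dict:
    """Return HTML size, JSON-LD size (both in UTF-8 bytes) and the JSON-LD share."""
    parser = JsonLdExtractor()
    parser.feed(html)
    parser.close()
    html_bytes = len(html.encode("utf-8"))
    jsonld_bytes = sum(len(block.encode("utf-8")) for block in parser.blocks)
    return {
        "html_bytes": html_bytes,
        "jsonld_bytes": jsonld_bytes,
        "blocks": len(parser.blocks),
        "share": jsonld_bytes / html_bytes if html_bytes else 0.0,
    }


# Illustrative page, not taken from a real site.
sample_html = (
    '<html><head><script type="application/ld+json">'
    '{"@type":"Article"}</script></head>'
    "<body><p>Hello</p></body></html>"
)
print(jsonld_weight(sample_html))
```

Running this over a sample of templates (product page, article, category) shows quickly which ones carry more markup than visible content.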

- Structured data increases HTML weight proportionally to the volume of schemas added

- Heavy HTML slows down crawling, consumes crawl budget, and impacts bandwidth

- Google supports dozens of schema types: stacking without strategy has a real cost

- Structured data code is invisible to users — it only serves machines

- Complex schemas (FAQ, HowTo, enriched Product) weigh significantly more than a basic Article

SEO Expert opinion

Is this statement consistent with field observations?

Yes, completely. On e-commerce sites with product pages packed with Product schema + Review + FAQ + BreadcrumbList + Organization, we observe HTML weights of 80-120 KB of which 25-30 KB is pure JSON-LD. Server response time is fine, but crawl time increases mechanically.

Logs show Googlebot spends more time on these heavy pages. On sites with limited crawl budget, this translates to fewer pages crawled per session. Concretely? New pages take longer to be discovered.

Should you limit structured data then?

No — but you must be strategic. The classic mistake: deploying all schemas Google supports "just in case". FAQPage on every page when there's no real FAQ. HowTo on an article that isn't a tutorial. Review schema without actual user reviews.

The marginal SEO gain doesn't always justify the added weight. A poorly implemented or irrelevant schema earns no rich result and still has to be downloaded and parsed on every crawl. [To verify] Does Google have a threshold beyond which it penalizes HTML overweight? Officially no, but the crawl impact is measurable.

In what cases does structured data weight become critical?

On large sites with hundreds of thousands or millions of pages. Take a media site with 500,000 articles, each carrying 10 KB of useless structured data: that is 5 GB of avoidable HTML weight. The bot spends its time downloading redundant JSON-LD instead of discovering fresh content.

It also becomes critical on low-authority sites, where crawl budget is limited and every kilobyte counts. If Googlebot spends 30% of its time on non-essential schemas, that is roughly 30% fewer pages crawled per session.

Practical impact and recommendations

What should you do concretely to optimize structured data weight?

Audit each schema type deployed. Ask yourself: does this schema deliver a measurable SEO gain or enriched display in the SERP? If not, remove it. A duplicated Organization schema on every page serves no purpose — put it only on the homepage.

Minify JSON-LD. Remove unnecessary whitespace, non-critical optional properties, and redundant descriptions. A Product schema can be compact: name, image, offers, aggregateRating. No need to fill every property in the schema.org vocabulary.
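Both steps, trimming optional properties and stripping whitespace, can be sketched with Python's `json` module. The helper names and the sample product are hypothetical; the kept properties are the four named above, plus any `@`-prefixed keywords such as `@context` and `@type`.

```python
import json


def keep_properties(schema: dict, allowed: set[str]) -> dict:
    """Keep JSON-LD keywords (@context, @type, ...) and the allowed properties only."""
    return {k: v for k, v in schema.items() if k.startswith("@") or k in allowed}


def minify_jsonld(raw: str) -> str:
    """Re-serialize JSON-LD without the whitespace between tokens."""
    return json.dumps(json.loads(raw), separators=(",", ":"), ensure_ascii=False)


# Illustrative markup, not taken from a real site.
product = {
    "@context": "https://schema.org",
    "@type": "Product",
    "name": "Trail running shoe",
    "image": "https://example.com/shoe.jpg",
    "description": "A long marketing description repeated from the page body.",
    "brand": {"@type": "Brand", "name": "Example"},
    "offers": {"@type": "Offer", "price": "89.00", "priceCurrency": "EUR"},
    "aggregateRating": {"@type": "AggregateRating", "ratingValue": "4.6", "reviewCount": "132"},
}

compact = keep_properties(product, {"name", "image", "offers", "aggregateRating"})
pretty = json.dumps(product, indent=2)
minified = minify_jsonld(json.dumps(compact, indent=2))

print(len(pretty.encode("utf-8")), "bytes before")
print(len(minified.encode("utf-8")), "bytes after")
```

Which properties to keep is a judgment call per schema type: check the required and recommended properties for the rich result you are targeting before dropping anything.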

What mistakes must you absolutely avoid?

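Don't deploy a FAQPage schema with 20 detailed questions on every page if it doesn't generate rich results. Google rarely displays more than 2-3 FAQs in the SERP, and in August 2023 it announced that FAQ rich results would be shown only for well-known, authoritative government and health sites. For most sites, the rest is dead weight.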

Avoid duplicating structured data between microdata and JSON-LD. Some CMSs generate microdata in the page body AND JSON-LD in the footer, an unnecessary duplicate that bloats the code.
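One way to spot this kind of duplicate is to list the schema.org types declared through microdata `itemtype` attributes and through JSON-LD `@type` values, then look at the overlap. This is a rough sketch with hypothetical names. It compares type names only, so a match means "worth a manual look", not proof of a duplicate.

```python
import json
from html.parser import HTMLParser


class MarkupTypeScanner(HTMLParser):
    """Records schema.org types found in microdata and in JSON-LD blocks."""

    def __init__(self) -> None:
        super().__init__()
        self.microdata_types: set[str] = set()
        self.jsonld_types: set[str] = set()
        self._buffer = None  # collects JSON-LD text while inside the script tag

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        for url in (attributes.get("itemtype") or "").split():
            # https://schema.org/Product -> Product
            self.microdata_types.add(url.rstrip("/").rsplit("/", 1)[-1])
        script_type = (attributes.get("type") or "").lower()
        if tag == "script" and script_type == "application/ld+json":
            self._buffer = []

    def handle_data(self, data):
        if self._buffer is not None:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._buffer is not None:
            try:
                self._collect(json.loads("".join(self._buffer)))
            except json.JSONDecodeError:
                pass  # invalid JSON-LD is ignored in this sketch
            self._buffer = None

    def _collect(self, node) -> None:
        """Walk the JSON-LD tree and record every @type value."""
        if isinstance(node, dict):
            declared = node.get("@type")
            if isinstance(declared, str):
                self.jsonld_types.add(declared)
            elif isinstance(declared, list):
                self.jsonld_types.update(t for t in declared if isinstance(t, str))
            for value in node.values():
                self._collect(value)
        elif isinstance(node, list):
            for item in node:
                self._collect(item)


def duplicated_types(html: str) -> set[str]:
    """Types declared both as microdata and as JSON-LD on the same page."""
    scanner = MarkupTypeScanner()
    scanner.feed(html)
    scanner.close()
    return scanner.microdata_types & scanner.jsonld_types


# Illustrative page, not taken from a real site.
sample_page = (
    '<div itemscope itemtype="https://schema.org/Product">'
    '<span itemprop="name">Shoe</span></div>'
    '<script type="application/ld+json">'
    '{"@context":"https://schema.org","@type":"Product","name":"Shoe",'
    '"offers":{"@type":"Offer","price":"10"}}</script>'
)
print(duplicated_types(sample_page))
```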

How do you verify the real impact on your crawl?

Analyze your server logs. Compare average crawl time for pages with structured data vs. without. Measure average HTML weight. If your pages exceed 100 KB of which 30 KB is JSON-LD, you likely have a problem.
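The comparison can be sketched as follows, assuming you have already extracted Googlebot hits from your logs into records with a response size and a duration, and tagged each URL as carrying structured data or not. The record layout, field names, and sample values are all hypothetical; standard access log formats do not include a duration unless you configure it.

```python
from statistics import mean
from typing import NamedTuple


class CrawlHit(NamedTuple):
    """One Googlebot request, as extracted from your own logs (hypothetical layout)."""
    url: str
    response_bytes: int
    duration_ms: float
    has_structured_data: bool


def compare_groups(hits: list[CrawlHit]) -> dict:
    """Average response size and duration for pages with and without structured data."""
    summary = {}
    groups = ((True, "with_structured_data"), (False, "without_structured_data"))
    for flag, label in groups:
        group = [h for h in hits if h.has_structured_data == flag]
        if group:
            summary[label] = {
                "hits": len(group),
                "avg_kb": mean(h.response_bytes for h in group) / 1000,
                "avg_ms": mean(h.duration_ms for h in group),
            }
    return summary


# Made-up values, for illustration only.
sample_hits = [
    CrawlHit("/product/1", 110_000, 420.0, True),
    CrawlHit("/product/2", 90_000, 380.0, True),
    CrawlHit("/about", 60_000, 200.0, False),
    CrawlHit("/contact", 40_000, 300.0, False),
]
print(compare_groups(sample_hits))
```

Pages with and without structured data usually differ in other ways too (templates, images, server-side work), so treat a gap between the two groups as a lead to investigate rather than a measurement of JSON-LD cost alone.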

Run the Rich Results Test on a sample of pages. Verify which schemas are actually eligible for rich results. The others are candidates for removal or simplification.

- Audit all deployed schema types and remove those without measurable SEO gain

- Minify JSON-LD: remove whitespace, non-critical optional properties, redundant descriptions

- Deploy a schema only if it generates an enriched display in the SERP

- Avoid duplicates between microdata and JSON-LD

- Limit FAQPage to a maximum of 3-5 relevant questions

- Place Organization schema only on the homepage, not on every page

- Analyze logs to measure the impact of HTML weight on crawl time

- Test schemas with the Rich Results Test before massive deployment

❓ Frequently Asked Questions

How many KB of structured data is acceptable per page?

Does structured data affect load time on the user side?

Should you choose JSON-LD or microdata to limit weight?

Does an invalid schema slow down crawling even more?

Does structured data count toward crawl budget?

🎥 From the same video 43

Other SEO insights extracted from this same Google Search Central video · published on 30/03/2026
