Official statement

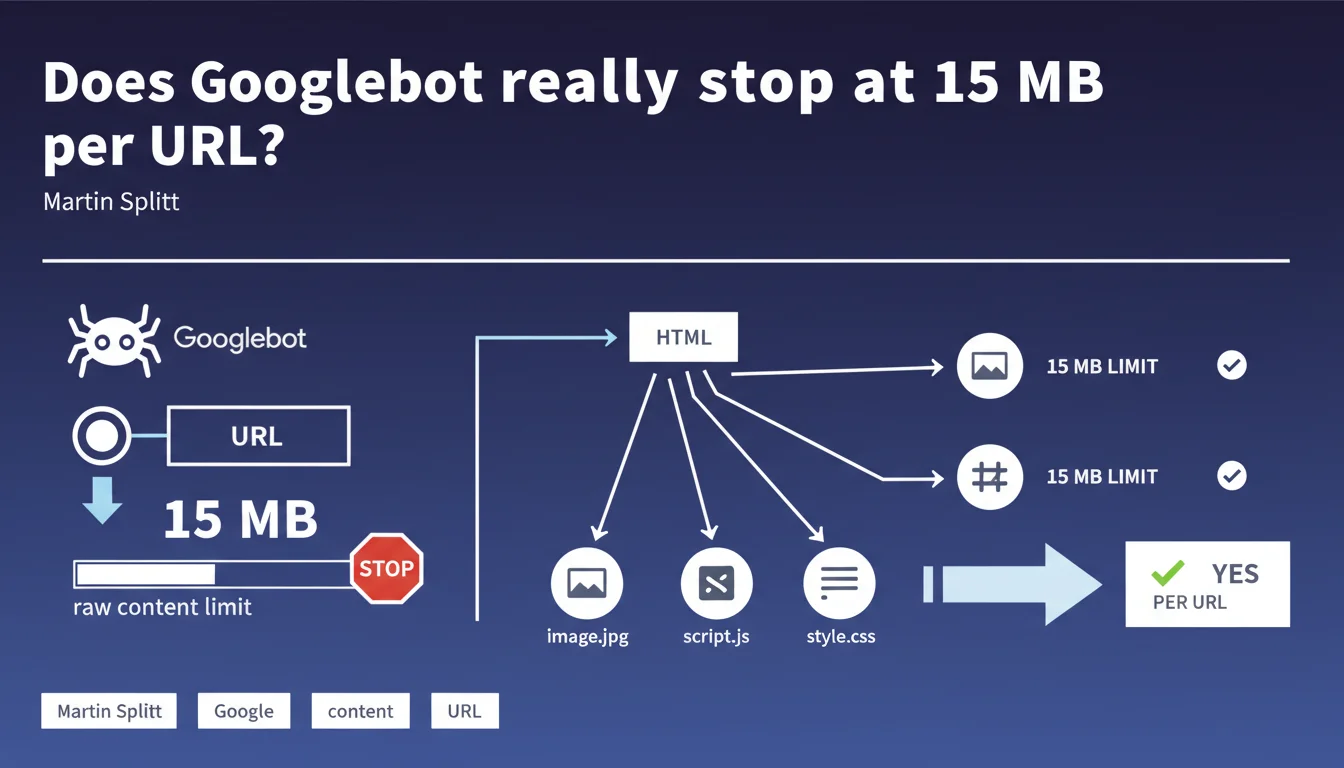

Googlebot crawls up to 15 megabytes of raw content per URL by default before stopping. Each referenced resource (CSS, JS, images) has its own 15 MB limit. This technical constraint directly impacts the indexation of large or content-rich pages.

What you need to understand

What exactly is this 15 MB limit?

Google sets a crawl limit of 15 megabytes for each crawled URL. In concrete terms, Googlebot downloads the raw content of a page until it reaches this threshold, then stops downloading abruptly.

This limit applies to each fetched file individually, starting with the main HTML document. External resources (CSS, JavaScript, images, videos) referenced in this HTML each get their own 15 MB quota. In other words, a 10 MB HTML page that loads a 12 MB script and an 8 MB stylesheet passes without issue, because the sizes are never added together.
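As a rough illustration of that per-file rule, the sketch below checks each file against the limit on its own instead of summing them. The file names, the `over_limit` helper, and the reading of "15 MB" as 15 × 1024 × 1024 bytes are assumptions made for the example, not details confirmed by Google.

```python
# Assumption: "15 MB" is read as 15 * 1024 * 1024 bytes; the exact
# byte count Google uses is not stated in the text.
MB = 1024 * 1024
LIMIT_BYTES = 15 * MB


def over_limit(files: dict[str, int], limit: int = LIMIT_BYTES) -> list[str]:
    """Return the names of files whose own size exceeds the limit.

    Sizes are never summed: each fetched file is judged separately.
    """
    return [name for name, size in files.items() if size > limit]


# The example from the text: 10 MB of HTML, a 12 MB script, an 8 MB stylesheet.
# File names are hypothetical.
page = {"index.html": 10 * MB, "app.js": 12 * MB, "site.css": 8 * MB}
print(over_limit(page))  # empty list: no single file is above the limit
```

The 30 MB total never enters the check; only a single file above the threshold would be flagged.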

Why does Google impose this constraint?

The reason is simple: protecting crawl infrastructure. Google processes billions of URLs daily. Without safeguards, a poorly configured site could send documents of several hundred megabytes, saturating the crawler's resources.

This limit also prevents abuse, intentional or not. Endlessly generated dynamic pages, oversized JSON feeds, log files exposed by mistake: all of these would consume crawl resources without adding value.

What happens if my page exceeds 15 MB?

Googlebot cuts off the retrieval at exactly 15 MB. Content beyond this threshold is never seen or indexed. If your crucial text appears after the first 15 MB of HTML, it will remain invisible to Google.

No alert is sent to Search Console. No notification, no warning. The crawl stops silently, and you discover the problem when your pages don't rank or display truncated snippets.

- Limit of 15 MB per URL, applied to raw content (HTML, JSON, XML...)

- Each referenced resource (CSS, JS, images) has its own 15 MB limit

- Content beyond 15 MB is never crawled or indexed

- No warning in Search Console in case of exceeding the limit

- This rule aims to protect Google's infrastructure and prevent abuse

SEO Expert opinion

Does this 15 MB limit pose a problem in practice?

Let's be honest: the majority of websites don't even come close to this threshold. A typical HTML page weighs between 50 KB and 500 KB. Even a long-form article with embedded rich media rarely exceeds 2-3 MB of pure HTML.

Where does it get tricky? E-commerce sites with oversized product listings loaded on a single page, SaaS platforms that inject massive JSON datasets into the DOM, or news sites that stack dozens of articles on the same URL with infinite scroll. In these cases, 15 MB can disappear quickly.

Is Google transparent about what counts toward these 15 MB?

Martin Splitt mentions "raw content," which includes uncompressed HTML. But what about inline JavaScript? JSON data embedded in <script> tags? SVGs integrated directly into the markup?

[To verify] Google doesn't specify whether HTTP compression (gzip, Brotli) is accounted for before or after this limit. If Googlebot decompresses first, a 3 MB compressed HTML could weigh 12 MB raw and approach the limit. No official data on this.
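To get a feel for how far the compressed and raw sizes can diverge, here is a minimal sketch using Python's standard `gzip` module on made-up, repetitive listing markup. The markup and the repetition count are hypothetical; real pages will compress differently, and the sketch says nothing about which of the two sizes Googlebot counts.

```python
import gzip

# Hypothetical repetitive markup, similar to a long product listing.
row = b'<li class="product"><a href="/p/123">Product name</a><span>19.99</span></li>\n'
raw = b"<ul>\n" + row * 50_000 + b"</ul>\n"

# Size on the wire if the server applies gzip.
compressed = gzip.compress(raw)

print(f"raw:   {len(raw) / 1_000_000:.2f} MB")
print(f"gzip:  {len(compressed) / 1_000_000:.2f} MB")
print(f"ratio: {len(raw) / len(compressed):.0f}x")
```

Running the same comparison on your own templates shows how much headroom you have under either interpretation.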

Should you actively monitor this metric?

Yes, especially if you operate in verticals with high content volume. E-commerce, price comparison sites, directories, job boards: these are all sectors where pages easily balloon.

The problem? No Google tool exists to monitor this threshold. You must manually measure the HTML size returned for your main templates. A simple `curl -I` with Content-Length isn't always enough: some servers don't return this header, and when they serve compressed content the header reports the compressed size.

Practical impact and recommendations

How do you verify if your pages exceed the limit?

Start by identifying your at-risk templates: category pages, listings, archives, internal search results pages. These are the ones that accumulate the most content.

Then, measure the actual size of the returned HTML. Use `curl -s https://yoursite.com/page | wc -c` to get the size in bytes of the raw content. If you exceed 10-12 MB, you're in dangerous territory.
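If you would rather script the measurement, the following sketch does the same job with Python's standard library and explicitly undoes gzip, so the number reflects the uncompressed document. The function names are made up for this example, only gzip is handled (Brotli is not in the standard library), and the 15 × 1024 × 1024 byte reading of the limit is an assumption.

```python
import gzip
import urllib.request

LIMIT_BYTES = 15 * 1024 * 1024  # assumption: "15 MB" read as 15 * 1024 * 1024 bytes


def decoded_size(body: bytes, content_encoding: str = "") -> int:
    """Size in bytes of a response body after undoing gzip, if applied."""
    if content_encoding.strip().lower() == "gzip":
        return len(gzip.decompress(body))
    # Identity, or an encoding this sketch does not handle: count as-is.
    return len(body)


def fetch_decoded_size(url: str) -> int:
    """Download a URL and return its uncompressed size in bytes."""
    request = urllib.request.Request(url, headers={"Accept-Encoding": "gzip"})
    with urllib.request.urlopen(request, timeout=30) as response:
        body = response.read()
        encoding = response.headers.get("Content-Encoding", "")
    return decoded_size(body, encoding)


# Example call (placeholder URL from the text, left commented out on purpose):
# size = fetch_decoded_size("https://yoursite.com/page")
# print(f"{size} bytes, {size / LIMIT_BYTES:.0%} of the limit")
```

Splitting the size calculation from the download keeps the logic easy to check against saved response bodies without hitting the network.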

What optimizations should you implement if you exceed the limit?

First approach: pagination or lazy loading. Instead of loading 500 products on a single page, split into pages of 50 products. Or implement infinite scroll that loads content via AJAX after initial render.

Second lever: clean up superfluous HTML. Debug comments, unnecessary whitespace, redundant JSON-LD, oversized data-* attributes... all of this adds weight. Minify and compress aggressively.
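Before investing in a full minification pipeline, you can estimate how much weight comments and inter-tag whitespace represent. The sketch below is a crude estimator, not a production minifier: the regular expressions would also strip conditional comments and whitespace that matters inside `<pre>` blocks, so treat the result as an order of magnitude only. The `estimate_savings` name is made up for this example.

```python
import re


def estimate_savings(html: str) -> tuple[int, int]:
    """Return (original_bytes, stripped_bytes) after a crude cleanup."""
    # Drop HTML comments, including multi-line ones.
    stripped = re.sub(r"<!--.*?-->", "", html, flags=re.DOTALL)
    # Drop whitespace that sits only between two tags.
    stripped = re.sub(r">\s+<", "><", stripped)
    return len(html.encode("utf-8")), len(stripped.encode("utf-8"))


sample = "<div>\n  <!-- debug -->\n  <p>Hi</p>\n</div>"
before, after = estimate_savings(sample)
print(f"{before} bytes -> {after} bytes")
```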

Finally, if you embed JSON datasets for your React/Vue apps, externalize them. Rather than inlining them in the HTML, load them via a dedicated endpoint. This lightens the main document and respects the per-resource limit.
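To decide whether externalizing is worth it, it helps to know what share of the document is taken up by inline scripts. The sketch below uses Python's standard `html.parser` to add up the bytes inside `<script>` elements that have no `src` attribute. The class and function names are hypothetical, and the count covers script bodies only, not the tags themselves.

```python
from html.parser import HTMLParser


class InlineScriptMeter(HTMLParser):
    """Adds up the bytes of inline <script> bodies (those without src)."""

    def __init__(self) -> None:
        super().__init__()
        self.inline_bytes = 0
        self._in_inline_script = False

    def handle_starttag(self, tag, attrs):
        if tag == "script" and "src" not in dict(attrs):
            self._in_inline_script = True

    def handle_endtag(self, tag):
        if tag == "script":
            self._in_inline_script = False

    def handle_data(self, data):
        if self._in_inline_script:
            self.inline_bytes += len(data.encode("utf-8"))


def inline_script_share(html: str) -> float:
    """Fraction of the document's bytes that sit inside inline scripts."""
    meter = InlineScriptMeter()
    meter.feed(html)
    meter.close()
    total = len(html.encode("utf-8"))
    return meter.inline_bytes / total if total else 0.0
```

A high share on a listing or app-shell template suggests that moving the embedded data to its own endpoint would shrink the main document noticeably.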

What if you can't reduce the size?

If your content is legitimate and can't be split — for example, exhaustive technical documentation on a single page — you'll have to accept that Google only crawls part of it. In this case, ensure your crucial content appears within the first 10 MB.
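One way to check that ordering is to look for a marker string from your crucial content and see whether it falls inside the portion that would survive a cutoff. The helper names and the marker strings below are made up for the example, and the default limit assumes 15 MB means 15 × 1024 × 1024 bytes; pass a lower `limit` to keep the safety margin suggested above.

```python
LIMIT_BYTES = 15 * 1024 * 1024  # assumption: "15 MB" read as 15 * 1024 * 1024 bytes


def survives_cutoff(document: bytes, marker: bytes, limit: int = LIMIT_BYTES) -> bool:
    """True if `marker` lies entirely within the first `limit` bytes."""
    return marker in document[:limit]


def marker_offset(document: bytes, marker: bytes) -> int:
    """Byte offset of the first occurrence of `marker`, or -1 if absent."""
    return document.find(marker)


# Tiny demonstration with an artificially small limit of 50 bytes.
doc = b"<h1>Key specs</h1>" + b" " * 100 + b"<footer>Legal notice</footer>"
print(survives_cutoff(doc, b"Key specs", limit=50))     # True
print(survives_cutoff(doc, b"Legal notice", limit=50))  # False
```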

Alternatively, restructure your architecture so each major section is a distinct URL. This also improves your internal linking and the granularity of your indexation.

- Audit templates with high content volume (categories, listings, archives)

- Measure actual HTML size with `curl` or monitoring tools

- Implement pagination or lazy loading for lengthy content

- Clean up HTML: minification, comment removal, data attribute optimization

- Externalize large JSON datasets instead of inlining them in HTML

- Prioritize important content in the first megabytes of the document

- Regularly monitor page size after each deployment

❓ Frequently Asked Questions

Does the 15 MB limit apply to compressed or uncompressed content?

If my HTML is 14 MB and loads a 20 MB CSS file, what happens?

Can I see in Search Console whether my pages exceed 15 MB?

Does content loaded by JavaScript after the initial render count toward the 15 MB?

Has this limit always existed, or is it new?

Source: Google Search Central video · published on 30/03/2026
