Official statement


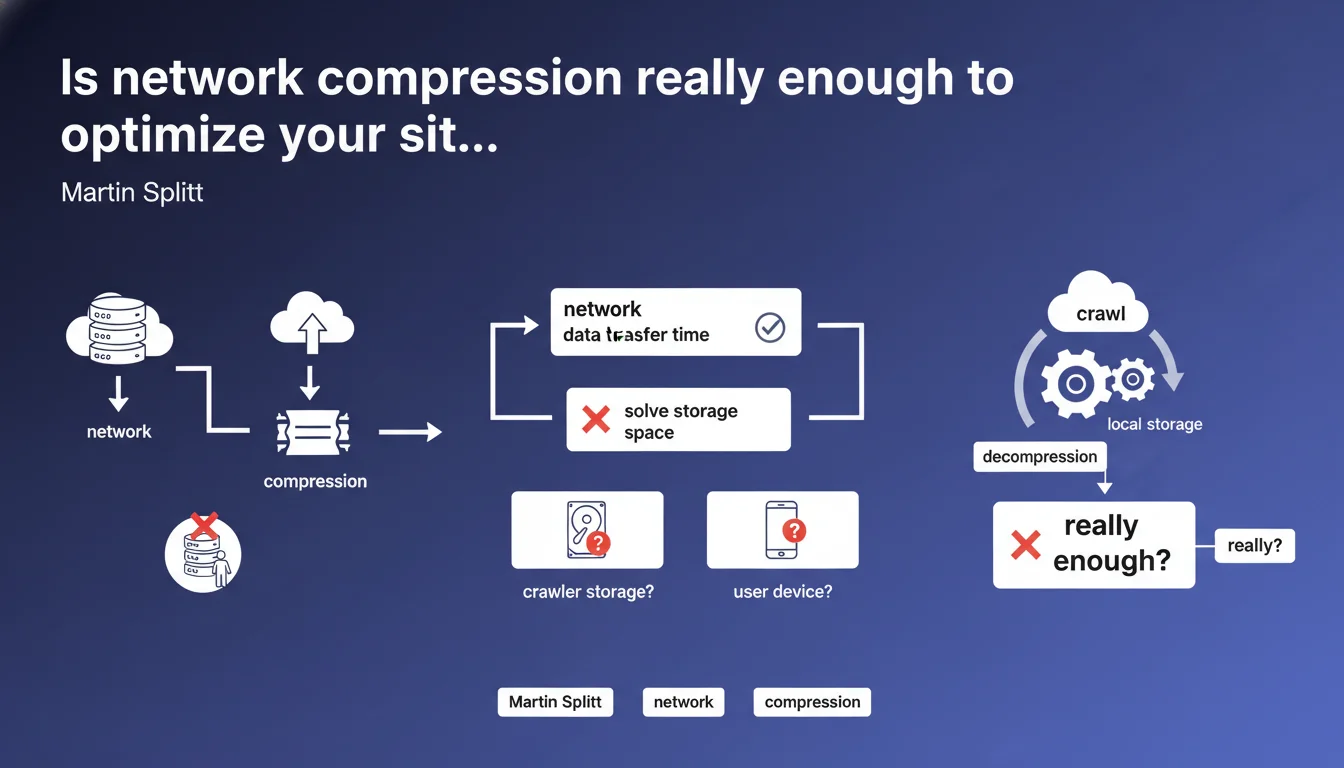

Network compression (gzip, Brotli) reduces data transfer time between server and client, but it does not change the storage space needed on the crawler's or user's side: once decompressed, resources occupy the same volume as before compression. This technical detail has concrete implications for crawl budget optimization and for managing large resources.

What you need to understand

Why does Google specify that network compression does not reduce local storage needs?

This clarification aims to correct a common misconception: many SEOs believe that compressing their CSS, JavaScript, or HTML files reduces not only page load time but also the load on the crawler side. This is only half true: compression shortens the download, not the processing that follows it.

Network compression (gzip, Brotli) acts only while data is in transit between the server and the client. Once Googlebot or the browser receives the files, it must decompress them before processing them. At that point they return to their original size and occupy as much memory as an uncompressed file.
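A quick way to see this is Python's standard `gzip` module: the payload that crosses the network shrinks, but the decompressed bytes the client has to parse are exactly the original size. The sample markup is made up for illustration; real pages compress less uniformly.

```python
import gzip

# Made-up, highly repetitive markup (real HTML/CSS/JS compresses less evenly)
original = b"<div class='product-card'><span>Item</span></div>\n" * 5000

compressed = gzip.compress(original)    # bytes that travel over the network
restored = gzip.decompress(compressed)  # bytes the client must actually process

print(f"original:     {len(original):>8} bytes")
print(f"transferred:  {len(compressed):>8} bytes")
print(f"decompressed: {len(restored):>8} bytes")
```

The "transferred" figure is what compression improves; the "decompressed" figure, identical to the original, is what the parser works on.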

What is the difference between network compression and file weight optimization?

Network compression is a temporary and transparent operation: the server compresses, the client decompresses. It saves bandwidth on both ends, but its benefit stops there.
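The exchange can be sketched as a toy client/server pair built on the standard HTTP headers: the client advertises what it can decode in `Accept-Encoding`, and the server declares what it applied in `Content-Encoding`. The function names `server_respond` and `client_receive` are hypothetical, only gzip is handled, and q-values are ignored; in production, nginx, Apache, or a CDN performs this negotiation for you.

```python
from __future__ import annotations

import gzip


def server_respond(body: bytes, accept_encoding: str) -> tuple[dict[str, str], bytes]:
    """Toy server: gzip the body only if the client advertised gzip support."""
    # Simplification: q-values such as "gzip;q=0" are ignored here.
    offered = {token.split(";")[0].strip().lower() for token in accept_encoding.split(",")}
    if "gzip" in offered:
        return {"Content-Encoding": "gzip"}, gzip.compress(body)
    return {}, body  # identity: the body is sent as-is


def client_receive(headers: dict[str, str], payload: bytes) -> bytes:
    """Toy client: undo whatever encoding the server declared."""
    if headers.get("Content-Encoding") == "gzip":
        return gzip.decompress(payload)
    return payload


page = b"<li class='menu-item'>Link</li>\n" * 2000
headers, payload = server_respond(page, "gzip, deflate, br")
decoded = client_receive(headers, payload)
print(len(payload), "bytes on the wire ->", len(decoded), "bytes to process")
```

Neither side changes the content: the client ends up with exactly the bytes the server started from.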

Real file weight optimization involves reducing the native size of resources: code minification, comment removal, image compression (JPEG, WebP), elimination of unnecessary JavaScript. These gains persist after decompression and directly impact the memory consumption of the crawler or browser.
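To contrast the two approaches, here is a deliberately naive regex minifier (an illustration only, not how cssnano works): it strips comments and whitespace, so the saving survives a gzip round trip. It can break CSS that contains `/*` inside strings or relies on whitespace in selectors such as `a :hover`; use a real minifier in production.

```python
import gzip
import re


def naive_minify_css(css: str) -> str:
    """Toy minifier: removes /* comments */ and collapses whitespace."""
    css = re.sub(r"/\*.*?\*/", "", css, flags=re.S)  # drop comments
    css = re.sub(r"\s+", " ", css)                   # collapse whitespace runs
    css = re.sub(r"\s*([{};:,])\s*", r"\1", css)     # trim around punctuation
    return css.strip()


source = """
/* Card component */
.card {
    color: #333;
    margin: 0 auto;
}
""" * 200

minified = naive_minify_css(source)
# After a compress/decompress round trip, the minified file is still smaller
round_trip = gzip.decompress(gzip.compress(minified.encode()))
print(len(source.encode()), "->", len(round_trip), "bytes after decompression")
```

For example, `naive_minify_css("/* x */ .a {\n  color: red;\n}")` returns `.a{color:red;}`.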

What are the implications for crawl budget and performance?

Googlebot has limited resources — in time, bandwidth, but also in processing capacity and temporary storage. If your pages weigh 5 MB after decompression, the crawler will need to allocate that space to analyze the DOM, execute JavaScript, and extract links.

A site with resource-heavy files, even well-compressed in transit, risks consuming its crawl budget faster than a natively light site. Network compression improves download speed, not processing efficiency.
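One documented case where decompressed size clearly matters: Google's Googlebot documentation states that Googlebot fetches only the first 15 MB of an HTML or supported text-based file, and that this limit is applied to the uncompressed data. The sketch checks a gzipped body against that limit; reading "15 MB" as 15 * 1024 * 1024 bytes is an assumption, and `check_html_weight` is a hypothetical helper.

```python
import gzip

# Assumption: exact byte value of Google's documented 15 MB (uncompressed) limit
GOOGLEBOT_LIMIT_BYTES = 15 * 1024 * 1024


def check_html_weight(gzipped_body: bytes) -> dict:
    """Compare transferred (gzipped) size with decompressed size and the limit."""
    raw = gzip.decompress(gzipped_body)
    return {
        "transferred": len(gzipped_body),
        "decompressed": len(raw),
        "over_limit": len(raw) > GOOGLEBOT_LIMIT_BYTES,
    }


# A ~16.9 MB page of repetitive markup: tiny on the wire, still over the limit
page = b"<p>filler</p>" * 1_300_000
report = check_html_weight(gzip.compress(page))
print(report)
```

The gzipped payload here weighs a few kilobytes, yet the page still exceeds the limit because the check applies to what Googlebot gets after decompression.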

- Network compression (gzip, Brotli): reduces transfer time, not final memory footprint

- Native optimization: minification, removal of unnecessary elements, optimized images — real impact on storage and processing

- Crawl budget: influenced by the decompressed weight of resources, not just download speed

- Performance metrics: network compression mainly helps download-bound metrics (FCP, LCP), while the decompressed size of JavaScript drives parsing and execution time, which affects TBT and interactivity (INP)

SEO Expert opinion

Is this distinction really new for experienced SEOs?

Let's be honest: for a technical expert, this is basic knowledge. Most web developers know that gzip-encoded responses are decompressed on the client side. But in SEO practice, this nuance is often overlooked.

We regularly see audits praising Brotli compression as a miracle cure for "page weight", when it only addresses one symptom, transfer time, and leaves the underlying problem untouched. Many modern CMS platforms generate bloated HTML, redundant JavaScript, and oversized CSS, and enabling network compression does not remove the need for real optimization work.

What are the concrete consequences on Googlebot's behavior?

Googlebot operates with strict quotas: crawl time, bandwidth, and processing capacity. If your pages are 3 MB decompressed, the crawler will need to allocate more resources to parse the DOM, execute JavaScript, and extract relevance signals.

The result? Fewer pages explored per crawl session. On a site with thousands of pages, this can delay the indexing of new URLs or the update of modified content. [To verify]: Google has never published a specific threshold beyond which decompressed resource weight directly reduces crawl budget. Field observations suggest a correlation between resource size and crawl frequency, but a correlation alone does not prove that weight is the cause.

Should network compression be neglected then?

Certainly not. Network compression remains essential: it reduces transfer time, improves Core Web Vitals (particularly LCP), and decreases server-side bandwidth consumption. But it does not replace a comprehensive optimization strategy.

The trap would be believing that good server configuration (gzip, Brotli, CDN) eliminates the need to lighten source code. Both approaches are complementary, not interchangeable.

Practical impact and recommendations

What should you actually do to optimize the real weight of your pages?

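First step: measure the decompressed weight of your resources. Standard tools (PageSpeed Insights, WebPageTest) mainly display transferred weight. Use Chrome DevTools to see the gap: in the Network panel, enable "Big request rows" so the Size column shows both the transferred size and the decompressed resource size; the status bar also totals "transferred" versus "resources".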
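If you prefer a script, the sketch below fetches a URL with `urllib`, which, unlike a browser, does not decompress responses itself, and compares body bytes on the wire with decoded bytes. `decoded_size` and `measure` are hypothetical helpers. Brotli (`br`) has no decoder in Python's standard library, so the request only advertises gzip, and header bytes are not counted (DevTools' Size column includes them).

```python
from __future__ import annotations

import gzip
import urllib.request
import zlib


def decoded_size(payload: bytes, content_encoding: str) -> int:
    """Return the size of the body after undoing its Content-Encoding."""
    enc = content_encoding.strip().lower()
    if enc == "gzip":
        return len(gzip.decompress(payload))
    if enc == "deflate":
        # HTTP "deflate" is zlib-wrapped; servers sending raw deflate would fail here
        return len(zlib.decompress(payload))
    if enc in ("", "identity"):
        return len(payload)
    raise ValueError(f"unsupported Content-Encoding: {enc!r}")


def measure(url: str) -> tuple[int, int]:
    """Return (transferred body bytes, decompressed body bytes) for a URL."""
    req = urllib.request.Request(url, headers={"Accept-Encoding": "gzip"})
    with urllib.request.urlopen(req) as resp:
        payload = resp.read()  # raw bytes as sent by the server
        encoding = resp.headers.get("Content-Encoding", "")
    return len(payload), decoded_size(payload, encoding)
```

Usage: `transferred, decompressed = measure("https://example.com/")`; a large gap means compression is working, while a large `decompressed` value is what still needs trimming.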

Next, prioritize native minification: remove spaces, comments, and unnecessary variables from HTML, CSS, and JavaScript. Tools like Terser (JS), cssnano (CSS), or HTMLMinifier automate this process. If you use a modern framework (React, Vue), enable production build optimizations.

What mistakes should you avoid when managing large resources?

Classic mistake: loading entire libraries when you only use a few functions. Lodash, jQuery, Bootstrap — these libraries are heavy when decompressed. Prefer tree-shaking or lightweight alternatives (vanilla JS, custom CSS utilities).

Another trap: non-optimized images. Gzip barely shrinks a 2 MB JPEG in transit, because its data is already compressed, and the file is 2 MB again once decompressed. Switch to WebP or AVIF, reduce dimensions, and lazy-load images outside the viewport. The gain is direct and measurable.
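The effect is easy to reproduce. The sketch uses random bytes as a stand-in for an already-compressed image (an approximation: real JPEG files may still shrink by a few percent) and shows that gzip gains essentially nothing on such data.

```python
import gzip
import os

# Stand-in for an already-compressed ~2 MB image: random bytes have no redundancy left
jpeg_like = os.urandom(2 * 1024 * 1024)

gzipped = gzip.compress(jpeg_like)
ratio = len(gzipped) / len(jpeg_like)
print(f"gzip size / original size on image-like data: {ratio:.3f}")
```

The ratio stays at roughly 1.0, so only re-encoding the image (format, quality, dimensions) actually reduces its weight.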

How can you verify that your site is not wasting crawl budget?

Check the Crawl Stats report in Search Console (Settings > Crawl stats): total crawl requests, total download size, average response time, and host status (including server errors). If response time rises while network compression is active, the likely causes are a slower server or growing payloads rather than missing compression.
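Server logs give a complementary view. The sketch aggregates Googlebot hits per day from access logs in the common "combined" format, which is an assumption about your server configuration; `googlebot_daily_stats` is a hypothetical helper and the sample lines are invented. Matching on the user-agent string alone can be spoofed (Google recommends verifying Googlebot with a reverse DNS lookup), and the bytes field usually records what was sent on the wire, i.e. the compressed size when compression is enabled.

```python
from __future__ import annotations

import re
from collections import defaultdict
from typing import Iterable

# Combined log format: ip ident user [time] "request" status bytes "referer" "agent"
LINE_RE = re.compile(
    r'^(?P<ip>\S+) \S+ \S+ \[(?P<day>[^:]+):[^\]]+\] "(?P<request>[^"]*)" '
    r'(?P<status>\d{3}) (?P<bytes>\d+|-) "(?P<referer>[^"]*)" "(?P<agent>[^"]*)"'
)


def googlebot_daily_stats(lines: Iterable[str]) -> dict[str, dict[str, int]]:
    """Count requests, bytes sent, and 5xx errors per day for Googlebot user agents."""
    stats: dict[str, dict[str, int]] = defaultdict(lambda: {"requests": 0, "bytes": 0, "errors": 0})
    for line in lines:
        m = LINE_RE.match(line)
        if not m or "Googlebot" not in m.group("agent"):
            continue  # unparseable line or not a (claimed) Googlebot hit
        day = stats[m.group("day")]
        day["requests"] += 1
        if m.group("bytes") != "-":
            day["bytes"] += int(m.group("bytes"))
        if m.group("status").startswith("5"):
            day["errors"] += 1
    return dict(stats)


UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
sample = [
    f'66.249.66.1 - - [10/Oct/2024:13:55:36 +0000] "GET /a HTTP/1.1" 200 5123 "-" "{UA}"',
    f'66.249.66.1 - - [10/Oct/2024:14:01:02 +0000] "GET /b HTTP/1.1" 503 0 "-" "{UA}"',
    '203.0.113.5 - - [10/Oct/2024:14:02:00 +0000] "GET /a HTTP/1.1" 200 5123 "-" "Mozilla/5.0"',
]
daily = googlebot_daily_stats(sample)
print(daily)
```

Tracking these daily figures over time shows whether crawl volume drops as pages get heavier.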

Regularly audit the heaviest resources with Screaming Frog or OnCrawl. Identify JavaScript or CSS files exceeding 500 KB decompressed — these are priority optimization candidates.
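For a build directory you control, the same audit can be run on disk, where assets are stored uncompressed. The sketch assumes your production JavaScript and CSS live under one folder and reads the 500 KB threshold as 500 KiB; `heavy_assets` is a hypothetical helper. Precompressed siblings such as `app.js.gz` are skipped because their suffix is `.gz`.

```python
from pathlib import Path

THRESHOLD_BYTES = 500 * 1024  # the article's 500 KB rule of thumb, read as KiB


def heavy_assets(build_dir, threshold=THRESHOLD_BYTES, suffixes=(".js", ".css")):
    """Return (path, size) for uncompressed assets above the threshold, largest first."""
    hits = [
        (path, path.stat().st_size)
        for path in Path(build_dir).rglob("*")
        if path.is_file() and path.suffix in suffixes and path.stat().st_size > threshold
    ]
    return sorted(hits, key=lambda item: item[1], reverse=True)
```

Usage: `for path, size in heavy_assets("dist"): print(f"{size / 1024:.0f} KiB  {path}")` prints the priority candidates, heaviest first.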

- Enable gzip or Brotli on the server (nginx, Apache, CDN)

- Minify HTML, CSS, and JavaScript in production

- Remove unused or oversized JavaScript libraries

- Optimize images (WebP/AVIF format, compression, lazy-loading)

- Measure the decompressed weight of resources in DevTools

- Monitor average response time in the Search Console Crawl Stats report

- Prioritize strategic pages to reduce their memory footprint

❓ Frequently Asked Questions

Is Brotli compression better than gzip for SEO?

Does the decompressed weight of resources really affect crawl budget?

How can I measure the decompressed weight of my pages?

Should you prioritize minification or network compression?

What is the impact on Core Web Vitals?

Source: Google Search Central video, published on 30/03/2026.
