Official statement


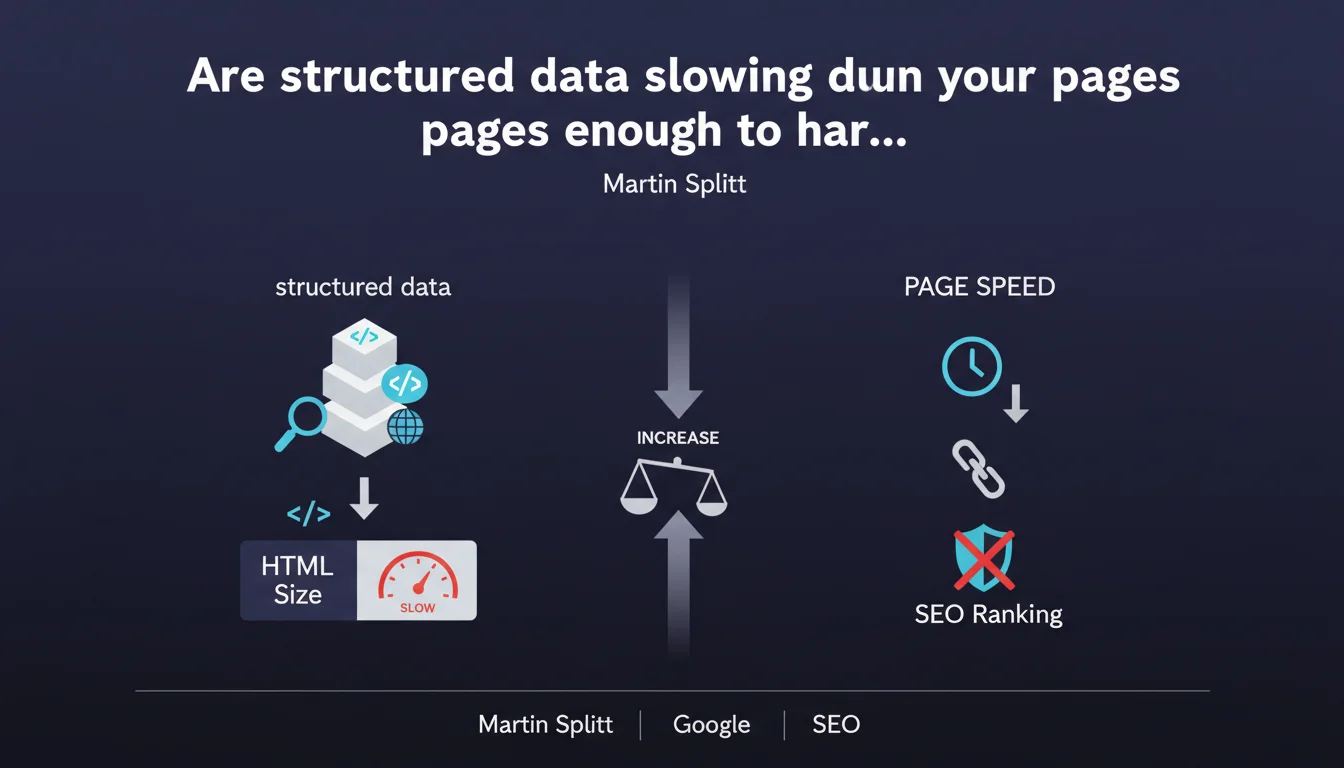

Martin Splitt reminds us that structured data adds HTML weight. The accumulation of multiple Schema.org markup types can significantly inflate page size. A trade-off between semantic richness and technical performance is necessary.

What you need to understand

Why is Google bringing up the weight of structured data now?

Martin Splitt's statement comes at a time when Core Web Vitals carry significant weight in Google's assessment of page experience. Many sites stack Schema.org types (Product, Article, FAQPage, HowTo, BreadcrumbList, Organization, etc.) without measuring the impact on HTML size.

The problem: this data is meant for machines, not humans. It displays nothing on screen but bloats the source code. A detailed FAQ in JSON-LD can easily add 20 to 50 KB, and once you stack several types, you can quickly exceed 100 KB of structured data alone.
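To get a feel for how quickly JSON-LD grows, here is a minimal Python sketch that builds a FAQPage object with a configurable number of question/answer pairs and measures its UTF-8 size. The question and answer texts are placeholder strings (an assumption: real answers may be longer or shorter), so treat the printed figures as an order of magnitude, not a benchmark.

```python
import json


def build_faq_jsonld(num_questions: int, answer_chars: int = 400) -> dict:
    """Build a FAQPage object with placeholder questions and answers.

    The text content is synthetic filler; only the structure follows
    the Schema.org FAQPage / Question / Answer types.
    """
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": f"Placeholder question number {i}?",
                "acceptedAnswer": {
                    "@type": "Answer",
                    "text": "x" * answer_chars,  # hypothetical answer length
                },
            }
            for i in range(1, num_questions + 1)
        ],
    }


def jsonld_size_kb(obj: dict, pretty: bool = True) -> float:
    """Size in KB (1 KB = 1000 bytes) of the serialized JSON-LD, UTF-8 encoded."""
    text = json.dumps(obj, indent=2 if pretty else None, ensure_ascii=False)
    return len(text.encode("utf-8")) / 1000


if __name__ == "__main__":
    for n in (10, 50, 100):
        faq = build_faq_jsonld(n)
        print(f"{n:>3} questions: {jsonld_size_kb(faq):6.1f} KB pretty, "
              f"{jsonld_size_kb(faq, pretty=False):6.1f} KB minified")
```

Minifying (no indentation) trims whitespace, but it does not change the underlying trade-off: size grows roughly linearly with the number of questions.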

Does this actually impact crawling and indexing?

Yes, in two ways. First, heavy HTML slows down parsing on Googlebot's side: more CPU resources are needed to extract the useful content. Second, Googlebot only considers the first 15 MB of an HTML file (uncompressed) for indexing, so anything past that point, structured data included, is ignored. That is the only documented limit, but in practice problems are reported well before it.
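Google documents that Googlebot only considers the first 15 MB of an HTML file for indexing. The sketch below checks whether a given uncompressed HTML document crosses that limit and whether any JSON-LD block would extend past it. Two assumptions: "15 MB" is read here as 15,000,000 bytes (the stricter decimal interpretation, since Google does not specify the unit base), and JSON-LD blocks are located with a simplified regular expression rather than a full HTML parser.

```python
import re

# Assumption: "15 MB" read as 15,000,000 bytes (decimal), the stricter interpretation.
GOOGLEBOT_HTML_LIMIT = 15 * 1000 * 1000

# Simplified pattern for opening <script type="application/ld+json"> tags.
JSONLD_OPEN = re.compile(
    rb"<script[^>]*type\s*=\s*[\"']application/ld\+json[\"'][^>]*>",
    re.IGNORECASE,
)
CLOSE_TAG = b"</script>"


def over_limit(html: bytes, limit: int = GOOGLEBOT_HTML_LIMIT) -> bool:
    """True if the uncompressed HTML is larger than the limit."""
    return len(html) > limit


def truncated_jsonld_blocks(html: bytes, limit: int = GOOGLEBOT_HTML_LIMIT) -> list[tuple[int, int]]:
    """Return (start, end) byte ranges of JSON-LD blocks that extend past `limit`.

    Such blocks would be cut off or dropped entirely if only the first
    `limit` bytes are considered.
    """
    lowered = html.lower()  # makes the closing-tag search case-insensitive
    ranges = []
    for match in JSONLD_OPEN.finditer(html):
        close = lowered.find(CLOSE_TAG, match.end())
        end = len(html) if close == -1 else close + len(CLOSE_TAG)
        if end > limit:
            ranges.append((match.start(), end))
    return ranges


if __name__ == "__main__":
    page = b"<p>" + b"a" * 100 + b'<script type="application/ld+json">{}</script>'
    # A tiny artificial limit stands in for 15 MB to demonstrate the check.
    print(over_limit(page, limit=50), truncated_jsonld_blocks(page, limit=50))
```

Placing JSON-LD in the `<head>` keeps it near the start of the file, which makes this kind of truncation even less likely on the rare pages that approach the limit.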

Let's be honest: most sites don't come close to these limits. But on rich pages (e-commerce with product variants, long articles with nested FAQ and HowTo), the accumulation becomes critical.

What does "Google supports many types of structured data" mean in this context?

It's an implicit reminder: Google offers the ability to enrich your pages with dozens of markup types. But just because a type is supported doesn't mean you should implement it systematically.

Each markup must serve a specific business or SEO objective (rich snippet, Knowledge Graph, carousel eligibility). Stacking structured data "just in case" is counterproductive if it tanks performance.

- HTML weight: structured data adds to the source code, invisible to users but read by bots

- Trade-off necessary: prioritize markups that actually generate SERP features (stars, accordion FAQ, breadcrumb, etc.)

- Googlebot parsing: bloated HTML slows down extraction of useful content and can impact crawl budget on large sites

- Core Web Vitals: heavy HTML can indirectly degrade LCP if the browser takes longer to build the DOM

SEO Expert opinion

Is this statement consistent with real-world observations?

Absolutely. I've seen e-commerce pages carrying 5 to 7 structured data types at once: Product, Offer, AggregateRating, Review, BreadcrumbList, Organization, WebSite. Result: 80 to 120 KB of JSON-LD alone, on pages displaying 3 lines of product text.

The paradox? These same sites struggle to optimize images and reduce third-party JS, but let HTML explode with redundant or poorly targeted markups. Google says it politely, but the message is clear: clean house.

In which cases does this rule become truly problematic?

Three classic scenarios. E-commerce sites that duplicate product info in multiple formats (Microdata + JSON-LD, or separate JSON-LD Product + Offer when they could be nested). Blogs that add FAQ + HowTo + Article + BreadcrumbList on every page, even when the content doesn't justify all these types.
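To illustrate the duplication pattern, the sketch below serializes the same hypothetical product two ways: as two standalone JSON-LD objects (a Product, plus an Offer that repeats the product fields in `itemOffered`) and as a single Product with the Offer nested under `offers`, which is the structure used in Google's Product structured data examples. All product values are made up.

```python
import json

# Hypothetical product data shared by both variants.
PRODUCT = {
    "name": "Example Trail Shoe",
    "sku": "TS-001",
    "image": "https://www.example.com/img/ts-001.jpg",
    "description": "Lightweight trail running shoe.",
}
OFFER = {
    "@type": "Offer",
    "price": "89.90",
    "priceCurrency": "EUR",
    "availability": "https://schema.org/InStock",
}


def separate_blocks() -> list[dict]:
    """Two standalone objects; the Offer repeats the product fields in itemOffered."""
    product = {"@context": "https://schema.org", "@type": "Product", **PRODUCT}
    offer = {
        "@context": "https://schema.org",
        **OFFER,
        "itemOffered": {"@type": "Product", **PRODUCT},
    }
    return [product, offer]


def nested_block() -> dict:
    """One Product object with the Offer nested under `offers`."""
    return {"@context": "https://schema.org", "@type": "Product", **PRODUCT, "offers": OFFER}


def size_bytes(obj) -> int:
    """Minified UTF-8 size of a JSON-LD object."""
    return len(json.dumps(obj, separators=(",", ":")).encode("utf-8"))


if __name__ == "__main__":
    sep = sum(size_bytes(block) for block in separate_blocks())
    nest = size_bytes(nested_block())
    print(f"separate: {sep} bytes, nested: {nest} bytes")
```

The saving per page is small, but it is pure duplication, and it multiplies across every product variant and every product page.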

And this is where things break down: automatic structured data generators (plugins, CMSs) stack types by default, with no logic behind them. I've seen pages with empty HowTo markup (step entries with no actual content) just "because the plugin offers it".
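Empty markup of this kind is easy to detect automatically. A minimal sketch, assuming the JSON-LD has already been extracted and parsed into Python objects: it walks the whole tree (including `@graph` and nested objects) and reports HowTo objects whose `step` property is missing or contains steps with no `text` or `name`. Checking only those two properties is a simplification of the Schema.org model, and the function names are illustrative.

```python
from typing import Any, Iterator


def iter_objects(node: Any) -> Iterator[dict]:
    """Yield every dict in a parsed JSON-LD tree (covers @graph and nesting)."""
    if isinstance(node, dict):
        yield node
        for value in node.values():
            yield from iter_objects(value)
    elif isinstance(node, list):
        for item in node:
            yield from iter_objects(item)


def has_type(obj: dict, type_name: str) -> bool:
    """True if @type equals type_name or is a list containing it."""
    t = obj.get("@type")
    return t == type_name or (isinstance(t, list) and type_name in t)


def step_is_empty(step: Any) -> bool:
    """A step is empty if it is a blank string or an object with no text/name."""
    if isinstance(step, str):
        return not step.strip()
    if isinstance(step, dict):
        return not any(str(step.get(key, "")).strip() for key in ("text", "name"))
    return False


def empty_howto_problems(jsonld: Any) -> list[str]:
    """Return human-readable problems for HowTo objects with missing or empty steps."""
    problems = []
    for obj in iter_objects(jsonld):
        if not has_type(obj, "HowTo"):
            continue
        name = obj.get("name", "(unnamed)")
        steps = obj.get("step") or []
        if isinstance(steps, (dict, str)):  # a single step may be given on its own
            steps = [steps]
        if not steps:
            problems.append(f"HowTo {name!r} has no steps")
        for i, step in enumerate(steps, start=1):
            if step_is_empty(step):
                problems.append(f"HowTo {name!r}: step {i} is empty")
    return problems
```

Running this over the JSON-LD of a sample of pages per template is usually enough to tell whether a plugin is emitting placeholder markup site-wide.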

What nuances should be added to this claim?

Martin Splitt talks about "HTML weight," but two problems need to be distinguished: raw weight (KB transferred over the network) and DOM complexity (number of nodes to parse). A well-structured 50 KB JSON-LD block is less problematic than 50 KB of HTML stuffed with nested tables, because it lives in a single script element instead of generating many DOM nodes.
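The difference between byte weight and DOM complexity can be made concrete with Python's standard `html.parser`. The sketch counts start tags (a rough proxy for element nodes, not what a browser or Googlebot actually builds) and bytes for two synthetic fragments: one JSON-LD block and one table with nested tables. Both fragments are artificial examples.

```python
import json
from html.parser import HTMLParser


class StartTagCounter(HTMLParser):
    """Counts start tags as a rough proxy for element nodes.

    HTMLParser treats <script> contents as raw text, so the JSON inside
    a JSON-LD block is not parsed into tags.
    """

    def __init__(self) -> None:
        super().__init__()
        self.count = 0

    def handle_starttag(self, tag, attrs):
        self.count += 1


def profile(fragment: str) -> tuple[int, int]:
    """Return (UTF-8 byte size, start-tag count) for an HTML fragment."""
    counter = StartTagCounter()
    counter.feed(fragment)
    counter.close()
    return len(fragment.encode("utf-8")), counter.count


def jsonld_fragment(items: int) -> str:
    """Synthetic JSON-LD ItemList with `items` entries, wrapped in one script tag."""
    data = {
        "@context": "https://schema.org",
        "@type": "ItemList",
        "itemListElement": [
            {"@type": "ListItem", "position": i, "name": f"Item {i}"}
            for i in range(1, items + 1)
        ],
    }
    return '<script type="application/ld+json">' + json.dumps(data) + "</script>"


def nested_table_fragment(rows: int) -> str:
    """Synthetic table in which every row contains a nested table."""
    cell = "<td><table><tr><td><span>Item</span></td></tr></table></td>"
    return "<table>" + "".join(f"<tr>{cell}</tr>" for _ in range(rows)) + "</table>"


if __name__ == "__main__":
    for label, fragment in (("JSON-LD", jsonld_fragment(100)),
                            ("nested tables", nested_table_fragment(80))):
        size, tags = profile(fragment)
        print(f"{label:>13}: {size:6d} bytes, {tags:4d} start tags")
```

However large the JSON-LD payload grows, it stays one element; the table's tag count grows with every row.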

Second nuance: not all markup types are created equal. A 2 KB BreadcrumbList is negligible and delivers a real UX benefit (a breadcrumb trail in the SERP). A 40 KB Review markup with 200 detailed reviews is questionable: Google's review snippet shows an aggregate rating, or at most an excerpt from a single review, so the remaining entries add weight without adding any display.
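One way to act on this, sketched in Python under stated assumptions: compute `aggregateRating` from the full review list and keep only a handful of individual `review` entries in the markup. The cap of three reviews is an arbitrary illustrative choice, not a Google rule, and ratings are assumed to sit in `reviewRating.ratingValue` on a 1 to 5 scale. Whatever you keep must still match reviews actually visible on the page.

```python
from statistics import mean


def condense_reviews(product: dict, keep: int = 3) -> dict:
    """Return a copy of a Product object with aggregateRating computed from
    all reviews and only the first `keep` reviews left in `review`.

    Assumes each review has reviewRating.ratingValue on a 1-5 scale.
    """
    reviews = product.get("review") or []
    if isinstance(reviews, dict):  # a single review may be given as an object
        reviews = [reviews]
    if not reviews:
        return dict(product)
    ratings = [float(r["reviewRating"]["ratingValue"]) for r in reviews]
    condensed = dict(product)  # shallow copy; the input object is not modified
    condensed["aggregateRating"] = {
        "@type": "AggregateRating",
        "ratingValue": round(mean(ratings), 1),
        "reviewCount": len(reviews),
        "bestRating": 5,
        "worstRating": 1,
    }
    condensed["review"] = reviews[:keep]
    return condensed
```

Which reviews to keep (most recent, most helpful) is an editorial decision; the sketch simply keeps the first ones in list order.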

[To verify]: Google has never published an official threshold for "acceptable" structured data size. The only documented limit is the first 15 MB of HTML that Googlebot considers, but in practice, symptoms (partial indexing, crawl slowdown) reportedly appear once HTML reaches around 500 KB. Aiming for less than 200 KB of total HTML, structured data included, therefore remains good practice.
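These figures can be turned into a simple page-level budget check. The thresholds are the ones quoted in this section: Googlebot's documented 15 MB limit, plus the 500 KB warning level and 200 KB target, which are field observations and good practice rather than Google-published values. Decimal units and the status labels are assumptions made for illustration.

```python
KB = 1000  # decimal kilobytes, an assumption for reporting purposes

TARGET = 200 * KB             # good-practice target (not a Google value)
WARNING = 500 * KB            # level where symptoms are reported (not a Google value)
HARD_LIMIT = 15 * 1000 * KB   # Googlebot's documented 15 MB per HTML file (unit base assumed)


def html_budget_status(html_bytes: int) -> str:
    """Classify an uncompressed HTML size against the thresholds above."""
    if html_bytes > HARD_LIMIT:
        return "over-limit"
    if html_bytes > WARNING:
        return "warning"
    if html_bytes > TARGET:
        return "above-target"
    return "ok"


if __name__ == "__main__":
    for size in (150 * KB, 300 * KB, 600 * KB, 16_000 * KB):
        print(f"{size // KB:>6} KB -> {html_budget_status(size)}")
```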

Practical impact and recommendations

How do you audit the weight of structured data on your site?

First step: measure. Use your browser's network inspector (Network tab, Doc filter) to see the raw HTML size of your strategic pages, and check the uncompressed resource size, not just the compressed transfer size. Next, view the source code and isolate the JSON-LD or Microdata blocks: a simple Ctrl+F for application/ld+json (JSON-LD) or itemscope (Microdata) reveals the scope.
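The manual Ctrl+F step can be scripted. This minimal sketch uses only Python's standard library: it parses a page's HTML, collects the contents of every `<script type="application/ld+json">` block, and counts elements carrying an `itemscope` attribute (Microdata). It measures uncompressed bytes, which is what the 15 MB limit applies to. The class and function names are illustrative, and Microdata bytes are not measured because that markup is interleaved with visible HTML.

```python
from html.parser import HTMLParser


class StructuredDataExtractor(HTMLParser):
    """Collects JSON-LD script contents and counts Microdata itemscope elements."""

    def __init__(self) -> None:
        super().__init__()
        self.jsonld_blocks: list[str] = []
        self.itemscope_count = 0
        self._in_jsonld = False
        self._buffer: list[str] = []

    def handle_starttag(self, tag, attrs):
        attr_map = dict(attrs)
        if "itemscope" in attr_map:  # boolean attribute: value is None
            self.itemscope_count += 1
        script_type = (attr_map.get("type") or "").strip().lower()
        if tag == "script" and script_type == "application/ld+json":
            self._in_jsonld = True
            self._buffer = []

    def handle_data(self, data):
        if self._in_jsonld:
            self._buffer.append(data)  # script text may arrive in several chunks

    def handle_endtag(self, tag):
        if tag == "script" and self._in_jsonld:
            self.jsonld_blocks.append("".join(self._buffer))
            self._in_jsonld = False


def structured_data_report(html: str) -> dict:
    """Summarize uncompressed HTML size, JSON-LD size and Microdata usage."""
    parser = StructuredDataExtractor()
    parser.feed(html)
    parser.close()
    total = len(html.encode("utf-8"))
    jsonld = sum(len(block.encode("utf-8")) for block in parser.jsonld_blocks)
    return {
        "html_bytes": total,
        "jsonld_bytes": jsonld,
        "jsonld_blocks": len(parser.jsonld_blocks),
        "itemscope_elements": parser.itemscope_count,
        "jsonld_share": jsonld / total if total else 0.0,
    }
```

To audit a live page, fetch it first (for example with `urllib.request.urlopen(url).read().decode("utf-8", errors="replace")`) and pass the resulting string to `structured_data_report`.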

You can script this with Screaming Frog (custom extraction via XPath) or a Python crawler. The goal: identify pages where structured data represents > 20% of total HTML. That's often a sign of imbalance.
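Once the HTML of each page is available, flagging imbalanced pages is straightforward. The sketch below takes a mapping of URL to raw HTML (however it was collected: a crawler, saved files, an export), estimates the JSON-LD share with a lightweight regular expression, and returns the pages above the 20% threshold, heaviest first. The regex is a simplification and a real HTML parser is more robust; in Screaming Frog, an XPath such as `//script[@type='application/ld+json']` in Custom Extraction should target the same blocks.

```python
import re

# Simplified pattern: captures the contents of JSON-LD script blocks.
JSONLD_RE = re.compile(
    r"<script[^>]*type\s*=\s*[\"']application/ld\+json[\"'][^>]*>(.*?)</script>",
    re.IGNORECASE | re.DOTALL,
)


def jsonld_share(html: str) -> float:
    """Fraction of the page's UTF-8 bytes that sit inside JSON-LD script blocks."""
    total = len(html.encode("utf-8"))
    if total == 0:
        return 0.0
    jsonld = sum(len(block.encode("utf-8")) for block in JSONLD_RE.findall(html))
    return jsonld / total


def flag_heavy_pages(pages: dict[str, str], threshold: float = 0.20) -> list[tuple[str, float]]:
    """Return (url, share) for pages whose JSON-LD share exceeds `threshold`, heaviest first."""
    shares = [(url, jsonld_share(html)) for url, html in pages.items()]
    flagged = [item for item in shares if item[1] > threshold]
    return sorted(flagged, key=lambda item: item[1], reverse=True)
```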

Which markups to keep, which to delete?

Simple rule: keep what produces a visible result in the SERP, such as product stars (AggregateRating), breadcrumbs (BreadcrumbList), accordion FAQs (which Google has limited since 2023 to well-known, authoritative government and health sites) and recipe rich results. Remove what only serves to "feed the Knowledge Graph" without a visible return, or A/B test it if you have the traffic.

Concrete example: a media site with Article + FAQPage + HowTo on every article. If Google never displays the HowTo as a rich result after 3 months, remove it (Google deprecated HowTo rich results in 2023, so this is now the expected outcome). You'll save 15 to 30 KB per page, a huge cumulative gain across 10,000 articles.
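The cumulative figure is simple arithmetic. A small helper, assuming decimal units (1 MB = 1000 KB) and one fetch per article per crawl cycle; real crawl frequency varies by page, so `fetches_per_page` is a hypothetical knob for scaling the estimate.

```python
def cumulative_savings_mb(kb_saved_per_page: float, pages: int, fetches_per_page: int = 1) -> float:
    """Total HTML avoided, in MB (decimal), across all fetches of all pages."""
    return kb_saved_per_page * pages * fetches_per_page / 1000


if __name__ == "__main__":
    for kb in (15, 30):
        print(f"{kb} KB x 10,000 articles = {cumulative_savings_mb(kb, 10_000):.0f} MB per full crawl")
```

With the figures above, that is 150 to 300 MB less HTML each time the whole archive is fetched, before counting repeat visits.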

- Audit the HTML size of your key pages and measure the share of structured data

- Prioritize markups that generate measurable SERP features (stars, FAQ, breadcrumb)

- Remove or condense redundant markups (e.g., a separate Offer block when it could be nested inside Product)

- Test validity with Google's Rich Results Test after each change

- Monitor Core Web Vitals evolution (LCP particularly) after HTML streamlining

- Document the markup types used by each template (product page, article, category) to prevent ad hoc stacking
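Documenting markup per template is easier to enforce when the documentation is machine-readable. A minimal sketch: a hypothetical allowlist mapping each template to the top-level Schema.org types it may carry, plus a check that reports types found on a page that its template does not allow. The template names and allowed types are examples, not recommendations, and the input is assumed to be already-parsed JSON-LD blocks.

```python
from typing import Any, Iterable

# Hypothetical policy: which top-level Schema.org types each template may emit.
ALLOWED_TYPES: dict[str, set[str]] = {
    "product": {"Product", "BreadcrumbList"},
    "article": {"Article", "BreadcrumbList"},
    "category": {"BreadcrumbList", "ItemList"},
}


def _types_of(obj: dict) -> list[str]:
    """Normalize @type (string or list) to a list of strings."""
    t = obj.get("@type")
    if isinstance(t, list):
        return [str(x) for x in t]
    return [str(t)] if t else []


def top_level_types(blocks: Iterable[Any]) -> set[str]:
    """Collect @type values of top-level objects, including @graph members."""
    found: set[str] = set()
    for block in blocks:
        items = block if isinstance(block, list) else [block]
        for item in items:
            if not isinstance(item, dict):
                continue
            for obj in item.get("@graph", [item]):
                if isinstance(obj, dict):
                    found.update(_types_of(obj))
    return found


def unexpected_types(template: str, blocks: Iterable[Any]) -> set[str]:
    """Types present on the page but not allowed for its template."""
    return top_level_types(blocks) - ALLOWED_TYPES.get(template, set())
```

In a crawl or a CI check, a non-empty result is a signal to review the generator or plugin settings for that template, not necessarily an error.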

❓ Frequently Asked Questions

Is JSON-LD structured data heavier than Microdata?

Should you remove structured data if your page exceeds 200 KB of HTML?

Can Google ignore some structured data if the page is too heavy?

Does structured data directly affect SEO rankings?

How many structured data types can you stack on the same page?

🎥 From the same video 43

Other SEO insights extracted from this same Google Search Central video · published on 30/03/2026
