Official statement


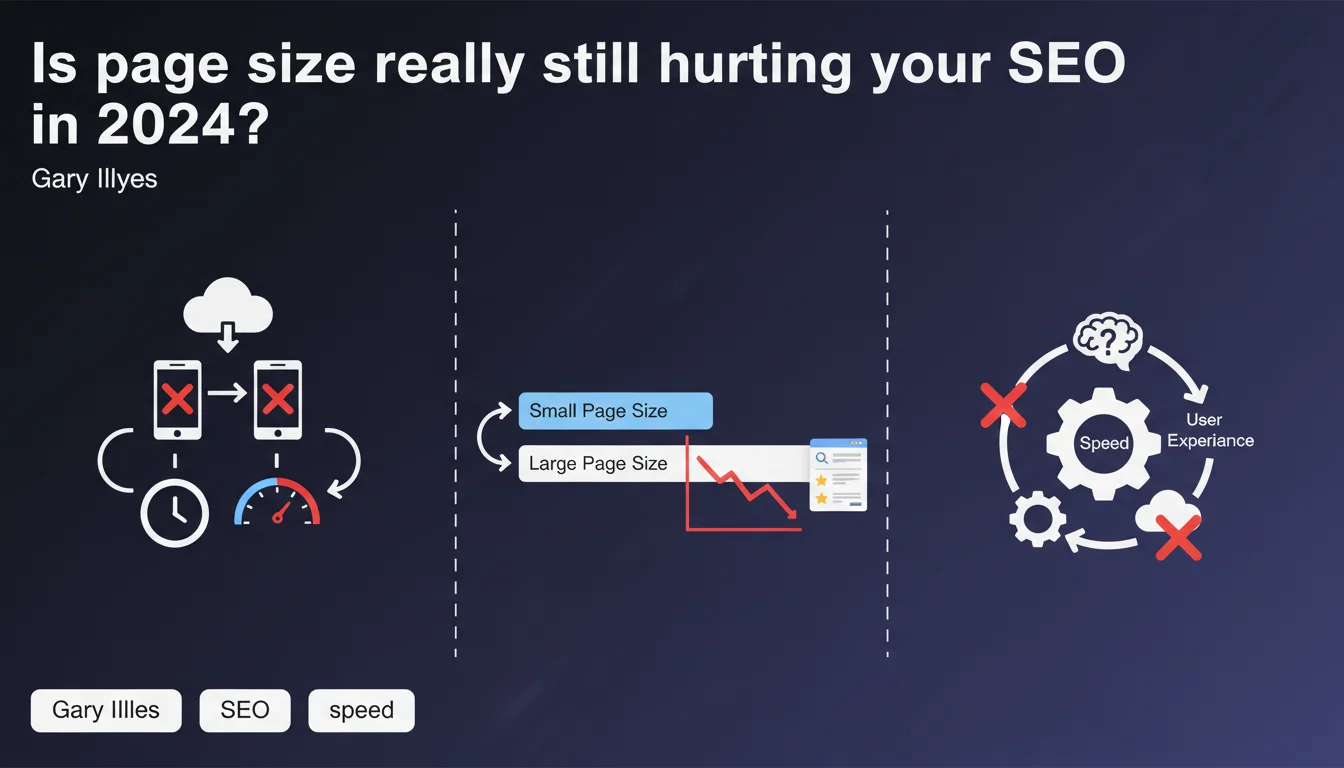

Google confirms that large pages remain a handicap for user experience, especially on slow connections or on devices with limited storage. Size directly affects loading speed: the more data transferred, the longer the download and processing take. Optimizing page weight remains a concrete lever for improving UX and performance.

What you need to understand

Why does Google still insist on page size in 2026?

Gary Illyes' statement may seem surprising at a time when 5G is rolling out and fiber dominates in many countries. Yet the gap between urban and rural areas remains massive, and a significant share of global traffic still comes from regions where connections top out at 4G or home Wi-Fi is unreliable.

Google is reminding us of a simple reality: making the web accessible to everyone means not designing solely for privileged environments. Heavy pages effectively exclude part of your audience, and Google knows this because it observes bounce rates and user behavior at scale.

What's the relationship between page size and loading speed?

The formula is simple: more kilobytes = more download time + more CPU/GPU resources needed to parse, compile, and render. A page's weight isn't limited to raw HTML: images, scripts, stylesheets, fonts — everything counts.

Modern browsers optimize progressive rendering and lazy loading, true. But a 5 MB page is still a 5 MB page: even with excellent network speeds, the device must process that data. On entry-level hardware with 2 GB of RAM and a modest processor, the impact is brutal.
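To put rough numbers on the download side of that formula, here is a back-of-the-envelope sketch in Python. It assumes an idealized link (no TCP slow start, no parallel connections, no caching, decimal megabytes) and ignores parse, compile and render time entirely, so treat the result as a lower bound. The function name and the example bandwidths are illustrative assumptions, not figures from Google.

```python
MB = 1_000_000  # decimal megabyte, matching how network speeds are quoted


def estimate_transfer_seconds(page_bytes: int, bandwidth_mbps: float,
                              rtt_ms: float = 0.0, round_trips: int = 0) -> float:
    """Idealized download time: payload / bandwidth + latency overhead.

    Ignores TCP slow start, parallel connections, caching and CPU work,
    so real-world load times will be higher.
    """
    if bandwidth_mbps <= 0:
        raise ValueError("bandwidth must be positive")
    payload_s = (page_bytes * 8) / (bandwidth_mbps * 1_000_000)  # bits / bits per second
    latency_s = round_trips * rtt_ms / 1000                      # time spent waiting on round trips
    return payload_s + latency_s


if __name__ == "__main__":
    # Assumed links: a slow mobile connection, a modest one, and a fast one.
    for mbps in (1.6, 10, 100):
        t = estimate_transfer_seconds(5 * MB, mbps)
        print(f"5 MB at {mbps:>5} Mbps: {t:5.1f} s")
```

On these assumptions, 5 MB takes about 4 seconds of pure transfer at 10 Mbps and 25 seconds at 1.6 Mbps, before the device has executed a single script.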

Which users are really affected today?

Three key profiles: rural and semi-rural areas with poor connections, emerging markets where budget smartphones dominate, and mobile users in real mobility situations (trains, cars, unstable coverage zones).

Google doesn't provide figures, but field studies suggest that 30 to 40% of global web traffic still comes from connections under 10 Mbps. Ignoring this reality means accepting the loss of part of your potential audience, and of the conversions that come with it.

- Large pages continue to penalize a significant share of global traffic

- Loading speed is directly correlated with the volume of data transferred

- Page weight optimization remains a concrete UX and SEO lever, not a relic of the past

- Budget devices amplify the negative impact of heavy pages

SEO Expert opinion

Does this statement truly reflect field observations?

Yes, unequivocally. SEO audits consistently show a correlation between heavy pages and high bounce rates, especially on mobile. E-commerce sites with product pages exceeding 3-4 MB (unoptimized HD images, poorly implemented JS carousels) regularly lose 15 to 25% of conversions on low-bandwidth segments.

What's interesting is that Google isn't discussing Core Web Vitals or other technical metrics here. It frames the subject around raw user experience, a more universal angle that is less exposed to changes in how metrics are defined.

In which cases does page size become truly critical?

Field observations point to a few practical thresholds. Beyond 2 MB on mobile, performance problems multiply. Beyond 5 MB on desktop, even on decent connections, full page display often takes more than 5 seconds, the psychological threshold at which users begin to doubt.

Pages with heavy media content (photo portfolios, enriched articles, video landing pages) are most exposed. But the problem doesn't always come from visible content: third-party scripts, tracking pixels, social widgets often add 1 to 2 MB invisibly. [To verify]: Google doesn't indicate whether the size it mentions includes or excludes third-party resources blocked by ad blockers.
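Because these scripts are easy to overlook, it helps to split measured weight by origin. A minimal Python sketch, assuming you already have (URL, bytes) pairs, for instance exported from DevTools or a HAR file; the hostnames and sizes below are made-up placeholders. It measures what your test browser loaded, so visitors whose ad blockers stop some of these requests will see different totals.

```python
from urllib.parse import urlsplit


def third_party_bytes(resources, first_party_hosts):
    """Sum transferred bytes for first-party vs third-party origins.

    resources: iterable of (url, size_in_bytes) pairs.
    first_party_hosts: hostnames you control; their subdomains count as first-party.
    """
    totals = {"first_party": 0, "third_party": 0}
    for url, size in resources:
        host = (urlsplit(url).hostname or "").lower()
        # Exact match or a true subdomain ("cdn.example.com"), not "notexample.com".
        is_first = any(host == h or host.endswith("." + h) for h in first_party_hosts)
        totals["first_party" if is_first else "third_party"] += size
    return totals


if __name__ == "__main__":
    # Made-up sample: one HTML document, one first-party bundle, two third-party scripts.
    sample = [
        ("https://www.example.com/", 80_000),
        ("https://cdn.example.com/app.js", 300_000),
        ("https://tracker.example.net/pixel.js", 200_000),
        ("https://social.example.org/widget.js", 900_000),
    ]
    print(third_party_bytes(sample, ["example.com"]))
    # {'first_party': 380000, 'third_party': 1100000}
```

In this made-up sample, third-party resources account for about three quarters of the bytes, the kind of finding worth challenging script by script.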

Are there exceptions where size matters less?

For ultra-qualified B2B corporate audiences with guaranteed professional connections, the impact is marginal. Same for certain SaaS content where the user is already engaged and willing to wait (complex dashboards, business tools).

But be careful: even in these cases, the first visit remains critical. A prospect discovering your tool through a Google search won't grant you the same patience as an existing user. The argument "our target has good bandwidth" rarely holds up against analytics reality.

Practical impact and recommendations

How to effectively audit your pages' actual weight?

First step: measure under realistic conditions, not just on your own machine and connection. Combine several angles: WebPageTest with a simulated 3G/4G profile, Chrome DevTools with network throttling, GTmetrix run from a remote test location.

Then break down weight by resource type and identify the major contributors: unoptimized images (JPEG instead of WebP, missing responsive images), bulky JavaScript (full libraries loaded for a handful of functions), and custom fonts that haven't been subsetted.
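The breakdown by resource type can be scripted from a HAR export (the DevTools Network panel and WebPageTest can both produce one). A minimal Python sketch, assuming the standard HAR 1.2 fields `response.bodySize` and `response.content.mimeType`; the MIME-to-category mapping is a simplified assumption that you will likely need to extend for your stack.

```python
import json
from collections import defaultdict


def classify(mime: str) -> str:
    """Map a MIME type to a coarse resource category (simplified; extend as needed)."""
    if mime.startswith("image/"):
        return "image"
    if mime in ("application/javascript", "text/javascript", "application/x-javascript"):
        return "script"
    if mime == "text/css":
        return "stylesheet"
    if mime.startswith("font/") or mime in ("application/font-woff", "application/font-woff2"):
        return "font"
    if mime == "text/html":
        return "html"
    return "other"


def weight_by_type(har: dict) -> dict:
    """Sum response body bytes per category for every entry in a HAR log."""
    buckets = defaultdict(int)
    for entry in har["log"]["entries"]:
        response = entry["response"]
        content = response.get("content", {})
        # Drop parameters such as "; charset=utf-8" before classifying.
        mime = content.get("mimeType", "").split(";")[0].strip().lower()
        size = response.get("bodySize", -1)
        if size < 0:
            # HAR uses -1 for "unknown"; fall back to the decoded size, which
            # overstates transfer weight for compressed responses.
            size = content.get("size", 0)
        buckets[classify(mime)] += max(size, 0)
    return dict(buckets)


def load_har(path: str) -> dict:
    """Read a HAR file exported from DevTools or WebPageTest."""
    with open(path, encoding="utf-8") as f:
        return json.load(f)
```

Calling `weight_by_type(load_har("page.har"))`, where `page.har` is a hypothetical export path, returns a dict of bytes per category that you can sort to find the largest contributors.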

Which optimizations should you prioritize to reduce size without degrading UX?

The classic order of attack: images first (compression, modern formats, lazy loading), then JavaScript (tree shaking, code splitting, deferring non-critical scripts), finally CSS (purging unused rules, inlining critical CSS).
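For the lazy-loading step, the browser's native `loading="lazy"` attribute on `<img>` is usually enough. The sketch below is a hypothetical Python template helper: it assumes the first image(s) are above the fold and keeps them eager, because lazy-loading the main hero image delays first render, and it always emits `width`/`height` so the browser can reserve space and avoid layout shift. The `eager_count` cut-off is an assumption; the real above-the-fold set depends on your layout.

```python
from html import escape


def img_tag(src: str, alt: str, width: int, height: int, above_fold: bool) -> str:
    """Build an <img> tag, deferring offscreen images with native lazy loading."""
    attrs = [
        f'src="{escape(src)}"',
        f'alt="{escape(alt)}"',
        f'width="{width}"',    # explicit dimensions let the browser reserve space
        f'height="{height}"',
    ]
    if not above_fold:
        attrs.append('loading="lazy"')  # fetched only when the image nears the viewport
    return "<img " + " ".join(attrs) + ">"


def render_gallery(images, eager_count: int = 1) -> str:
    """images: list of (src, alt, width, height); the first eager_count load eagerly."""
    return "\n".join(
        img_tag(src, alt, w, h, above_fold=i < eager_count)
        for i, (src, alt, w, h) in enumerate(images)
    )


if __name__ == "__main__":
    print(render_gallery([
        ("/img/hero.webp", "Product hero", 1200, 600),
        ("/img/detail-1.webp", "Detail view", 600, 400),
    ]))
```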

Don't neglect third-party resources: a misconfigured tracking pixel can weigh 200 KB and block rendering. Audit every external script, challenge whether it is really needed, and load it asynchronously or defer it.
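To start that audit, you can list external scripts that load synchronously, since a classic script without `async` or `defer` blocks HTML parsing until it is downloaded and executed. A minimal sketch using Python's standard-library `html.parser`; it only inspects static markup, so scripts injected at runtime (by a tag manager, for example) won't appear, and the URLs are placeholders.

```python
from html.parser import HTMLParser


class ScriptAudit(HTMLParser):
    """Collect external <script src> tags and note whether they block parsing."""

    def __init__(self):
        super().__init__()
        self.blocking = []      # external scripts with neither async nor defer
        self.non_blocking = []  # external scripts loaded with async, defer, or as modules

    def handle_starttag(self, tag, attrs):
        if tag != "script":
            return
        a = dict(attrs)  # boolean attributes such as async arrive with value None
        src = a.get("src")
        if not src:
            return  # inline script: no extra network request
        # Module scripts are deferred by default, so they don't block parsing either.
        if "async" in a or "defer" in a or a.get("type") == "module":
            self.non_blocking.append(src)
        else:
            self.blocking.append(src)


if __name__ == "__main__":
    page = """<head>
    <script src="https://tracker.example.net/pixel.js"></script>
    <script src="/app.js" defer></script>
    <script async src="https://social.example.org/widget.js"></script>
    </head>"""
    audit = ScriptAudit()
    audit.feed(page)
    print(audit.blocking)  # ['https://tracker.example.net/pixel.js']
```

Each entry in `blocking` is a candidate to remove, load with `async`, or move behind `defer`.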

Which tools automate page weight monitoring?

Lighthouse CI integrated into your pipelines, SpeedCurve or Calibre for continuous monitoring, the PageSpeed Insights API to track trends over time. Define performance budgets: alert if a page exceeds X KB, and block the build if a change adds more than Y KB without explicit approval.

Automation prevents silent regressions: a developer adds a JS library, an HD image slips into a PR — without alerts, weight creeps up without anyone noticing.
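Lighthouse CI and the other tools mentioned have their own budget settings, but the two rules above (alert at X KB, block at +Y KB without approval) can be sketched independently of any tool. A minimal Python sketch, assuming you already collect each page's weight for the current build and for a baseline such as the main branch; `Budget`, `check_budget` and the 2,000 KB / 100 KB values are hypothetical placeholders for your own X and Y.

```python
from dataclasses import dataclass


@dataclass
class Budget:
    max_page_kb: int      # alert threshold for total page weight ("X KB")
    max_increase_kb: int  # growth allowed per change without sign-off ("Y KB")


def check_budget(current_kb: float, baseline_kb: float, budget: Budget,
                 approved: bool = False) -> tuple:
    """Return ("pass" | "warn" | "fail", messages) for one page."""
    status, messages = "pass", []
    if current_kb > budget.max_page_kb:
        status = "warn"  # over budget: alert, but don't block on this alone
        messages.append(f"page is {current_kb:.0f} KB, over the {budget.max_page_kb} KB budget")
    increase = current_kb - baseline_kb
    if increase > budget.max_increase_kb and not approved:
        status = "fail"  # large unapproved growth: block the build
        messages.append(f"change adds {increase:.0f} KB (limit {budget.max_increase_kb} KB) without approval")
    return status, messages


if __name__ == "__main__":
    budget = Budget(max_page_kb=2000, max_increase_kb=100)
    print(check_budget(2150, 1900, budget))
```

In a pipeline, map `"fail"` to a non-zero exit code so the build stops until someone explicitly approves the increase.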

- Audit total weight and weight by resource type on a representative sample of pages

- Convert images to WebP/AVIF, compress them, and lazy-load those below the fold

- Analyze and reduce JavaScript bundle weight (code splitting, tree shaking)

- Defer or remove third-party scripts non-essential to first paint

- Implement automated performance budgets in your CI/CD pipeline

- Continuously monitor page weight evolution with alerts on overages

❓ Frequently Asked Questions

What is the maximum page weight recommended by Google?

Do Core Web Vitals directly measure page weight?

Does lazy loading completely solve the problem of large pages?

Do CDNs and HTTP/3 make up for page bloat?

Should you prioritize server-side compression or optimization at the source?

🎥 Source: a Google Search Central video published on 30/03/2026
