
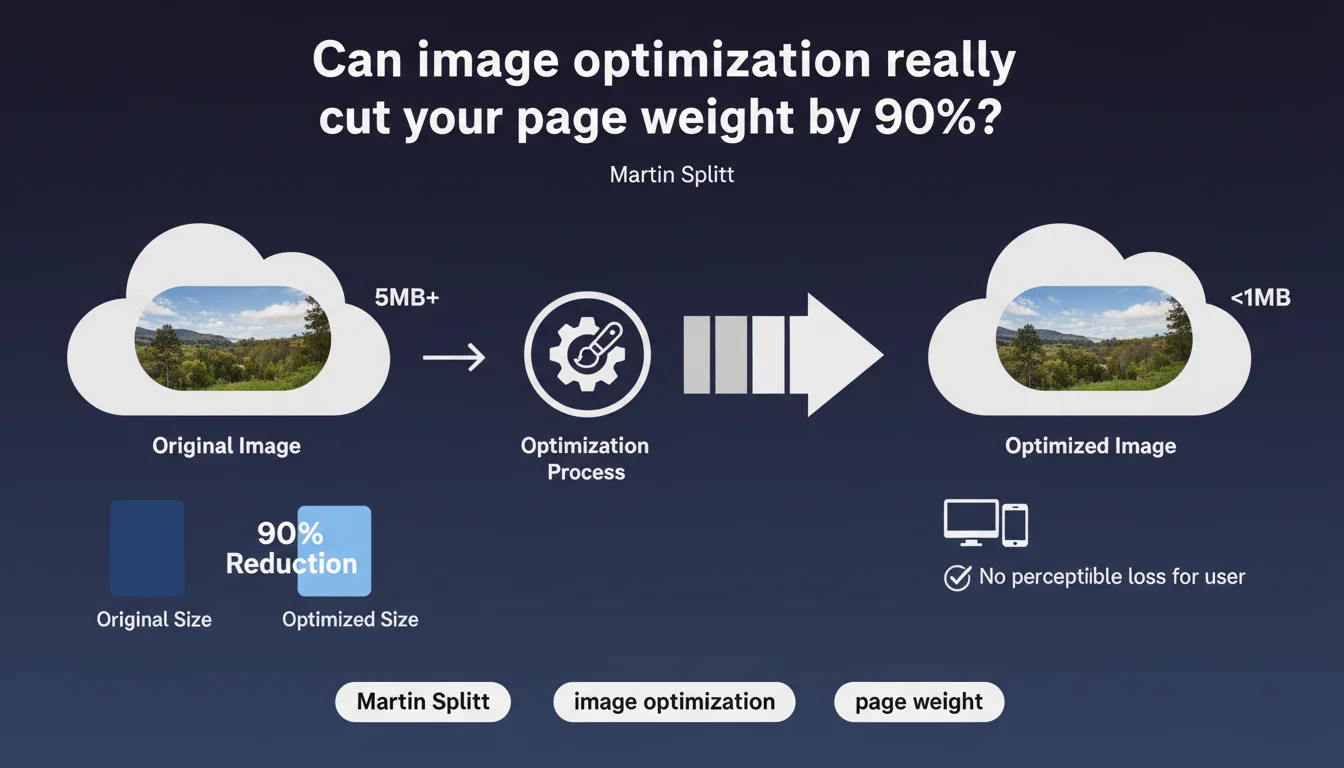

Google confirms that a properly compressed image maintains its visual quality while drastically reducing its weight — from several megabytes to under 1 MB. For SEO practitioners, this is a direct lever on Core Web Vitals and mobile experience. The message is clear: neglecting image optimization is sabotaging your performance for no valid reason.

What you need to understand

Why does Google insist so much on image optimization?

Because images make up the majority of web page weight. Martin Splitt points out that appropriate compression can take an image from several megabytes to under one, with no perceptible degradation on screen. For Google, unoptimized images are a major irritant: slow sites consume bandwidth, degrade the mobile experience, and slow down crawling.

The search engine has long valued Core Web Vitals, and Largest Contentful Paint (LCP) is often degraded by unoptimized images. Reducing the weight of visual resources generally improves this score and, through it, can support organic visibility.

What does "appropriately compressed" mean?

Google doesn't give a magic number — and that's intentional. Compression depends on format, context, and visual content. A photo with subtle gradients tolerates less aggression than a vector illustration or flat design icon.

Concretely, "appropriate" means no perceptible loss for the end user on a standard screen: no visible pixelation, no unsightly artifacts. The right setting is a trade-off between perceived quality and actual performance, and that is where mistakes multiply in practice.

What are the real gains for an SEO site?

Reducing image weight directly impacts three dimensions: loading speed, mobile experience, crawl budget. On mobile, a site that goes from 5 MB to 800 KB drastically reduces its Time to Interactive and LCP. On category pages in e-commerce with 50+ products, the effect is immediate.
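To put the 5 MB versus 800 KB figure in perspective, here is a rough back-of-the-envelope sketch. The 1.6 Mbps throughput is an assumed value for a slow mobile connection, not a number from Google, and the calculation deliberately ignores latency, parallel requests, and caching. It also assumes 1 MB = 1,000,000 bytes.

```python
def transfer_seconds(size_bytes: int, throughput_mbps: float) -> float:
    """Idealized download time: payload size in bits divided by link throughput."""
    bits = size_bytes * 8
    return bits / (throughput_mbps * 1_000_000)


ASSUMED_THROUGHPUT_MBPS = 1.6  # assumption: slow mobile link, for illustration only

heavy = transfer_seconds(5_000_000, ASSUMED_THROUGHPUT_MBPS)  # 5 MB page
light = transfer_seconds(800_000, ASSUMED_THROUGHPUT_MBPS)    # 800 KB page

print(f"5 MB page:   {heavy:.1f} s")
print(f"800 KB page: {light:.1f} s")
```

Under these assumptions the transfer alone drops from roughly 25 seconds to roughly 4 seconds. Real-world numbers will differ, but the ratio between the two payloads stays the same.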

On the crawl side, a lighter site means more pages crawled per Googlebot session. For large inventories, this is a significant lever. Less obvious but equally strategic is the impact on bounce rate and engagement, which act as indirect ranking factors.

- Improved LCP: heavy images are often the first visible element, thus the main brake on Largest Contentful Paint.

- Saved bandwidth: for mobile users on unstable 4G, but also for your server and CDN.

- Optimized crawl budget: Googlebot crawls more pages in less time if each resource is lightened.

- Conversion rate (indirect effect): a page that loads quickly converts better. Google knows it, and factors it in.

SEO Expert opinion

Is this statement consistent with field observations?

Yes, without ambiguity. Audits show that 70 to 80% of sites don't compress their images correctly. JPEGs served at 100% quality, PNGs used for photos, desktop images served as-is on mobile: the errors are massive and systemic. Martin Splitt is stating the obvious, but with good reason: the problem persists.

Where it gets tricky is that Google remains deliberately vague on thresholds. "Several megabytes to less than one" — okay, but for what resolution? What format? What JPEG quality? No precise figures. It's frustrating for a practitioner looking for an actionable reference. [To verify]: Google publishes no recommended format/compression/resolution matrix.

What nuances should be added?

First, "visually identical" is subjective. On a Retina screen in bright sunlight, JPEG Q75 compression may go unnoticed. On a 4K screen in a controlled environment, artifacts become visible. The perception threshold varies by device, context, and visual content itself.

Next, not all formats are equal. WebP and AVIF offer better compression/quality ratios than JPEG — but Google doesn't mention them here. That's a notable omission. The practitioner must find this info elsewhere. Splitt talks about "appropriate compression," but doesn't guide on which technologies to prioritize in 2025.

In what cases does this rule not apply?

Rarely. But certain contexts impose trade-offs. For an art or professional photography site, visual quality takes absolute priority — overly aggressive compression would destroy the value proposition. Same for graphic designer portfolio sites, where every pixel counts.

Another case: images serving as proof or technical documentation. Blueprints, annotated diagrams, screenshots with text — lossy compression can render critical details unreadable. There, you must intelligently balance weight and readability. But let's be honest: these exceptions concern 5% of sites. For 95% of the web, this rule applies without reservation.

Practical impact and recommendations

What should you do concretely?

First step: audit your existing setup. PageSpeed Insights, Lighthouse, WebPageTest — these tools identify unoptimized images and their optimization potential. Look for JPGs > 200 KB, PNGs used for photos, images served in desktop resolution on mobile. That's where quick wins are.

Next, define a compression strategy by content type. E-commerce product photos tolerate JPEG Q75-80 without issue. Flat design illustrations work better in WebP or optimized PNG. Simple icons and logos should be SVG — vector format, trivial weight. Don't settle for mass compression: segment.
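One way to make this segmentation explicit is a small lookup table. The sketch below simply encodes the guidance from the paragraph above; the content-type labels and the `profile_for` helper are hypothetical names chosen for illustration, not part of any tool.

```python
# Illustrative profiles taken from the guidance above.
# The keys ("photo", "illustration", ...) are hypothetical labels.
COMPRESSION_PROFILES = {
    "photo": {"format": "JPEG", "quality": (75, 80)},
    "illustration": {"format": "WebP or optimized PNG", "quality": None},
    "icon": {"format": "SVG", "quality": None},
    "logo": {"format": "SVG", "quality": None},
}


def profile_for(content_type: str) -> dict:
    """Return the profile for a content type, or fail loudly if none is defined."""
    try:
        return COMPRESSION_PROFILES[content_type]
    except KeyError:
        raise ValueError(
            f"No compression profile defined for {content_type!r}"
        ) from None


print(profile_for("photo"))
```

Failing on an unknown type, rather than falling back to a default, forces each new kind of image to be classified before it is compressed.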

- Identify images > 200 KB via Lighthouse audit or Screaming Frog.

- Test multiple compression levels (Q70, Q75, Q80 for JPEG) and compare visually.

- Adopt WebP with JPEG fallback to maximize compatibility.

- Implement native lazy loading on all images outside the initial viewport.

- Use srcset and sizes attributes to serve the resolution adapted to each device.

- Automate compression via a CI/CD workflow or reliable CMS plugin.
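Several items in this checklist (WebP with JPEG fallback, srcset and sizes, native lazy loading) come together in a single piece of markup. The sketch below generates it from Python. The file naming scheme `<base>-<width>.<ext>` and the `picture_markup` helper are assumptions made for illustration; adapt them to however your image variants are actually stored.

```python
from html import escape


def picture_markup(base_url: str, widths: list[int], sizes: str,
                   alt: str, lazy: bool = True) -> str:
    """Return a <picture> element with a WebP source and a JPEG fallback.

    Assumes (hypothetically) that variants are stored as
    "<base_url>-<width>.webp" and "<base_url>-<width>.jpg".
    """
    def srcset(ext: str) -> str:
        # One candidate per width, using the "w" width descriptor.
        return ", ".join(f"{base_url}-{w}.{ext} {w}w" for w in sorted(widths))

    # Smallest JPEG as the plain src for browsers that ignore srcset.
    fallback_src = f"{base_url}-{min(widths)}.jpg"
    # Lazy-load only images outside the initial viewport (pass lazy=False otherwise).
    loading = ' loading="lazy"' if lazy else ""
    return (
        "<picture>\n"
        f'  <source type="image/webp" srcset="{escape(srcset("webp"))}" '
        f'sizes="{escape(sizes)}">\n'
        f'  <img src="{escape(fallback_src)}" srcset="{escape(srcset("jpg"))}" '
        f'sizes="{escape(sizes)}" alt="{escape(alt)}"{loading}>\n'
        "</picture>"
    )


print(picture_markup("/img/product", [480, 960, 1440],
                     "(max-width: 600px) 100vw, 50vw", "Product photo"))
```

For the image most likely to be the LCP element, call the helper with `lazy=False`, since lazy loading is meant for images outside the initial viewport.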

What errors should you avoid?

Compressing blindly without visual validation. That's the most common mistake. An automated tool can butcher an image with subtle gradients or fine text. Always manually check a representative sample before global deployment.

Another trap: neglecting modern formats. Limiting yourself to JPEG in 2025 is leaving 30 to 40% of gain on the table. WebP is supported by 95% of browsers, AVIF is gaining ground. Ignoring these formats is a counterproductive choice. Yet hundreds of sites still serve only legacy JPEG/PNG.

How do you verify your site is compliant?

Run a full crawl with Screaming Frog or Sitebulb, filtering images > 150 KB. Cross-reference with PageSpeed Insights data to identify critical images — those impacting LCP. Prioritize optimizing these resources first.
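The filtering and prioritization described above can be sketched as a small function. The `ImageRecord` structure and its `is_lcp` flag are hypothetical: they stand in for whatever your crawler export and PageSpeed Insights data actually provide. The sketch assumes 1 KB = 1,000 bytes.

```python
from dataclasses import dataclass


@dataclass
class ImageRecord:
    url: str
    size_bytes: int
    is_lcp: bool = False  # hypothetical flag, e.g. filled in from a PageSpeed Insights report


def prioritize(images: list[ImageRecord], threshold_kb: int = 150) -> list[ImageRecord]:
    """Keep images above the threshold; LCP images first, then heaviest first."""
    heavy = [img for img in images if img.size_bytes > threshold_kb * 1000]
    return sorted(heavy, key=lambda img: (not img.is_lcp, -img.size_bytes))


# Made-up sample data standing in for a crawl export.
crawl = [
    ImageRecord("/img/hero.jpg", 900_000, is_lcp=True),
    ImageRecord("/img/banner.png", 2_400_000),
    ImageRecord("/img/icon.png", 12_000),
    ImageRecord("/img/thumb.jpg", 180_000),
]

for img in prioritize(crawl):
    print(img.url, img.size_bytes // 1000, "KB")
```

With this sample data, the LCP hero image comes first even though the banner is heavier, and the 12 KB icon is dropped from the list entirely.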

Then test on WebPageTest in mobile 3G conditions. That's the most revealing scenario: if your page loads in under 3 seconds with optimized images, you're in the clear. Beyond that, dig deeper. Finally, validate visually across multiple devices — smartphone, tablet, desktop — to ensure no perceptible degradation.

Optimizing images is an SEO lever with strong ROI — but implementation demands rigor and method. Between format selection, compression thresholds, responsive implementation, and automation, variables multiply. If your team lacks time or technical expertise, it may be wise to entrust this project to a specialized SEO agency. External eyes and a proven methodology often accelerate performance gains — and avoid costly mistakes.

❓ Frequently Asked Questions

What JPEG compression level does Google recommend?

Is WebP required to rank well?

Can unoptimized images directly hurt my ranking?

Should you optimize all images or only those above the fold?

Is a CDN enough to compensate for heavy images?

🎥 From the same video 43

Other SEO insights extracted from this same Google Search Central video · published on 30/03/2026
