Official statement

Other statements from this video 43 ▾

- □ Does the 15 MB Googlebot crawl limit really kill your indexation, and how can you fix it?

- □ Is Google Really Measuring Page Weight the Way You Think It Does?

- □ Has mobile page weight tripled in 10 years? Why should SEO professionals care about this trend?

- □ Is your structured data bloating your pages too much to be worth the SEO investment?

- □ Is your mobile site missing critical content that exists on desktop?

- □ Is your desktop content disappearing from Google rankings because it's missing on mobile?

- □ Is Google really processing 40 billion spam URLs every single day?

- □ Does network compression really improve your site's crawl budget?

- □ Is lazy loading really essential to optimize your initial page weight and boost Core Web Vitals?

- □ Does Googlebot really stop crawling after 15 MB per URL?

- □ Has mobile page weight really tripled in just one decade?

- □ Does page weight really affect user experience and SEO performance?

- □ Does structured data really bloat your HTML and hurt page performance?

- □ Is mobile-desktop parity really costing you search rankings more than you think?

- □ Should you still worry about page weight for SEO in 2024?

- □ Is resource size really the make-or-break factor for your website's speed?

- □ Is Google really enforcing a strict 1 MB limit on images—and what does that tell you about SEO priorities?

- □ Does optimizing page size actually benefit users more than it benefits your search rankings?

- □ Does Googlebot really cap crawling at 15 MB per URL?

- □ Is exploding web page weight hurting your SEO? Here's what you need to know

- □ Is page size really still hurting your SEO in 2024?

- □ Are structured data slowing down your pages enough to harm your SEO?

- □ Does page loading speed really impact your conversion rates?

- □ Does network compression really optimize user device storage space, or is it just a temporary fix?

- □ Is content disparity between mobile and desktop killing your rankings in mobile-first indexing?

- □ Is lazy loading really a must-have SEO performance lever you should activate systematically?

- □ Does Google really block 40 billion spam URLs daily—and how does your site avoid the filter?

- □ Can image optimization really cut your page weight by 90%?

- □ Does Googlebot really stop at 15 MB per URL?

- □ Why is mobile-desktop parity sabotaging your rankings in Mobile-First Indexing?

- □ Is your page weight really slowing down your SEO performance?

- □ Does structured data really slow down your crawl budget?

- □ Does Google really block 40 billion spam URLs every single day?

- □ Should you really cap your images at 1 MB to satisfy Google?

- □ Does Googlebot really stop crawling after 15 MB per URL?

- □ Does site speed really impact your conversion rates?

- □ Is mobile-desktop mismatch really destroying your SEO rankings right now?

- □ Do structured data markups really bloat your HTML pages?

- □ Does page size really matter for SEO when internet connections keep getting faster?

- □ Is network compression really enough to optimize your site's crawlability?

- □ Can lazy loading really boost your performance without hurting crawlability?

- □ Does your website's overall size really hurt your SEO performance?

- □ Why does Google enforce a strict 1MB image size limit across its developer documentation?

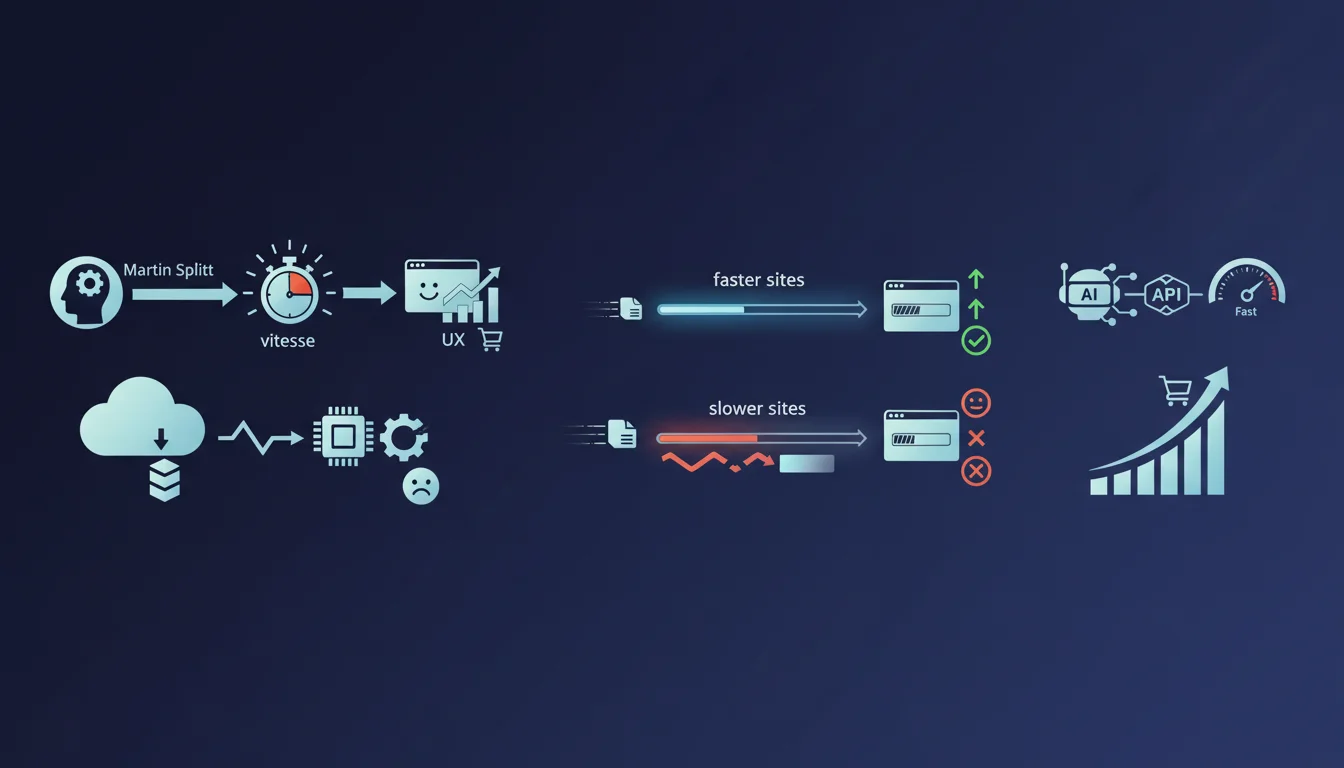

Google confirms what many suspected: faster websites convert better and retain more visitors. Speed depends directly on page weight: the more data there is to transfer, the longer the page takes to display. In concrete terms, reducing page weight means improving conversion.

What you need to understand

Why does Google link speed and conversion?

Martin Splitt's statement draws on empirical studies showing a direct correlation between loading time and user behavior. A slow site predictably increases bounce rate and reduces conversions, a pattern observed across millions of sessions.

Google doesn't just claim that speed matters for SEO: it explicitly points to its impact on business metrics. This shift in discourse is significant — technical performance becomes a lever for revenue, not just a ranking criterion.

What role does page weight play in this equation?

Page weight is the mass of data to download before display: HTML, CSS, JavaScript, images, fonts, videos. The higher this volume, the longer the network takes to transfer, and the more the processor struggles to parse, execute, and render the content.

Splitt restates an elementary, often overlooked principle: every kilobyte counts. Modern websites often accumulate hundreds of kilobytes of unused JavaScript, unoptimized images, and exotic fonts. The result: pages that easily exceed 3 to 4 MB for content that could fit in 500 KB.
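To put the gap between 3-4 MB and 500 KB into rough numbers, the sketch below computes only the bandwidth term of transfer time (bytes × 8 ÷ bits per second). The 1.6 Mbps link speed is an illustrative assumption, not a Google figure. The model also ignores latency, parsing, and execution, so real load times will be longer.

```python
MB = 1_000_000  # decimal megabyte (10^6 bytes)
KB = 1_000      # decimal kilobyte (10^3 bytes)


def transfer_seconds(size_bytes: int, bandwidth_mbps: float) -> float:
    """Seconds needed to move size_bytes over a link of bandwidth_mbps megabits/s.

    Bandwidth term only: ignores round-trip latency, TCP slow start, parallel
    connections, and CPU time spent parsing and executing the downloaded bytes.
    """
    return size_bytes * 8 / (bandwidth_mbps * 1_000_000)


# Hypothetical 1.6 Mbps mobile link, chosen only for illustration.
LINK_MBPS = 1.6
heavy = transfer_seconds(int(3.5 * MB), LINK_MBPS)
lean = transfer_seconds(500 * KB, LINK_MBPS)
print(f"3.5 MB page: {heavy:.1f} s; 500 KB page: {lean:.1f} s")
```

Under these assumptions, the heavier page spends seven times longer on the wire (17.5 s versus 2.5 s) before any rendering work even begins.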

How does this claim differ from the usual discourse?

Google typically talks about Core Web Vitals in abstract terms: LCP, INP, CLS. Here, Splitt takes a much more direct angle: speed = conversion = money. It's a pragmatic message that bypasses technical debates and goes straight to what decision-makers care about.

It also refocuses attention on page weight rather than server or CDN optimizations. It's a subtle way of saying the main problem often comes from the front-end — too many resources, poorly compressed, poorly prioritized.

- Speed and conversion are linked by multiple studies, not merely by anecdotal evidence.

- Page weight is the primary lever — reducing the volume of data transferred mechanically improves speed.

- Google orients the discourse toward business impact to reach an audience beyond SEO technicians.

- The underlying message: clean up your front-end before looking for exotic optimizations.

SEO Expert opinion

Is this statement consistent with field observations?

Yes, and it's even one of the rare points where Google and SEO practitioners unanimously agree. A/B tests conducted by Amazon, Walmart, Akamai, and dozens of other major players show that an additional second of latency can cost several points of conversion. The studies cited by Splitt are not hypotheses — they are documented and replicated.

In the field, we regularly see e-commerce sites lose 10 to 20% of their revenue because of excessive loading times. Mobile users are particularly sensitive: beyond 3 seconds, the majority abandon the page. It's not a matter of perception; it's statistical.

What nuances should be added to this discourse?

Splitt's statement posits a simple causal chain: heavy pages = slowness = fewer conversions. As a general rule, it holds. But reality is somewhat more complex.

First, not all kilobytes are equal. A 50 KB render-blocking tracking script at the top of a page slows rendering far more than a 200 KB image loaded lazily. The problem isn't just raw weight. It's also load order, JavaScript parsing, and blocking requests.
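One way to capture the "not all kilobytes are equal" point is to count only the bytes on the critical rendering path. The sketch below uses a hypothetical `Resource` record and a deliberately simple rule: anything render-blocking and not lazy counts as critical. Real browsers are more nuanced, taking into account request priorities, the preload scanner, and script execution cost.

```python
from dataclasses import dataclass


@dataclass
class Resource:
    """Hypothetical record for one downloaded file (sizes in KB)."""
    url: str
    size_kb: float
    render_blocking: bool  # e.g. synchronous <script> or stylesheet in <head>
    lazy: bool = False     # e.g. <img loading="lazy"> below the fold


def total_kb(resources: list[Resource]) -> float:
    """Raw page weight: every byte counts the same."""
    return sum(r.size_kb for r in resources)


def critical_path_kb(resources: list[Resource]) -> float:
    """Bytes that must arrive before first render (simplified rule)."""
    return sum(r.size_kb for r in resources if r.render_blocking and not r.lazy)


page = [
    Resource("https://example.com/js/tracker.js", 50, render_blocking=True),
    Resource("https://example.com/img/gallery.jpg", 200, render_blocking=False, lazy=True),
]
print(total_kb(page), critical_path_kb(page))  # 250 50
```

The page carries 250 KB in total, but only the 50 KB script stands between the user and first render. That is why it does more damage than an image four times heavier.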

Second, speed is not the only conversion factor. A fast but poorly designed site, with a confusing purchase flow or uncompetitive products, will still convert poorly. Speed is a necessary condition, not a sufficient one.

Third, some types of sites can justify more weight, such as an interactive 3D configurator or a complex SaaS tool. The trade-off between functional richness and lightness depends on business context. Don't pursue digital austerity at all costs.

In what cases doesn't this rule fully apply?

On sites with very high brand recognition or in a monopoly situation, the impact of slowness is cushioned by the lack of alternatives. If you're the only player in a niche market, your users will wait. But that's an exception — and even then, you're leaving money on the table.

Sites with captive audiences, such as intranets or B2B platforms on long-term contracts, can also ease off a bit. Users have no choice, so they'll come back. But frustration accumulates and eventually weighs on contract renewal.

Practical impact and recommendations

What should you do concretely to reduce page weight?

First step: measure. Use WebPageTest, Lighthouse, or GTmetrix to identify the heaviest resources. Often, 80% of the weight comes from 20% of the files — unoptimized images, oversized JS libraries, unnecessary fonts.
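Browser DevTools and WebPageTest can export a HAR (HTTP Archive) file. The sketch below reads the standard HAR 1.2 fields `log.entries[].request.url`, `response.bodySize` and `response.content.size` to list the five heaviest resources. It also checks how much of the total the top 20% of files carry. Treating a `bodySize` of 0 or -1 (cached or unknown) as a cue to use the decoded size instead is an assumption made for a cold-load audit. The file name `page.har` is hypothetical.

```python
import json


def entry_size(entry: dict) -> int:
    """Bytes for one HAR 1.2 entry.

    response.bodySize is the received body size (0 for cached responses, -1 if
    unknown). In those cases this sketch falls back to response.content.size,
    the decoded size, so cached files still count toward a cold-load audit.
    """
    response = entry["response"]
    body = response.get("bodySize", -1)
    if body > 0:
        return body
    return max(response.get("content", {}).get("size", 0), 0)


def heaviest(har: dict, n: int = 5) -> list[tuple[str, int]]:
    """The n largest resources as (url, bytes), heaviest first."""
    sizes = [(e["request"]["url"], entry_size(e)) for e in har["log"]["entries"]]
    return sorted(sizes, key=lambda item: item[1], reverse=True)[:n]


def top_share(har: dict, fraction: float = 0.2) -> float:
    """Share of total bytes carried by the heaviest `fraction` of files."""
    sizes = sorted((entry_size(e) for e in har["log"]["entries"]), reverse=True)
    total = sum(sizes)
    if total == 0:
        return 0.0
    k = max(1, round(len(sizes) * fraction))
    return sum(sizes[:k]) / total


def load_har(path: str) -> dict:
    """Read a HAR export from disk."""
    with open(path, encoding="utf-8") as f:
        return json.load(f)


# Tiny inline HAR for illustration; a real audit would call load_har("page.har").
pairs = [("https://example.com/app.js", 900_000), ("https://example.com/hero.jpg", 700_000)]
pairs += [(f"https://example.com/icon{i}.png", 50_000) for i in range(8)]
demo = {"log": {"entries": [
    {"request": {"url": url}, "response": {"bodySize": size, "content": {"size": size}}}
    for url, size in pairs
]}}

print(heaviest(demo, 2))
print(f"Top 20% of files carry {top_share(demo):.0%} of the bytes")
```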

Next, compress images — switch to WebP or AVIF, resize to actual display dimensions. An image of 2 MB served in a 300 px wide container is pure waste. Serve modern formats with a fallback for older browsers.
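The waste in the 300 px example can be quantified. Assume a device pixel ratio of 2 (this varies by device). The container then needs an image about 600 px wide, so a hypothetical 3000 px original decodes 25 times more pixels than necessary. The helpers below compute that factor and emit a `<picture>` element that offers AVIF, then WebP, with a JPEG fallback. The `<basename>.avif/.webp/.jpg` naming scheme is hypothetical.

```python
import html
import math


def needed_width(container_css_px: int, device_pixel_ratio: float = 2.0) -> int:
    """Image width in device pixels required to look sharp in the container."""
    return math.ceil(container_css_px * device_pixel_ratio)


def oversize_factor(intrinsic_width: int, container_css_px: int,
                    device_pixel_ratio: float = 2.0) -> float:
    """How many times more pixels are decoded than displayed (same aspect ratio)."""
    ratio = intrinsic_width / needed_width(container_css_px, device_pixel_ratio)
    return ratio ** 2


def picture_markup(basename: str, width: int, height: int, alt: str,
                   lazy: bool = True) -> str:
    """<picture> with AVIF and WebP sources and a JPEG fallback.

    Browsers use the first <source> whose type they support and otherwise fall
    back to the <img>. Pass lazy=False for above-the-fold images (e.g. the LCP image).
    """
    name = html.escape(basename, quote=True)
    loading = ' loading="lazy"' if lazy else ""
    return (
        "<picture>\n"
        f'  <source srcset="{name}.avif" type="image/avif">\n'
        f'  <source srcset="{name}.webp" type="image/webp">\n'
        f'  <img src="{name}.jpg" width="{width}" height="{height}" '
        f'alt="{html.escape(alt, quote=True)}"{loading}>\n'
        "</picture>"
    )


print(oversize_factor(3000, 300))  # 25.0
print(picture_markup("product-600w", 300, 200, "Blue running shoe"))
```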

On the JavaScript side, apply tree-shaking and code-splitting. If you're shipping an entire library to use a single function, it's time to refactor. Defer or lazy-load everything that isn't critical to first paint.
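Your bundler (webpack, Rollup, esbuild and similar tools) handles tree-shaking and code-splitting, so they are not shown here. The deferral part can be sketched as a small template helper. The function name and its `critical`/`independent` flags are hypothetical. The helper relies on standard HTML behavior: `defer` scripts download in parallel and run in document order after parsing, while `async` scripts run as soon as they arrive, in no guaranteed order.

```python
from html import escape


def script_tag(src: str, critical: bool = False, independent: bool = False) -> str:
    """Render a <script> tag that only blocks first paint when it is critical.

    critical=True    -> classic blocking script; keep this list as short as possible.
    independent=True -> async; suits scripts that depend on nothing else (e.g. analytics).
    otherwise        -> defer; downloads in parallel, runs in order after HTML parsing.
    """
    attrs = f'src="{escape(src, quote=True)}"'
    if not critical:
        attrs += " async" if independent else " defer"
    return f"<script {attrs}></script>"


for src, flags in [("/js/app.js", {}),
                   ("/js/analytics.js", {"independent": True}),
                   ("/js/critical.js", {"critical": True})]:
    print(script_tag(src, **flags))
```

The default is `defer` rather than `async` because deferred scripts keep their relative order, which is safer for application code that depends on other scripts.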

What mistakes should you avoid in this optimization?

Don't sacrifice visual quality to the point of degrading experience. An image that's overly compressed and pixelated drives people away as much as a slow page does. You need to find the balance — aggressive compression while preserving perceived sharpness.

Avoid piling up monitoring and tracking tools on the assumption that they cost nothing. Every third-party script adds weight, requests, and parsing work. Google Analytics, Hotjar, Facebook Pixel, Drift, Intercom: before long, you're dragging 500 KB of trackers along with a 10 KB blog article. Prioritize.
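To see how much of a page's weight belongs to third parties, group resource sizes by host. This sketch takes (url, bytes) pairs, for example extracted from a HAR export, and treats the given domain and its subdomains as first-party. It does not consult the Public Suffix List. All hosts and sizes in the demo are hypothetical.

```python
from collections import defaultdict
from urllib.parse import urlsplit


def third_party_bytes(resources: list[tuple[str, int]], first_party: str) -> dict[str, int]:
    """Bytes per third-party host, heaviest first.

    A host is first-party if it equals first_party or is one of its subdomains
    (static.example.com for example.com). No Public Suffix List handling.
    """
    totals: dict[str, int] = defaultdict(int)
    for url, size in resources:
        host = urlsplit(url).hostname or ""
        if host == first_party or host.endswith("." + first_party):
            continue
        totals[host] += size
    return dict(sorted(totals.items(), key=lambda kv: kv[1], reverse=True))


page = [
    ("https://example.com/blog/post.html", 10_000),
    ("https://static.example.com/site.css", 20_000),
    ("https://analytics.example.net/tag.js", 120_000),
    ("https://heatmap.example.org/recorder.js", 180_000),
    ("https://chat.example.net/widget.js", 200_000),
]
third = third_party_bytes(page, "example.com")
share = sum(third.values()) / sum(size for _, size in page)
print(third)
print(f"Third-party share of page weight: {share:.0%}")
```

In this made-up page, the first-party article and stylesheet weigh 30 KB, while third-party scripts account for about 94% of the bytes.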

Don't rely solely on a CDN to mask the problem. A CDN accelerates distribution, but doesn't reduce intrinsic weight. If your page weighs 5 MB, it will weigh 5 MB everywhere in the world — just a bit faster. The CDN is an amplifier, not a fundamental solution.
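A deliberately rough model makes the CDN point concrete. Split load time into two terms:

- a latency term (round trips × RTT), which an edge server close to the user can shrink;
- a payload term (bytes ÷ bandwidth), which only a lighter page can shrink.

The 10 Mbps bandwidth, 10 round trips, and 150 ms versus 20 ms RTTs are illustrative assumptions. The model ignores TCP slow start, parallel downloads, and CPU work.

```python
def load_terms(size_bytes: int, bandwidth_mbps: float,
               rtt_ms: float, round_trips: int) -> tuple[float, float]:
    """Return (latency_s, payload_s) for a simplified page load.

    latency_s = round_trips * RTT   -> what a CDN edge near the user reduces
    payload_s = bytes / bandwidth   -> what only reducing page weight reduces
    """
    latency_s = round_trips * rtt_ms / 1000
    payload_s = size_bytes * 8 / (bandwidth_mbps * 1_000_000)
    return latency_s, payload_s


MB = 1_000_000
scenarios = {
    "5 MB, distant origin": load_terms(5 * MB, 10, rtt_ms=150, round_trips=10),
    "5 MB, via CDN": load_terms(5 * MB, 10, rtt_ms=20, round_trips=10),
    "1 MB, via CDN": load_terms(1 * MB, 10, rtt_ms=20, round_trips=10),
}
for name, (latency, payload) in scenarios.items():
    print(f"{name}: latency {latency:.1f} s + payload {payload:.1f} s = {latency + payload:.1f} s")
```

In this model, the CDN trims 1.3 s of latency (1.5 s down to 0.2 s) but leaves the 4 s payload untouched. Cutting the page to 1 MB removes far more.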

How do you verify that your site meets Google's expectations?

Use PageSpeed Insights and Search Console to track your Core Web Vitals. Google publishes the thresholds for a "good" rating: LCP of 2.5 s or less, INP of 200 ms or less, and CLS of 0.1 or less. These metrics are proxies for perceived loading speed, responsiveness, and visual stability.
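The sketch below rates a metric in the spirit of Core Web Vitals reporting. It takes the 75th percentile of real-user samples and compares it with the published "good" and "poor" boundaries: LCP 2.5 s / 4 s, INP 200 ms / 500 ms, and CLS 0.1 / 0.25. INP replaced FID as the responsiveness metric in March 2024. The nearest-rank percentile and the sample values are simplifying assumptions; Search Console and CrUX compute their own aggregates.

```python
import math

# Published boundaries: value <= good is "good", value > poor is "poor".
THRESHOLDS = {
    "LCP": (2500, 4000),  # milliseconds
    "INP": (200, 500),    # milliseconds
    "CLS": (0.1, 0.25),   # unitless layout-shift score
}


def p75(samples: list[float]) -> float:
    """75th percentile by the nearest-rank method (a simplifying choice)."""
    if not samples:
        raise ValueError("need at least one sample")
    ordered = sorted(samples)
    return ordered[math.ceil(0.75 * len(ordered)) - 1]


def rate(metric: str, samples: list[float]) -> str:
    """Classify the 75th-percentile value as good / needs improvement / poor."""
    good, poor = THRESHOLDS[metric]
    value = p75(samples)
    if value <= good:
        return "good"
    if value > poor:
        return "poor"
    return "needs improvement"


# Hypothetical field samples for one page.
print(rate("LCP", [1800, 2000, 2100, 2300, 2400, 2600, 3900, 4200]))  # needs improvement
print(rate("INP", [120, 180, 650, 700]))  # poor
print(rate("CLS", [0.02, 0.05, 0.08, 0.3]))  # good
```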

But don't stop at the scores; test in real conditions. Simulate a slow 3G connection, use a mid-range Android device, and browse like a typical user. Tools give you indications; the field gives you the truth.

- Audit your current page weight and identify the 5 heaviest resources.

- Compress all images to WebP or AVIF format with quality between 75 and 85.

- Remove non-critical JavaScript, or defer it until after first paint.

- Clean up unused fonts and limit yourself to 2 or 3 weights maximum.

- Reduce the number of third-party scripts and consolidate tracking tools.

- Enable Gzip or Brotli compression on the server for all text assets.

- Test your site on a mid-range Android device over a throttled slow 3G connection.

- Track your Core Web Vitals in Search Console and fix pages in red.
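The server-compression item in the checklist above is easy to verify from response headers. The helper below flags text responses served without `Content-Encoding: gzip` or `br` (Brotli). The standard-library `gzip` module then gives a feel for the savings. The set of text MIME types is an assumption to adapt to your stack. The deliberately repetitive sample CSS also compresses far better than typical real files would.

```python
import gzip

# Assumed set of text MIME types worth compressing; extend it for your stack.
TEXT_TYPES = {
    "text/html", "text/css", "text/javascript", "application/javascript",
    "application/json", "image/svg+xml",
}


def missing_compression(headers: dict[str, str]) -> bool:
    """True if a text asset was served without gzip or Brotli encoding."""
    lowered = {k.lower(): v.lower() for k, v in headers.items()}
    mime = lowered.get("content-type", "").split(";")[0].strip()
    if mime not in TEXT_TYPES:
        return False  # JPEG, WebP, AVIF, WOFF2, video: already compressed formats
    return lowered.get("content-encoding", "").strip() not in {"gzip", "br"}


def gzip_ratio(text: str) -> float:
    """Compressed size divided by original size for a UTF-8 text payload."""
    raw = text.encode("utf-8")
    return len(gzip.compress(raw)) / len(raw)


sample_css = ".button { color: #333; padding: 8px 16px; }\n" * 200
print(missing_compression({"Content-Type": "text/css; charset=utf-8"}))  # True
print(missing_compression({"Content-Type": "text/css", "Content-Encoding": "br"}))  # False
print(f"gzip keeps {gzip_ratio(sample_css):.1%} of the original bytes")
```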

❓ Frequently Asked Questions

At what page weight does speed become a real problem?

Are Core Web Vitals enough to measure my site's speed?

Does compressing images really degrade visual quality?

Can a CDN compensate for an overly heavy site?

How do you convince a client that speed affects their revenue?

Source: Google Search Central video, published on 30/03/2026.
