Official statement

Other statements from this video 43 ▾

- □ Does the 15 MB Googlebot crawl limit really kill your indexation, and how can you fix it?

- □ Is Google Really Measuring Page Weight the Way You Think It Does?

- □ Has mobile page weight tripled in 10 years? Why should SEO professionals care about this trend?

- □ Is your structured data bloating your pages too much to be worth the SEO investment?

- □ Is your mobile site missing critical content that exists on desktop?

- □ Is your desktop content disappearing from Google rankings because it's missing on mobile?

- □ Does page speed really impact conversions according to Google?

- □ Is Google really processing 40 billion spam URLs every single day?

- □ Does network compression really improve your site's crawl budget?

- □ Is lazy loading really essential to optimize your initial page weight and boost Core Web Vitals?

- □ Does Googlebot really stop crawling after 15 MB per URL?

- □ Has mobile page weight really tripled in just one decade?

- □ Does page weight really affect user experience and SEO performance?

- □ Does structured data really bloat your HTML and hurt page performance?

- □ Is mobile-desktop parity really costing you search rankings more than you think?

- □ Should you still worry about page weight for SEO in 2024?

- □ Is resource size really the make-or-break factor for your website's speed?

- □ Does optimizing page size actually benefit users more than it benefits your search rankings?

- □ Does Googlebot really cap crawling at 15 MB per URL?

- □ Is exploding web page weight hurting your SEO? Here's what you need to know

- □ Is page size really still hurting your SEO in 2024?

- □ Are structured data slowing down your pages enough to harm your SEO?

- □ Does page loading speed really impact your conversion rates?

- □ Does network compression really optimize user device storage space, or is it just a temporary fix?

- □ Is content disparity between mobile and desktop killing your rankings in mobile-first indexing?

- □ Is lazy loading really a must-have SEO performance lever you should activate systematically?

- □ Does Google really block 40 billion spam URLs daily—and how does your site avoid the filter?

- □ Can image optimization really cut your page weight by 90%?

- □ Does Googlebot really stop at 15 MB per URL?

- □ Why is mobile-desktop parity sabotaging your rankings in Mobile-First Indexing?

- □ Is your page weight really slowing down your SEO performance?

- □ Does structured data really slow down your crawl budget?

- □ Does Google really block 40 billion spam URLs every single day?

- □ Should you really cap your images at 1 MB to satisfy Google?

- □ Does Googlebot really stop crawling after 15 MB per URL?

- □ Does site speed really impact your conversion rates?

- □ Is mobile-desktop mismatch really destroying your SEO rankings right now?

- □ Do structured data markups really bloat your HTML pages?

- □ Does page size really matter for SEO when internet connections keep getting faster?

- □ Is network compression really enough to optimize your site's crawlability?

- □ Can lazy loading really boost your performance without hurting crawlability?

- □ Does your website's overall size really hurt your SEO performance?

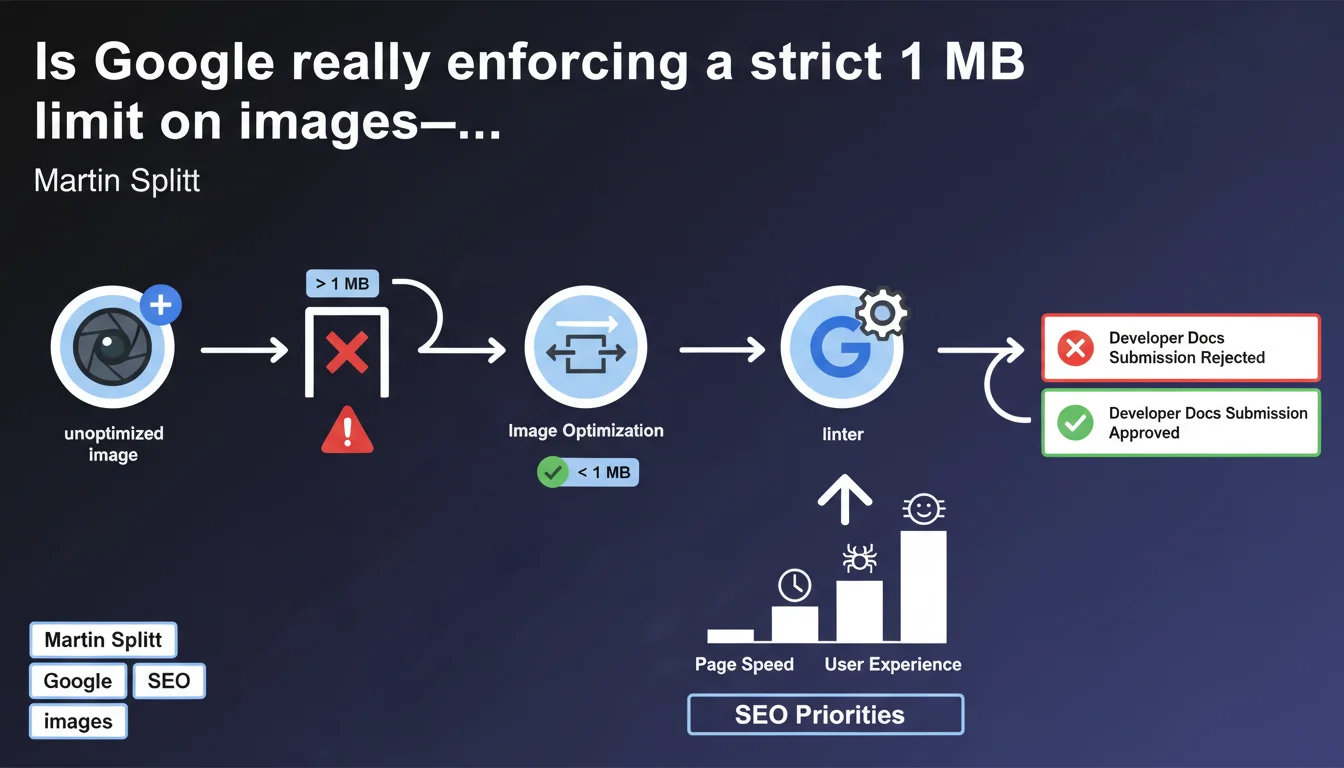

Why does Google enforce a strict 1 MB image size limit across its developer documentation?

Google uses an internal linter that automatically blocks the submission of images exceeding 1 MB on its own developer documentation sites. This technical choice reveals the critical importance the search engine places on image optimization—and sends a clear signal to webmasters: if Google imposes this constraint on itself, you should do the same.

What you need to understand

What is a linter and why does Google use it for images?

A linter is an automated quality control tool that analyzes code or resources before deployment. Here, Google has configured its development pipeline to reject any image exceeding 1 MB on its own developer-focused sites.
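To make the idea concrete, here is a minimal sketch of what such a check could look like. It is not Google's linter, whose implementation is not public. The function names, the list of extensions, and the reading of 1 MB as 1,000,000 bytes are all assumptions made for illustration.

```python
import os

# Assumption: "1 MB" is read as 1,000,000 bytes; the source does not specify.
MAX_BYTES = 1_000_000
# Assumption: which extensions count as images is our own choice.
IMAGE_EXTENSIONS = {".png", ".jpg", ".jpeg", ".gif", ".webp", ".avif"}


def find_oversized_images(root, max_bytes=MAX_BYTES):
    """Return sorted (path, size) pairs for image files larger than max_bytes."""
    oversized = []
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            extension = os.path.splitext(name)[1].lower()
            if extension not in IMAGE_EXTENSIONS:
                continue
            path = os.path.join(dirpath, name)
            size = os.path.getsize(path)
            if size > max_bytes:
                oversized.append((path, size))
    return sorted(oversized)


def lint(root, max_bytes=MAX_BYTES):
    """Print each offender and return an exit code: 0 if clean, 1 otherwise."""
    offenders = find_oversized_images(root, max_bytes)
    for path, size in offenders:
        print(f"{path}: {size} bytes exceeds the {max_bytes}-byte limit")
    return 1 if offenders else 0
```

In a build pipeline, the return value of `lint` could be passed to `sys.exit` so that an oversized image fails the build before deployment.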

This technical constraint is not trivial. It reflects a strict internal policy: even Google's own teams cannot bypass this rule. The implicit message? Image optimization is not optional, it's a prerequisite.

Why exactly 1 MB?

The 1 MB threshold was presumably not chosen at random. It reads as a compromise between acceptable visual quality and loading performance across various connection speeds. For a modern website, a 1 MB image remains relatively heavy, but this is a ceiling, not a target.

Most properly optimized images weigh between 50 and 300 KB. Google is setting an absolute upper limit here, and its own teams presumably aim well below it.

What is the direct implication for SEO?

Google doesn't explicitly say "heavy images will penalize your rankings." But by imposing this constraint on itself, the engine suggests that loading speed remains a major quality signal.

Images are often the first factor in slowing down a page. If you neglect their optimization, you sacrifice your Core Web Vitals (particularly LCP), which can impact your visibility.

- Google practices what it preaches: its own sites respect this strict limit

- The 1 MB threshold is an absolute maximum, not a target to reach

- Poorly optimized images directly degrade LCP and overall page load time

- This statement confirms that image optimization remains a priority SEO lever

SEO Expert opinion

Does this internal constraint prove a direct ranking impact?

Let's be honest: no, not directly. Google doesn't say "any image above 1 MB will cost you positions." What this statement reveals is a performance-oriented engineering culture—and this culture inevitably influences algorithm design.

If Google imposes such strict constraints internally, it's because loading speed remains a non-negotiable quality criterion. Core Web Vitals are simply the public translation of this obsession. [To verify]: it would be interesting to correlate the average image size on a site with its actual LCP performance—Google provides no numerical data on this relationship.

Should you really limit yourself to 1 MB per image?

No, and that's where Google's message can be misleading. The 1 MB threshold is a safety limit, not an operational recommendation. In practice, targeting 1 MB remains far too generous for most images.

A well-compressed e-commerce product photo in WebP typically runs around 80-150 KB. An optimized hero banner? 200-300 KB maximum. If your images are approaching 1 MB, you've missed several compression steps.

What are the pitfalls to avoid with this statement?

The classic pitfall: thinking "OK, I'll get all my images to 999 KB and I'm good." No. The goal is not to meet an arbitrary limit, but to actually optimize loading.

Another trap: focusing only on file size in KB and ignoring actual dimensions. An image of 2000×1500 pixels compressed to 800 KB is still nonsense if it displays at 400×300 on mobile. The browser downloads unnecessary pixels, regardless of the final file size.
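The arithmetic behind that example is worth spelling out. The sketch below computes how many times more pixels are downloaded than the display slot needs. The function name and the `device_pixel_ratio` parameter are our own additions; the original example does not mention high-density screens.

```python
def pixel_overshoot(intrinsic, displayed, device_pixel_ratio=1.0):
    """Return the ratio of downloaded pixels to pixels the display slot needs.

    intrinsic and displayed are (width, height) tuples; displayed is in CSS
    pixels. device_pixel_ratio is an assumption added for high-density screens.
    """
    intrinsic_width, intrinsic_height = intrinsic
    displayed_width, displayed_height = displayed
    needed = (displayed_width * device_pixel_ratio) * (
        displayed_height * device_pixel_ratio
    )
    return (intrinsic_width * intrinsic_height) / needed


# The example from the text: 2000x1500 served into a 400x300 slot.
print(pixel_overshoot((2000, 1500), (400, 300)))       # 25.0
# Same image on a screen with a device pixel ratio of 2.
print(pixel_overshoot((2000, 1500), (400, 300), 2.0))  # 6.25
```

Even on a high-density screen, the browser would be fetching several times more pixels than it can show, which is why dimensions matter as much as file size.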

Practical impact and recommendations

What should you actually do to respect this logic?

First step: audit your existing images. Use Lighthouse or PageSpeed Insights for page-level checks, or a crawler like Screaming Frog to list all images on your site and identify those exceeding 500 KB.
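Once you have a list of image URLs and their sizes, the filtering step is simple. The sketch below assumes you have already exported that data into a dictionary; the URLs and sizes shown are invented, and 500 KB is read as 500,000 bytes.

```python
# Assumption: 500 KB is read as 500,000 bytes.
AUDIT_THRESHOLD = 500 * 1000


def flag_heavy_images(image_sizes, threshold=AUDIT_THRESHOLD):
    """Return (url, size) pairs above the threshold, heaviest first."""
    heavy = [(url, size) for url, size in image_sizes.items() if size > threshold]
    return sorted(heavy, key=lambda item: item[1], reverse=True)


# Hypothetical crawl export: URL mapped to size in bytes.
crawl_export = {
    "/img/hero.jpg": 1_200_000,
    "/img/product-1.webp": 120_000,
    "/img/banner.png": 640_000,
}

for url, size in flag_heavy_images(crawl_export):
    print(f"{url}: {size / 1000:.0f} KB")
```

Sorting heaviest first puts the images with the largest potential savings at the top of your to-do list.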

Next, apply a systematic compression strategy: convert to WebP (with a JPEG fallback), use lossy compression at a quality setting of around 80-85, and above all generate multiple sizes and reference them via srcset so that each device receives an appropriately sized version.
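As an illustration of the WebP-with-fallback and srcset pattern, here is a small sketch that generates the corresponding markup. The file naming scheme (`base-width.ext`) and the function name are assumptions; adapt them to however your pipeline names its resized files.

```python
from html import escape


def build_picture(base, widths, alt, sizes="100vw"):
    """Build a <picture> element: WebP source first, JPEG fallback in <img>.

    Assumes resized files are named "<base>-<width>.<ext>" (hypothetical scheme).
    """
    def srcset(extension):
        return ", ".join(f"{base}-{width}.{extension} {width}w" for width in widths)

    webp_srcset = escape(srcset("webp"))
    jpeg_srcset = escape(srcset("jpg"))
    # The smallest JPEG serves as the default for browsers that ignore srcset.
    fallback_src = escape(f"{base}-{min(widths)}.jpg")
    safe_sizes = escape(sizes)
    safe_alt = escape(alt)
    return (
        "<picture>\n"
        f'  <source type="image/webp" srcset="{webp_srcset}" sizes="{safe_sizes}">\n'
        f'  <img src="{fallback_src}" srcset="{jpeg_srcset}" sizes="{safe_sizes}" alt="{safe_alt}">\n'
        "</picture>"
    )


print(build_picture("/img/hero", [400, 800, 1600], "Storefront at dusk"))
```

Browsers that support WebP pick a candidate from the first source; others fall back to the JPEG candidates on the img element.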

How do you automate this optimization at scale?

Done by hand, this is unmanageable. Ideally, integrate optimization into your build pipeline or upload workflow, using tools like ImageOptim or Squoosh, or WordPress plugins like Imagify or ShortPixel that process images at upload.

For large sites, a CDN with on-the-fly image transformation (Cloudflare Images, Imgix, Cloudinary) is often the only viable solution. You upload the image in high quality, and the CDN automatically serves the optimized version based on context.

What critical errors should you avoid?

Never push compression so far that visual quality visibly suffers. A blurry or pixelated image degrades user experience and can harm conversion, and thus indirectly SEO through behavioral signals.

Another error: forgetting alt attributes. Google regularly emphasizes the importance of alternative text for accessibility and contextual understanding of images. A perfectly optimized image without relevant alt text remains a missed opportunity.

- Audit all images exceeding 500 KB

- Convert to WebP with JPEG/PNG fallback

- Implement srcset and sizes for responsiveness

- Use a CDN with automatic transformation for high volume

- Verify that each image has a descriptive alt attribute

- Regularly monitor LCP via Search Console and Lighthouse

- Enable lazy loading for images outside initial viewport
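Two items on this checklist, alt attributes and lazy loading, can be spot-checked from a page's HTML. The sketch below uses Python's standard `html.parser`; the class name is our own. It cannot tell which images sit in the initial viewport, so its lazy-loading list is a starting point for review, not a verdict. An empty alt can also be legitimate for purely decorative images.

```python
from html.parser import HTMLParser


class ImageAttributeChecker(HTMLParser):
    """Collect img tags lacking alt text or a loading="lazy" attribute."""

    def __init__(self):
        super().__init__()
        self.missing_alt = []  # src values with absent or empty alt
        self.not_lazy = []     # src values without loading="lazy"

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attributes = dict(attrs)
        src = attributes.get("src", "(no src)")
        # A valueless or empty alt is flagged for human review.
        if not (attributes.get("alt") or "").strip():
            self.missing_alt.append(src)
        # Above-the-fold images should usually stay eager; review these by hand.
        if attributes.get("loading") != "lazy":
            self.not_lazy.append(src)


page = (
    '<img src="hero.jpg" alt="Storefront at dusk">'
    '<img src="shoe.jpg" alt="Red running shoe" loading="lazy">'
    '<img src="banner.jpg" loading="lazy">'
)
checker = ImageAttributeChecker()
checker.feed(page)
print("Missing alt:", checker.missing_alt)
print("Not lazy-loaded:", checker.not_lazy)
```

Here `hero.jpg` shows up as not lazy-loaded, which is likely correct if it is the main above-the-fold image.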

❓ Frequently Asked Questions

Does Google directly penalize sites with images above 1 MB?

Is the WebP format really essential?

Should you optimize all images, even those under 1 MB?

What is the difference between lossy and lossless compression?

Are SVG images affected by this limit?

🎥 From the same video 43

Other SEO insights extracted from this same Google Search Central video · published on 30/03/2026
