Official statement

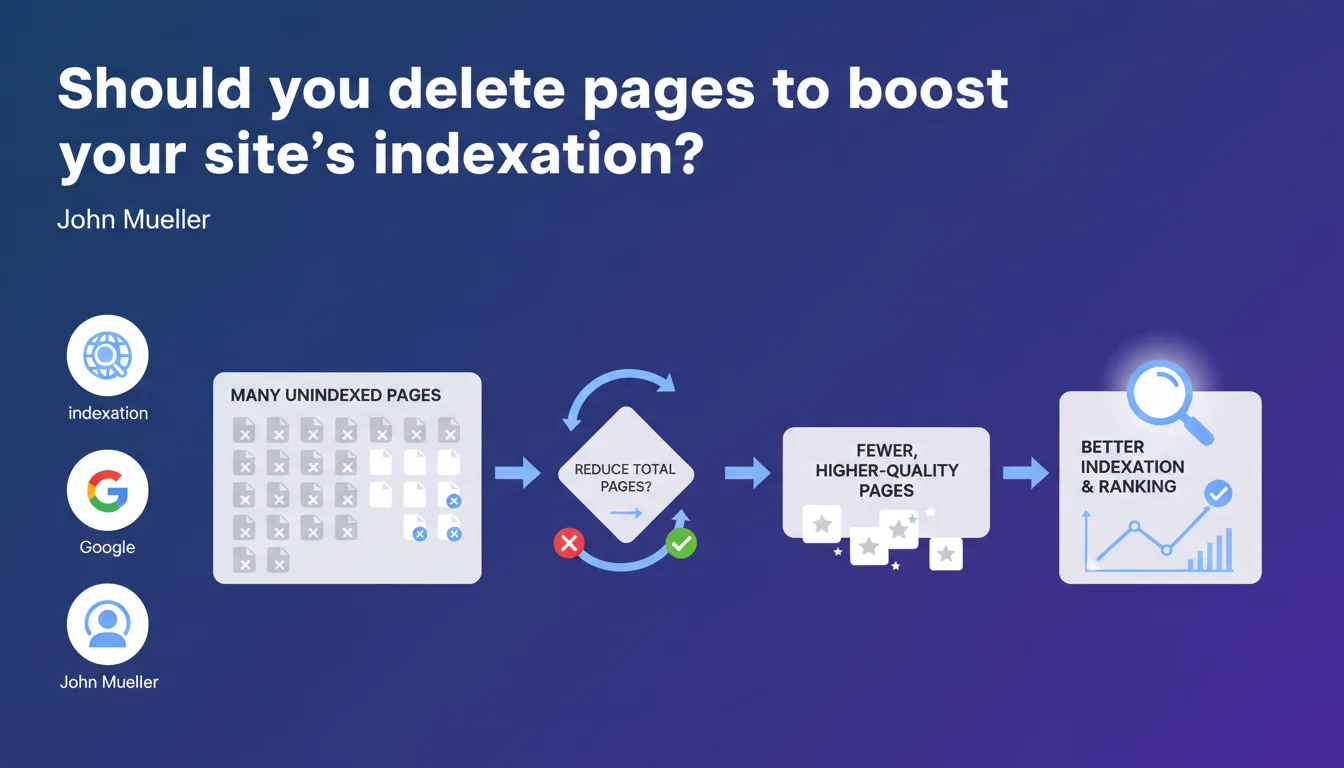

John Mueller confirms that a site with many non-indexed pages can benefit from reducing its overall volume. The goal: concentrate crawl budget and value on higher-quality pages. Google will index and rank priority content better if you simplify the task.

What you need to understand

Why does Google struggle to index all content on a website?

Crawl budget is not infinite. Google allocates limited time and resources to crawling each site, based on factors such as the site's popularity, how often it is updated, and perceived content quality.

If a site publishes at scale without a clear editorial strategy (duplicate pages, thin content, endless URL variations), the bot spends its time on low-value URLs. Result: strategic pages are not crawled, or are crawled with significant delay.

What distinguishes a "quality" page from a superfluous one?

Google never provides binary criteria. But a quality page addresses a clear user intent, delivers unique value, and generates engagement signals.

Conversely, a superfluous page might be an unnecessary parametric variation, auto-generated content with no added value, an empty archive, or a hidden technical duplicate. Often these pages exist for historical or technical reasons, not for the user.
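To make "unnecessary parametric variation" concrete, here is a minimal Python sketch that groups URLs which differ only by presentation or tracking parameters. The `IGNORED_PARAMS` set is a hypothetical example, not a list from Google or from the article; each site has to decide which of its parameters actually change the content.

```python
from collections import defaultdict
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Hypothetical list of parameters assumed not to change the page content.
IGNORED_PARAMS = {"sort", "order", "utm_source", "utm_medium", "utm_campaign", "sessionid"}


def normalize(url: str) -> str:
    """Drop ignored query parameters and the fragment, keep everything else."""
    parts = urlsplit(url)
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key.lower() not in IGNORED_PARAMS
    ]
    kept.sort()  # parameter order should not create a "different" URL
    return urlunsplit((parts.scheme, parts.netloc.lower(), parts.path, urlencode(kept), ""))


def group_variations(urls):
    """Map each normalized URL to the raw URLs that collapse onto it."""
    groups = defaultdict(list)
    for url in urls:
        groups[normalize(url)].append(url)
    # Only groups with several raw URLs are candidate parametric variations.
    return {key: members for key, members in groups.items() if len(members) > 1}
```

Feeding it a crawl export or a list of URLs from server logs gives a shortlist of URL families worth reviewing by hand.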

How does reducing the number of pages improve indexation?

Fewer pages means less dispersal of crawl budget. Google dedicates more resources to priority content, revisits it more often, and indexes updates faster.

For ranking, concentrating signals — internal links, click depth, traffic — on a limited number of pages strengthens their individual authority. A 500-page high-performing site often outranks a 5000-page mediocre site.

- Crawl budget is a limited resource that Google distributes based on perceived site quality

- Reducing page volume only makes sense if the removed pages are low-value

- Quality trumps quantity: 100 excellent pages beat 1000 average pages

- Indexation is not a right — Google decides what deserves indexing by its own criteria

- This logic applies especially to mid-authority sites with documented indexation issues

SEO Expert opinion

Is this recommendation truly new or just a reminder?

Let's be honest: this statement is not groundbreaking. The principle of crawl budget and quality over quantity has been repeated for years. What has changed is that Google now states it more and more explicitly.

For several years we've observed a trend toward aggressive deindexing of content deemed irrelevant, even on established sites. Mueller's statement fits this logic: Google prefers a web focused on useful content rather than an ocean of indiscriminately generated pages.

In what cases doesn't this rule apply?

An authority site with strong crawl budget — think Amazon, Wikipedia — can afford millions of pages. Volume is not a problem if quality follows and crawling is efficient.

Conversely, a small niche site with 50 pages has no reason to delete content on principle. Mueller's advice targets sites that have artificially inflated their volume — infinite e-commerce facets, dated archives, auto-generated content — and that suffer from documented low indexation rates in Search Console.

What nuances should be applied to this reduction logic?

Mueller speaks of "higher-quality pages", but Google provides no quantifiable threshold. What level of quality? Measured how? By whom? This has to be assessed case by case, in each specific context.

Moreover, the notion of "priority pages" is subjective. For Google, priority may be determined by user engagement. For a publisher, it might be strategic pages with low traffic but crucial for conversion or editorial credibility. These two visions don't always align.

Finally, reducing page count is just one lever among many. A poorly structured site with 200 pages can be worse than a well-organized site with 2000 pages. Internal linking, speed, editorial quality, UX signals — everything counts.

Practical impact and recommendations

What should I do concretely if my site suffers from partial indexation?

Start by auditing non-indexed pages in Search Console. Identify patterns: are these e-commerce facets? Dated archives? Duplicate or poor content?
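One way to spot those patterns is to reduce each non-indexed URL to a coarse shape and count the shapes. The sketch below assumes you have exported the non-indexed URLs from Search Console into a plain list of strings; the `pattern_of` heuristic (first path segment plus a marker for query strings) is an illustrative choice, not a standard.

```python
from collections import Counter
from urllib.parse import urlsplit


def pattern_of(url: str) -> str:
    """Reduce a URL to a coarse pattern: first path segment plus a query marker."""
    parts = urlsplit(url)
    segments = [segment for segment in parts.path.split("/") if segment]
    first = segments[0] if segments else "(root)"
    suffix = "?params" if parts.query else ""
    return f"/{first}/{suffix}"


def top_patterns(urls, n=5):
    """Return the n most frequent patterns with their counts."""
    return Counter(pattern_of(url) for url in urls).most_common(n)
```

If a pattern such as `/category/?params` dominates the list, faceted navigation is a likely culprit; a pattern built on a year or an archive directory points to dated archives instead.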

Next, segment these pages into three groups: those deserving improvement, those that can be consolidated (merging similar content), and those to be deleted or set to noindex. Never delete without checking traffic and backlinks.
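The three-way split can be sketched as a simple rule set. Everything here is an assumption for illustration: the `Page` fields, the `min_clicks` threshold, and the order of the rules. The output is meant as a shortlist for manual review, not a deletion order.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Page:
    url: str
    clicks_12m: int                     # organic clicks over the last 12 months
    referring_domains: int              # taken from a third-party backlink tool
    duplicate_of: Optional[str] = None  # URL of a near-identical page, if any


def triage(page: Page, min_clicks: int = 10) -> str:
    """Return 'consolidate', 'improve' or 'remove' (hypothetical thresholds)."""
    if page.duplicate_of is not None:
        # Similar content exists elsewhere: merge rather than delete.
        return "consolidate"
    if page.clicks_12m >= min_clicks or page.referring_domains > 0:
        # Traffic or backlinks mean the page still carries value.
        return "improve"
    # No duplicate, no traffic, no links: candidate for deletion or noindex.
    return "remove"
```

Note that the traffic and backlink checks run before anything is marked for removal, which mirrors the rule of never deleting without checking both.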

For retained pages, optimize their crawlability: reinforced internal linking, reduced click depth, improved editorial quality. 301-redirect deleted pages to relevant content, or return a 410 if no equivalent exists.
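The 301-or-410 decision for a deleted page can be expressed as a small lookup. This is a sketch under stated assumptions: `redirect_map` is a hypothetical dictionary you maintain yourself (deleted path to replacement path), and the function only computes the status code and target; wiring it into a web server or CMS is left out.

```python
from typing import Dict, Optional, Tuple


def resolve(path: str, redirect_map: Dict[str, str], max_hops: int = 10) -> Optional[str]:
    """Follow the map to its final target so the redirect is a single hop."""
    seen = {path}
    target = redirect_map.get(path)
    while target in redirect_map:
        if target in seen or len(seen) > max_hops:
            return None  # loop or overly long chain: treat as no valid target
        seen.add(target)
        target = redirect_map[target]
    return target


def response_for_removed(path: str, redirect_map: Dict[str, str]) -> Tuple[int, Optional[str]]:
    """Return (301, target) when a relevant page exists, else (410, None)."""
    target = resolve(path, redirect_map)
    if target is None:
        return 410, None
    return 301, target
```

Resolving chains up front is a design choice made here to avoid sending crawlers through several consecutive redirects.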

What errors should be avoided when reducing volume?

Don't rely solely on recent traffic metrics. A page with no traffic may have quality backlinks or convert occasionally. Always verify.

Avoid deleting pages in batches without individual analysis. Each URL potentially has a story, links, utility. A mechanical approach destroys value.

Don't confuse indexation with ranking. Reducing volume may improve indexation, but if remaining pages are mediocre, ranking won't follow. Editorial quality remains decisive.

- Analyze non-indexed pages via Search Console (Coverage tab then Excluded)

- Export at least 12 months of organic traffic data for each URL that is a candidate for deletion

- Check backlinks with a third-party tool (Ahrefs, Majestic, SEMrush)

- Identify duplicate or near-duplicate content with a crawler (Screaming Frog, OnCrawl)

- Consolidate similar content rather than deleting systematically

- Implement 301 redirects to relevant content for any deleted page

- Use 410 (Gone) for pages without logical equivalent

- Strengthen internal linking to priority retained pages

- Monitor indexation and traffic evolution for 3 months post-intervention
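For the monitoring step, a simple before/after comparison of the share of indexed pages is often enough to see whether the clean-up had an effect. The sketch below assumes you note the indexed and non-indexed counts from Search Console by hand; the `Snapshot` structure and its field names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Snapshot:
    label: str        # e.g. the date the counts were read
    indexed: int      # pages reported as indexed
    not_indexed: int  # pages reported as excluded / not indexed


def indexation_rate(snapshot: Snapshot) -> float:
    """Share of known pages that are indexed, between 0 and 1."""
    known = snapshot.indexed + snapshot.not_indexed
    if known <= 0:
        raise ValueError("snapshot contains no pages")
    return snapshot.indexed / known


def rate_change(before: Snapshot, after: Snapshot) -> float:
    """Positive value: a larger share of the site is indexed after the clean-up."""
    return indexation_rate(after) - indexation_rate(before)
```

A rising rate alone does not prove success: compare it with organic traffic over the same period, since a smaller site can show a better ratio while losing visits.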

❓ Frequently Asked Questions

How many pages should I delete to improve my indexation?

If I delete pages, will Google automatically index the others?

Is it better to delete a page or set it to noindex?

Does this logic apply to news or e-commerce sites?

How can I tell whether my site really has a crawl budget problem?

🎥 From the same video 29

Other SEO insights extracted from this same Google Search Central video · published on 14/01/2022
