Official statement

Other statements from this video 29 ▾

- □ Does a bloated robots.txt file really hurt your SEO rankings?

- □ Do H1-H6 heading tags really still impact Google rankings?

- □ Is a strict heading tag hierarchy really necessary for SEO rankings?

- □ How long does Google actually take to fully process a domain migration?

- □ Can a site migration really boost your SEO rankings or destroy them completely?

- □ Does Googlebot really crawl from just one place when indexing your geo-targeted content?

- □ Can a noindex tag on geolocalized pages wipe your entire website from Google search results?

- □ Should you really ditch geo-redirects for a simple dynamic banner?

- □ How many location pages can you create before Google penalizes you for spam?

- □ Should you redirect mobile users to your app—and what are the hidden SEO risks?

- □ Do you really need to translate your pages word-for-word for hreflang to work effectively?

- □ Does the domain directive in your Disavow file really help you bypass Google's 2MB limit?

- □ Should you really use the Disavow tool only for purchased links?

- □ Should you noindex your internal search results pages to prevent spammers from creating backlinks?

- □ Does semantic HTML really boost your search rankings?

- □ Is AMP still a ranking factor in Google Search?

- □ Is AMP really a ranking factor for Google?

- □ Does removing AMP actually boost crawl on your regular pages?

- □ Should you test removing your Disavow file incrementally, or can you delete it all at once?

- □ Why do knowledge panels display differently across devices and search contexts?

- □ Does Google's synonym system really work without any human intervention?

- □ Should you really create a separate page for each location to implement Local Business schema correctly?

- □ Do you really need to mark up ALL your content with structured data?

- □ Do you really need to display all FAQ schema questions visibly on your page?

- □ Can hidden accordion content really show up in featured snippets?

- □ Why does Google deliberately choose not to index your entire website?

- □ Should you delete pages to boost your site's indexation?

- □ Does search volume of anchor text really impact the value of your internal links?

- □ Should you really add unique content to your e-commerce product pages?

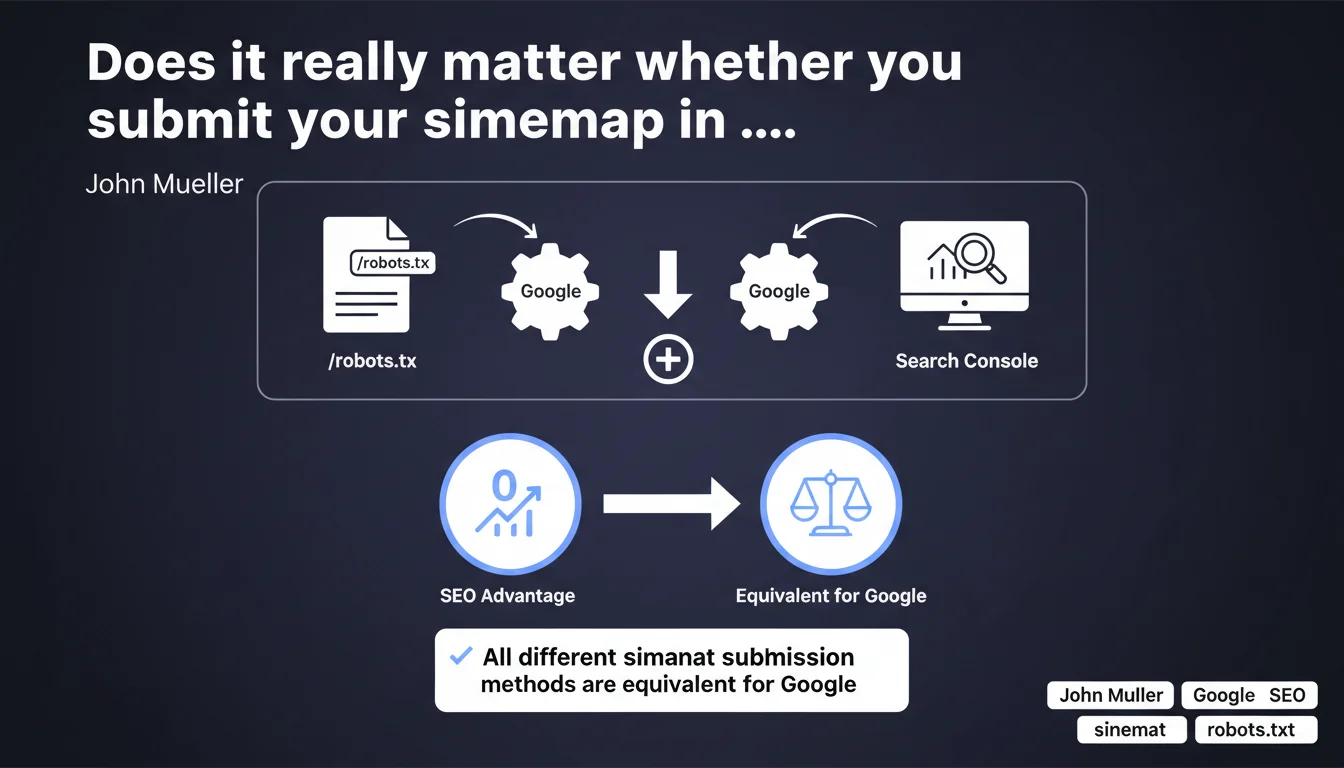

Google confirms that all sitemap submission methods are strictly equivalent. Declaring your sitemap in robots.txt, via Search Console, or through other means provides no additional SEO advantage — Google treats these signals identically.

What you need to understand

What are the different ways to submit a sitemap?

A sitemap can be submitted to Google in several ways. The best known is Search Console, where you enter the URL of the XML file in the Sitemaps section. You can also declare your sitemap directly in the robots.txt file via the "Sitemap:" directive, or let Google discover it on its own if it is referenced in other files it crawls.
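To make the robots.txt route concrete, here is a minimal sketch that parses a sample robots.txt with Python's standard `urllib.robotparser` and lists the sitemaps it declares. The file contents and the `www.example.com` domain are placeholders chosen for illustration, and `declared_sitemaps` is a hypothetical helper name.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt body; www.example.com is a placeholder domain.
ROBOTS_TXT = """\
User-agent: *
Disallow: /search/

Sitemap: https://www.example.com/sitemap.xml
"""


def declared_sitemaps(robots_txt: str) -> list[str]:
    """Return the sitemap URLs declared via 'Sitemap:' lines in a robots.txt body."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    # site_maps() returns None when no Sitemap line is present (Python 3.8+).
    return parser.site_maps() or []


print(declared_sitemaps(ROBOTS_TXT))
```

The same helper can be pointed at the text of a live robots.txt to confirm that the declaration is actually present and spelled correctly.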

Each method has its supporters. Some prefer robots.txt to centralize technical configuration, others prefer Search Console for tracking and coverage reports. The question that arises: does Google treat these methods differently?

Why is this Mueller clarification so important?

Because it ends a recurring debate in the SEO community. For years, some practitioners thought that submitting the sitemap via robots.txt gave a signal of "technical seriousness" or facilitated crawling. Others believed that Search Console offered priority processing.

Mueller puts it plainly: no method is favored. Google reads and uses your sitemap in the same way, regardless of how it learned about it. The engine does not distinguish between these submission channels.

What does this change for your site's technical management?

Concretely? It means you can choose the method that best suits your workflow and your technical constraints. If your CMS automatically manages robots.txt, great. If you prefer to control everything from Search Console to have coverage reports at hand, that's equally valid.

What matters is that the sitemap is accessible, valid, and up to date. The submission channel has no impact on indexing speed or rankings — what counts is the quality of the content referenced in the sitemap and Google's ability to crawl it efficiently.

- All submission methods (robots.txt, Search Console, automatic discovery) are treated identically by Google

- No SEO advantage to favoring one method over another

- The choice should be based on your technical constraints and your monitoring needs

- What remains essential is the quality and validity of the sitemap, not its submission channel
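Since validity matters more than the submission channel, a quick local check can catch basic problems before Google does. The sketch below uses only Python's standard library. It tests a small subset of the sitemaps.org format (well-formed XML, the expected `urlset` root, absolute `<loc>` URLs) and is not a full validator. The sample document and the `check_sitemap` helper are illustrative assumptions.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"

# Hypothetical sitemap body; the second entry is deliberately wrong.
SAMPLE = """<?xml version="1.0" encoding="UTF-8"?>
<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://www.example.com/</loc><lastmod>2022-01-14</lastmod></url>
  <url><loc>/relative-page</loc></url>
</urlset>
"""


def check_sitemap(xml_text: str) -> list[str]:
    """Return a list of problems found; an empty list means none were detected."""
    try:
        root = ET.fromstring(xml_text.encode("utf-8"))
    except ET.ParseError as exc:
        return [f"not well-formed XML: {exc}"]
    if root.tag != f"{{{SITEMAP_NS}}}urlset":
        return [f"unexpected root element: {root.tag}"]

    problems = []
    locs = root.findall(f"{{{SITEMAP_NS}}}url/{{{SITEMAP_NS}}}loc")
    if not locs:
        problems.append("no <loc> entries found")
    for loc in locs:
        url = (loc.text or "").strip()
        # The sitemap protocol expects fully qualified URLs.
        if not url.startswith(("http://", "https://")):
            problems.append(f"not an absolute URL: {url!r}")
    return problems


print(check_sitemap(SAMPLE))
```

A sitemap index file uses a `sitemapindex` root instead of `urlset`, so this sketch would flag it as unexpected. Extend the root check if you segment your sitemaps.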

SEO Expert opinion

Is this statement consistent with real-world observations?

Yes, and it confirms what many empirical tests already suggested. In practice, we observe that Google crawls and indexes the URLs from a sitemap with the same frequency, whether it was discovered via robots.txt or submitted in Search Console. Indexing delays do not differ significantly.

Where things sometimes break down — and Mueller doesn't mention this — is the visibility of errors. Search Console alerts you when a sitemap contains error URLs like 404s, redirects, or pages blocked by robots.txt. If you only declare the sitemap in robots.txt, you lose this diagnostic layer.

Are there cases where one method is preferable to another?

Let's be honest: even though Google treats all sitemaps the same way, Search Console remains the most practical option for operational tracking. Coverage reports allow you to quickly detect indexing issues, validate that your URLs are being accounted for, and track crawl evolution.

Conversely, declaring the sitemap in robots.txt has an advantage in certain technical contexts: if your infrastructure doesn't allow easy access to Search Console (sites under migration, complex multi-domain environments), robots.txt can serve as a reliable fallback. But this is not an SEO advantage — it's an organizational one.

What doesn't Mueller say in this statement?

What's missing is any nuance about multiple sitemaps and large-scale sites. On a site with 50,000 pages, does Google treat a single sitemap declared via robots.txt exactly like a segmented sitemap submitted as multiple files via Search Console? [To verify]

Similarly, Mueller doesn't specify whether the re-crawl frequency of robots.txt influences how quickly Google detects a sitemap update. We know robots.txt is crawled regularly, but not necessarily in real time. If you change your sitemap URL in robots.txt, Google might take several hours — or even days — to discover it, whereas manual submission in Search Console is nearly instantaneous.

Practical impact and recommendations

Which method should you choose to submit your sitemap?

Choose Search Console if you want operational tracking and detailed coverage reports. It's the most robust option for diagnosing indexing issues and monitoring crawl evolution. It also allows you to submit multiple segmented sitemaps if your architecture requires it.
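For readers who do need segmented sitemaps, the sketch below shows one way to split a URL list and reference the resulting files from a sitemap index. It assumes the 50,000-URL per-file limit from the sitemaps.org protocol. The `sitemap-N.xml` naming scheme, the placeholder domain, and both helper names are hypothetical.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
MAX_URLS_PER_FILE = 50_000  # per-file URL limit in the sitemaps.org protocol


def split_urls(urls: list[str], size: int = MAX_URLS_PER_FILE) -> list[list[str]]:
    """Split a URL list into consecutive chunks of at most `size` entries."""
    return [urls[i:i + size] for i in range(0, len(urls), size)]


def build_sitemap_index(sitemap_urls: list[str]) -> str:
    """Build a <sitemapindex> document that references each child sitemap."""
    root = ET.Element("sitemapindex", xmlns=SITEMAP_NS)
    for url in sitemap_urls:
        entry = ET.SubElement(root, "sitemap")
        ET.SubElement(entry, "loc").text = url
    return ET.tostring(root, encoding="unicode")


# Hypothetical site with 120,001 URLs on a placeholder domain.
urls = [f"https://www.example.com/page-{n}" for n in range(120_001)]
chunks = split_urls(urls)

# Hypothetical naming scheme for the child sitemap files.
children = [
    f"https://www.example.com/sitemap-{i}.xml" for i in range(1, len(chunks) + 1)
]
index_xml = build_sitemap_index(children)
print(len(chunks))
```

Only the index URL then needs to be declared, whichever submission channel you use.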

Use robots.txt if you manage a complex site with access constraints to Search Console, or if you want to centralize all technical configuration in a single file. But keep in mind that you'll lose visibility into crawl errors.

Nothing prevents you from combining both: declare the sitemap in robots.txt so Google discovers it automatically, and also submit it via Search Console to benefit from the reports. It's redundant, but it carries no penalty.

What errors should you avoid when managing sitemaps?

Don't multiply sitemaps unnecessarily. Some sites submit multiple versions of the same file (via robots.txt, Search Console, and even in HTML code), thinking it will speed up indexing. It doesn't help — Google crawls the sitemap once it knows about it, no matter how many times you declare it.

Also avoid listing URLs that are blocked by robots.txt or that carry a noindex tag. Google cannot crawl a blocked URL at all, and it will crawl a noindex page only to find that it must not be indexed. In both cases Search Console flags the URL, which clutters your reports, and the noindex pages waste crawl budget as well.
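The robots.txt half of this check can be sketched offline with Python's standard `urllib.robotparser`. The rules, URLs, and the `blocked_urls` helper below are illustrative assumptions. Note that this parser does simple prefix matching and does not implement Google's wildcard extensions (`*` and `$` inside paths), so treat the result as a first pass rather than a faithful model of Googlebot. Detecting noindex would require fetching each page and is not covered here.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt rules for a placeholder domain.
ROBOTS_TXT = """\
User-agent: *
Disallow: /search/
Disallow: /cart
"""

# Hypothetical URLs taken from a sitemap.
SITEMAP_URLS = [
    "https://www.example.com/",
    "https://www.example.com/products/blue-widget",
    "https://www.example.com/search/?q=widget",
    "https://www.example.com/cart",
]


def blocked_urls(robots_txt: str, urls: list[str], user_agent: str = "Googlebot") -> list[str]:
    """Return the URLs that the given robots.txt disallows for `user_agent`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


for url in blocked_urls(ROBOTS_TXT, SITEMAP_URLS):
    print("blocked by robots.txt, remove from sitemap:", url)
```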

Finally, don't leave an obsolete sitemap active. If you change your sitemap URL, remove the old declaration from robots.txt and Search Console. A sitemap that returns a 404 or redirect will generate unnecessary alerts.

How do you verify that your sitemap is being properly recognized?

In Search Console, go to the Sitemaps section. You should see the file status (last read date, number of discovered URLs, any errors). If Google hasn't crawled your sitemap in several weeks, that's a warning sign — either the file is inaccessible or it contains too many errors.

If you're using robots.txt, test your sitemap URL in a browser to verify it's accessible. Then use the "URL Inspection" tool in Search Console to verify that Google can properly crawl the URLs listed in your sitemap.

- Choose Search Console for operational tracking and detailed diagnostics

- Use robots.txt if your technical constraints require it, but anticipate the loss of visibility into errors

- Don't multiply declarations unnecessarily — one is enough

- Avoid submitting blocked or noindex URLs in the sitemap

- Regularly check your sitemap status in Search Console to detect crawl errors

- Remove old declarations if you change the sitemap URL

❓ Frequently Asked Questions

Should you submit the sitemap in both robots.txt and Search Console?

Is a sitemap submitted via robots.txt crawled less often?

Can you submit multiple sitemaps for the same site?

What should you do if Google doesn't crawl your sitemap?

Does a sitemap improve rankings in search results?

🎥 From the same video 29

Other SEO insights extracted from this same Google Search Central video · published on 14/01/2022
