Official statement

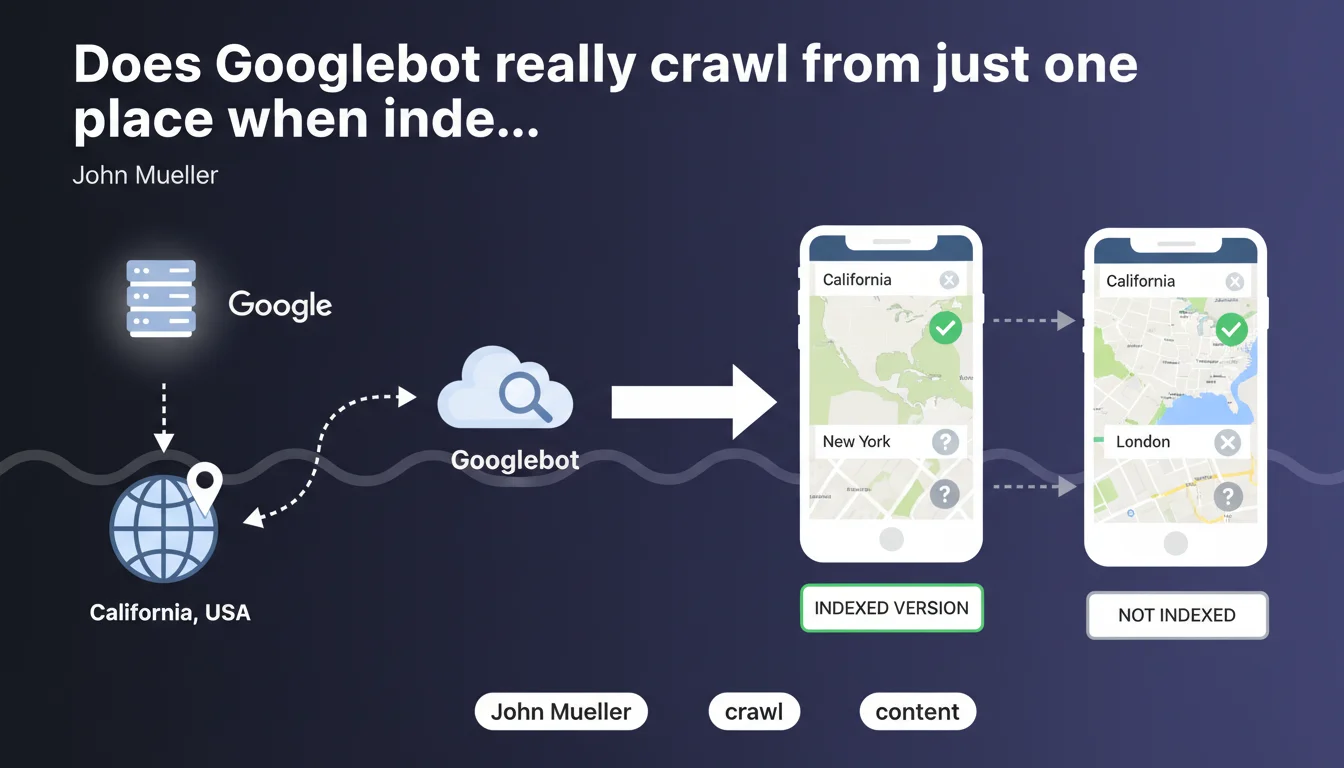

Googlebot generally crawls from a single location, likely California for most Google systems. If your content varies with the user's geolocation, the version served to that location is the one that gets indexed. For international or regional sites, this means rethinking how geo-targeted content is delivered.

What you need to understand

From exactly where is Googlebot crawling our content?

John Mueller confirms what many suspected: Googlebot does not simulate crawls from multiple countries. It operates from a single geographic location — likely California for the vast majority of Google's systems.

In practical terms? If you serve different content based on user IP address (prices in euros vs dollars, regional product availability, local promotions), Googlebot only sees one version. And that version is the one you serve to visitors in California.

Why does this limitation create problems?

Because many sites adjust their content based on user geolocation — think of e-commerce sites, regional news platforms, booking services.

If your strategy relies on geo-targeted dynamic content without distinct indexable versions, you risk serving Google a version that doesn't match your actual target audience. The bot indexes what it sees from California, not what your French, British, or Japanese users see.

What's the difference with traditional multilingual sites?

Sites structured with country-specific domains, subdomains, or subdirectories (example.fr, fr.example.com, example.com/uk/) are not impacted the same way. These distinct URLs are crawled and indexed separately.

The problem mainly affects sites that dynamically change content on the same URL based on detected location, without redirects and without different URLs. Googlebot uses many IP addresses, but they mostly geolocate to the same region, so it sees only one version of such a URL.

- Googlebot crawls from a single geographic location, likely California

- Dynamically geo-localized content will only be indexed in its California version

- Multi-country structures with distinct URLs escape this problem

- Price variations, language differences, or availability based on IP will not all be indexed
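To make the contrast concrete, here is a minimal Python sketch of the two approaches. Everything in it is hypothetical (the `PRICES` table, the function names, the URL paths); it only models a crawler whose IP geolocates to the US and counts how many versions of a page that crawler can reach.

```python
# Hypothetical catalogue: one price per market. All names are illustrative.
PRICES = {"US": "$49.00", "FR": "45,00 €", "GB": "£39.00"}


def render_by_ip(url_path: str, visitor_country: str) -> str:
    """Same URL for everyone; the body depends on the visitor's detected country."""
    price = PRICES.get(visitor_country, PRICES["US"])
    return f"<h1>Product</h1><p>Price: {price}</p>"


def render_by_url(url_path: str, visitor_country: str) -> str:
    """One URL per market; the body depends only on the path, not on the visitor."""
    market = url_path.strip("/").split("/")[0].upper()  # "/fr/product" -> "FR"
    price = PRICES.get(market, PRICES["US"])
    return f"<h1>Product</h1><p>Price: {price}</p>"


# A crawler whose IP geolocates to the US requests every URL it knows about.
CRAWLER_COUNTRY = "US"

seen_by_ip = {render_by_ip("/product", CRAWLER_COUNTRY)}
seen_by_url = {
    render_by_url(path, CRAWLER_COUNTRY)
    for path in ("/us/product", "/fr/product", "/gb/product")
}

print(len(seen_by_ip))   # -> 1 (only the US version is reachable)
print(len(seen_by_url))  # -> 3 (each market has its own crawlable URL)
```

With IP-based switching, the euro and pound prices never reach the crawler, whatever the site serves to real visitors in those countries.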

SEO Expert opinion

Is this statement consistent with what we observe in practice?

Yes, largely. For years, SEO practitioners have observed that Googlebot crawls predominantly from US IP ranges. Server logs confirm it: no notable geographic diversity in bot requests.

But be careful — and this is where Mueller remains intentionally vague — he says "likely California for most of the systems." This wording leaves room for interpretation: are there exceptions? For what types of content or sites? [To verify] Google doesn't specify.

What nuances should we add to this rule?

Mueller speaks of Googlebot "for search." Google runs other crawlers: the AdSense crawler (Mediapartners-Google), AdsBot, Googlebot-Image. This statement may not cover all of them. And what about surfaces such as Google Discover or Google News? The statement does not say.

Second nuance: the fact that Googlebot crawls from a single location doesn't prevent Google from ranking results differently based on the user's location. Ranking depends on location; crawling does not. This is a point many still confuse.

In what cases does this rule not really apply?

If you use hreflang and distinct URLs by country/language, you're not affected. Each URL is crawled independently, regardless of where the bot comes from.

On the other hand, if you change content on the same URL based on IP, you're directly concerned. And that's precisely where many international sites fail: they think Google "knows" they display different content based on country. No. Google indexes what it sees from California, period.

Practical impact and recommendations

What should you concretely do if your site displays geo-localized content?

First priority: identify what Googlebot actually sees. Test your site from a US IP address (VPN, proxy, US cloud server). Compare it with what your French, British, and other users see.

If the content differs significantly (prices, product availability, language), you have an indexing problem. Googlebot indexes the US version, so the results shown to your European users reflect content they will never actually see on the page.
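One way to run that comparison is to save the HTML you get from a US connection and from another country, then diff the visible text. The sketch below uses only the Python standard library and assumes the two HTML strings have already been fetched (through a VPN, proxy, or cloud server of your choice); the function names are illustrative.

```python
import difflib
from html.parser import HTMLParser


class _TextExtractor(HTMLParser):
    """Collects visible text, skipping the contents of <script> and <style>."""

    def __init__(self):
        super().__init__()
        self.chunks = []
        self._skip_depth = 0

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        text = data.strip()
        if text and not self._skip_depth:
            self.chunks.append(text)


def visible_text(html: str) -> list[str]:
    """Return the page's text nodes in document order."""
    parser = _TextExtractor()
    parser.feed(html)
    parser.close()
    return parser.chunks


def content_differences(html_a: str, html_b: str) -> list[str]:
    """Return text present in only one page: '- ' lines from A, '+ ' lines from B."""
    diff = difflib.ndiff(visible_text(html_a), visible_text(html_b))
    return [line for line in diff if line.startswith(("- ", "+ "))]


# Example with two hand-written responses standing in for real fetches.
us_html = "<h1>Widget</h1><p>Price: $49.00</p><script>var x = 1;</script>"
fr_html = "<h1>Widget</h1><p>Prix : 45,00 €</p>"
for line in content_differences(us_html, fr_html):
    print(line)
```

An empty result means both locations receive the same text. Any `- ` line is content that, under the single-location assumption, gets indexed but is not shown to the other audience, and any `+ ` line is content shown to that audience but never crawled.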

What mistakes must you absolutely avoid?

Never rely on server-side IP detection without distinct URLs. This is the main cause of non-indexed or poorly indexed content for international audiences.

Also avoid automatically blocking or redirecting Googlebot based on detected location. If you redirect it to a regional version, ensure that redirect is consistent with your hreflang and URL structure.

How should you restructure your international architecture to be compliant?

The safest solution: distinct URLs by country or language. Subdomains (fr.example.com), subdirectories (/fr/), or country-specific domains (.fr, .co.uk). Each URL serves a unique version, crawlable and indexable independently.

Complement this with hreflang annotations to tell Google how the language or regional versions relate to each other. That way, even if Googlebot crawls from California, it understands that a .fr version exists for French users.
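As an illustration, the annotations for one page can be generated from a simple map of versions. This is a sketch under assumptions: the `ALTERNATES` map, its URLs, and the helper name are hypothetical. It reflects the usual hreflang rule that every version lists all versions, itself included, optionally with an `x-default` fallback.

```python
from html import escape
from typing import Optional

# Hypothetical URL map: hreflang value -> URL of that version of the same page.
ALTERNATES = {
    "en-us": "https://example.com/us/product",
    "en-gb": "https://example.com/uk/product",
    "fr-fr": "https://example.com/fr/product",
}


def hreflang_links(alternates: dict[str, str], default: Optional[str] = None) -> list[str]:
    """Build the <link rel="alternate"> tags to place in the <head> of every version."""
    links = [
        f'<link rel="alternate" hreflang="{escape(code)}" href="{escape(url)}" />'
        for code, url in alternates.items()
    ]
    if default is not None:
        # x-default names the page to use when no listed language or region matches.
        links.append(
            f'<link rel="alternate" hreflang="x-default" href="{escape(default)}" />'
        )
    return links


for tag in hreflang_links(ALTERNATES, default="https://example.com/us/product"):
    print(tag)
```

The same block of tags goes on each of the three pages. If one version omits the others, the annotations are not reciprocal and may be ignored.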

- Test your site from a US IP address to see what Googlebot actually indexes

- Identify geo-localized content that varies based on user IP

- Move to a structure with distinct URLs by country/language if not already done

- Properly implement hreflang tags for each regional version

- Verify in Search Console that all your international versions are properly indexed

- Avoid automatic redirects based solely on the location detected from the requesting IP

- Use your server logs to document where Googlebot requests actually come from
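For the log check in the last item, a sketch along these lines can list the IPs that both claim to be Googlebot and pass Google's documented verification (reverse DNS to a Google hostname, then forward DNS back to the same IP). It assumes the combined log format with the user agent as the last quoted field; the function names are illustrative, and the resolvers are injectable so the logic can be tried without network access.

```python
import re
import socket
from collections import Counter

# Client IP at the start of the line, user agent as the last quoted field
# (combined log format is an assumption; adapt the pattern to your server).
LOG_LINE = re.compile(r'^(?P<ip>\S+) .*"(?P<agent>[^"]*)"$')
GOOGLE_SUFFIXES = (".googlebot.com", ".google.com", ".googleusercontent.com")


def reverse_dns(ip: str) -> str:
    """Hostname that the IP address resolves back to."""
    return socket.gethostbyaddr(ip)[0]


def forward_dns(host: str) -> set[str]:
    """All IP addresses (IPv4 and IPv6) that the hostname resolves to."""
    return {info[4][0] for info in socket.getaddrinfo(host, None)}


def is_verified_googlebot(ip, reverse=reverse_dns, forward=forward_dns) -> bool:
    """Reverse lookup must give a Google hostname that resolves back to the same IP."""
    try:
        host = reverse(ip)
        return host.endswith(GOOGLE_SUFFIXES) and ip in forward(host)
    except OSError:  # covers socket.herror and socket.gaierror
        return False


def googlebot_ips(log_lines, verify=is_verified_googlebot) -> Counter:
    """Count requests per verified IP among lines whose user agent mentions Googlebot."""
    counts = Counter()
    for line in log_lines:
        match = LOG_LINE.match(line.strip())
        if match and "Googlebot" in match["agent"]:
            counts[match["ip"]] += 1
    return Counter({ip: n for ip, n in counts.items() if verify(ip)})
```

Calling `googlebot_ips(open("access.log"))` on a real log (the file name is an example) returns a `Counter` of verified IPs. Mapping those IPs to a location requires a GeoIP database of your choice, which is outside this sketch.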

❓ Frequently Asked Questions

Does Googlebot crawl from countries other than the United States?

If I display different prices depending on the user's country, which ones will be indexed?

Are hreflang tags enough if I serve geo-targeted content dynamically?

How can I check what Googlebot actually sees on my site?

Does this rule also apply to Google Images or Google News?

🎥 From the same video 29

Other SEO insights extracted from this same Google Search Central video · published on 14/01/2022
