Official statement

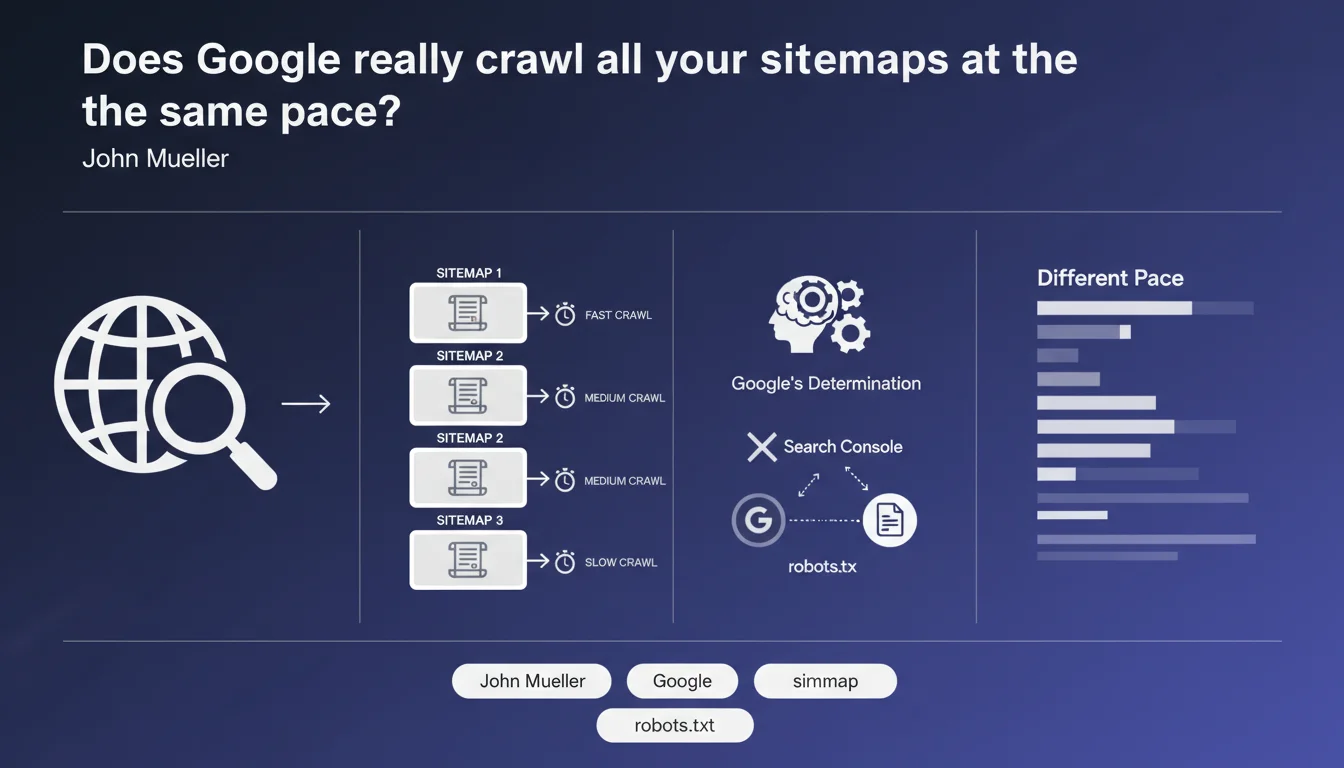

Google does not crawl all sitemaps uniformly. Each sitemap file is evaluated individually based on its change frequency and perceived value, which determines its crawl rate. The submission method (Search Console or robots.txt) does not influence this frequency.

What you need to understand

Google does not crawl sitemaps on a single schedule. Contrary to a common belief, there is no uniform crawl cycle in which all files are processed at the same time.

This approach relies on individual evaluation of each sitemap, regardless of how many files you manage.

How does Google determine a sitemap's crawl frequency?

Google analyzes two main criteria: the actual change frequency of content (not what you declare in the file) and the perceived value of the referenced content.

If your sitemap lists URLs that rarely change or point to content deemed less relevant, Google will crawl it less frequently. Conversely, a dynamic sitemap with frequently updated URLs and high-value content will be revisited more regularly.

Does the submission method really influence crawling?

No. Whether you submit your sitemap via Search Console or declare it in robots.txt, it makes no difference to the crawl frequency.

Mueller's clarification debunks a persistent misconception: some SEO professionals believed that actively submitting a sitemap through Google Search Console (GSC) triggered priority treatment. That is not the case: only the file's content matters.
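For reference, declaring a sitemap in robots.txt amounts to one Sitemap: line per file. The sketch below is only an illustration: the robots.txt content, the example.com domain, and the file names are placeholders, and the helper name declared_sitemaps is made up for this example. It uses Python's standard urllib.robotparser module to list what a robots.txt file declares, which says nothing about how often Google will fetch each file.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; the domain and file names are placeholders.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/

Sitemap: https://www.example.com/sitemap-blog.xml
Sitemap: https://www.example.com/sitemap-static.xml
"""


def declared_sitemaps(robots_txt: str) -> list:
    """Return the sitemap URLs declared through Sitemap: lines."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    # site_maps() (Python 3.8+) returns None when no Sitemap: line is present.
    return parser.site_maps() or []


if __name__ == "__main__":
    for url in declared_sitemaps(ROBOTS_TXT):
        print(url)
```

Running the same helper against your live robots.txt is a quick way to confirm that every sitemap file you rely on is actually declared.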

- Google evaluates each sitemap individually, not in batches

- Crawl frequency depends on actual content freshness, not on changefreq tags

- Perceived content value plays a major role in prioritization

- The submission method (GSC vs robots.txt) has no impact on crawl rate

SEO Expert opinion

Does this statement align with real-world observations?

Yes, largely. We do indeed observe significant gaps in crawl frequency between different sitemaps on the same site. A daily-updated blog sitemap will be crawled far more frequently than a sitemap of static institutional pages.

However, Mueller remains vague about what he means by "value." Does it refer to URL popularity (backlinks, traffic), thematic relevance, or click-through rate in SERPs? [To verify] Google provides no precise metrics, so any optimization remains approximate.

What nuances should we add?

The "change frequency" Mueller refers to is what Google detects itself, not what you declare. Google has ignored the changefreq tags in XML sitemaps for years, and Mueller indirectly confirms this here.
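To compare what you declare with what actually happens on your own pages, you can measure observed change frequency from dated snapshots. The sketch below is an audit aid under stated assumptions, not a reproduction of how Google detects changes: the function names, the weekly snapshot schedule, and the sample data are all hypothetical, and the result can never be finer than the interval between snapshots.

```python
import hashlib
from datetime import date


def content_fingerprint(html: str) -> str:
    """Hash the page body so two snapshots can be compared cheaply."""
    return hashlib.sha256(html.encode("utf-8")).hexdigest()


def days_per_observed_change(snapshots):
    """Average number of days per content change across dated snapshots.

    snapshots: list of (date, fingerprint) tuples sorted by date.
    Returns None when no change was observed.
    """
    changes = sum(
        1 for (_, prev), (_, curr) in zip(snapshots, snapshots[1:]) if prev != curr
    )
    if changes == 0:
        return None
    span_days = (snapshots[-1][0] - snapshots[0][0]).days
    return span_days / changes


# Hypothetical weekly snapshots of one page whose sitemap entry declares "daily".
SNAPSHOTS = [
    (date(2022, 5, 1), content_fingerprint("<p>v1</p>")),
    (date(2022, 5, 8), content_fingerprint("<p>v1</p>")),
    (date(2022, 5, 15), content_fingerprint("<p>v2</p>")),
    (date(2022, 5, 22), content_fingerprint("<p>v2</p>")),
    (date(2022, 5, 29), content_fingerprint("<p>v3</p>")),
]
```

With this sample data, days_per_observed_change(SNAPSHOTS) returns 14.0: two changes over 28 days, which is far from the declared "daily".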

Also be careful not to confuse sitemap crawling with URL crawling. Google might fetch your sitemap file daily but visit the URLs it lists only once a week if they don't actually change.

In what cases does this rule not apply?

Sites with a low crawl budget may see different behavior. If Google allocates few resources to your domain, even a high-value sitemap won't be crawled frequently, because the overall budget takes precedence.

Practical impact and recommendations

How can I optimize my sitemaps' crawl frequency?

Segment your sitemaps by actual update frequency. Separate dynamic content (blog, news, product pages) from static pages (legal, about). Google can then allocate its crawl budget to each file according to how that file behaves.

Avoid "catch-all" sitemaps with tens of thousands of mixed URLs. The more granular and coherent your structure, the better Google can assess each segment's value.
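One way to implement this segmentation is to generate one sitemap file per content type and reference them from a sitemap index, which is part of the sitemaps.org protocol. The sketch below is a minimal illustration: the segment labels, the sitemap-<segment>.xml naming scheme, and the function names are assumptions, and it writes only <loc> entries.

```python
import xml.etree.ElementTree as ET
from collections import defaultdict

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def segment_urls(pages):
    """Group (url, segment) pairs into one URL list per segment."""
    segments = defaultdict(list)
    for url, segment in pages:
        segments[segment].append(url)
    return dict(segments)


def build_urlset(urls):
    """Serialize one sitemap file containing only <loc> entries."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url in urls:
        ET.SubElement(ET.SubElement(urlset, "url"), "loc").text = url
    return ET.tostring(urlset, encoding="unicode")


def build_index(base_url, segment_names):
    """Serialize a sitemap index pointing at one file per segment."""
    index = ET.Element("sitemapindex", xmlns=SITEMAP_NS)
    for name in sorted(segment_names):
        loc = ET.SubElement(ET.SubElement(index, "sitemap"), "loc")
        # Hypothetical naming scheme: sitemap-<segment>.xml at the site root.
        loc.text = f"{base_url}/sitemap-{name}.xml"
    return ET.tostring(index, encoding="unicode")
```

Calling segment_urls on a list such as [("https://www.example.com/blog/a", "blog"), ("https://www.example.com/legal", "static")] yields one URL list per segment, which you can pass to build_urlset file by file and then to build_index for the index.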

What mistakes should you absolutely avoid?

Don't bloat your sitemaps with low-quality URLs (empty pages, duplicate content, redirects). This dilutes the file's perceived value and reduces how often it is crawled.
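A simple filter applied before generating the file can keep such URLs out. The sketch below assumes you already have crawl data from your own crawler. The CrawledPage record and its fields are hypothetical, and treating a canonical that points elsewhere as a duplicate is an assumption made for this example.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class CrawledPage:
    url: str
    status: int               # HTTP status code observed by your own crawler
    noindex: bool             # True if a robots noindex directive was found
    canonical: Optional[str]  # canonical URL declared by the page, if any


def is_sitemap_worthy(page: CrawledPage) -> bool:
    """Keep only pages that return 200, are indexable, and are self-canonical."""
    if page.status != 200:
        return False  # redirects and errors do not belong in a sitemap
    if page.noindex:
        return False
    # Assumption: a canonical pointing elsewhere marks the page as a duplicate.
    if page.canonical is not None and page.canonical != page.url:
        return False
    return True


def filter_for_sitemap(pages):
    """Return the URLs that pass every check, in their original order."""
    return [page.url for page in pages if is_sitemap_worthy(page)]
```

Detecting empty or thin pages requires a content-level check that is not covered here.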

Don't use changefreq and priority tags as optimization levers, since Google ignores them. Focus on actual content updates rather than declarative signals.

How can you verify your sitemaps are being crawled properly?

- Check the Sitemaps report in Search Console and verify the last crawl date for each file

- Analyze server logs to identify frequency gaps between your different sitemaps

- Segment your sitemaps by content type (blog, products, categories, static pages)

- Remove obsolete, erroring, or redirected URLs from sitemaps; only indexable URLs that return HTTP 200 should be listed

- Test the correlation between actual update frequency and observed crawl frequency
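The log analysis mentioned in the list above can be sketched in a few lines of Python. This example assumes access logs in the common "combined" format and hypothetical sitemap paths. It identifies Googlebot by user-agent string only, which can be spoofed, so treat the counts as an approximation.

```python
import re
from collections import Counter

# Matches the date, request path, and user agent of a combined-format log line.
LOG_LINE = re.compile(
    r'\[(?P<day>\d{2}/\w{3}/\d{4}):[^\]]*\] '
    r'"GET (?P<path>\S+) [^"]*" \d{3} \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def sitemap_fetch_days(log_lines, sitemap_paths):
    """Count distinct days on which a Googlebot user agent fetched each sitemap."""
    days = {path: set() for path in sitemap_paths}
    for line in log_lines:
        match = LOG_LINE.search(line)
        if not match or "Googlebot" not in match["agent"]:
            continue
        if match["path"] in days:
            days[match["path"]].add(match["day"])
    return {path: len(seen) for path, seen in days.items()}


def sitemap_fetch_counts(log_lines, sitemap_paths):
    """Count every Googlebot fetch of each sitemap, including same-day repeats."""
    counts = Counter({path: 0 for path in sitemap_paths})
    for line in log_lines:
        match = LOG_LINE.search(line)
        if match and "Googlebot" in match["agent"] and match["path"] in counts:
            counts[match["path"]] += 1
    return dict(counts)
```

Comparing the per-file results over the same period shows the frequency gaps between your sitemaps, which you can then set against how often each segment's content actually changes.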

❓ Frequently Asked Questions

Does the <changefreq> tag in the sitemap affect crawl frequency?

Should I submit my sitemaps via Search Console or robots.txt?

How long does it take for a new sitemap to be crawled?

Will a sitemap with 50,000 URLs be crawled less often than a sitemap with 100 URLs?

Can you force Google to crawl a sitemap more often?

🎥 From the same video 21

Other SEO insights extracted from this same Google Search Central video · published on 05/03/2022
