Official statement

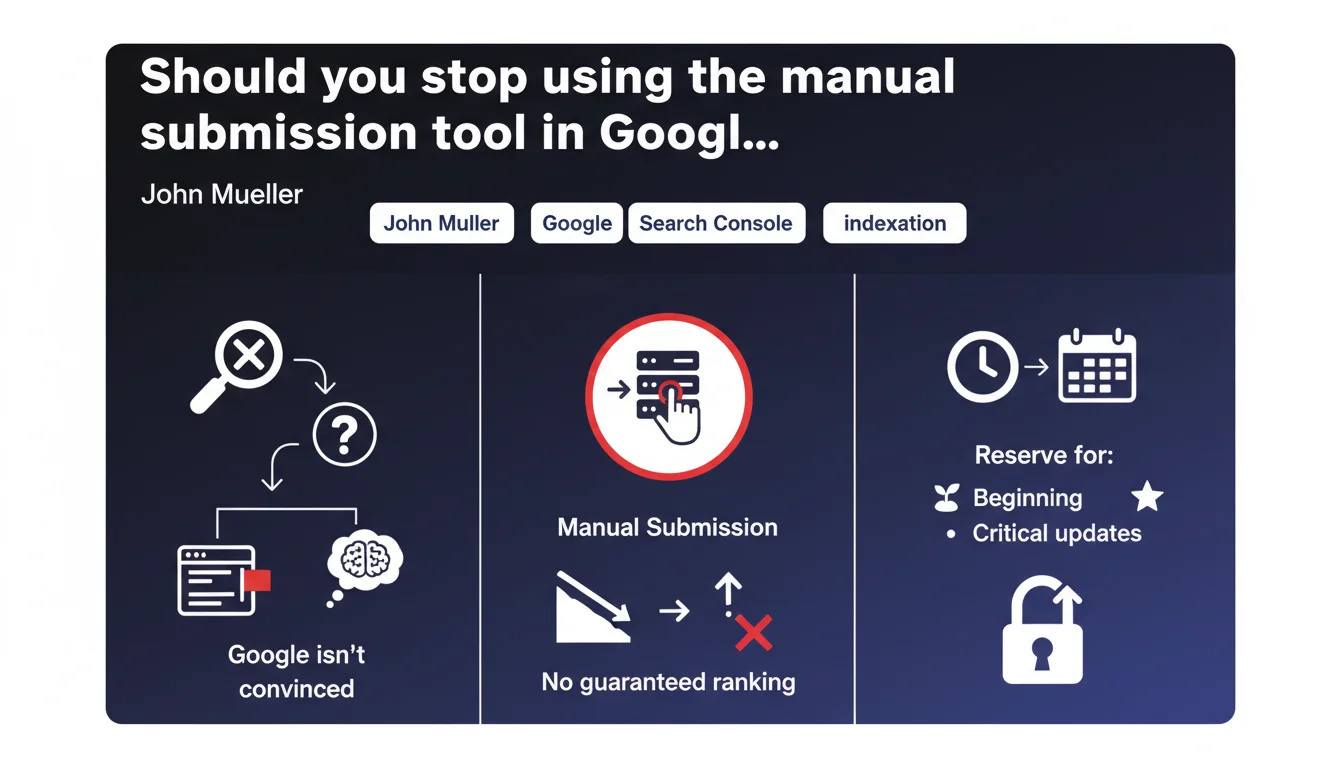

According to Google's John Mueller, having to submit pages for indexing via Search Console on a regular basis suggests that Google doubts your site's quality. The tool guarantees neither indexation nor ranking, and it should be reserved for site launches or occasional critical updates.

What you need to understand

Why does Google view manual submission as an admission of weakness?

The logic is straightforward: a technically solid site doesn't need you to force Google to crawl it. If your architecture is clean, your sitemap is up to date, and your internal linking is coherent, Googlebot discovers and indexes your priority pages on its own.

When you use the submission tool repeatedly, you inadvertently signal that your crawl budget is poorly managed, that your pages struggle to be discovered, or that your content doesn't trigger sufficient signals. It's a symptom, not a solution.

Does this submission at least guarantee indexation?

No. Mueller is explicit on this: forcing indexation doesn't bypass Google's quality filters. Google can index the page and choose not to rank it — which amounts to the same outcome as non-indexation.

Indexation is an editorial decision made by Google based on relevance, originality, and authority. Manually submitting a weak page won't change its fate. You might gain 24 hours on the discovery timeline, but no advantage whatsoever on ranking.

In which cases does this tool remain legitimate?

Mueller validates two scenarios: launching a new site where you want to accelerate the initial discovery phase, and truly critical updates — correcting a major factual error, removing sensitive content, publishing urgent information.

Everything else amounts to futile effort. If you're systematically submitting your blog posts, ask yourself the real question: why isn't Google crawling them naturally?

- Repeated manual submission signals structural weaknesses of the site to Google

- The tool offers no guarantee of ranking, even if the page is indexed

- Legitimate use: new site launch or occasional urgency only

- A healthy site should be crawled and indexed without manual intervention

SEO Expert opinion

Does this statement match what we observe in practice?

Yes and no. In reality, manual submission does accelerate crawling — often within 48 hours for sites with decent crawl budget. But Mueller is right on one point: it doesn't change ranking at all.

I've seen dozens of cases where manually submitted pages get indexed... and remain invisible beyond page 5. The real problem was never the indexation delay; it was content quality, cannibalization, or lack of authority signals. [To verify]: Google claims that repeated use of the tool could negatively influence the algorithm's perception of your site, but no public data confirms this.

Should you really stop submitting pages entirely?

Let's be honest: on a news site or e-commerce platform adding 50 new products daily, waiting for Google to organically discover each page is unrealistic. Mueller's advice applies mainly to typical editorial sites that publish a few pieces of content per week.

The nuance — and it's important — is that submitting a page once at publication time is not the same as re-submitting it every 3 days because it's not ranking. The first case is pragmatic. The second is an admission of failure.

What concrete alternative to manual submission?

Top priority: optimize crawl budget. This means a clean sitemap (not 10,000 URLs with 80% returning 404s), a robots.txt that doesn't block critical resources, and internal linking that naturally pushes important new pages.
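To make the robots.txt check concrete, here is a minimal sketch that tests a list of critical URLs against a set of rules using Python's standard `urllib.robotparser`. The rules, the URLs, and the `blocked_urls` helper are hypothetical examples, not taken from any real site. Note also that this parser does simple prefix matching and may not reproduce every detail of how Googlebot interprets wildcards.

```python
from urllib.robotparser import RobotFileParser


def blocked_urls(robots_txt: str, urls: list[str], user_agent: str = "Googlebot") -> list[str]:
    """Return the URLs that the given robots.txt rules disallow for user_agent."""
    parser = RobotFileParser()
    # Parse the rules from a string so the check runs without any network access.
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


# Hypothetical rules and URLs, for illustration only.
ROBOTS_TXT = """\
User-agent: *
Disallow: /assets/
Disallow: /cart/
"""

CRITICAL_URLS = [
    "https://www.example.com/assets/main.css",
    "https://www.example.com/blog/new-post",
    "https://www.example.com/cart/checkout",
]

if __name__ == "__main__":
    for url in blocked_urls(ROBOTS_TXT, CRITICAL_URLS):
        print("Blocked for Googlebot:", url)
```

In this example the stylesheet under `/assets/` is flagged, which is the kind of critical resource the paragraph above warns against blocking.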

Next, work on signals that trigger rapid recrawls: internal links from fresh pages, mentions on pages with high crawl frequency (homepage, thematic hubs), and — if you have the leverage — external backlinks that signal newness.

Practical impact and recommendations

What should you concretely do with this information?

First step: stop systematically submitting every new page. Run a one-month test: publish normally, without touching the submission tool, and record how long each new page takes to be crawled and indexed. If Google takes more than 7 days to crawl your priority new content, you have a structural problem to solve.
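The one-month test can be tracked with a few lines of Python. This is a rough sketch that assumes you record, for each page, its publication date and the date of Googlebot's first crawl (for example from server logs or the URL Inspection tool). The `delay_report` helper and the sample data are hypothetical; the 7-day threshold is the one suggested above.

```python
from datetime import date
from statistics import mean
from typing import Optional

MAX_DELAY_DAYS = 7  # threshold suggested in the article

# (url, publication date, date of first Googlebot crawl or None if not crawled yet)
Page = tuple[str, date, Optional[date]]


def delay_report(pages: list[Page], today: date) -> dict:
    """Compute the crawl delay per page and list the pages above the threshold."""
    delays = {}
    for url, published, first_crawl in pages:
        # A page that has not been crawled yet is counted as waiting until today.
        end = first_crawl or today
        delays[url] = (end - published).days
    slow = sorted(url for url, days in delays.items() if days > MAX_DELAY_DAYS)
    return {"average_days": mean(list(delays.values())), "slow_urls": slow}


# Hypothetical observations collected during the test month.
PAGES = [
    ("/blog/post-a", date(2022, 3, 1), date(2022, 3, 3)),
    ("/blog/post-b", date(2022, 3, 2), date(2022, 3, 12)),
    ("/blog/post-c", date(2022, 3, 7), None),
]

if __name__ == "__main__":
    print(delay_report(PAGES, today=date(2022, 3, 31)))
```

Pages listed under `slow_urls` are the ones worth investigating for internal linking or sitemap issues before reaching for the submission tool.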

Reserve the tool for true emergencies: a major factual correction, sensitive content to remove, or a critical business opportunity. Everything else must go through the natural crawl flow.

How do you diagnose if your site suffers from discoverability problems?

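Look at the Page indexing report (formerly the Coverage report) in Search Console. If you have hundreds of pages marked "Crawled - currently not indexed," Google has fetched them and chosen not to index them, and manual submission won't change that. Pages marked "Discovered - currently not indexed" are known to Google but have not been crawled yet, which points to a crawl scheduling or prioritization problem rather than a verdict on the content.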

Also check your sitemap: is Google crawling submitted URLs within a reasonable timeframe? If not, either your sitemap is too large and diluted, or Google has throttled your crawl budget because you're generating too many soft 404s, unnecessary facets, or weak content.
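One way to check whether a sitemap is diluted is to cross-reference its URLs with status codes from a crawl export. The sketch below assumes you already have such an export as a dictionary; the `audit_sitemap` helper, the sample sitemap, and the rule "any query string is a possible facet or pagination URL" are illustrative assumptions, not an official method.

```python
import xml.etree.ElementTree as ET
from urllib.parse import urlsplit

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_urls(xml_text: str) -> list[str]:
    """Extract every <loc> value from a sitemap document."""
    root = ET.fromstring(xml_text)
    return [loc.text.strip() for loc in root.findall("sm:url/sm:loc", SITEMAP_NS) if loc.text]


def audit_sitemap(xml_text: str, status_by_url: dict[str, int]) -> dict[str, str]:
    """Flag sitemap URLs that do not return 200 or that carry a query string."""
    flagged = {}
    for url in sitemap_urls(xml_text):
        status = status_by_url.get(url)  # None means the URL is missing from the export
        if status != 200:
            flagged[url] = f"status {status}"
        elif urlsplit(url).query:
            # Assumption: a query string usually indicates a filter or pagination URL.
            flagged[url] = "query string (possible facet or pagination)"
    return flagged


# Hypothetical sitemap and crawl export, for illustration only.
SITEMAP = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://www.example.com/guide</loc></url>
  <url><loc>https://www.example.com/old-page</loc></url>
  <url><loc>https://www.example.com/shoes?color=red</loc></url>
</urlset>"""

STATUSES = {
    "https://www.example.com/guide": 200,
    "https://www.example.com/old-page": 404,
    "https://www.example.com/shoes?color=red": 200,
}

if __name__ == "__main__":
    for url, reason in audit_sitemap(SITEMAP, STATUSES).items():
        print(url, "->", reason)
```

If a large share of the sitemap ends up flagged, that is a reasonable hint that the file should be trimmed to strategic, indexable URLs.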

What levers should you activate to improve natural crawling?

- Clean up the sitemap: remove all non-strategic URLs, paginated pages, and filter URLs

- Strengthen internal linking toward new pages from the homepage or thematic hubs

- Avoid overly deep silo structures (more than 3 clicks from root = problem)

- Optimize loading speeds so Googlebot crawls more URLs per session

- Send accurate Last-Modified response headers and answer Googlebot's If-Modified-Since requests with 304 Not Modified when a page has not changed

- Deindex zombie pages that waste crawl budget without generating traffic
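The click-depth rule in the list above can be checked with a breadth-first search over your internal link graph. This is a minimal sketch, assuming you have exported the graph as a dictionary mapping each page to the pages it links to. The `click_depths` and `too_deep` helpers and the sample graph are hypothetical; the limit of 3 clicks comes from the list.

```python
from collections import deque

MAX_DEPTH = 3  # limit suggested in the list above


def click_depths(links: dict[str, list[str]], root: str) -> dict[str, int]:
    """Return the minimum number of clicks from root to every reachable page."""
    depths = {root: 0}
    queue = deque([root])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:  # first visit is the shortest path in BFS
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


def too_deep(links: dict[str, list[str]], root: str, max_depth: int = MAX_DEPTH) -> list[str]:
    """List the pages that sit more than max_depth clicks away from root."""
    depths = click_depths(links, root)
    return sorted(url for url, depth in depths.items() if depth > max_depth)


# Hypothetical internal link graph, for illustration only.
LINKS = {
    "/": ["/blog", "/shop"],
    "/blog": ["/blog/2022"],
    "/blog/2022": ["/blog/2022/03"],
    "/blog/2022/03": ["/blog/2022/03/post"],
    "/shop": ["/shop/shoes"],
}

if __name__ == "__main__":
    print(too_deep(LINKS, "/"))
```

Pages that never appear in the result of `click_depths` are unreachable from the root, so they are orphans and deserve attention as well.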

Manual submission is putting a band-aid on a wooden leg. If you need it regularly, it's your SEO infrastructure that needs reviewing, not your indexation tactic.

Focus your efforts on crawl budget optimization, internal linking quality, and sitemap relevance. These projects are complex and often require a thorough technical audit. Engaging an SEO-specialized agency can accelerate diagnosis and implementation of structural fixes — especially if your site exceeds a few hundred pages.

❓ Frequently Asked Questions

Can I submit my new blog posts through the tool without risk?

Does manual submission really speed up indexation?

How many times can I submit the same URL?

My competitor submits all of its pages and ranks better. Should I do the same?

Which signals indicate that my site has a crawl budget problem?

Source: Google Search Central video, published on 05/03/2022
