Official statement

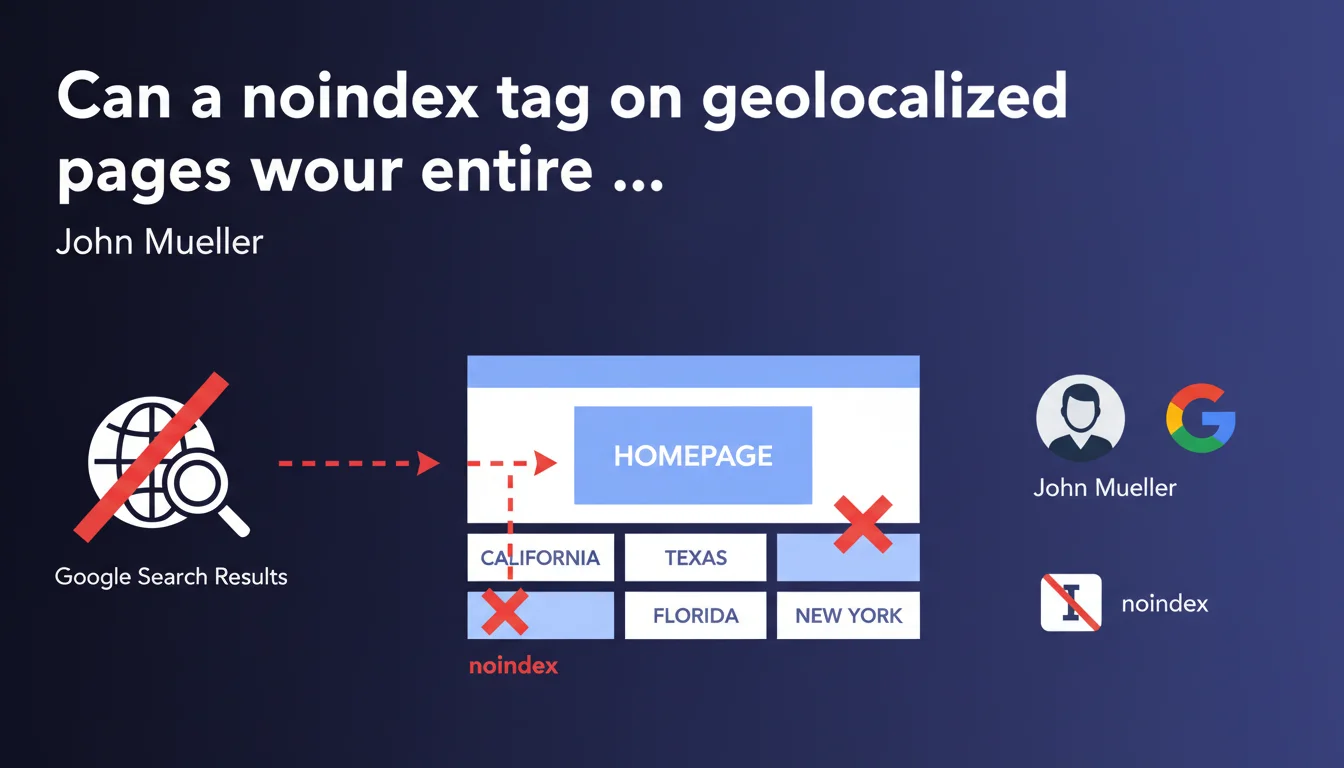

Adding a noindex tag on geolocalized landing pages can lead to complete site deindexation if these pages are served as the homepage based on user location. Google treats the page served at the root URL as the true homepage, even if it varies by geolocation. In practice: if your homepage displays different content by state and one version carries a noindex, your entire presence in the SERPs can collapse.

What you need to understand

Why would a noindex tag on a geolocalized page affect the homepage?

The mechanism is more insidious than it appears. When you create regional landing pages (by state, by city, by country), you typically implement conditional redirects or display based on IP address or user preferences. When a California visitor accesses your domain.com, they actually see the content of your California page — but at the root URL.

Googlebot crawls primarily from IP addresses in the United States. If it requests your root URL from a California IP and receives the California version with a noindex in the meta robots tag or the X-Robots-Tag header, it applies that directive to the URL it is crawling: your homepage. The result? Complete site deindexation.

How does Google determine which version of a geolocalized page to index?

Google doesn't care about your backend architecture. What matters is what's served at a given URL at the time of crawl. If domain.com returns variable content based on geolocation without distinct URLs (like domain.com/us/, domain.com/ca/), you're technically creating multiple versions of the same URL.

The crawler can't guess which version is the "main" one. It indexes what it sees. And if what it sees carries a noindex directive, it applies the rule without second thoughts. It's that simple, and that brutal.
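To make the mechanism concrete, here is a minimal sketch of a root URL that serves a different variant per visitor region. The region codes, the `VARIANTS` table and both function names are hypothetical, chosen only for illustration; they do not describe any particular CMS or server.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Variant:
    region: str
    robots: str  # content of the meta robots tag served with this variant


# Hypothetical regional variants, all served at the same root URL "/".
VARIANTS = {
    "CA": Variant("CA", "noindex"),        # regional page marked noindex
    "NY": Variant("NY", "index, follow"),
}
DEFAULT = Variant("default", "index, follow")


def serve_root(visitor_region: str) -> Variant:
    """Return the variant the root URL serves to a visitor from this region."""
    return VARIANTS.get(visitor_region, DEFAULT)


def crawler_sees_noindex(crawler_region: str) -> bool:
    """True if a crawler arriving from this region gets noindex at the root URL."""
    robots = serve_root(crawler_region).robots
    directives = {d.strip().lower() for d in robots.split(",")}
    return "noindex" in directives
```

In this toy setup, a crawler arriving from "CA" receives noindex for the root URL even though every other region gets an indexable page. That is the situation described above.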

What are the warning signs that this issue is affecting your site?

- Sudden drop in organic visibility with no apparent changes to main content

- Search Console displays "Excluded by 'noindex' tag" for the homepage or key URLs

- Coverage report shows massive deindexation of strategic pages

- Geolocalized pages themselves generate no organic traffic despite optimization

- Testing with different VPNs or locations reveals contradictory indexation directives depending on source IP

SEO Expert opinion

Is this claim consistent with real-world observations?

Absolutely. I've seen this scenario play out three times on international e-commerce sites between 2019 and 2023. In two cases, deindexation was partial — only certain regional versions disappeared. In the third, total disaster: 90% of organic traffic vanished in 72 hours because the UK version (main market) accidentally carried a noindex tag.

What's surprising is the speed of propagation. Google doesn't give a 15-day grace period. As soon as Googlebot recrawls the URL and finds a noindex, the instruction is executed. Some believe an "established" site gets some inertia. Wrong. Noindex is a directive, not a suggestion, and Google executes it without hesitation.

What nuances should be added to this Mueller statement?

Mueller deliberately oversimplifies. He doesn't specify whether this behavior applies only to temporary 302 redirects, server rewrites, or also to client-side JavaScript solutions. [To verify]: does geolocalized content loaded via JS after first paint escape this rule? Probably not if rendering detects noindex, but official documentation remains vague.

Another point: Mueller talks about "landing pages by state" with noindex. Why on earth would you want to noindex these pages? Either to avoid duplicate content (poor strategy), or because they're under construction (process error). In both cases, the real problem isn't technical: it's a fundamental misunderstanding of international SEO architecture.

In which cases does this rule pose no problem?

If you use distinct URLs with properly implemented hreflang (/fr/, /de/, /us/), each version is an independent entity. You can noindex /us/ without touching /fr/. Google understands the structure, and the risk of cross-contamination disappears.

The same applies if you serve a single neutral homepage (with a language/country selector) and the geolocalized versions live in dedicated subfolders or on subdomains. There is no ambiguity: the root URL stays stable and indexable, and regional variants are clearly separated.
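As an illustration of the distinct-URL approach, the sketch below generates hreflang annotations for a set of regional subfolders. The function name, the `example.com` domain and the locale-to-path mapping (including the choice of `en-us` for `/us/`) are assumptions made for the example, not values taken from the statement.

```python
from html import escape


def hreflang_links(base_url: str, locales: dict[str, str], x_default: str) -> list[str]:
    """Build <link rel="alternate"> tags for each locale plus an x-default entry.

    locales maps an hreflang code (e.g. "fr") to the path of that version.
    x_default is the path of the neutral fallback page.
    """
    base = base_url.rstrip("/")
    tags = [
        f'<link rel="alternate" hreflang="{escape(code)}" href="{escape(base + path)}" />'
        for code, path in sorted(locales.items())
    ]
    # x-default points at the version shown when no locale matches.
    tags.append(
        f'<link rel="alternate" hreflang="x-default" href="{escape(base + x_default)}" />'
    )
    return tags
```

Calling `hreflang_links("https://example.com/", {"fr": "/fr/", "de": "/de/", "en-us": "/us/"}, "/")` returns four tags. The same full set should appear on every listed version so that the annotations are reciprocal.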

Practical impact and recommendations

What should you do concretely to avoid this trap?

First, audit your geolocation implementation immediately. Use a VPN or proxy to access your homepage from different locations (USA, UK, France, Germany, Australia). Inspect the HTTP headers and source code returned for each location. Look for any trace of noindex in the meta robots tag or in X-Robots-Tag headers.
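The check described above can be partly automated. The following is a rough sketch using only the Python standard library: `has_noindex` inspects a response's headers and HTML for a noindex directive, and `fetch` retrieves a page through a proxy. Both function names are made up for this example, and the proxy URL is a placeholder you would have to supply yourself. For simplicity, the sketch ignores which crawler a user-agent prefix in `X-Robots-Tag` targets.

```python
from html.parser import HTMLParser
import urllib.request


class RobotsMetaParser(HTMLParser):
    """Collects directives from <meta name="robots"> and <meta name="googlebot">."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = {name: (value or "") for name, value in attrs}
        if attr.get("name", "").lower() in ("robots", "googlebot"):
            for piece in attr.get("content", "").split(","):
                self.directives.add(piece.strip().lower())


def has_noindex(headers, html):
    """Return True if the response blocks indexing via header or meta tag.

    headers: iterable of (name, value) pairs; html: response body as text.
    """
    directives = set()
    for name, value in headers:
        if name.lower() == "x-robots-tag":
            for piece in value.split(","):
                # Drop an optional "googlebot:" style user-agent prefix.
                directives.add(piece.rsplit(":", 1)[-1].strip().lower())
    parser = RobotsMetaParser()
    parser.feed(html)
    directives |= parser.directives
    # "none" is shorthand for "noindex, nofollow".
    return bool(directives & {"noindex", "none"})


def fetch(url, proxy_url):
    """Fetch url through a proxy; proxy_url is a placeholder, not a real endpoint."""
    opener = urllib.request.build_opener(
        urllib.request.ProxyHandler({"http": proxy_url, "https": proxy_url})
    )
    with opener.open(url, timeout=10) as resp:
        body = resp.read().decode("utf-8", errors="replace")
        return list(resp.headers.items()), body
```

A typical run would call `fetch` once per proxy location and pass each result to `has_noindex`. Keep in mind that a proxy only approximates what Googlebot receives, so the URL Inspection tool in Search Console remains the reference check.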

Then, if you detect noindex directives that vary by location, you need to overhaul the architecture. Either you switch to distinct URLs with hreflang (the recommended solution for international sites), or you abandon noindex and manage duplicates differently (canonical tags, sufficiently differentiated content).

What mistakes to absolutely avoid when managing geolocalized pages?

- Never apply a noindex "by default" on regional pages to "clean up the index": it is self-sabotage

- Don't entrust geolocation to a third-party plugin or service without understanding its exact technical functioning

- Never assume Google crawls only from the USA: Googlebot crawls primarily from US IP addresses, but it can also crawl from IPs in other countries

- Avoid geolocalized 302 redirects to the homepage with variable content — prefer stable subfolders

- Don't forget to check Search Console for each property separately if you use ccTLDs

How to verify that your site is compliant and protected?

Set up daily monitoring of your strategic URLs in Search Console. Specifically watch the indexing status of the homepage and the main regional landing pages. Any appearance of "Excluded by 'noindex' tag" should trigger an immediate alert.

Use a crawl tool (Screaming Frog, Oncrawl, Botify) configured to simulate crawls from different IPs or with different user-agents. Compare the returned headers and directives. If you notice inconsistencies, your implementation has problems.
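Once you have collected results per location, with a crawl tool or your own script, the comparison itself is simple. Here is a small sketch that flags URLs whose noindex status differs between locations. The function name and the shape of the `observations` mapping are assumptions made for this example.

```python
def inconsistent_urls(observations: dict[str, dict[str, bool]]) -> dict[str, list[str]]:
    """Flag URLs whose noindex status is not the same from every location.

    observations maps URL -> {location label: True if noindex was seen there}.
    Returns URL -> sorted list of locations where noindex was seen, but only
    for URLs where locations disagree.
    """
    flagged = {}
    for url, by_location in observations.items():
        if len(set(by_location.values())) > 1:
            flagged[url] = sorted(
                location for location, noindex in by_location.items() if noindex
            )
    return flagged
```

A URL that is noindexed from every location is not flagged here, since that may be intentional. The function only reports disagreement, which is the signature of the geolocation problem described in this article.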

Noindex on geolocalized pages is an underestimated trap that can annihilate your organic visibility in hours. The solution involves a clear architecture with distinct URLs, rigorous hreflang, and permanent monitoring of indexation directives.

These technical configurations require sharp expertise in international SEO and server architecture. If your technology stack relies on dynamic routing, edge computing, or advanced personalization solutions, support from an SEO agency experienced in multilingual indexation issues can help you avoid costly mistakes and durably secure your SERP presence.

❓ Frequently Asked Questions

Can I use a temporary noindex on geolocalized pages that are under construction?

Is hreflang enough to avoid noindex problems on geolocalized pages?

How does Google choose which geolocalized version to crawl?

Does a CDN with geographic routing carry the same risks?

What should I do if my site has already been deindexed because of this problem?

🎥 From the same video 29

Other SEO insights extracted from this same Google Search Central video · published on 14/01/2022
