Official statement

Other statements from this video 29 ▾

- □ Un fichier robots.txt volumineux pénalise-t-il vraiment votre SEO ?

- □ Soumettre son sitemap dans robots.txt ou Search Console : y a-t-il vraiment une différence ?

- □ Les balises H1-H6 ont-elles encore un impact réel sur le classement Google ?

- □ Faut-il vraiment respecter une hiérarchie stricte des balises Hn pour le SEO ?

- □ Combien de temps faut-il réellement pour qu'une migration de domaine soit prise en compte par Google ?

- □ Une migration de site peut-elle vraiment booster votre SEO ou tout faire planter ?

- □ Googlebot crawle-t-il vraiment depuis un seul endroit pour indexer vos contenus géolocalisés ?

- □ Le noindex sur pages géolocalisées peut-il faire disparaître tout votre site des résultats Google ?

- □ Faut-il vraiment abandonner les redirections géolocalisées pour une simple bannière ?

- □ Faut-il rediriger les utilisateurs mobiles vers votre application mobile ?

- □ Faut-il vraiment traduire mot pour mot ses pages pour que le hreflang fonctionne ?

- □ Fichier Disavow : pourquoi la directive domaine permet-elle de contourner la limite de 2MB ?

- □ Faut-il vraiment utiliser le fichier Disavow uniquement pour les liens achetés ?

- □ Faut-il mettre en noindex ses pages de résultats de recherche interne pour bloquer les backlinks spam ?

- □ Le HTML sémantique booste-t-il vraiment votre référencement naturel ?

- □ AMP est-il encore un critère de ranking dans Google Search ?

- □ AMP est-il vraiment un facteur de classement pour Google ?

- □ Supprimer AMP boost-t-il le crawl de vos pages classiques ?

- □ Faut-il tester la suppression de son fichier Disavow de manière incrémentale ?

- □ Pourquoi les panels de connaissance s'affichent-ils différemment selon les appareils ?

- □ Le système de synonymes de Google fonctionne-t-il vraiment sans intervention humaine ?

- □ Faut-il vraiment créer une page distincte par localisation pour le schema Local Business ?

- □ Faut-il vraiment marquer TOUT son contenu en données structurées ?

- □ Faut-il vraiment afficher toutes les questions du schema FAQ sur la page ?

- □ Le contenu masqué dans les accordéons peut-il vraiment apparaître dans les featured snippets ?

- □ Pourquoi Google ne veut-il pas indexer l'intégralité de votre site web ?

- □ Faut-il supprimer des pages pour améliorer l'indexation de son site ?

- □ Le volume de recherche des ancres influence-t-il vraiment la valeur d'un lien interne ?

- □ Faut-il vraiment ajouter du contenu unique sur vos pages produit en e-commerce ?

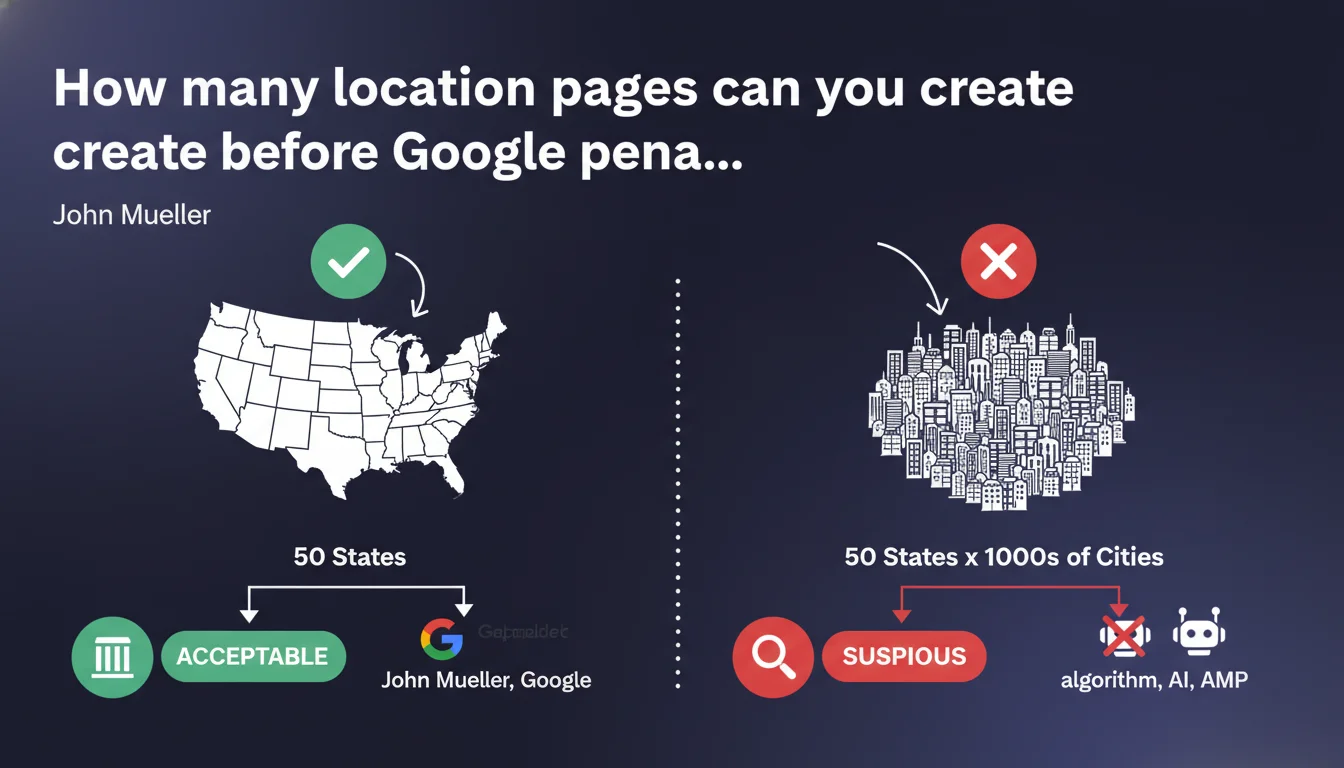

Google tolerates regional pages as long as the number remains reasonable, for example one page for each of the 50 US states. Once you start creating a page for every city in each state, anti-spam algorithms are likely to flag the site. The boundary lies between useful granularity and industrial-scale duplicate content.

What you need to understand

This statement from John Mueller addresses a recurring question: how far can you push geographic targeting logic without triggering Google's anti-spam filters?

The context is straightforward. Many multi-location websites create dedicated pages for each service area, a legitimate practice when it serves the user. But the boundary with spam becomes blurry when you publish hundreds of nearly identical pages that differ only in the city name.

What is the threshold of "reasonable quantity" according to Google?

Mueller gives a concrete example: 50 US states is acceptable. That's a figure that matches a natural administrative division, with manageable volume and clear relevance for the user.

On the other hand, if you then break down each state into dozens or hundreds of cities, you enter a gray zone. Anti-spam algorithms are designed to detect this type of strategy — especially if content varies little from one page to the next.

Why does this limit exist?

Google combats thin content produced at industrial scale. Creating 5,000 pages from the same template, replacing only the city name, adds noise to the index without adding value.

If each page brings unique content, local testimonials, information specific to the area — no problem. The problem arises when differentiation is limited to the title and city name in a paragraph.

- 50 regional pages = acceptable if well differentiated

- Hundreds of city-by-city pages = high risk of anti-spam filter

- The key: each page must have a reason to exist for the user, not just to capture long-tail traffic

- The algorithm detects duplication patterns even with minor variations
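To make the idea of "minor variations" concrete, here is a minimal sketch that compares two templated pages using word shingles and Jaccard similarity. This is a common near-duplicate heuristic chosen for illustration, not a description of how Google's systems work; the template text and the function names `shingles` and `jaccard` are hypothetical.

```python
from __future__ import annotations


def shingles(text: str, size: int = 3) -> set[tuple[str, ...]]:
    """Return the set of overlapping word n-grams (shingles) in the text."""
    words = text.lower().split()
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def jaccard(a: str, b: str, size: int = 3) -> float:
    """Jaccard similarity of the two texts' shingle sets (1.0 = same set)."""
    sa, sb = shingles(a, size), shingles(b, size)
    if not sa and not sb:
        return 1.0
    return len(sa & sb) / len(sa | sb)


# Hypothetical template: only the city name changes between pages.
TEMPLATE = (
    "Looking for a plumber in {city}? Our certified team serves {city} "
    "and the surrounding area with fast repairs, fair prices and a "
    "satisfaction guarantee on every job we complete."
)

austin = TEMPLATE.format(city="Austin")
dallas = TEMPLATE.format(city="Dallas")

# Raw comparison: below 1.0 only because the shingles touching the
# city name differ.
raw_similarity = jaccard(austin, dallas)

# Once the city name is masked, the two pages are the same text.
masked_similarity = jaccard(
    austin.replace("Austin", "{city}"),
    dallas.replace("Dallas", "{city}"),
)

print(f"raw={raw_similarity:.2f} masked={masked_similarity:.2f}")
```

The masked comparison is the useful one: if two pages become identical once the place name is hidden, the city name is their only differentiation.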

SEO Expert opinion

Is this position consistent with what we observe in practice?

Yes, completely. We regularly see sites with hundreds of location-specific pages getting filtered, either through a manual action or through an abrupt algorithmic adjustment. The sites that recover all have one thing in common: each page offers genuine differentiation.

The figure of 50 states is not a magic limit. It is an example that illustrates an order of magnitude. A French site with one page for each of 13 regions will cause no problem. A site with one page for each of roughly 35,000 municipalities is another story.

What doesn't Google say clearly here?

The statement intentionally remains vague on the real criterion: quality of differentiation. Mueller talks about "looking suspicious," but doesn't give a precise threshold. [To verify]: is it 100 pages? 500? 1,000?

In reality, it's not so much the absolute number that matters as the signal-to-noise ratio. If you have 500 city pages with 80% identical content, you're on the radar. If you have 200 pages with truly unique content, it might get through.

In what cases does this rule not apply?

If your content is truly differentiated by zone — for example, a real estate listing site with different properties by city — then the number of pages is no longer a problem. Each page has a reason to exist.

Similarly, a local news site with articles specific to each city is unlikely to be penalized for having too many pages. The problem doesn't come from volume, but from disguised duplication.

Practical impact and recommendations

What should you concretely do with this information?

If you already manage location-specific pages, start with a differentiation audit. Take 10 pages at random and compare them side by side. If 70% or more of the content is identical, that's a red flag.
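The audit described above can be sketched in a few lines of Python. This is only one possible approach, assuming the main text of each page has already been extracted: it uses the standard library's `difflib.SequenceMatcher` as a rough similarity measure, and the `audit_sample` function, the demo URLs and the demo texts are hypothetical. The 10-page sample and the 70% threshold come from the article.

```python
from __future__ import annotations

import random
from difflib import SequenceMatcher
from itertools import combinations


def similarity(a: str, b: str) -> float:
    """Word-level similarity ratio between 0.0 and 1.0."""
    return SequenceMatcher(None, a.split(), b.split(), autojunk=False).ratio()


def audit_sample(
    pages: dict[str, str],
    sample_size: int = 10,
    threshold: float = 0.70,
    seed: int | None = None,
) -> list[tuple[str, str, float]]:
    """Compare a random sample of pages pairwise and return the pairs
    whose similarity reaches the threshold."""
    rng = random.Random(seed)
    urls = rng.sample(sorted(pages), min(sample_size, len(pages)))
    flagged = []
    for first, second in combinations(urls, 2):
        score = similarity(pages[first], pages[second])
        if score >= threshold:
            flagged.append((first, second, round(score, 2)))
    return flagged


# Hypothetical extracted page texts, keyed by URL path.
DEMO_PAGES = {
    "/plumber/austin": "Plumber in Austin available 24/7 for fast repairs and fair prices",
    "/plumber/dallas": "Plumber in Dallas available 24/7 for fast repairs and fair prices",
    "/plumber/houston": (
        "Our Houston crew of six works from the Heights depot "
        "and handles flood damage after storm season"
    ),
}

for first, second, score in audit_sample(DEMO_PAGES, seed=0):
    print(f"{first} vs {second}: {score:.0%} similar")
```

On a real site, the same function would run on text extracted from a crawl. Shared navigation and footers should be stripped first so they do not inflate the scores.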

Then decide: either you consolidate weak pages (for example, group several small cities under one regional page), or you enrich each page with truly unique content — local testimonials, specific data, local teams, hours, etc.

What mistakes should you absolutely avoid?

Don't launch a "1 page per city" strategy without having editorial resources to differentiate. Generating 500 pages in template mode won't work anymore — or only temporarily, before an algorithmic clean-up.

Also avoid the trap of low-grade programmatic content. Yes, you can automate certain parts, but you need a minimum of manual curation. User behavior signals (bounce rate, time on page) quickly reveal weak content.

- Audit existing pages: measure the similarity rate between location-specific pages

- Identify pages with less than 30% unique content → consolidate or enrich

- Check user engagement on these pages (GA4, Search Console): bounce rate, CTR, time on page

- If creating new regional pages: plan for at least 400-500 words of unique content per page

- Integrate real local elements: testimonials, teams, events, specific data

- Monitor indexing in Search Console: watch for any large-scale deindexing

- Set up a local content creation process if significant volume is targeted
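The "less than 30% unique content" check from the list above could be approximated as follows. This is a crude sketch under stated assumptions: a sentence counts as unique when, after the place name is masked, it appears on no other page in the set. The sentence-level definition, the function names and the demo data are all hypothetical. Only the 30% threshold comes from the article.

```python
from __future__ import annotations

import re
from collections import Counter


def normalized_sentences(text: str, place: str) -> list[str]:
    """Lowercase the text, mask the place name, and split into sentences."""
    masked = text.lower()
    if place:
        masked = masked.replace(place.lower(), "{place}")
    parts = re.split(r"(?<=[.!?])\s+", masked.strip())
    return [" ".join(part.split()) for part in parts if part]


def unique_share(pages: dict[str, tuple[str, str]]) -> dict[str, float]:
    """Map each URL to the fraction of its words that sit in sentences
    found on no other page. `pages` maps URL -> (place name, page text)."""
    per_page = {
        url: normalized_sentences(text, place)
        for url, (place, text) in pages.items()
    }
    # Number of pages on which each sentence occurs.
    page_count = Counter(s for sents in per_page.values() for s in set(sents))
    shares = {}
    for url, sents in per_page.items():
        total = sum(len(s.split()) for s in sents)
        unique = sum(len(s.split()) for s in sents if page_count[s] == 1)
        shares[url] = unique / total if total else 0.0
    return shares


def needs_work(pages: dict[str, tuple[str, str]], min_share: float = 0.30) -> list[str]:
    """URLs whose unique-content share falls below the threshold."""
    return sorted(u for u, share in unique_share(pages).items() if share < min_share)


# Hypothetical pages built from the same boilerplate.
BOILERPLATE = "We offer plumbing repairs in {city}. Call us today for a free quote."
DEMO = {
    "/plumber/austin": ("Austin", BOILERPLATE.format(city="Austin")
        + " Our Austin depot on Lamar Boulevard keeps spare parts for older"
          " cast iron pipes common in Hyde Park homes."),
    "/plumber/dallas": ("Dallas", BOILERPLATE.format(city="Dallas")),
    "/plumber/houston": ("Houston", BOILERPLATE.format(city="Houston") + " Open daily."),
}

print(needs_work(DEMO))
```

Pages returned by `needs_work` are candidates for consolidation or enrichment. Exact sentence matching misses lightly reworded boilerplate, so treat the result as a lower bound on duplication.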

The limit is not an absolute number — it's the ability to produce truly differentiated content at each level of granularity. If you're unsure, stick with a broad breakdown (regions, departments) rather than multiplying nearly-identical city pages.

These strategic tradeoffs — consolidate vs. expand, automate vs. manual curation, optimal geographic granularity — require field expertise and a comprehensive site vision. If the complexity seems unmanageable internally, working with a specialist SEO agency can help structure the approach and avoid costly visibility mistakes.

❓ Frequently Asked Questions

What is the maximum number of regional pages you can create without risk?

Can you create one page per city if the content is genuinely different?

How can I tell whether my location-specific pages risk an anti-spam filter?

Is it better to group several cities on one regional page?

Do the algorithms really detect minor content variations?

Other SEO insights extracted from this same Google Search Central video · published on 14/01/2022
