Official statement

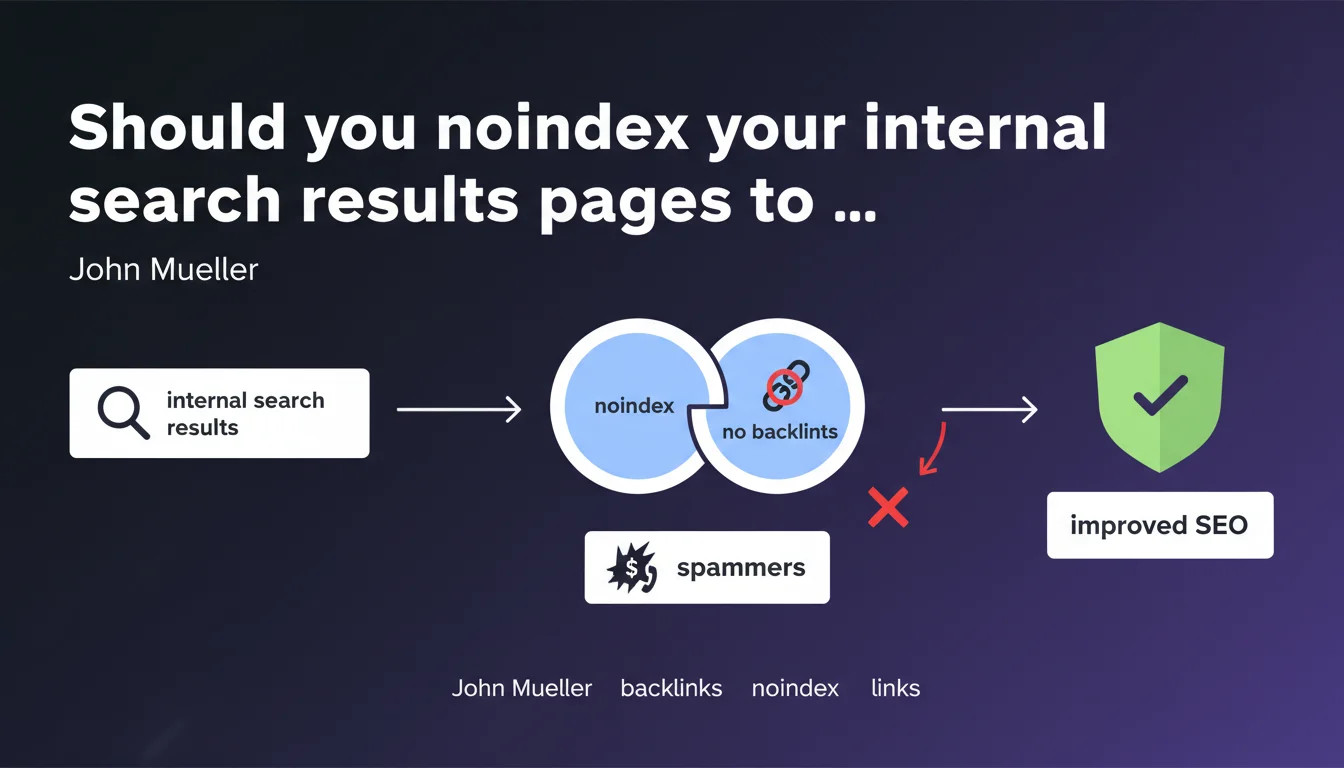

John Mueller recommends setting internal search results pages (or at least those with long query strings) to noindex, so that spam sites gain nothing from building backlinks to search URLs that contain their phone numbers or URLs. It is a simple defense against a spam technique that exploits your internal search functionality to generate indexed pages containing the spammer's contact information.

What you need to understand

Malicious sites exploit the internal search engines of third-party websites to create indexable pages containing their own contact information. The mechanics are simple: they generate search URLs on your site that include their phone number or domain, then create backlinks to these pages.

Google indexes these results pages, and there you have it — the spammer gets an indexed page on a legitimate domain, with their number visible in the SERPs. It's pure parasitism.

Why do spammers specifically target internal search results pages?

Internal search results pages are dynamic and indexable by default on many sites. They generate unique content from the query, which can seem relevant to Google if the site doesn't block their indexation.

The spammer needs no backend access — they only need to manipulate the public URL of your search form. Once the page is created and crawled, it can rank in search results for queries including the spammer's number or domain.

What does it actually mean to noindex these pages?

The noindex directive prevents Google from including these pages in its index, even if they remain crawlable. Spammers can still create backlinks, but those links no longer point to an indexed page, so their strategy falls apart.

Mueller suggests two approaches: noindex all search results pages, or target only those with long queries (which are more likely to be spam). The second approach requires server-side detection of the query length.

- Internal search pages are often indexable by default

- Spammers exploit this loophole to create parasitic content

- Noindex stops this technique without requiring crawl blocking

- Two strategies: global noindex or conditional based on query length
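The two strategies can be sketched as a single decision function. This is a minimal illustration, not Mueller's implementation: the `/search` path, the `q` parameter name, and the 40-character threshold are all assumptions to adapt to your own site and logs.

```python
from urllib.parse import urlsplit, parse_qs

SEARCH_PATH = "/search"   # assumption: where internal search lives on your site
QUERY_PARAM = "q"         # assumption: name of the search query parameter
MAX_QUERY_LENGTH = 40     # assumption: arbitrary threshold, tune it on real logs


def should_noindex(url: str, strategy: str = "all") -> bool:
    """Return True when the URL should carry a noindex directive.

    strategy="all"  -> every internal search results page is noindexed
    strategy="long" -> only search pages whose query exceeds MAX_QUERY_LENGTH
    """
    parts = urlsplit(url)
    if not parts.path.startswith(SEARCH_PATH):
        return False  # not a search results page, leave it alone
    if strategy == "all":
        return True
    if strategy == "long":
        # parse_qs decodes percent-encoding, so the length is measured
        # on the text a visitor would actually see in the page
        query = parse_qs(parts.query).get(QUERY_PARAM, [""])[0]
        return len(query) > MAX_QUERY_LENGTH
    raise ValueError(f"unknown strategy: {strategy!r}")
```

The global strategy is the simpler of the two because it needs no threshold at all. The length-based one keeps short searches indexable, at the cost of choosing a cut-off.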

SEO Expert opinion

Is this recommendation really sufficient for all sites?

For a typical e-commerce site or blog, yes — setting internal search results to noindex is a standard best practice. These pages rarely generate SEO value, they dilute crawl budget, and create duplicate content.

But be careful: some sites need their search results indexed. Classifieds aggregators, price comparison sites, and job boards rely on the indexing of filter combinations. Blindly noindexing these pages can destroy their organic visibility.

Is long query detection really reliable against spam?

Let's be honest: it's a partial solution. Spammers can easily use short queries. A bare phone number can be as short as about 10 characters, so it is hard to define a universal length threshold without false positives.

Real protection comes from server-side validation: detection of suspicious patterns (numbers, URLs), rate limiting on unique queries, or even CAPTCHA on search. Noindex addresses the symptom, not the cause. [To verify]: no public data shows whether this spam technique is truly widespread or just a marginal case Mueller encountered.
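As an illustration of the rate-limiting idea, here is a minimal in-memory sketch of a sliding-window cap on distinct queries per client. The class name, the limits, and the way a client is identified (IP address, session, and so on) are assumptions. Whether this actually slows the spam depends on who requests the spammy URLs, since they may be fetched mostly by crawlers following the backlinks.

```python
import time
from collections import defaultdict, deque
from typing import Optional


class UniqueQueryLimiter:
    """Sliding-window cap on distinct search queries per client (hypothetical helper)."""

    def __init__(self, max_unique: int = 20, window_seconds: float = 60.0):
        self.max_unique = max_unique
        self.window_seconds = window_seconds
        # client id -> deque of (timestamp, normalized query) seen in the window
        self._seen = defaultdict(deque)

    def allow(self, client_id: str, query: str, now: Optional[float] = None) -> bool:
        now = time.monotonic() if now is None else now
        history = self._seen[client_id]
        # Drop entries that have fallen out of the window
        while history and now - history[0][0] > self.window_seconds:
            history.popleft()
        normalized = query.strip().lower()
        if any(q == normalized for _, q in history):
            return True  # repeat of a recent query, does not count again
        if len(history) >= self.max_unique:
            return False  # too many distinct queries in the window
        history.append((now, normalized))
        return True
```

A request for which `allow` returns False could be answered with an HTTP 429 or a CAPTCHA. State here lives in process memory only, so a multi-server setup would need a shared store instead.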

Does Google penalize sites that are victims of this backlink spam?

Nothing in Mueller's statement suggests the host site risks a penalty. The problem is the pollution of your index and dilution of crawl budget — not a direct algorithmic risk.

The spam backlinks themselves are normally ignored by Google through link filtering. But having hundreds of indexed pages with parasitic content degrades user experience and can affect how algorithms perceive your site's quality.

Practical impact and recommendations

What exactly should you do to block this exploitation?

First step: check if your internal search pages are currently indexed. A site:yourdomain.com inurl:search query in Google (or the equivalent for your URL pattern) will give you a quick indication, although site: results are not exhaustive.

If they are, set them to noindex via the meta robots tag or the X-Robots-Tag HTTP header. Add a rule in your CMS or server to apply noindex to all search results URLs.
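For a Python site served over WSGI, the server-side rule could look like the sketch below. The `/search` prefix and the middleware name are assumptions. The header value mirrors the noindex, follow combination used for the meta tag.

```python
SEARCH_PREFIX = "/search"  # assumption: URL prefix of internal search results


class NoindexSearchMiddleware:
    """WSGI middleware that adds an X-Robots-Tag header to search result pages."""

    def __init__(self, app, search_prefix: str = SEARCH_PREFIX):
        self.app = app
        self.search_prefix = search_prefix

    def __call__(self, environ, start_response):
        is_search = environ.get("PATH_INFO", "").startswith(self.search_prefix)

        def patched_start_response(status, headers, exc_info=None):
            if is_search:
                # Replace any X-Robots-Tag set upstream so only one value is sent
                headers = [(k, v) for k, v in headers if k.lower() != "x-robots-tag"]
                headers.append(("X-Robots-Tag", "noindex, follow"))
            return start_response(status, headers, exc_info)

        return self.app(environ, patched_start_response)
```

Wrapping the application once (`app = NoindexSearchMiddleware(app)`) covers every search URL without touching templates. Sites on another stack would express the same rule in their web server or CMS configuration.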

If you want to be more selective, implement conditional logic: noindex only if the query exceeds X characters, contains suspicious patterns (regex for phone numbers, URLs), or if the user isn't authenticated.
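The pattern-based condition could be sketched as follows. Both regular expressions are deliberately loose assumptions, not a vetted spam filter: the phone pattern will also match long numeric product references, and the URL pattern only knows a handful of top-level domains. The authentication check is left out because it depends on your session handling.

```python
import re

# Assumed pattern: 7 or more digits, optionally separated by spaces, dots,
# hyphens or parentheses, with an optional leading "+"
PHONE_RE = re.compile(r"(?:\+?\d[\s().-]*){7,}")

# Assumed pattern: explicit scheme or "www.", or a bare domain on a few common TLDs
URL_RE = re.compile(
    r"(?:https?://|www\.)\S+|\b[a-z0-9-]+\.(?:com|net|org|info|biz)\b",
    re.IGNORECASE,
)


def spam_signals(query: str) -> list:
    """Return the names of the suspicious patterns found in a search query."""
    signals = []
    if PHONE_RE.search(query):
        signals.append("phone")
    if URL_RE.search(query):
        signals.append("url")
    return signals


def needs_noindex(query: str) -> bool:
    """Conditional noindex: only when the query looks like contact-info spam."""
    return bool(spam_signals(query))
```

Returning the matched signal names, rather than a bare boolean, makes it easier to log which rule fired and to review false positives before tightening the patterns.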

- Audit current indexation of your internal search pages

- Add a <meta name="robots" content="noindex, follow"> tag to these pages

- Verify that noindex is present in rendered source code (not blocked by JavaScript)

- Monitor Search Console to confirm gradual deindexation

- Implement suspicious pattern detection if you want conditional noindex

- Keep the pages crawlable (no robots.txt block), since Google has to fetch a page to see its noindex, and keep follow to avoid breaking internal linking if these pages link to indexable content
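The verification step in the checklist above can be partly automated. The sketch below, using only the Python standard library, checks an HTML string and an optional X-Robots-Tag header value for a noindex directive. The function name is hypothetical. It inspects whatever HTML you pass in, so to check the rendered page you would need to feed it the output of a headless browser rather than the raw server response.

```python
from html.parser import HTMLParser


class _RobotsMetaParser(HTMLParser):
    """Collects directives from <meta name="robots"> and <meta name="googlebot">."""

    def __init__(self):
        super().__init__()
        self.directives = []

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = {k.lower(): (v or "") for k, v in attrs}
        if attr.get("name", "").lower() in ("robots", "googlebot"):
            content = attr.get("content", "")
            self.directives += [d.strip().lower() for d in content.split(",")]


def has_noindex(html: str, x_robots_tag: str = "") -> bool:
    """Return True if the markup or the header value asks for noindex."""
    parser = _RobotsMetaParser()
    parser.feed(html)
    header_directives = [d.strip().lower() for d in x_robots_tag.split(",")]
    found = set(parser.directives) | set(header_directives)
    # "none" is shorthand for "noindex, nofollow"
    return "noindex" in found or "none" in found
```

Running this over a sample of search URLs after deployment gives a quick confirmation before waiting for Search Console to reflect the deindexation.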

Mueller's solution is pragmatic and low-risk for most sites. Noindex on internal search results protects against a form of parasitic spam without affecting your legitimate SEO, provided these pages aren't already generating organic traffic.

For complex sites with filter architectures or millions of pages, implementation may require thorough analysis. A misconfiguration could deindex entire sections. In these cases, guidance from a specialized SEO agency helps avoid mistakes and adapt the strategy to your specific editorial model.

❓ Frequently Asked Questions

Does noindex prevent Google from crawling internal search pages?

Should I also block search pages in robots.txt?

Does this backlink spam technique affect my domain authority?

How can I tell whether my site is a victim of this type of spam?

Should I delete pages that are already indexed, or just add noindex?

🎥 From the same video 29

Other SEO insights extracted from this same Google Search Central video · published on 14/01/2022
