Official statement

What you need to understand

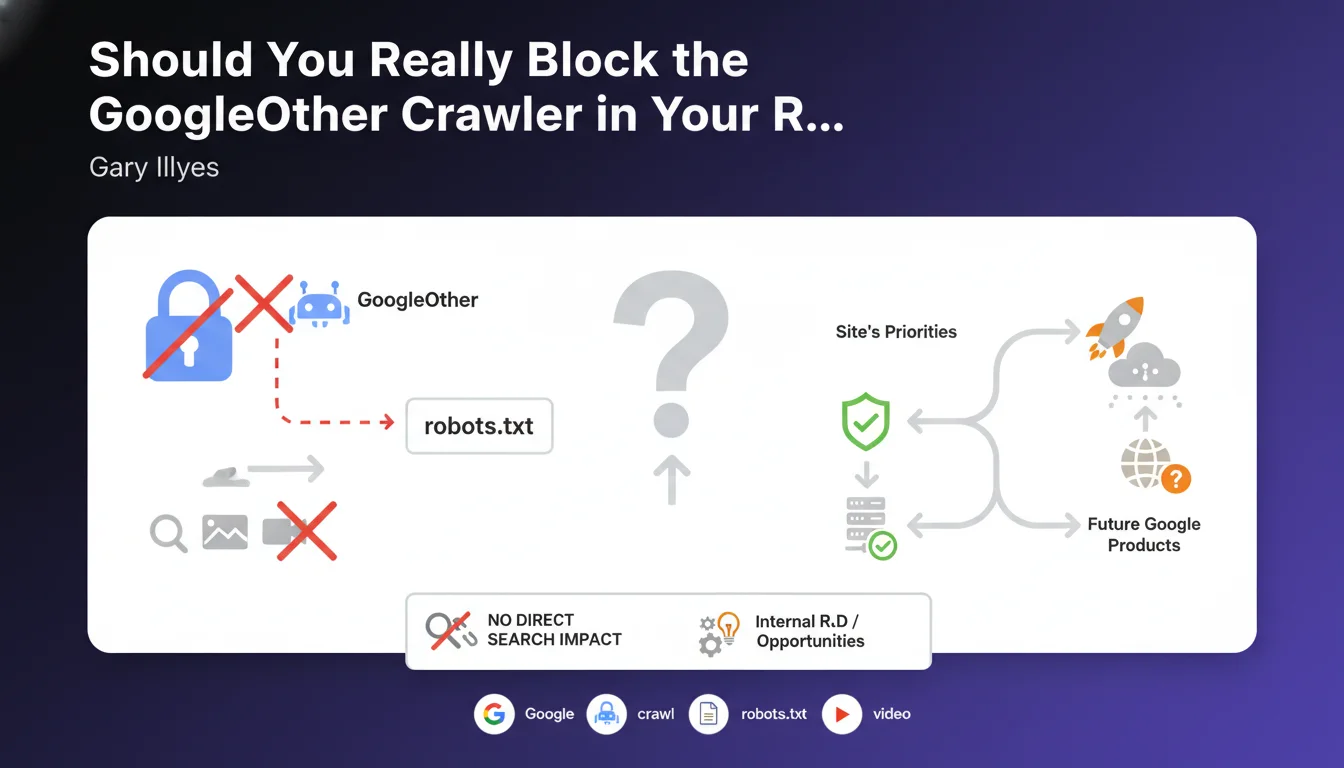

GoogleOther is a crawler separate from Googlebot that Google uses for its internal research and development projects. Unlike Googlebot, which crawls pages so they can be indexed for search results, GoogleOther collects data for experimental purposes.

This crawler comes in three variants: a general version (GoogleOther), one for images (GoogleOther-Image), and one for videos (GoogleOther-Video). None of these bots contributes directly to your site's ranking in the SERPs.

Gary Illyes clarifies that blocking GoogleOther will not affect your organic search rankings, but could deprive you of future opportunities. Google uses this data to develop new products and features that could benefit your site later on.

- GoogleOther does not participate in indexing pages in search results

- Blocking has no direct impact on your SEO positioning

- These crawlers serve Google's internal R&D

- Blocking GoogleOther may cause you to miss future opportunities related to Google innovations

- The decision to block or not depends on your priorities for server resources and data access

SEO Expert opinion

This statement is consistent with what we observe in the field. Server logs confirm that GoogleOther generates crawl activity without correlation to ranking variations. Blocking it is indeed neutral for immediate SEO.
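
A log check of this kind can be sketched in a few lines of Python. This is a minimal sketch, assuming access logs in the common "combined" format, where the user agent is the last quoted field. The sample lines, the IP addresses, and the function names `classify` and `count_crawler_hits` are illustrative and not taken from any real site. Matching on the user-agent string alone does not prove a request comes from Google, since that header can be spoofed.

```python
import re
from collections import Counter

# Checked in order: the specific GoogleOther variants must come before the
# generic "GoogleOther" token, which is a prefix of both.
CRAWLER_TOKENS = ("GoogleOther-Image", "GoogleOther-Video", "GoogleOther", "Googlebot")

# Assumption: combined log format, so the user agent is the last quoted field.
USER_AGENT_RE = re.compile(r'"([^"]*)"\s*$')


def classify(user_agent):
    """Return the first crawler token found in the user agent, else 'other'."""
    lowered = user_agent.lower()
    for token in CRAWLER_TOKENS:
        if token.lower() in lowered:
            return token
    return "other"


def count_crawler_hits(log_lines):
    """Count requests per crawler token across an iterable of log lines."""
    counts = Counter()
    for line in log_lines:
        match = USER_AGENT_RE.search(line)
        if match:
            counts[classify(match.group(1))] += 1
    return counts


# Made-up sample lines using documentation IP addresses (192.0.2.0/24).
SAMPLE_LOG = [
    '192.0.2.1 - - [10/Oct/2024:13:55:36 +0000] "GET /a HTTP/1.1" 200 512 "-" '
    '"Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '192.0.2.2 - - [10/Oct/2024:13:55:37 +0000] "GET /b HTTP/1.1" 200 512 "-" "GoogleOther"',
    '192.0.2.3 - - [10/Oct/2024:13:55:38 +0000] "GET /c.png HTTP/1.1" 200 512 "-" '
    '"GoogleOther-Image/1.0"',
    '192.0.2.4 - - [10/Oct/2024:13:55:39 +0000] "GET /d HTTP/1.1" 200 512 "-" '
    '"Mozilla/5.0 (X11; Linux x86_64) AppleWebKit/537.36 (KHTML, like Gecko) '
    'Chrome/120.0.0.0 Safari/537.36"',
]

if __name__ == "__main__":
    for crawler, hits in count_crawler_hits(SAMPLE_LOG).most_common():
        print(crawler, hits)
```

Run against a real log file, the same counts can be compared over time with ranking data or server load to see how much of the crawl activity comes from GoogleOther rather than Googlebot.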

However, the recommendation not to block deserves some nuance. For very high-volume sites or those with limited server resources, GoogleOther can represent a significant additional load. Similarly, sites in certain sensitive sectors may legitimately want to limit Google's access to their data.

The real question is one of trust in Google and your strategy regarding the Google ecosystem. If you're betting on a strong presence in this environment, allowing GoogleOther to crawl is probably wise.

Practical impact and recommendations

- Check your server logs to identify the frequency and impact of GoogleOther crawl on your resources

- Keep GoogleOther authorized by default in your robots.txt if you don't have particular constraints

- Block GoogleOther only if you have significant server limitations, legitimate privacy concerns, or a deliberate strategy to limit data access

- Document your decision in an internal note for future reference when new Google products launch

- Monitor Google announcements regarding new features that might require GoogleOther

- Test the impact on server load before making a final decision on high-traffic sites

- Set up specific monitoring to distinguish GoogleOther from Googlebot in your crawl analyses

- Reassess your GoogleOther policy every 6 months in light of new developments
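
The robots.txt side of these recommendations can be sketched and checked locally. GoogleOther is allowed whenever no rule mentions it, so only the blocking case needs an explicit group. This is a sketch, assuming the robots.txt tokens `GoogleOther`, `GoogleOther-Image`, and `GoogleOther-Video`; the `example.com` URL and the helper `can_crawl` are hypothetical. Python's `urllib.robotparser` only approximates how Google matches rules, so treat the result as a sanity check rather than a guarantee.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical policy: block all three GoogleOther variants, allow everyone else.
ROBOTS_TXT = """\
User-agent: GoogleOther
User-agent: GoogleOther-Image
User-agent: GoogleOther-Video
Disallow: /

User-agent: *
Allow: /
"""


def can_crawl(robots_txt, user_agent, url):
    """Return True if the given robots.txt text lets user_agent fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    # example.com is a placeholder domain, not a real site under test.
    for agent in ("Googlebot", "GoogleOther", "GoogleOther-Image", "GoogleOther-Video"):
        print(agent, can_crawl(ROBOTS_TXT, agent, "https://example.com/page"))
```

Keeping the GoogleOther rules in their own group leaves Googlebot untouched, which matches the point that blocking GoogleOther should not affect how pages are crawled for search.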

The fine-grained management of different Google crawlers and crawl budget optimization are complex technical issues that require in-depth expertise. If you want to implement an optimized crawl strategy tailored to your infrastructure and business objectives, support from a specialized SEO agency can prove valuable in making the right decisions and avoiding costly mistakes.
