Official statement

What you need to understand

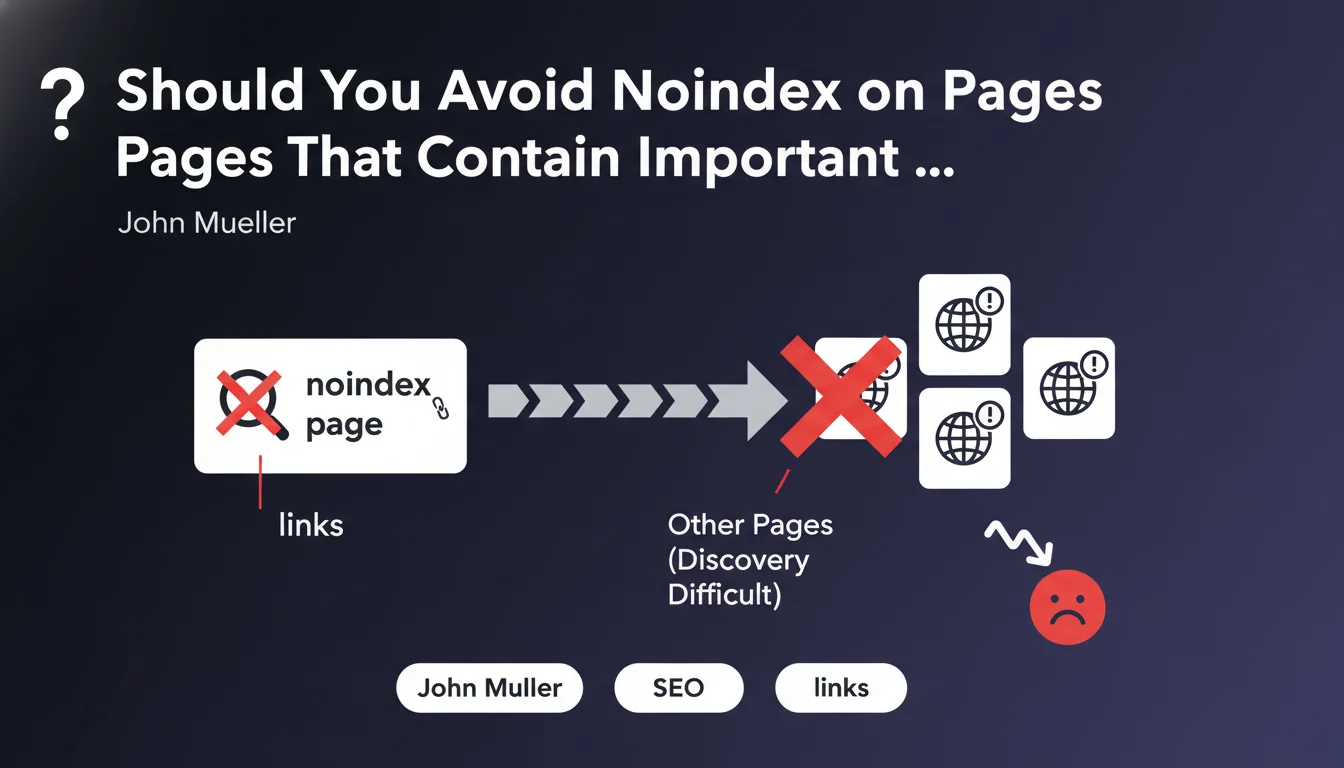

This statement from Google clarifies how its crawler treats pages kept out of the index. When a page is excluded from the index (via noindex) or blocked from crawling (via robots.txt), Google considers its content, including the links it contains, to be irrelevant.

In practice, Google will not follow the links on these pages to discover or evaluate other content. This applies to internal and external links alike and can leave entire sections of your site orphaned.

The editorial comment adds an important technical point: combining noindex with follow does not work. Even if you tell Google not to index a page but to follow its links, the search engine ignores this contradictory combination and does not follow the links.
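To audit pages for the combination described above, the sketch below reads directives from a page's `<meta name="robots">` (or `googlebot`) tag and an optional `X-Robots-Tag` header value, then applies the article's rule that a noindex page's links are not followed. It uses only Python's standard `html.parser`. The helper names are hypothetical, and the sketch assumes an `X-Robots-Tag` value without a user-agent prefix (such as `googlebot: noindex`).

```python
from html.parser import HTMLParser


def split_directives(value):
    """Turn 'noindex, follow' into {'noindex', 'follow'}."""
    return {token.strip().lower() for token in value.split(",") if token.strip()}


class RobotsMetaParser(HTMLParser):
    """Collects directives from <meta name="robots"> and <meta name="googlebot"> tags."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        # html.parser already lowercases tag and attribute names; values may be None.
        attr_map = {name: (value or "") for name, value in attrs}
        if attr_map.get("name", "").lower() in ("robots", "googlebot"):
            self.directives.update(split_directives(attr_map.get("content", "")))


def robots_directives(html, x_robots_tag=""):
    """Merge meta robots directives with an optional X-Robots-Tag header value."""
    parser = RobotsMetaParser()
    parser.feed(html)
    parser.close()
    return parser.directives | split_directives(x_robots_tag)


def links_expected_to_be_followed(directives):
    """Article's model: links on a noindex page are ignored, even alongside 'follow'."""
    if directives & {"noindex", "none"}:  # 'none' means noindex, nofollow
        return False
    return "nofollow" not in directives


page = '<head><meta name="robots" content="noindex, follow"></head>'
directives = robots_directives(page)
print(sorted(directives))                         # ['follow', 'noindex']
print(links_expected_to_be_followed(directives))  # False
```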

- Noindex pages do not pass link equity to the pages they point to

- Link crawling is stopped on deindexed pages, regardless of the follow directive

- Pages only linked from noindex pages risk never being discovered

- This rule applies uniformly to both internal and external links
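The discovery risk in the list above can be illustrated with a small model. The sketch below runs a breadth-first crawl over a hypothetical internal link graph and, following the article's rule, records noindex pages as found but never expands their links; any URL left undiscovered is orphaned under that model. The site structure and function name are illustrative assumptions, and a real crawler also uses sitemaps and backlinks as entry points.

```python
from collections import deque


def discoverable_pages(link_graph, noindex_pages, seeds):
    """Breadth-first crawl that never expands links found on noindex pages.

    link_graph: dict mapping each URL to the URLs it links to (hypothetical data).
    noindex_pages: set of URLs carrying a noindex directive.
    seeds: URLs the crawler already knows (e.g. the homepage).
    """
    discovered = set(seeds)
    queue = deque(seeds)
    while queue:
        url = queue.popleft()
        if url in noindex_pages:
            continue  # the page itself is found, but its outgoing links are ignored
        for target in link_graph.get(url, []):
            if target not in discovered:
                discovered.add(target)
                queue.append(target)
    return discovered


# Hypothetical site: /archive is noindex and is the only page linking to /old-post.
site = {
    "/": ["/blog", "/archive"],
    "/blog": ["/blog/post-1"],
    "/archive": ["/old-post"],
    "/blog/post-1": [],
    "/old-post": [],
}
found = discoverable_pages(site, {"/archive"}, ["/"])
print(sorted(set(site) - found))  # ['/old-post']
```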

SEO Expert opinion

Google's position matches what we have observed in the field for several years. Many sites have seen crawling and indexation of entire sections drop after setting hub or navigation pages to noindex.

One nuance concerns the blocking method. If you block crawling with robots.txt, Google never fetches the page, so it never sees its links. With noindex, Google crawls the page but ignores its content, links included. Either way, the outcome for links is the same.
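The difference between the two mechanisms can be checked with Python's standard `urllib.robotparser`. The sketch below parses a sample robots.txt held in memory instead of fetching it, then labels a URL according to the article's description. The robots.txt rules, the URLs, and the set of noindex pages are hypothetical inputs.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; a real audit would fetch the site's own file.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
"""


def crawl_status(url, robots_lines, noindex_pages, user_agent="Googlebot"):
    """Classify a URL as the article describes: blocked, noindex, or followed."""
    parser = RobotFileParser()
    parser.parse(robots_lines)  # parse in-memory lines instead of calling read()
    if not parser.can_fetch(user_agent, url):
        return "blocked by robots.txt: page and links never seen"
    if url in noindex_pages:
        return "crawled but noindex: links ignored"
    return "crawled and indexable: links followed"


lines = ROBOTS_TXT.splitlines()
noindex = {"https://example.com/tags/seo"}
for url in ("https://example.com/private/report",
            "https://example.com/tags/seo",
            "https://example.com/blog/"):
    print(url, "->", crawl_status(url, lines, noindex))
```

The robots.txt check runs first on purpose: a URL blocked by robots.txt is reported as blocked even if it also carries noindex, because Google cannot fetch the page to read that tag.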

There are exceptions, however: URLs that Google discovers through XML sitemaps, Search Console submissions, or external backlinks can still be crawled despite this problem. Do not rely on these alternative discovery paths as your primary strategy.
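One way to give Google an alternative entry point to deep pages is to list them in an XML sitemap. The sketch below builds a minimal sitemap in the sitemaps.org format with Python's standard `xml.etree.ElementTree`. The URLs are hypothetical, and optional fields such as `<lastmod>` are left out.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(urls):
    """Return a minimal sitemaps.org XML document listing the given absolute URLs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url in sorted(urls):
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url  # ElementTree escapes '&' as '&amp;'
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


# Hypothetical deep pages currently reachable only through noindex pages.
sitemap = build_sitemap({"https://example.com/old-post", "https://example.com/product?id=7&ref=a"})
print(sitemap)
```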

Practical impact and recommendations

- Audit all your noindex pages to identify those containing links to important content

- Remove noindex from hub pages (pagination, filters, categories) that serve as bridges to strategic content

- Create alternative crawl paths from indexed pages to your currently orphaned deep content

- Use JavaScript obfuscation or URL parameters, rather than noindex, for navigation links you don't want followed

- Favor canonical tags over noindex to manage duplicate content while preserving link crawling

- Verify in Search Console that your strategic pages are being discovered and crawled regularly

- Document your internal linking to identify critical dependencies before any indexation changes

- Test the impact on a sample of pages before rolling out large-scale changes to your noindex strategy
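The audit and documentation steps above can start from a simple inbound-link count. Given a crawl export, modeled here as a hypothetical dict of URL to outgoing links plus a set of noindex URLs, the sketch below flags strategic URLs that receive no link from any indexable page and lists the noindex pages they currently depend on (an empty list means no internal links at all). The function name and data are illustrative, and the check looks only one hop back, so it does not verify that the linking pages are themselves reachable.

```python
from collections import defaultdict


def noindex_dependencies(link_graph, noindex_pages, strategic_pages):
    """Map each at-risk strategic URL to the sorted noindex pages linking to it."""
    inbound = defaultdict(set)
    for source, targets in link_graph.items():
        for target in targets:
            if target != source:  # ignore self-links
                inbound[target].add(source)

    at_risk = {}
    for page in strategic_pages:
        sources = inbound.get(page, set())
        if not sources - noindex_pages:  # no indexable page links here
            at_risk[page] = sorted(sources)
    return at_risk


# Hypothetical crawl export: filter pages are noindex.
site = {
    "/": ["/category"],
    "/category": ["/product-a"],
    "/filters?color=red": ["/product-b"],
    "/product-a": [],
    "/product-b": [],
}
report = noindex_dependencies(site, {"/filters?color=red"}, ["/product-a", "/product-b", "/product-c"])
print(report)  # {'/product-b': ['/filters?color=red'], '/product-c': []}
```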

Managing crawl and indexation well requires a thorough understanding of your technical architecture and its effect on organic visibility. These optimizations go to the core of SEO performance and call for a methodical, experienced approach. For complex or high-stakes sites, a specialized SEO agency can help you map crawl paths accurately, identify blocking points, and implement a coherent indexation strategy that maximizes the discoverability of your content while keeping your index clean.
