Official statement

Other statements from this video 43 ▾

- □ Pourquoi Googlebot s'arrête-t-il à 15 Mo par URL et comment cela impacte-t-il votre crawl ?

- □ Google mesure-t-il vraiment le poids de page comme vous le pensez ?

- □ Le poids des pages mobiles a triplé en 10 ans : faut-il s'inquiéter pour le SEO ?

- □ Les données structurées alourdissent-elles trop vos pages pour être rentables en SEO ?

- □ Votre site mobile contient-il autant de contenu que votre version desktop ?

- □ Pourquoi votre contenu desktop disparaît-il des résultats Google s'il manque sur mobile ?

- □ La vitesse de page impacte-t-elle réellement les conversions selon Google ?

- □ Google traite-t-il vraiment 40 milliards d'URLs de spam par jour ?

- □ La compression réseau améliore-t-elle réellement le crawl budget de votre site ?

- □ Le lazy loading est-il vraiment indispensable pour optimiser le poids initial de vos pages ?

- □ Googlebot s'arrête-t-il vraiment après 15 Mo par URL ?

- □ Pourquoi le poids des pages mobiles a-t-il triplé en une décennie ?

- □ Le poids des pages impacte-t-il vraiment l'expérience utilisateur et le SEO ?

- □ Les données structurées alourdissent-elles vraiment vos pages HTML ?

- □ Pourquoi la parité mobile-desktop reste-t-elle un facteur de déclassement majeur ?

- □ Faut-il encore se préoccuper du poids des pages pour le SEO ?

- □ La taille des ressources est-elle le facteur déterminant de la vitesse de votre site ?

- □ Pourquoi Google impose-t-il une limite stricte de 1 Mo pour les images ?

- □ L'optimisation de la taille des pages profite-t-elle vraiment plus aux utilisateurs qu'au SEO ?

- □ Googlebot limite-t-il vraiment le crawl à 15 Mo par URL ?

- □ Le poids des pages web explose : faut-il s'inquiéter pour son SEO ?

- □ La taille des pages web nuit-elle encore vraiment à votre SEO ?

- □ Les structured data alourdissent-elles vos pages au point de nuire au SEO ?

- □ La vitesse de chargement influence-t-elle vraiment les conversions de vos pages ?

- □ La compression réseau suffit-elle à optimiser l'espace de stockage des utilisateurs ?

- □ Pourquoi la disparité mobile/desktop tue-t-elle votre référencement en indexation mobile-first ?

- □ Le lazy loading est-il vraiment un levier de performance SEO à activer systématiquement ?

- □ Google bloque 40 milliards d'URLs de spam par jour : comment votre site échappe-t-il au filtre ?

- □ L'optimisation des images peut-elle vraiment diviser par 10 le poids de vos pages ?

- □ Googlebot s'arrête-t-il vraiment à 15 Mo par URL ?

- □ Pourquoi la parité mobile-desktop impacte-t-elle autant votre classement en Mobile-First Indexing ?

- □ Le poids de vos pages freine-t-il vraiment votre référencement ?

- □ Les données structurées ralentissent-elles vraiment votre crawl ?

- □ Google intercepte vraiment 40 milliards d'URLs de spam par jour ?

- □ Googlebot s'arrête-t-il vraiment à 15 Mo par URL crawlée ?

- □ La vitesse d'un site impacte-t-elle vraiment la conversion ?

- □ Pourquoi la disparité mobile-desktop ruine-t-elle encore tant de classements SEO ?

- □ Les données structurées alourdissent-elles vraiment vos pages HTML ?

- □ Pourquoi la taille des pages reste-t-elle un facteur SEO critique malgré l'amélioration des connexions Internet ?

- □ La compression réseau suffit-elle à optimiser le crawl de votre site ?

- □ Le lazy loading peut-il vraiment booster vos performances sans impacter le crawl ?

- □ La taille d'un site web a-t-elle vraiment un impact sur son référencement ?

- □ Pourquoi Google limite-t-il la taille des images à 1Mo sur sa documentation développeur ?

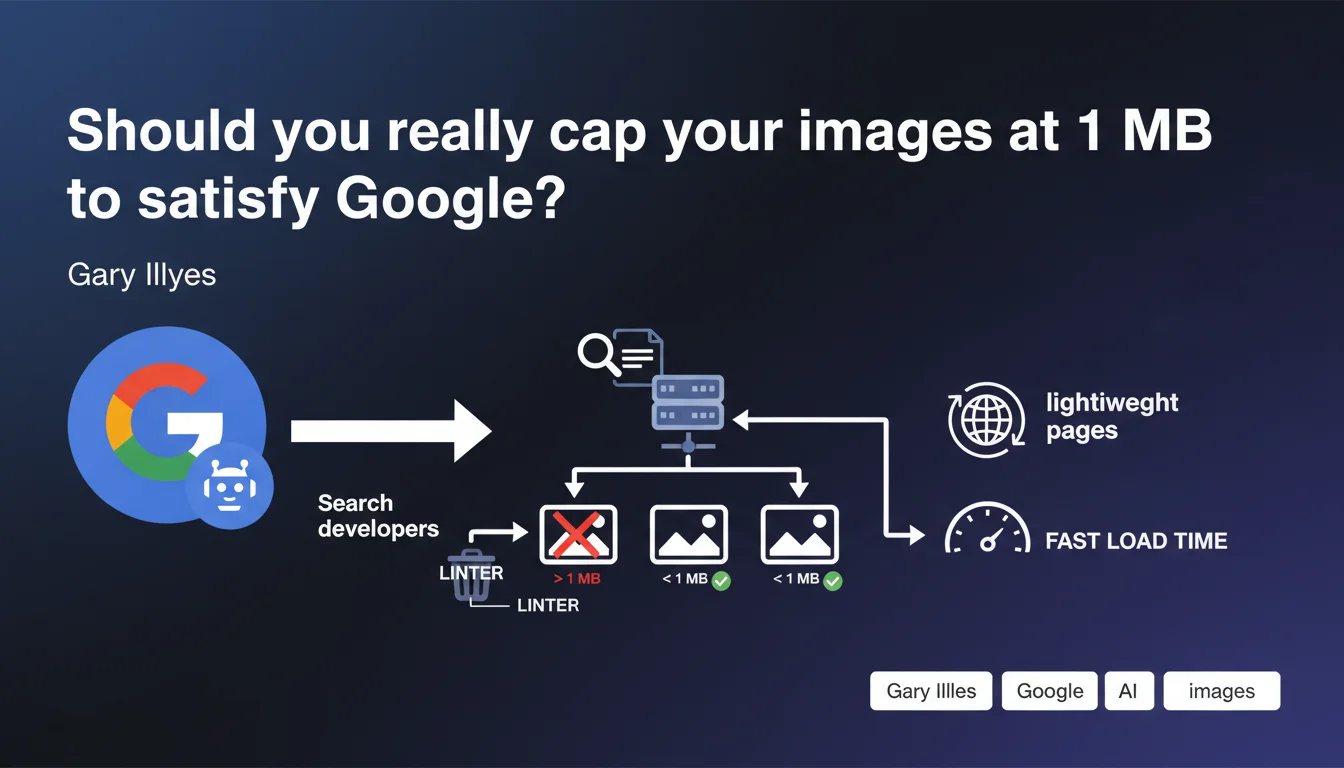

Google enforces a strict 1 MB limit on images in its Search documentation through an internal linter. This technical constraint reveals a clear priority: keeping pages ultra-lightweight. For SEO practitioners, it's a strong signal of how seriously Google takes page weight in practice, beyond the official discourse on Core Web Vitals.

What you need to understand

Why does Google enforce this limit internally?

The answer comes down to one word: performance. By constraining its own teams with a linter that blocks any image submission exceeding 1 MB, Google applies to itself the standards it advocates for the web. A linter is a tool that automatically checks a submission against a set of rules before it is accepted, so an oversized image is rejected before it can ever be published.

This technical constraint is far from trivial. It forces Google developers to systematically optimize every visual before publication. No compromises, no exceptions for "just this once".
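Google's internal linter isn't public, so the following is only a minimal sketch of how a check of this kind could work on your own repository. The function names, the extension list, and the reading of "1 MB" as 1,000,000 bytes are all assumptions made for illustration.

```python
from pathlib import Path

MAX_BYTES = 1_000_000  # assumption: "1 MB" interpreted as 10^6 bytes
# Assumption: which extensions count as images in your project.
IMAGE_EXTENSIONS = {".png", ".jpg", ".jpeg", ".gif", ".webp", ".avif"}


def find_oversized_images(root, max_bytes=MAX_BYTES):
    """Return (path, size_in_bytes) for every image under root above max_bytes."""
    oversized = []
    for path in sorted(Path(root).rglob("*")):
        if path.is_file() and path.suffix.lower() in IMAGE_EXTENSIONS:
            size = path.stat().st_size
            if size > max_bytes:
                oversized.append((path, size))
    return oversized


def lint_exit_code(root):
    """Print each offender and return 1 if any was found, 0 otherwise."""
    offenders = find_oversized_images(root)
    for path, size in offenders:
        print(f"{path}: {size / 1_000_000:.2f} MB exceeds the limit")
    return 1 if offenders else 0
```

Wired into a pre-commit hook or a CI step, a non-zero return value from `lint_exit_code` would be enough to block the submission, which is the behavior the statement describes.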

Does this rule apply to external websites?

No, it's not an official ranking criterion. Google won't penalize your site because an image is 1.2 MB. But — and this is where it gets interesting — why would Google impose this limit on itself if it had no impact on perceived performance?

The obvious answer: because it does. Google knows that oversized images degrade user experience, increase load time, and unnecessarily consume bandwidth. If Google sets this bar for its own docs, it's because it corresponds to a relevant technical threshold.

What does this reveal about Google's real priorities?

Actions speak louder than words. Google can communicate all it wants about the importance of performance, but enforcing a linter internally shows it's an operational priority, not just marketing talk.

Gary Illyes' statement is invaluable because it lifts the veil on an internal practice rarely documented. It also confirms that Google clearly distinguishes between technical documentation and public-facing sites — but the underlying principles remain valid everywhere.

- Google uses an automatic linter that blocks images > 1 MB on its Search docs

- This limit explicitly aims to maintain lightweight pages

- It's an internal technical constraint, not an official ranking criterion

- The 1 MB threshold is a practical indicator of what Google considers "reasonable"

- This internal practice reveals Google's real priorities beyond public discourse

SEO Expert opinion

Is this 1 MB threshold really relevant for all websites?

Let's be honest: 1 MB is very tight. For a high-resolution product photo, quality editorial visual, or detailed infographic, staying under this limit requires advanced optimization. Google can pull it off because its Search documentation primarily uses screenshots, simple diagrams, and icons — not high-end marketing visuals.

For an e-commerce site that needs to display products from every angle, or a media outlet that relies on visual quality, applying this limit without nuance would be counterproductive. An image compressed excessively loses sharpness, and it shows — especially on Retina screens and modern smartphones.

The real metric is the quality-to-weight ratio. An 800 KB image perfectly optimized in WebP with lazy loading beats a 400 KB pixelated image that harms user experience. Google knows this very well.

Does this limit apply to all images on a page?

Gary Illyes specifically mentions images on documentation intended for Search developers. He doesn't mention decorative images, hero images, or above-the-fold visuals that might justify greater weight if the visual impact warrants it.

In field observations, heavier images don't seem to cause problems as long as Largest Contentful Paint (LCP) stays under control. It's the perceived load time that matters, not the raw weight of an isolated image. Note that Google has never publicly confirmed an image weight threshold as a direct ranking factor.

Should you really be alarmed by this statement?

No. This statement isn't a directive; it's a window into Google's internal practices. It confirms what we already know: Google values performance, and its teams apply strict standards internally.

But, and this is crucial, Google doesn't force a linter on you. You retain control over the quality-to-weight tradeoff, as long as you respect Core Web Vitals thresholds. If your LCP is good, your CLS stable, and your INP acceptable (INP replaced FID as a Core Web Vital in March 2024), nobody at Google will penalize a 1.3 MB image.

Practical impact and recommendations

What should you actually do with this information?

Use this 1 MB limit as a reference benchmark, not an absolute rule. If 80% of your images stay under this threshold, you're probably in a healthy zone. If the majority significantly exceeds it, there's an optimization problem to investigate.
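To make the 80% benchmark concrete, here is a small sketch that computes the share of images at or under the threshold. The sizes in the example are made up, and `share_under_threshold` is a hypothetical helper name.

```python
def share_under_threshold(sizes_in_bytes, threshold=1_000_000):
    """Return the fraction (0.0 to 1.0) of images at or below the threshold."""
    sizes = list(sizes_in_bytes)
    if not sizes:
        return 0.0
    under = sum(1 for size in sizes if size <= threshold)
    return under / len(sizes)


# Made-up sample: three images under 1 MB, two above.
sample_sizes = [120_000, 450_000, 980_000, 1_400_000, 2_300_000]
share = share_under_threshold(sample_sizes)
print(f"{share:.0%} of images are at or under the threshold")
```

With this sample, 3 of 5 images pass, so the share is 60%, which would fall short of the 80% rule of thumb and justify a closer look.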

Focus on images critical for LCP: those appearing above the fold that directly impact perceived load time. A well-optimized 1.5 MB hero image served in WebP via a CDN can load faster than an 800 KB JPEG delivered by a slow, poorly configured server.

What matters is measuring actual impact rather than blindly chasing a magic number. PageSpeed Insights, Lighthouse, and Core Web Vitals give you objective metrics — use them.

What tools should you use to optimize without degrading quality?

Modern formats like WebP and AVIF offer better compression-to-quality ratios than classic JPEG. A lossy WebP image typically weighs around 25 to 35% less than a JPEG of comparable quality, and AVIF often goes further, though the exact savings depend on the image content and the encoder settings.

For tooling: Squoosh (Google's tool), ImageOptim, TinyPNG, or server-side solutions like imgproxy and ImageKit for automatic on-the-fly optimization. If you use WordPress, plugins like ShortPixel or Imagify do the job — but always verify the final output.

Native lazy loading (loading="lazy" attribute) is now supported by all modern browsers. Enable it on all below-the-fold images to avoid unnecessarily loading visuals the user might never see.
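To check where the attribute is missing, a rough sketch using only Python's standard library can list every `<img>` that doesn't carry loading="lazy". The class and function names are hypothetical, and the script only sees the HTML you pass in, not images injected by JavaScript.

```python
from html.parser import HTMLParser


class ImgLoadingAudit(HTMLParser):
    """Collect the src of every <img> tag that lacks loading="lazy"."""

    def __init__(self):
        super().__init__()
        self.not_lazy = []

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attributes = dict(attrs)
        # A missing attribute or any value other than "lazy" counts as not lazy.
        if (attributes.get("loading") or "").lower() != "lazy":
            self.not_lazy.append(attributes.get("src"))


def images_without_lazy_loading(html):
    """Return the src values of images that are not lazy-loaded."""
    parser = ImgLoadingAudit()
    parser.feed(html)
    return parser.not_lazy


page = (
    '<img src="hero.webp">'
    '<img src="gallery-1.webp" loading="lazy">'
    '<img src="gallery-2.webp">'
)
print(images_without_lazy_loading(page))
```

The result still needs a human review: above-the-fold images such as the hero should stay in this list on purpose, because lazy-loading the LCP image delays it.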

How do you audit your existing images?

A Screaming Frog or Oncrawl crawl gives you an overview of your average image weight. Sort by descending weight and identify the biggest files — these are your potential quick wins.
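If you export the crawl to CSV, sorting by weight takes a few lines. This is a sketch under assumptions: the column names `url` and `size_bytes` are placeholders, so adapt them to whatever headers your crawler actually writes.

```python
import csv
import io


def heaviest_images(csv_text, top_n=10, url_column="url", size_column="size_bytes"):
    """Return the top_n (url, size) pairs sorted by descending size."""
    rows = csv.DictReader(io.StringIO(csv_text))
    images = [(row[url_column], int(row[size_column])) for row in rows]
    images.sort(key=lambda item: item[1], reverse=True)
    return images[:top_n]


# Made-up export with hypothetical column names.
export = "url,size_bytes\n/a.jpg,500000\n/b.png,2400000\n/c.webp,90000\n"
for url, size in heaviest_images(export, top_n=2):
    print(f"{size / 1_000_000:.2f} MB  {url}")
```

Reading from a file instead of a string only requires passing `open(path, newline="").read()` as `csv_text`.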

Also check the format in use. If you're still serving PNG for photos (instead of reserving PNG for logos and icons), you're wasting bandwidth for nothing. PNG is lossless but much heavier than JPEG or WebP for complex images.
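File extensions can lie, so one way to check the real format is to read the first bytes of each file. The sketch below relies on the well-known file signatures for PNG, JPEG, GIF, WebP, and AVIF. The 200 KB cutoff used to flag "suspiciously large" PNGs is an arbitrary assumption, and a flagged file is only a candidate for review, not proof that it is a photo.

```python
def sniff_image_format(header):
    """Guess the image format from the first 12 bytes of a file."""
    if header.startswith(b"\x89PNG\r\n\x1a\n"):
        return "png"
    if header.startswith(b"\xff\xd8\xff"):
        return "jpeg"
    if header[:6] in (b"GIF87a", b"GIF89a"):
        return "gif"
    # WebP is a RIFF container with "WEBP" at byte offset 8.
    if header[:4] == b"RIFF" and header[8:12] == b"WEBP":
        return "webp"
    # AVIF is an ISO BMFF file: "ftyp" at offset 4, then the brand.
    if header[4:8] == b"ftyp" and header[8:12] in (b"avif", b"avis"):
        return "avif"
    return "unknown"


def is_large_png(header, size_bytes, threshold=200_000):
    """Flag PNG files above an (assumed) size that deserve a manual look."""
    return sniff_image_format(header) == "png" and size_bytes > threshold
```

In practice you would read the header with `open(path, "rb").read(12)` and take the size from the file system or from your crawl export.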

Test under real conditions with 3G network throttling in Chrome DevTools. If your images take several seconds to load on mobile, you have a problem — regardless of their theoretical weight.
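Before opening DevTools, a back-of-the-envelope calculation already shows why weight matters on slow networks. The bandwidth figures below are illustrative assumptions, not the exact values of any DevTools preset, and the formula ignores connection setup, parallel requests, and caching.

```python
def transfer_seconds(size_bytes, bandwidth_bits_per_second, latency_seconds=0.0):
    """Rough transfer time: latency plus payload bits divided by bandwidth."""
    return latency_seconds + (size_bytes * 8) / bandwidth_bits_per_second


# Assumed throughputs for a faster and a slower 3G-like connection.
for label, bandwidth in [("1.6 Mbit/s", 1_600_000), ("400 kbit/s", 400_000)]:
    seconds = transfer_seconds(1_000_000, bandwidth)
    print(f"1 MB image at {label}: {seconds:.1f} s")
```

Under these assumptions, a single 1 MB image takes 5 seconds at 1.6 Mbit/s and 20 seconds at 400 kbit/s, before anything else on the page is downloaded.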

- Audit your current images: identify those significantly exceeding 1 MB

- Prioritize optimization of above-the-fold images and those critical for LCP

- Migrate to WebP or AVIF to reduce weight without visible quality loss

- Enable native lazy loading on below-the-fold images

- Serve images via a CDN to reduce network latency

- Use responsive images (srcset) to adapt resolution to device

- Measure real impact with PageSpeed Insights and Core Web Vitals

- Document your internal thresholds (e.g., "product images < 800 KB, hero < 1.2 MB") to guide your teams
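The last item, documented internal thresholds, can also live in code so that it gets checked automatically. The sketch below reuses the example budgets from the list (product images under 800 KB, hero under 1.2 MB). The role names, the 1 MB default, and the `over_budget` helper are assumptions for illustration.

```python
# Budgets in bytes, taken from the example thresholds above.
BUDGETS = {"product": 800_000, "hero": 1_200_000}
DEFAULT_BUDGET = 1_000_000  # assumption: fall back to the 1 MB benchmark


def over_budget(images, budgets=BUDGETS, default=DEFAULT_BUDGET):
    """Return (name, role, size, budget) for each image above its role's budget.

    images is an iterable of (name, role, size_in_bytes) tuples.
    """
    return [
        (name, role, size, budgets.get(role, default))
        for name, role, size in images
        if size > budgets.get(role, default)
    ]


# Made-up inventory for illustration.
inventory = [
    ("shoe-front.webp", "product", 640_000),
    ("shoe-side.jpg", "product", 950_000),
    ("home-hero.avif", "hero", 1_100_000),
    ("team-photo.jpg", "editorial", 1_300_000),
]
for name, role, size, budget in over_budget(inventory):
    print(f"{name} ({role}): {size} bytes, budget {budget}")
```

Keeping the budgets in a single dictionary means a threshold change is one edit that every check picks up.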


Other SEO insights extracted from this same Google Search Central video · published on 30/03/2026
