Official statement

Other statements from this video (22)

- Why doesn't Google Search Console's average position reflect a theoretical ranking but actual display results instead?

- Can you really afford to wait for an unstable ranking to stabilize on its own?

- Does boosting your SEO really require producing more content?

- Does the location of your XML sitemap really affect crawl efficiency?

- Should you really use the URL inspection tool to index a brand new website?

- How long does it really take to see your new backlinks in Google Search Console?

- Why do Search Console and Analytics data never really match up?

- Is Google Search Console really collecting all the data from your massive e-commerce site?

- Should you really prefer noindex over disallow to control indexation in Google?

- Can out-of-stock product pages really trigger soft 404 errors in Google's eyes?

- Do Google's testing tools really crawl in real-time or do they rely on cached data?

- Does Google really use different ranking algorithms depending on your industry?

- Why does Google deprioritize crawling low-effort aggregator sites?

- Does Google really count clicks on rich results the same way as organic clicks?

- Does the order of links in your HTML code really affect Google's crawl priority?

- Why does robots.txt prevent Google from crawling your pages but still allow them to be indexed?

- Are out-of-stock products hurting your e-commerce site's overall search rankings?

- Does partial duplicate content really hurt your search rankings?

- Does Google really ignore your canonical tags when it decides pages are too similar?

- Does Google really use just one signal to choose which URL to canonicalize among your duplicate content?

- Do brand mentions without backlinks actually help your SEO rankings?

- Why does a link without an indexed URL essentially do nothing for your SEO?

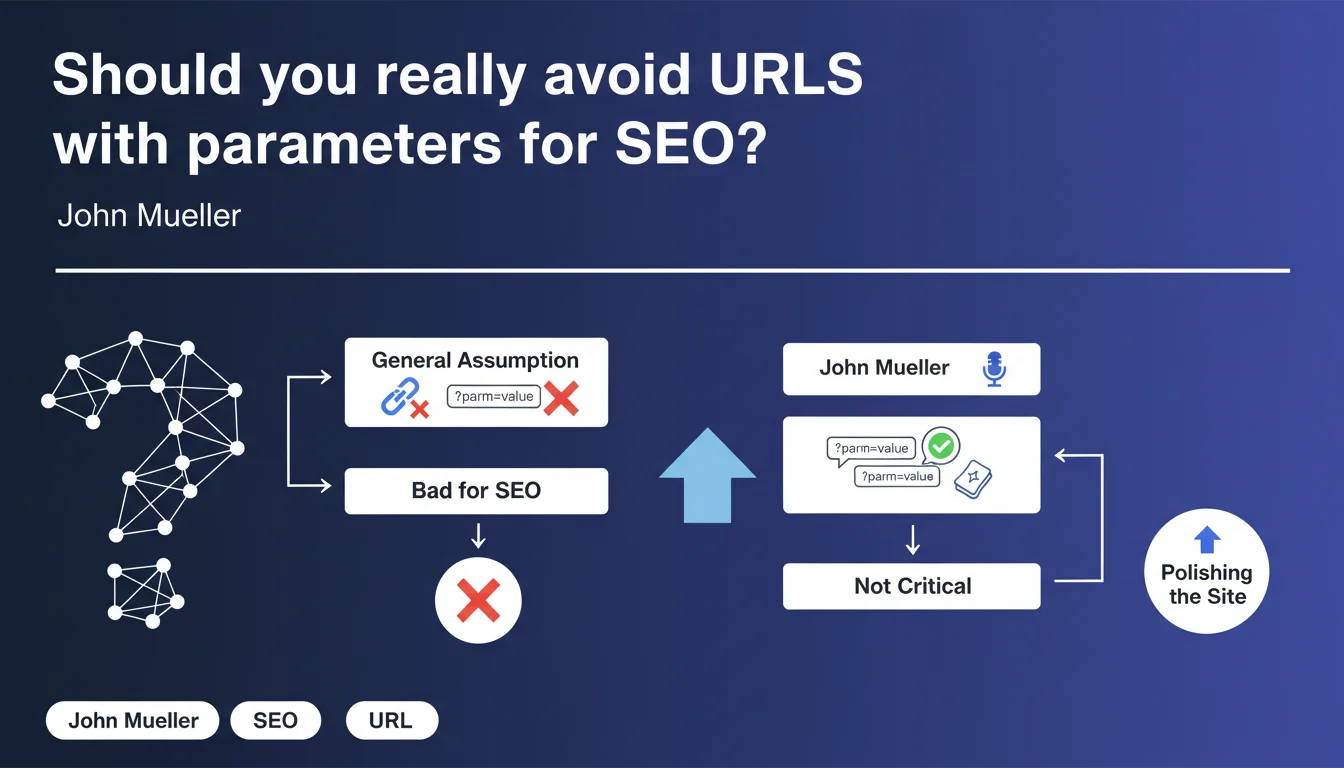

URLs with parameters are not inherently bad for SEO. Google handles them well, and optimizing them is more about polish than critical technical work. The real impact depends on context and implementation: don't spend time on this while more pressing SEO priorities are waiting.

What you need to understand

Why does this misconception persist in the SEO community?

The distrust of URLs with parameters dates back to when search engines struggled to interpret them correctly. Infinite indexation loops, duplicate content generated by poorly configured facets, crawl budget issues — all of this left a mark on the industry's collective memory.

But Google has evolved. The engine now handles these URLs without breaking a sweat in the vast majority of cases. The real problem isn't the parameter itself, but how it's used.

What does Mueller mean by "polishing the site"?

He's placing this optimization in the category of marginal improvements. Not a blocking factor, not a penalty lurking around the corner. Just a detail that can make the site slightly cleaner.

Concretely, this means that if your site generates millions of URL variations through sorting or filtering parameters, you'd better control them. But if you have a few URLs with ?id=123 that are performing well in Search Console — move on, you have better things to do.
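To see how quickly sorting and filtering parameters multiply, here is a back-of-the-envelope sketch. It assumes single-select facets (each facet is either unset or set to exactly one value) and that every sort order can be combined with every filter combination; the facet sizes in the example are hypothetical, not taken from any real site.

```python
from math import prod


def facet_url_variants(values_per_facet: list[int], sort_orders: int) -> int:
    """Count distinct crawlable URLs for one category page.

    Assumes single-select facets: each facet is either absent or set to one
    of its values, hence (values + 1) possible states per facet. Every filter
    combination can then be paired with every sort order.
    """
    filter_combinations = prod(v + 1 for v in values_per_facet)
    return filter_combinations * sort_orders


# Hypothetical category: 5 facets with 10 values each, 4 sort orders.
print(facet_url_variants([10, 10, 10, 10, 10], sort_orders=4))  # 644204
```

A single category already yields over 600,000 URLs under these assumptions; multiply by a few hundred categories, or allow multi-select facets, and millions of variations are easy to reach.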

What types of parameters actually cause problems?

Not all parameters are created equal. Those that create unique content (a specific product, a category) aren't problematic. Those that generate unnecessary variations of the same content (sorting by price ascending, descending, alphabetically) clutter the index.

Session IDs, tracking parameters, superfluous filters — that's what can pollute your crawl. Google usually knows to ignore them, but why take the risk when a clean canonical or a properly configured robots.txt solves it in two minutes?
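As a sketch of what "a clean canonical" can mean in practice, the function below keeps only content-defining parameters and drops tracking, session and sorting ones. The parameter lists are hypothetical placeholders that each site would need to define for itself; this is one possible normalization policy, not a Google requirement.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Hypothetical parameter policy: adapt these to your own site.
TRACKING_PREFIXES = ("utm_",)
TRACKING_PARAMS = {"fbclid", "gclid"}
SESSION_PARAMS = {"sessionid", "sid"}
SORT_PARAMS = {"sort", "order"}


def is_noise(name: str) -> bool:
    """True for parameters that never change the page's actual content."""
    key = name.lower()
    return (
        key.startswith(TRACKING_PREFIXES)
        or key in TRACKING_PARAMS
        or key in SESSION_PARAMS
        or key in SORT_PARAMS
    )


def canonical_url(url: str) -> str:
    """Strip noise parameters and sort the rest so equivalent URLs collapse."""
    parts = urlsplit(url)
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if not is_noise(key)
    ]
    kept.sort()  # ?a=1&b=2 and ?b=2&a=1 map to the same canonical
    # The fragment is dropped: it is never sent to the server anyway.
    return urlunsplit((parts.scheme, parts.netloc, parts.path, urlencode(kept), ""))


print(canonical_url(
    "https://example.com/shoes?sort=price_asc&color=red&utm_source=news&sessionid=ab12"
))  # https://example.com/shoes?color=red
```

The resulting URL is what you would put in the `rel="canonical"` of every variation; a content-defining parameter such as `?id=123` survives untouched.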

- URLs with parameters don't penalize your site by default

- The real issue: avoid crawl dilution and unnecessary duplication

- Google handles these URLs well, but why make its job harder when you can avoid it?

- Prioritize optimizations that have measurable impact on your KPIs first

SEO Expert opinion

Does this statement really reflect what we see in the real world?

Yes and no. Google does index URLs with parameters without major issues in the large majority of cases. But calling the topic "not critical" deserves some nuance.

On complex e-commerce sites with multiple facets, we regularly see crawl budget problems caused by poorly managed parameters. Google doesn't penalize; it gets lost. The result: strategic pages that aren't crawled often enough, and unnecessary variations that bloat the index. Check this systematically in Search Console if you have more than 10,000 indexed URLs.

Why does Mueller downplay the impact so much?

Because he's probably talking to small site owners who are worried for nothing. A WordPress blog with a few pagination parameters? No problem, Google handles it.

But this generalization can be dangerous for large sites. A marketplace with millions of possible combinations can't afford to let Google decide on its own what to index. Reality on the ground: sites that structure their URLs properly — with or without parameters — perform better. Not because of a direct SEO boost, but because they control their information architecture.

In which cases does this rule definitely not apply?

Sites with non-canonicalized faceted filters: you're sitting on a time bomb. Sites with session IDs in URLs (yes, it still happens) — that's SEO suicide. Multilingual or multi-currency sites that manage this via parameters without proper hreflang — same story.

Mueller is speaking about a general case. Your specific context might require URL rewriting for UX reasons, conversion, or simply technical control. Don't take this statement as an excuse to do nothing if you have clear signals of problems in your server logs.

Practical impact and recommendations

What exactly should you audit on your site?

Start with Search Console: look at indexed URLs. If you see hundreds of variations with parameters for the same content, you have a problem — even if Google claims to handle it.
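One way to spot "hundreds of variations for the same content" is to group an export of indexed URLs by page (the URL without its query string) and count distinct query strings. This sketch assumes you already have that list of URLs; the threshold of 100 is an arbitrary cutoff, not a Google figure.

```python
from collections import defaultdict
from urllib.parse import urlsplit


def parameter_variations(urls: list[str], threshold: int = 100) -> dict[str, int]:
    """Map each page (URL without query string) to its number of distinct
    query-string variants, keeping only pages at or above `threshold`."""
    variants: dict[str, set[str]] = defaultdict(set)
    for url in urls:
        parts = urlsplit(url)
        if parts.query:
            page = f"{parts.scheme}://{parts.netloc}{parts.path}"
            variants[page].add(parts.query)
    flagged = {page: len(qs) for page, qs in variants.items() if len(qs) >= threshold}
    # Worst offenders first.
    return dict(sorted(flagged.items(), key=lambda item: item[1], reverse=True))


# Hypothetical export: 150 sort variants of one page plus a clean URL.
demo = [f"https://example.com/shoes?sort={i}" for i in range(150)]
demo.append("https://example.com/about")
print(parameter_variations(demo))  # {'https://example.com/shoes': 150}
```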

Analyze your server logs: is Googlebot spending time on parasitic URLs? If yes, you're wasting crawl budget. A simple regex filter in your log analysis tool will give you the answer in 5 minutes.
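The log check can look like the following sketch. It assumes the Apache/Nginx "combined" log format and identifies Googlebot by user agent only; since user agents can be spoofed, confirming genuine Googlebot traffic requires a reverse DNS lookup, which is out of scope here. The sample lines are made up.

```python
import re
from collections import Counter

# Assumes the "combined" log format:
# ip ident user [time] "METHOD path PROTOCOL" status size "referer" "user-agent"
LOG_LINE = re.compile(
    r'^\S+ \S+ \S+ \[[^\]]+\] "(?:GET|HEAD|POST) (?P<path>\S+) [^"]*" '
    r'\d{3} \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def googlebot_crawl_split(lines) -> Counter:
    """Count Googlebot requests on parameterized vs. parameter-free URLs."""
    counts = Counter()
    for line in lines:
        match = LOG_LINE.match(line)
        # Skip unparseable lines and anything not claiming to be Googlebot.
        if not match or "Googlebot" not in match.group("agent"):
            continue
        bucket = "with_params" if "?" in match.group("path") else "clean"
        counts[bucket] += 1
    return counts


UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
sample = [
    f'192.0.2.1 - - [28/Mar/2022:10:00:00 +0000] "GET /shoes?sort=price_asc HTTP/1.1" 200 5120 "-" "{UA}"',
    f'192.0.2.1 - - [28/Mar/2022:10:00:05 +0000] "GET /shoes HTTP/1.1" 200 5120 "-" "{UA}"',
    '203.0.113.7 - - [28/Mar/2022:10:00:09 +0000] "GET /shoes?color=red HTTP/1.1" 200 5120 "-" "Mozilla/5.0"',
]
print(dict(googlebot_crawl_split(sample)))  # {'with_params': 1, 'clean': 1}
```

If `with_params` dominates on a real log, Googlebot is spending a large share of its visits on variations rather than on your strategic pages.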

What actions should you prioritize based on your situation?

If you have fewer than 5,000 indexed pages and a few harmless parameters: don't touch anything. Really. Spend your time on content or backlinks.

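If you're an e-commerce site or marketplace: implement a strict canonicalization strategy and use rel="canonical" religiously on the variations you want consolidated. Reserve robots.txt blocking for parameters that never need to be crawled, since Google cannot read the canonical tag of a URL it is not allowed to fetch. And test: don't assume Google will make the right choice on its own.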
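For the "test" part, a minimal sketch of a canonical check using only the standard library: given the HTML of a variation URL (fetching it is left out) and the canonical you expect, it reports whether the page declares it. It looks at `<link rel="canonical">` elements only, not at canonicals sent through HTTP `Link` headers.

```python
from html.parser import HTMLParser


class CanonicalFinder(HTMLParser):
    """Collects the href of every <link rel="canonical"> element."""

    def __init__(self):
        super().__init__()
        self.canonicals: list[str] = []

    def handle_starttag(self, tag, attrs):
        # Also called for self-closing tags such as <link ... />.
        if tag != "link":
            return
        attributes = dict(attrs)
        rels = (attributes.get("rel") or "").lower().split()
        if "canonical" in rels and attributes.get("href"):
            self.canonicals.append(attributes["href"])


def check_canonical(html: str, expected: str) -> str:
    """Return 'ok', 'missing', 'multiple' or 'mismatch'."""
    finder = CanonicalFinder()
    finder.feed(html)
    if not finder.canonicals:
        return "missing"
    if len(finder.canonicals) > 1:
        return "multiple"  # conflicting declarations send mixed signals
    return "ok" if finder.canonicals[0] == expected else "mismatch"


page = '<head><link rel="canonical" href="https://example.com/shoes"></head>'
print(check_canonical(page, "https://example.com/shoes"))  # ok
```

Run it over a sample of sort and filter variations: anything other than `ok` points to a template that is not emitting the canonical you intended.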

If you're building a new site: favor clean URLs from the start. Even if parameters don't penalize, why complicate your life? A readable URL also improves your click-through rate in SERPs — it's measurable.

- Check Search Console for the number of indexed URLs with parameters

- Analyze logs to identify crawl patterns on these URLs

- Implement canonicals on all non-priority variations

- Block purely tracking parameters in robots.txt (utm_, fbclid, etc.)

- Don't rely on Search Console's URL Parameters tool (Google retired it in 2022); signal preferred URLs through canonicals instead

- Monitor the evolution of indexed URLs over 3 months

- If in doubt: prioritize rewriting clean URLs for new features
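For the robots.txt item, the sketch below builds `Disallow` lines for tracking parameters and checks which paths they would match. Google documents support for `*` (any sequence of characters) and a trailing `$` (end of URL) in robots.txt rules; the matcher here is deliberately simplified and ignores `Allow` lines and longest-match precedence. The parameter names are examples, not a recommended list.

```python
import re


def disallow_rules(tracking_params: list[str]) -> list[str]:
    """Build Disallow lines matching a parameter in first or later position."""
    rules = []
    for name in tracking_params:
        rules.append(f"Disallow: /*?{name}")  # parameter right after '?'
        rules.append(f"Disallow: /*&{name}")  # parameter after another one
    return rules


def pattern_to_regex(pattern: str) -> re.Pattern:
    """'*' matches any run of characters; a trailing '$' anchors the end."""
    anchored = pattern.endswith("$")
    if anchored:
        pattern = pattern[:-1]
    body = ".*".join(re.escape(chunk) for chunk in pattern.split("*"))
    return re.compile(body + ("$" if anchored else ""))


def is_disallowed(path_with_query: str, rules: list[str]) -> bool:
    """Simplified check: rules match as prefixes from the start of the path."""
    for rule in rules:
        pattern = rule.split(":", 1)[1].strip()
        if pattern_to_regex(pattern).match(path_with_query):
            return True
    return False


rules = disallow_rules(["utm_", "fbclid"])
print("\n".join(rules))
print(is_disallowed("/shoes?color=red&fbclid=abc", rules))  # True
print(is_disallowed("/shoes?color=red", rules))             # False
```

Because robots.txt rules match as prefixes, `?utm_` covers `utm_source`, `utm_medium` and the rest in one line.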

❓ Frequently Asked Questions

Does Google penalize sites that use parameters in their URLs?

Should I rewrite all my parameterized URLs as clean URLs?

How can I tell whether my URL parameters are causing problems?

Should canonical tags always be used on URLs with parameters?

Do URLs with parameters affect click-through rate in the SERPs?

🎥 From the same video 22

Other SEO insights extracted from this same Google Search Central video · published on 28/03/2022
