Official statement

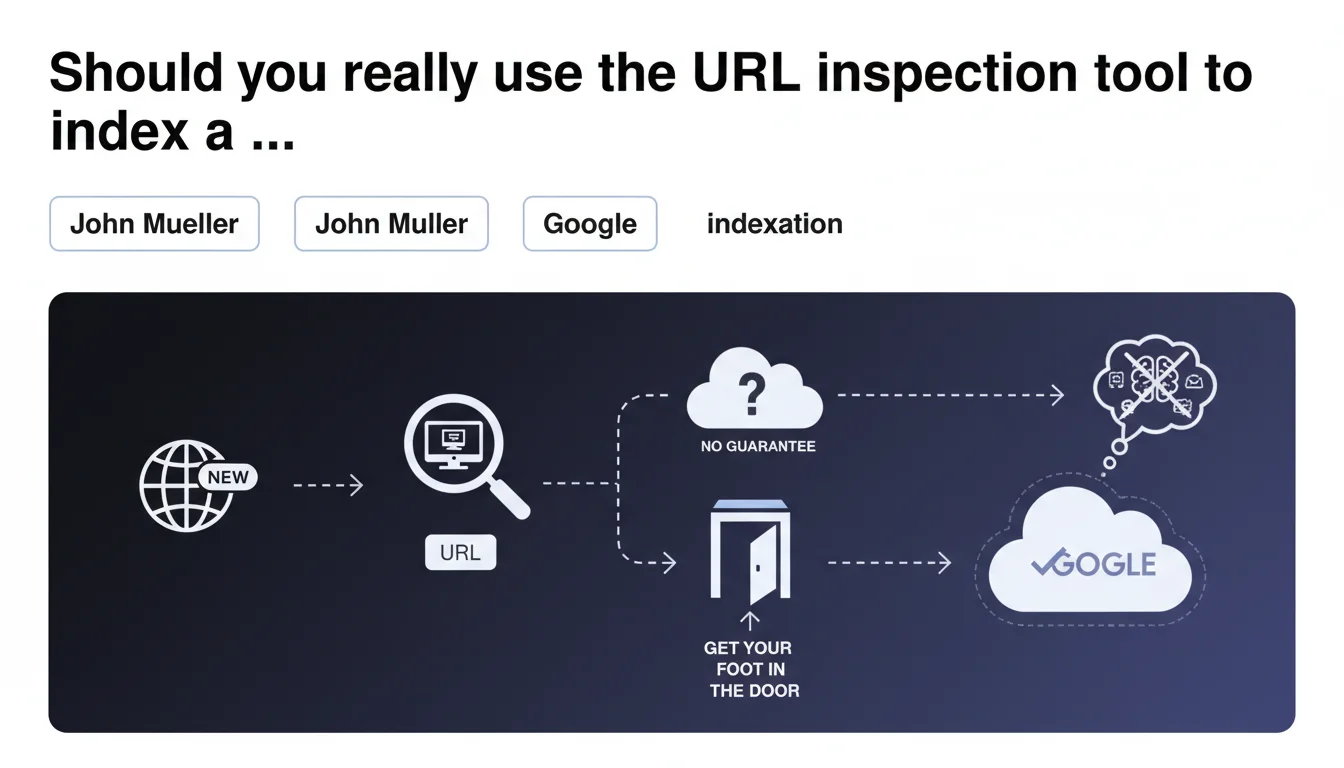

John Mueller confirms that the URL inspection tool (Search Console) can help a new site with no track record get its first Googlebot crawl, but with zero guarantee of indexation. It's one signal among many, not a magic wand. For an already established site with natural signals (backlinks, traffic), this tool adds nothing extra.

What you need to understand

What counts as a "signal" in Google's eyes?

Google uses the term signals to describe any indicator that helps it evaluate a site's relevance, authority, and freshness. In practical terms: external backlinks, domain age, organic traffic volume, brand mentions, indexation history.

A brand new site (recent domain, zero backlinks, no organic visits) has none of these markers. Google doesn't know if it's a serious project or spam thrown together in 10 minutes. That's where the inspection tool can serve as initial contact—but only as initial contact.

Why talk about "getting your foot in the door" rather than guaranteed indexation?

Mueller's wording is deliberately cautious. Requesting indexing via the inspection tool adds the submitted URL to a priority crawl queue, so Googlebot will normally crawl it soon after. But crawl ≠ index.

Google can easily visit the page, see that it answers no useful query (thin content, duplicate, ultra-competitive niche with no differentiation), and decide not to add it to the index. "Getting your foot in the door" just means being on the radar—not a promise of results.

What are the key takeaways?

- The inspection tool isn't a magic button: it triggers a crawl, but indexation depends on content quality and site signals.

- Only useful for new sites: if your domain already has backlinks or a history, Google crawls naturally—the tool becomes pointless.

- No unlimited quota: Google limits the number of indexation requests per day and per Search Console property. Use it sparingly.

- Doesn't replace an XML sitemap: the sitemap remains the standard method to signal your URLs to Google. The inspection tool is a one-off supplement, not a strategy.

SEO Expert opinion

Does this statement align with what we see in practice?

Yes, completely. We regularly observe that new sites (fresh domains, zero backlinks) can wait several weeks before their first natural crawl, even with a properly configured sitemap. The inspection tool speeds up that first contact—often within 24-48 hours.

But here's the catch: accelerating the crawl doesn't change the indexation criteria. If your content is weak, duplicate, or poorly structured, Google will visit it and reject it anyway. [To be verified]: Google never communicates a precise threshold for what defines a "new site without signals"—is it 0 backlinks? Fewer than 10? A domain less than 3 months old? The usual vagueness.

What nuances should we add?

First point: the inspection tool is completely useless for an established site. If you already have organic traffic, backlinks, and regular crawls, submitting URLs manually via this tool is pointless—Google will discover them through the sitemap or internal links.

Second nuance: Mueller talks about "getting your foot in the door," but doesn't specify what happens next. If Google indexes your page, then finds it generates zero clicks and zero engagement, it can drop from the index after a few weeks. Indexation is never permanent—it's a revocable status.

When does this rule not apply?

If you launch a new site on an expired domain you purchased with a backlink history, you're no longer in the "new site without signals" category. Google already has data (even if old) and will crawl naturally—the inspection tool becomes superfluous.

Another case: e-commerce sites with thousands of product pages. Submitting each product sheet manually via the inspection tool is impractical and counterproductive. Better to optimize your XML sitemap and internal link structure so Google discovers priority URLs on its own.

Practical impact and recommendations

What should you actually do when launching a new site?

Start with the fundamentals: clean XML sitemap, submitted in Search Console, containing only indexable URLs (no redirects, no 404s, no canonicals pointing elsewhere). That's the foundation—the inspection tool comes after, not before.
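The "only indexable URLs" rule can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the `Page` record, its fields, and the `noindex` check are assumptions standing in for whatever your own crawl or CMS export provides.

```python
from dataclasses import dataclass
from typing import Optional
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


@dataclass
class Page:
    # Hypothetical record, e.g. one row from your own site crawl.
    url: str
    status: int                      # HTTP status code returned by the URL
    canonical: Optional[str] = None  # rel=canonical target, if one is declared
    noindex: bool = False            # assumption: True if the page is noindexed


def is_indexable(page: Page) -> bool:
    """Keep only 200 pages that are self-canonical and not noindexed."""
    if page.status != 200:           # drops redirects (3xx) and errors (4xx/5xx)
        return False
    if page.noindex:
        return False
    return page.canonical in (None, page.url)


def build_sitemap(pages: list[Page]) -> str:
    """Serialize the indexable pages as a sitemap <urlset> document."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for page in pages:
        if is_indexable(page):
            ET.SubElement(ET.SubElement(urlset, "url"), "loc").text = page.url
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body
```

Calling `build_sitemap(pages)` on a crawl export returns the XML as a string, ready to be written to the file you submit in Search Console.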

Next, if your site is truly new (recent domain, zero backlinks), use the inspection tool to manually submit your 5-10 most strategic pages: homepage, main category pages, pillar content. No need to submit 200 URLs: the daily quota would stop you long before that.

What mistakes must you absolutely avoid?

- Never submit URLs with thin, duplicate, or auto-generated content—you risk triggering a spam signal on the very first crawl.

- Don't use the inspection tool as a substitute for an XML sitemap: Google crawls the sitemap regularly and automatically; the inspection tool stays ad-hoc.

- Don't resubmit the same URLs over and over hoping to force indexation. If Google declines to index a page after a first crawl, there's a quality or relevance problem.

- Avoid submitting technical pages (legal notices, terms, contact): they have zero SEO value and waste your inspection quota.
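The rules above, combined with the 5-10 page limit, amount to a simple filter. The sketch below is one way to express it. Everything in it is hypothetical: the `Candidate` fields, the word-count proxy for thin content, and the thresholds are illustrative choices, not values defined by Google.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    # Hypothetical record describing a page you are considering submitting.
    url: str
    priority: int                    # lower = more strategic (1 = homepage)
    word_count: int                  # crude, assumed proxy for thin content
    already_submitted: bool = False


# Assumed values for illustration only; none is a Google-defined threshold.
MIN_WORDS = 300
MAX_SUBMISSIONS = 10
TECHNICAL_PATHS = ("/legal", "/terms", "/contact")


def shortlist(candidates: list[Candidate]) -> list[str]:
    """Return the URLs worth submitting manually, most strategic first."""
    eligible = [
        c for c in candidates
        if not c.already_submitted                       # no repeat submissions
        and c.word_count >= MIN_WORDS                    # skip thin pages
        and not any(part in c.url for part in TECHNICAL_PATHS)
    ]
    eligible.sort(key=lambda c: c.priority)
    return [c.url for c in eligible[:MAX_SUBMISSIONS]]
```

Word count is a blunt stand-in for quality. In practice you would replace that check with your own editorial judgment about which pages deserve a manual submission.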

How do you verify your site is being crawled and indexed?

Use the coverage report in Search Console (since renamed the Page indexing report) to identify URLs that were discovered or crawled but not indexed. If Google refuses to index certain pages despite submission, check the exact reason given for each one (duplicate content, low quality, robots.txt blocking, etc.).

Supplement this with server log analysis: it shows you whether Googlebot actually visits your pages and how often. If crawl activity is still minimal after 2-3 weeks, that suggests your site lacks external signals (backlinks, mentions, traffic). In that case, focus on acquiring natural links rather than spamming the inspection tool.
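As a rough illustration of that log check, the sketch below counts Googlebot requests per day and per path. It assumes your server writes the common "combined" access log format (the Apache and nginx default layout); the regular expression and the `googlebot_hits` helper are illustrative, so adapt them to your own log format.

```python
import re
from collections import Counter

# Combined Log Format:
# ip - - [date] "METHOD path PROTO" status size "referer" "user-agent"
LOG_LINE = re.compile(
    r'\S+ \S+ \S+ \[(?P<day>[^:]+):[^\]]+\] '
    r'"(?:\S+) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ '
    r'"[^"]*" "(?P<agent>[^"]*)"'
)


def googlebot_hits(lines):
    """Count requests per (day, path) whose user agent mentions Googlebot."""
    hits = Counter()
    for line in lines:
        match = LOG_LINE.match(line)
        if match and "Googlebot" in match["agent"]:
            hits[(match["day"], match["path"])] += 1
    return hits
```

You could feed it an open log file, for example `googlebot_hits(open("access.log"))`, where the file name is a placeholder. Keep in mind that the user-agent string can be spoofed, so a strict audit would also verify the requesting IP with a reverse DNS lookup.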

❓ Frequently Asked Questions

Can the URL inspection tool force a page to be indexed?

How many URLs can you submit per day via the inspection tool?

Should you use the inspection tool for every newly published page?

What should you do if Google refuses to index a page despite its submission?

Does the inspection tool improve crawl budget?

🎥 From the same video 22

Other SEO insights extracted from this same Google Search Central video · published on 28/03/2022
