Official statement

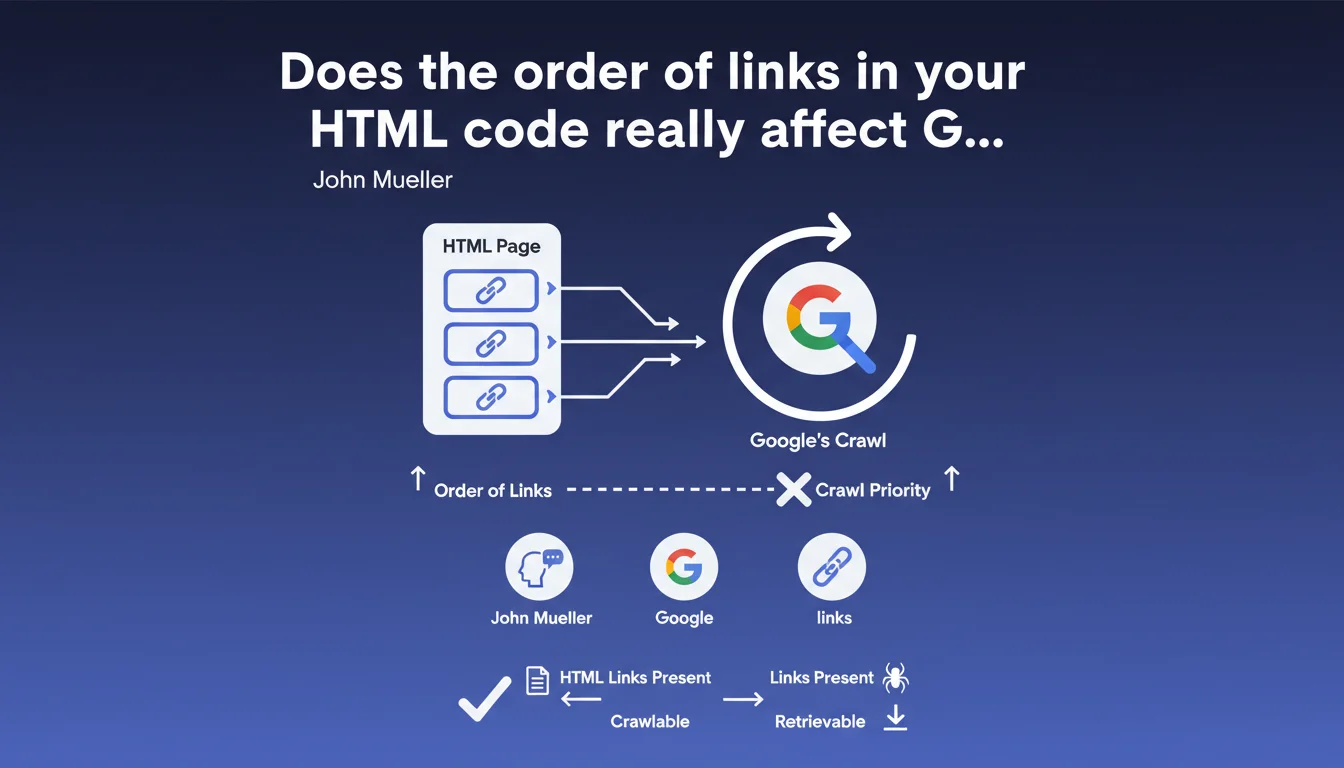

Google claims that the order of links in HTML code has no impact on the crawl priority of targeted pages. As long as links are present and followable, Googlebot will discover them regardless of their position in the DOM. This statement challenges certain internal linking optimization practices based on source code hierarchy.

What you need to understand

What exactly does Google say about HTML link order?

John Mueller is clear-cut: the position of a link in the HTML code does not determine when Googlebot will crawl the destination page. In other words, whether a link appears on the first line of the <body> or at the very bottom of the footer makes no difference to its discovery.

What matters is that the link is present and technically followable — no blocking JavaScript, no nofollow directive if you want to pass PageRank, no broken redirect chains.
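As a rough illustration of what "present and technically followable" can mean in practice, here is a minimal sketch that lists the links in an HTML document and flags the ones a crawler would probably not follow. The rule used here (a real `href`, no `rel="nofollow"`) is a simplifying assumption for the example, not Google's actual logic, and `SAMPLE_HTML`, `LinkAudit` and `audit_links` are hypothetical names.

```python
from html.parser import HTMLParser


class LinkAudit(HTMLParser):
    """Collect <a> tags and note whether each one looks followable."""

    def __init__(self):
        super().__init__()
        self.links = []  # (href, followable) tuples, in document order

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        attributes = dict(attrs)
        href = attributes.get("href")
        rel_tokens = (attributes.get("rel") or "").lower().split()
        # Assumption for this sketch: a link is followable when it has
        # a real href and does not carry rel="nofollow".
        followable = (
            bool(href)
            and not href.startswith(("#", "javascript:"))
            and "nofollow" not in rel_tokens
        )
        self.links.append((href, followable))


def audit_links(html):
    parser = LinkAudit()
    parser.feed(html)
    return parser.links


# Hypothetical page: link position varies, followability is what is checked.
SAMPLE_HTML = """
<body>
  <a href="/top-category">Top</a>
  <a href="/partner" rel="nofollow sponsored">Partner</a>
  <a onclick="go('/hidden')">Hidden</a>
  <footer><a href="/deep-page">Deep page</a></footer>
</body>
"""

for href, followable in audit_links(SAMPLE_HTML):
    print(href, "followable" if followable else "not followable")
```

Note that the footer link is reported as followable just like the first link in the body: in this check, position plays no role.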

Why does this confusion persist in the SEO community?

For years, we heard that placing important links "higher" in the HTML improved their consideration. This belief partly comes from old vague recommendations and confusion between crawl order and PageRank distribution.

Google did say that the first link counts when multiple links on the same page point to the same URL — but that concerns the anchor text retained, not crawl priority. Two distinct issues, often mixed up.
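To make the distinction concrete, the "first link counts" idea can be modeled as keeping only the first anchor text seen for each URL. The sketch below does exactly that and nothing more; it is an illustration of the heuristic as described above, not a reproduction of how Google processes anchors, and all names in it are hypothetical.

```python
from html.parser import HTMLParser


class AnchorCollector(HTMLParser):
    """Record (href, anchor text) pairs in document order."""

    def __init__(self):
        super().__init__()
        self.anchors = []
        self._href = None   # href of the <a> currently open, if any
        self._text = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            self._href = dict(attrs).get("href")
            self._text = []

    def handle_data(self, data):
        if self._href is not None:
            self._text.append(data)

    def handle_endtag(self, tag):
        if tag == "a" and self._href is not None:
            self.anchors.append((self._href, "".join(self._text).strip()))
            self._href = None


def first_anchor_per_url(html):
    collector = AnchorCollector()
    collector.feed(html)
    first = {}
    for href, text in collector.anchors:
        # setdefault keeps the first anchor text and ignores later ones.
        first.setdefault(href, text)
    return first


# Hypothetical page with two links to the same URL.
SAMPLE_HTML = (
    '<p><a href="/shoes">Running shoes</a> are popular. '
    '<a href="/shoes">Click here</a> or browse <a href="/bags">Bags</a>.</p>'
)

print(first_anchor_per_url(SAMPLE_HTML))
```

This only affects which anchor text is associated with `/shoes`; it says nothing about when `/shoes` gets crawled.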

What's the difference between discovery and crawl priority?

Mueller is talking here about site-wide crawl, not initial URL discovery or recrawl frequency. Once a link exists and Googlebot can see it, the URL enters the crawl queue. A URL's effective priority within that queue depends on other signals: page popularity, content freshness, depth in the site structure, available crawl budget.

In plain terms: HTML order doesn't let you jump the queue, but it doesn't slow things down either if all other signals are green.

- The order of links in the DOM does not affect crawl priority according to Google

- What matters: technical accessibility of the link and popularity signals of the target page

- Confusion often stems from mixing up crawl order, discovery, and multiple anchor text handling

- Google has said that when several links on a page point to the same URL, the first one counts for anchor text, but that doesn't concern crawl planning

SEO Expert opinion

Is this statement consistent with real-world observations?

Yes and no. On sites with tight crawl budgets, we regularly observe that deep pages — often linked late in the HTML — take longer to be crawled. But it's not necessarily the link order that's responsible: it's the click depth, the number of internal links pointing to these pages, their actual popularity.

Google doesn't blindly crawl links in DOM order. It prioritizes based on dozens of signals — internal PageRank, change rate, estimated user demand. If a page buried in the footer receives 50 internal links from strong hubs, it will be crawled faster than an orphaned page placed in the <header>.
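The idea that priority comes from signals rather than DOM position can be sketched with a simple priority queue. The scoring formula below (internal links divided by click depth plus one) is invented purely for illustration; Google's real signals and weights are not public. The `dom_position` field is carried along but deliberately ignored.

```python
import heapq


def crawl_order(pages):
    """Return URLs ordered by a hypothetical priority score."""
    heap = []
    for page in pages:
        # Invented score: more internal links and a shallower depth
        # raise priority. page["dom_position"] is intentionally unused.
        score = page["internal_links"] / (1 + page["click_depth"])
        # heapq is a min-heap, so push the negated score.
        heapq.heappush(heap, (-score, page["url"]))
    order = []
    while heap:
        _, url = heapq.heappop(heap)
        order.append(url)
    return order


# Hypothetical pages discovered on the same template.
PAGES = [
    {"url": "/orphan-in-header", "dom_position": 1,
     "internal_links": 1, "click_depth": 1},
    {"url": "/mid-content", "dom_position": 40,
     "internal_links": 10, "click_depth": 1},
    {"url": "/footer-hub-target", "dom_position": 250,
     "internal_links": 50, "click_depth": 2},
]

print(crawl_order(PAGES))
# ['/footer-hub-target', '/mid-content', '/orphan-in-header']
```

In this toy model the footer link is crawled first despite appearing last in the DOM, which mirrors the example in the paragraph above.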

What nuances should be added to this claim?

Mueller says "the order of links is not related to the order of our overall crawl". The word "overall" is key. He doesn't say that all links have the same weight or that their context doesn't matter. A link in the main content probably passes more PageRank than a footer link — that's classic Reasonable Surfer logic. [To verify]: Google has never publicly confirmed whether DOM order influences the Reasonable Surfer score, but patents suggest that visual and contextual position matters, not parsing order.

Another point: this statement concerns crawling, not indexation or ranking. Even if Googlebot discovers all your links instantly, it doesn't guarantee that all pages will be indexed or that they will rank well.

In what cases doesn't this rule fully apply?

On massive sites with critical crawl budget (millions of pages, large e-commerce, content aggregators), every detail counts. If Google can only crawl 10,000 pages per day and you have 500,000, the slightest friction can delay discovery. In this context, strategically placing links to priority pages — not necessarily "at the top of the HTML", but within strong and shallow hubs — remains a good practice.
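The figures in that example translate into a quick back-of-the-envelope calculation. The sketch assumes a constant daily crawl rate and no recrawls of already visited pages, which is optimistic; `days_for_full_crawl` is a hypothetical helper.

```python
import math


def days_for_full_crawl(total_pages, pages_per_day):
    """Minimum days to visit every page once at a constant rate."""
    return math.ceil(total_pages / pages_per_day)


# Figures from the example above: 500,000 pages, 10,000 crawled per day.
print(days_for_full_crawl(500_000, 10_000))  # 50
```

At that rate a page at the back of the queue waits about 50 days for a first visit, and longer once recrawls of existing pages eat into the budget.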

Practical impact and recommendations

What should you actually do with this information?

Stop stressing about the order of links in source code if your real problem is elsewhere. Focus on overall architecture: reduce click depth, strengthen internal linking to strategic pages, ensure your links are technically followable.

If your links are generated by client-side JavaScript, verify that Googlebot actually sees them, for example by using server-side rendering (SSR). Order doesn't matter, but the links need to exist in the accessible DOM.
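One way to spot links that only exist after JavaScript runs is to compare the links in the raw server response with those in a rendered snapshot of the same page. The sketch below assumes you already have both versions as strings (how you obtain the rendered one, for instance from a headless browser, is outside its scope); the function and variable names are hypothetical.

```python
from html.parser import HTMLParser


class HrefCollector(HTMLParser):
    """Collect the href of every <a> tag into a set."""

    def __init__(self):
        super().__init__()
        self.hrefs = set()

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.hrefs.add(href)


def extract_hrefs(html):
    collector = HrefCollector()
    collector.feed(html)
    return collector.hrefs


def links_needing_js(raw_html, rendered_html):
    """Links present after rendering but absent from the raw HTML."""
    return extract_hrefs(rendered_html) - extract_hrefs(raw_html)


# Hypothetical snapshots of the same page.
RAW_HTML = '<nav><a href="/home">Home</a></nav><div id="app"></div>'
RENDERED_HTML = (
    '<nav><a href="/home">Home</a></nav>'
    '<div id="app"><a href="/category/shoes">Shoes</a></div>'
)

print(links_needing_js(RAW_HTML, RENDERED_HTML))  # {'/category/shoes'}
```

Any URL returned here depends on rendering to be discovered, so it is worth confirming that it shows up in the rendered HTML reported by your testing tool.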

What mistakes should you avoid after this statement?

Don't throw out all thinking about visual and semantic hierarchy in your content. A link placed in a <nav> or <article> probably carries more contextual weight than a link buried in a <footer> with 200 others. It's not the parsing order that changes, it's the relevance signal.

Another trap: believing that "Google crawls everything so I can leave it all messy". No. Even if Googlebot technically discovers all your links, it won't necessarily index all pages — especially if they're weak, duplicate, or lack added value. Prioritization remains essential.

How do you verify that your internal linking is optimal?

Audit the click depth of your strategic pages using Screaming Frog or Oncrawl. If critical pages are more than 3-4 clicks from the homepage, strengthen the linking — not by moving them up in the HTML, but by creating logical and multiple navigation paths.
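If you export your internal links as a simple graph, click depth is just a breadth-first search from the homepage. The sketch below is a minimal version of that calculation, not what Screaming Frog or Oncrawl run internally; `LINK_GRAPH` is a hypothetical export and the threshold of 3 clicks is an assumption taken from the range mentioned above.

```python
from collections import deque


def click_depths(link_graph, start):
    """Breadth-first search: minimum number of clicks from start."""
    depths = {start: 0}
    queue = deque([start])
    while queue:
        page = queue.popleft()
        for target in link_graph.get(page, []):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


# Hypothetical internal link graph: page -> pages it links to.
LINK_GRAPH = {
    "/": ["/category", "/about"],
    "/category": ["/category/sub"],
    "/category/sub": ["/category/sub/list"],
    "/category/sub/list": ["/product-42"],
    "/about": [],
}

depths = click_depths(LINK_GRAPH, "/")
too_deep = [url for url, depth in depths.items() if depth > 3]
print(too_deep)  # ['/product-42']
```

Adding a single link from `/category` to `/product-42` would bring it to depth 2, regardless of where that link sits in the HTML.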

Analyze server logs to see which pages are actually crawled and how often. If entire sections are ignored, the problem rarely comes from link order, but rather from a lack of internal popularity or poor crawl budget allocation.
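A basic version of that log analysis counts Googlebot requests per URL and lists strategic pages that never appear. The sketch assumes access logs in the common "combined" format and identifies Googlebot by user-agent string only, which can be spoofed; a real audit should also verify the requesting IPs. The log lines and page list are made up for the example.

```python
import re
from collections import Counter

# Matches the request, status and final quoted field (user agent)
# of a combined-format access log line.
LOG_LINE = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) [^"]*" (?P<status>\d{3}) .*"(?P<agent>[^"]*)"$'
)


def googlebot_hits(lines):
    """Count requests per path whose user agent mentions Googlebot."""
    hits = Counter()
    for line in lines:
        match = LOG_LINE.search(line.strip())
        if match and "Googlebot" in match.group("agent"):
            hits[match.group("path")] += 1
    return hits


GOOGLEBOT_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"

# Hypothetical log excerpt.
SAMPLE_LOG = [
    '66.249.66.1 - - [28/Mar/2022:10:00:00 +0000] "GET /category/shoes HTTP/1.1" 200 5120 "-" "' + GOOGLEBOT_UA + '"',
    '66.249.66.1 - - [28/Mar/2022:18:30:00 +0000] "GET /category/shoes HTTP/1.1" 200 5120 "-" "' + GOOGLEBOT_UA + '"',
    '203.0.113.7 - - [28/Mar/2022:19:00:00 +0000] "GET /product-42 HTTP/1.1" 200 2048 "-" "Mozilla/5.0 (X11; Linux x86_64)"',
]

STRATEGIC_PAGES = ["/category/shoes", "/product-42"]

hits = googlebot_hits(SAMPLE_LOG)
never_crawled = [page for page in STRATEGIC_PAGES if hits[page] == 0]
print(never_crawled)  # ['/product-42']
```

Pages that show up in `never_crawled` over a long enough log window are the ones to cross-check against click depth and internal link counts.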

- Verify that all strategic links are present in the rendered HTML (not just injected in JS later)

- Reduce the click depth of priority pages to 3 or 4 clicks at most from the homepage

- Strengthen contextual linking in content body, not just in nav/footer

- Audit crawl logs to identify ignored or under-crawled pages

- Clean up zombie pages (low value, few internal links, never crawled) to free up budget

- Test JavaScript rendering with Google Search Console (URL inspection tool) to ensure links are visible

❓ Frequently Asked Questions

If HTML link order doesn't matter, why do some SEOs still place important links at the top of the code?

Does a footer link have the same SEO value as a link in the main content?

Does this statement mean I can place my links anywhere without consequences?

Does Google crawl JavaScript links added late to the DOM?

How can I tell whether my crawl budget is poorly allocated because of my internal linking?

Other SEO insights extracted from this same Google Search Central video · published on 28/03/2022
