Official statement

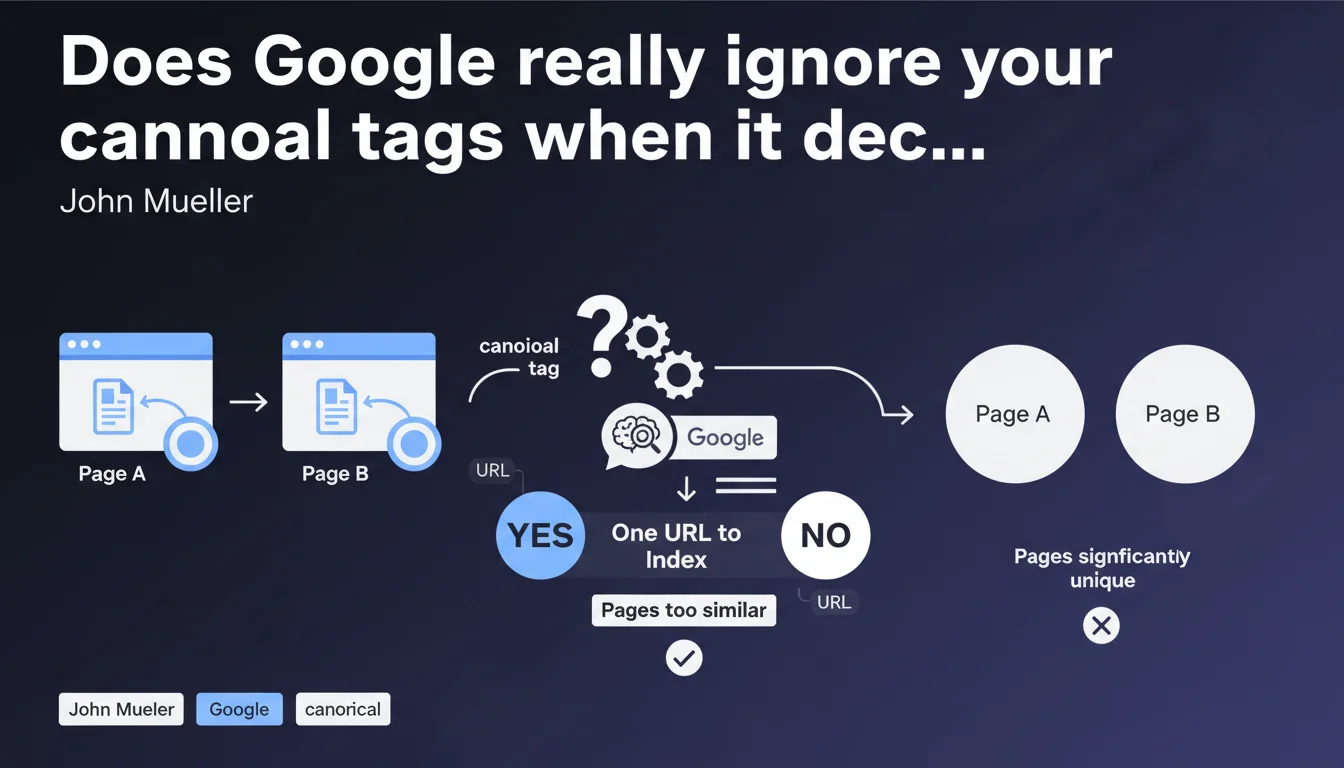

Google itself decides which URL to index when it considers multiple pages "essentially the same," regardless of your preferences. The only fix is to make your pages different enough for the algorithm to treat them as unique. Google has not said where that threshold lies.

What you need to understand

Does Google really ignore your canonical tags?

Not exactly. Google takes your canonicalization signals into account but reserves the right to override them if its algorithm detects substantial similarity between multiple URLs. This is what Mueller calls "doing you a favor," an elegant way of saying Google does whatever it wants.

The canonical tag is therefore one advisory signal among others. If the text content, HTML structure, and search intent are judged too similar, Google picks one canonical URL and leaves the others unindexed, regardless of your technical preferences.

What exactly is a "significantly unique" page according to Google?

Good question. Google provides no quantitative threshold. No percentage of text difference, no specific HTML criteria. We're in a total gray area.

Field experience suggests you need substantial content differentiation, not just a different sidebar or footer. But where exactly do you draw the line between "a few different sentences" and "complete redesign"? Google won't tell you — and that's probably intentional.

Why does Google merge my product variants?

Because its algorithm detects duplicate or near-duplicate content between your product pages with different colors, sizes, or options. If only the title and a photo change, Google considers the search intent identical and thinks one URL is enough.

This issue particularly affects e-commerce sites with poorly structured product variants. Google prefers to consolidate signals on one URL rather than spread crawl budget and authority across a dozen near-identical versions.

- Canonicalization is not an absolute directive — Google keeps final control

- "Significantly unique" remains a vague concept with no publicly defined threshold

- Main content must be differentiated, not just peripheral elements

- Google favors consolidation when it detects overly similar pages

SEO Expert opinion

Is this statement consistent with what we observe in the field?

Yes and no. In principle, it's accurate: Google has always operated through URL clustering of similar pages. What Mueller confirms here is that Google doesn't blindly follow your technical signals.

But the vagueness around "significantly unique" is problematic. In practice, we observe inconsistent behavior: pages with roughly 40% different content get merged, while others with only 15% difference stay indexed separately. Sensitivity appears to vary by industry, crawl budget, and domain authority. [To verify]: does Google apply the same threshold to all sites, or does it adjust its requirements based on context?

What nuances should be added to this advice?

First, "essentially the same" doesn't mean "strictly identical." Google uses near-duplicate detection far more sophisticated than simple hash comparison. It analyzes main content, semantic structure, and named entities.
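To make the distinction between "strictly identical" and "essentially the same" concrete, here is a minimal sketch contrasting an exact hash comparison with a simple near-duplicate measure (Jaccard overlap on word shingles). It only illustrates the general idea; it is not Google's method, and the product descriptions and function names are hypothetical.

```python
import hashlib
import re


def fingerprint(text: str) -> str:
    """Exact-match fingerprint: any one-character change yields a different hash."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def shingles(text: str, size: int = 3) -> set:
    """Set of overlapping word n-grams ("shingles") from lowercased text."""
    words = re.findall(r"\w+", text.lower())
    if len(words) < size:
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def jaccard(a: str, b: str, size: int = 3) -> float:
    """Share of shingles two texts have in common (0.0 = disjoint, 1.0 = same set)."""
    sa, sb = shingles(a, size), shingles(b, size)
    if not sa and not sb:
        return 1.0
    return len(sa & sb) / len(sa | sb)


# Hypothetical descriptions of two color variants of the same product.
page_red = "Classic leather sneaker with cushioned sole and cotton laces, available in red."
page_blue = "Classic leather sneaker with cushioned sole and cotton laces, available in blue."

same_hash = fingerprint(page_red) == fingerprint(page_blue)
similarity = jaccard(page_red, page_blue)
print(f"identical hash: {same_hash}, shingle overlap: {similarity:.2f}")
```

The two variants never share a hash, yet most of their shingles overlap, which is the kind of pair a near-duplicate detector would group together.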

Second, this rule applies differently depending on content type. For e-commerce product pages, a price or availability change isn't enough to create uniqueness. For blog articles, rewriting a few paragraphs fools no one. Google is looking for real editorial differentiation.

In what cases does this rule cause problems?

Mainly on high-volume sites with legitimate but subtle variations. A real estate site with similar listings in neighboring neighborhoods. A job board with near-identical offers in multiple cities. An e-commerce store with product variants.

The paradox: you need these pages to target specific long-tail queries, but Google decides they're too similar and only indexes one. Result: you lose coverage without clear notification. And Mueller simply tells you "make them more unique" — thanks for the tip.

Practical impact and recommendations

What should I do concretely to differentiate my pages?

First step: audit clusters of similar pages. In the Search Console Page indexing report, identify URLs listed under "Alternate page with proper canonical tag," "Duplicate, Google chose different canonical than user," or "Discovered - currently not indexed." These are your consolidation candidates.

Next, enrich the main content of each page with truly differentiating elements. Not just reworded paragraphs: unique sections, specific FAQs, dedicated testimonials, contextualized case studies. Google must see distinct editorial intent.

For e-commerce sites, structure your product variants differently: either use a master page with a variant selector instead of distinct URLs, or create genuinely unique content per variant (usage guides, comparisons, specific use cases).

What mistakes should you absolutely avoid?

Don't rely on content spinning or automated rewriting. Google detects these practices easily, and they add no value. If you have nothing unique to say about a variant, don't create a dedicated page.

Also avoid over-optimizing filters. Not every combination of filters needs to be indexed. If "red shoes size 42" and "red shoes size 43" have the same descriptive content, Google will index only one version, so you might as well control which one with a strategic noindex.
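As an illustration of a strategic noindex on filter combinations, the sketch below decides which robots meta tag a filtered listing URL should render. The `INDEXABLE_FILTERS` policy, the `robots_meta` helper, and the `shop.example` URLs are hypothetical; the right allowlist depends on which filters actually produce distinct content on your site.

```python
from urllib.parse import urlparse, parse_qs

# Hypothetical policy: only these filters produce pages with distinct content.
INDEXABLE_FILTERS = {"color", "category"}


def robots_meta(url: str) -> str:
    """Return the robots meta tag to render for a (possibly filtered) listing URL."""
    filters = set(parse_qs(urlparse(url).query))
    if filters <= INDEXABLE_FILTERS:
        return '<meta name="robots" content="index, follow">'
    # At least one filter (for example size) adds no unique content:
    # keep the page out of the index but let its links be followed.
    return '<meta name="robots" content="noindex, follow">'


print(robots_meta("https://shop.example/shoes?color=red"))
print(robots_meta("https://shop.example/shoes?color=red&size=42"))
```

Note that a noindex tag only works if the page can still be crawled, so these URLs should not also be blocked in robots.txt.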

How do I verify that my site follows this rule?

Use Search Console to track pages excluded for canonicalization. If the volume increases, Google is consolidating aggressively. Compare with your crawl plan: do the merged pages match your strategy?
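One way to track that volume is to compare exports of the Page indexing report over time. The sketch below assumes a CSV with `URL` and `Reason` columns, which is a hypothetical layout: adapt the column names to whatever your export actually contains. The sample rows are invented.

```python
import csv
import io
from collections import Counter

# Canonical-related statuses, as labeled in the Page indexing report.
CANONICAL_REASONS = {
    "Alternate page with proper canonical tag",
    "Duplicate without user-selected canonical",
    "Duplicate, Google chose different canonical than user",
}


def count_canonical_exclusions(csv_text: str) -> Counter:
    """Count excluded URLs per canonical-related reason in one export."""
    reader = csv.DictReader(io.StringIO(csv_text))
    return Counter(row["Reason"] for row in reader if row["Reason"] in CANONICAL_REASONS)


# Hypothetical exports taken a month apart.
march = (
    "URL,Reason\n"
    '/shoes-red,"Duplicate, Google chose different canonical than user"\n'
    "/shoes-blue,Alternate page with proper canonical tag\n"
    "/about,Crawled - currently not indexed\n"
)
april = march + (
    '/shoes-green,"Duplicate, Google chose different canonical than user"\n'
    "/shoes-black,Duplicate without user-selected canonical\n"
)

before = count_canonical_exclusions(march)
after = count_canonical_exclusions(april)
growth = sum(after.values()) - sum(before.values())
print(f"Canonical exclusions: {sum(before.values())} -> {sum(after.values())} ({growth:+d})")
```

A rising count is only a prompt to look closer: the per-reason breakdown in `after` shows whether Google is overriding your declared canonicals or simply honoring them.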

Test with a content similarity tool (like Copyscape or Siteliner) to measure duplication rate between your pages. If two URLs exceed 70-80% text similarity, it's a red flag.
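If you prefer a quick in-house check, the following sketch compares the main content of every pair of pages with Python's standard `difflib` and flags pairs at or above the 70% mark mentioned above. The threshold is this article's rule of thumb, not a Google figure, and the URLs and texts are hypothetical.

```python
from difflib import SequenceMatcher
from itertools import combinations

RED_FLAG = 0.70  # lower bound of the 70-80% heuristic; not a Google threshold


def similarity(a: str, b: str) -> float:
    """Word-level similarity ratio between 0.0 and 1.0."""
    return SequenceMatcher(None, a.lower().split(), b.lower().split(), autojunk=False).ratio()


def flag_similar_pages(pages: dict, threshold: float = RED_FLAG) -> list:
    """Return (url_a, url_b, ratio) for every pair at or above the threshold."""
    flagged = []
    for (url_a, text_a), (url_b, text_b) in combinations(pages.items(), 2):
        ratio = similarity(text_a, text_b)
        if ratio >= threshold:
            flagged.append((url_a, url_b, round(ratio, 2)))
    return sorted(flagged, key=lambda item: item[2], reverse=True)


# Hypothetical URLs with main-content text, navigation and footer already stripped.
pages = {
    "/apartments/north-district": "Bright two bedroom apartment close to shops and public transport",
    "/apartments/south-district": "Bright two bedroom apartment close to parks and public transport",
    "/guides/renting-checklist": "What to check before signing a lease: deposit, notice period, inventory",
}

for url_a, url_b, ratio in flag_similar_pages(pages):
    print(f"{url_a} <-> {url_b}: {ratio:.0%} similar")
```

Comparing every pair grows quadratically with the number of pages, so on a large site it makes sense to run this within one template or category at a time.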

- Audit excluded pages in Search Console to identify victims of forced canonicalization

- Enrich main content with truly unique sections (at least 300 differentiating words)

- Restructure product variants: master page + selector or truly distinct content per variant

- Measure text similarity between supposedly different pages (aim for under 70%)

- Implement strategic noindex on filter combinations without added value

- Create FAQs, guides, and testimonials specific to each page to mark the difference

❓ Frequently Asked Questions

What percentage of content difference does Google need to consider two pages unique?

If Google chooses the wrong canonical URL, can I force my choice?

Are the filter pages on my e-commerce site affected?

How does Google detect that two pages are "essentially the same"?

Should I delete the pages that Google refuses to index because of canonicalization?

Other SEO insights extracted from this same Google Search Central video · published on 28/03/2022
