Official statement

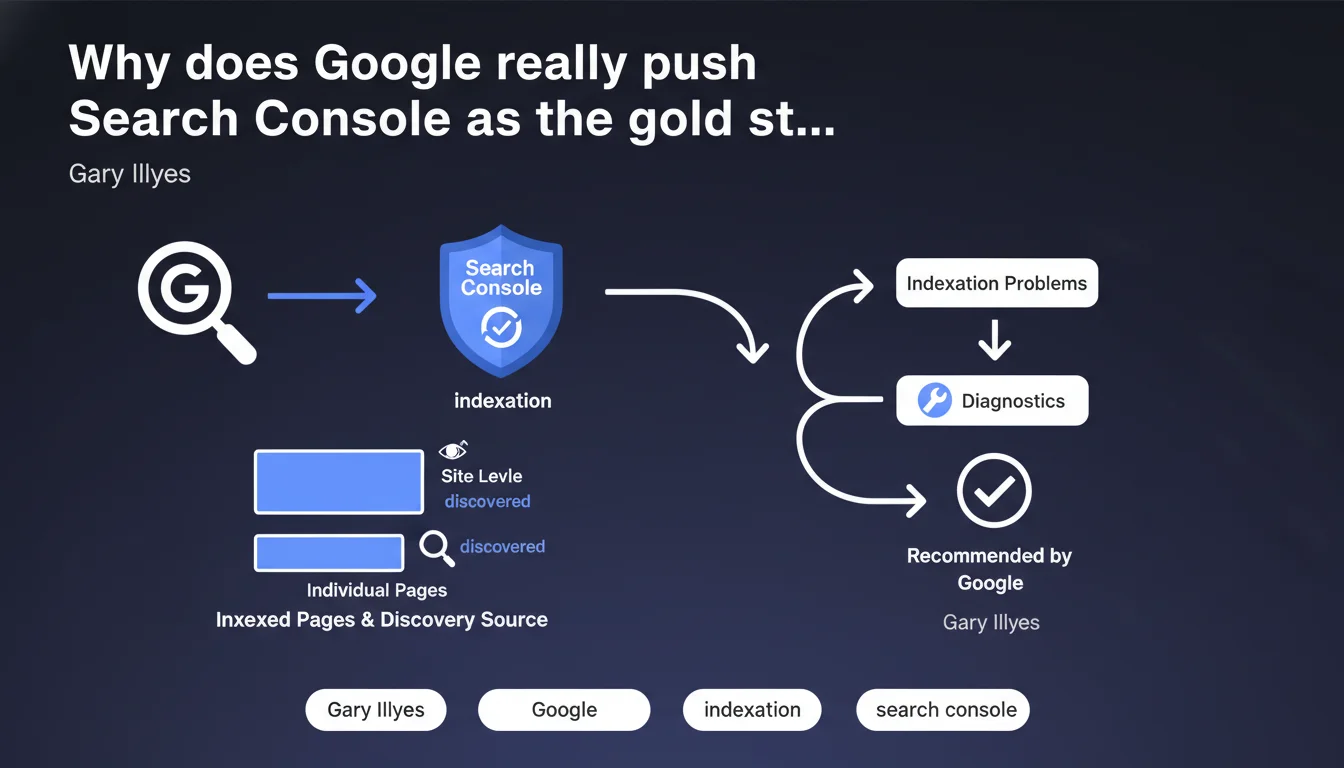

Google insists that Search Console (GSC) should be your primary tool for diagnosing indexation issues, rather than third-party solutions or manual checks. The tool lets you see how many pages are indexed and how Google discovered them, at both site-wide and granular levels. But this recommendation deserves some nuance: GSC has known limitations.

What you need to understand

Gary Illyes emphasizes Search Console as the reference standard for analyzing indexation. No surprise there — it's the official tool, directly connected to Google's data. But behind this statement lies a reality: many SEO professionals still rely on alternative methods (site: operator, third-party tools, server logs) out of habit or distrust.

GSC does centralize information about indexed page counts and discovery sources (sitemap, internal navigation, backlinks). In theory, it's the most reliable view of what Google actually sees — not what your tools think it sees.

What does Search Console actually reveal about indexation?

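The tool provides a Coverage Report (labeled "Page indexing" in the current interface) that separates indexed pages from non-indexed ones and gives a reason for each exclusion or error. For an individual URL, the URL Inspection tool also shows how Google discovered it: via a sitemap or through a referring page, whether internal or external.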

Practically speaking, you see how many pages are in the index, how many are blocked by robots.txt, marked noindex, or simply ignored. This is far more precise than a vague site: operator approximation.

Why does Google push this tool so hard?

Two likely reasons. First, to reduce the support burden: if everyone uses the same reference point, there are fewer tickets like "but site: shows 150 pages and your tool shows 120." Second, to centralize diagnostics within an environment Google controls completely.

But let's be honest: GSC has delayed data updates, documented display bugs, and limited granularity on certain technical aspects. It remains a starting point, not absolute truth.

- Search Console is Google's official source for indexation data

- It displays indexed pages, excluded pages, and discovery sources

- More reliable than site: or third-party estimates — in theory

- Still imperfect: update delays, occasional bugs, sometimes incomplete data

- Best combined with server log analysis for a complete picture

SEO Expert opinion

Is this recommendation actually new?

No. Google has been repeating this message for years. What's changing is the intensity — a sign that many SEO professionals continue to ignore GSC or only use it superficially. Gary Illyes is driving the point home, likely after seeing too many diagnostics based on rough approximations.

The core message is sound: GSC remains the best official source. But positioning it as the sole reference ignores real-world complexity.

What limitations should you keep in mind?

GSC doesn't show everything. It displays only what Google chooses to share, sometimes with significant delays. On large sites with thousands of pages, some URLs may take days to appear in reports — or disappear without clear explanation.

Server logs remain essential for seeing what Googlebot actually crawls, how often, and what it ignores. GSC won't tell you why one page gets crawled 50 times a day while another never gets touched. [To verify]: the gap between "discovered" and "crawled" isn't always transparent in the interface.
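To make the log side of this concrete, here is a minimal sketch that counts requests per URL path from clients identifying as Googlebot. It assumes your server writes the common "combined" access log format; the `googlebot_hits` helper and the sample lines are hypothetical, and a real audit would also confirm the requester is genuine Googlebot, since a user-agent string can be spoofed.

```python
import re
from collections import Counter

# Assumed layout (combined log format):
# host ident user [time] "METHOD path protocol" status size "referer" "user-agent"
LOG_LINE = re.compile(
    r'^(?P<host>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ '
    r'"[^"]*" "(?P<agent>[^"]*)"'
)

def googlebot_hits(lines):
    """Count requests per path whose user-agent claims to be Googlebot."""
    hits = Counter()
    for line in lines:
        match = LOG_LINE.match(line)
        # Lines that do not fit the assumed format are skipped silently.
        if match and "Googlebot" in match.group("agent"):
            hits[match.group("path")] += 1
    return hits

GOOGLEBOT_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
BROWSER_UA = "Mozilla/5.0 (X11; Linux x86_64)"

# Hypothetical sample lines for illustration only.
sample = [
    f'66.249.66.1 - - [22/Jun/2023:10:00:00 +0000] "GET /pricing HTTP/1.1" 200 512 "-" "{GOOGLEBOT_UA}"',
    f'66.249.66.1 - - [22/Jun/2023:10:05:00 +0000] "GET /pricing HTTP/1.1" 200 512 "-" "{GOOGLEBOT_UA}"',
    f'203.0.113.7 - - [22/Jun/2023:10:06:00 +0000] "GET /pricing HTTP/1.1" 200 512 "-" "{BROWSER_UA}"',
    f'66.249.66.1 - - [22/Jun/2023:10:07:00 +0000] "GET /old-page HTTP/1.1" 404 0 "-" "{GOOGLEBOT_UA}"',
]

hits = googlebot_hits(sample)
for path, count in hits.most_common():
    print(path, count)
```

Paths that matter to you but never show up in this tally are the ones worth investigating first.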

When does this recommendation create problems?

When Google itself displays contradictory data. The Coverage Report may show "indexed," but the page appears nowhere in search results — even with site: plus exact title match. Or indexed URL counts fluctuate 20% overnight without any site changes.

In these cases, blindly trusting GSC leads to diagnostic errors. Cross-reference with other sources: logs, independent crawl via Screaming Frog, manual checks of critical pages.

Practical impact and recommendations

What should you do concretely right now?

If you're not already using Search Console as the foundation of your indexation diagnostics, fix that immediately. Set up access for all your properties, and verify that all relevant domains and subdomains are added (www and non-www, HTTPS and HTTP if applicable).
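As a quick checklist aid, the sketch below lists the four protocol and www variants of a hostname and reports which ones are missing from a list of properties you have already verified. The helper names and the `verified` list are hypothetical; the list would be typed in by hand from your own account. A single Domain property covers all of these variants, so this check mainly matters when you rely on URL-prefix properties.

```python
def property_variants(hostname):
    """Return the four URL-prefix variants (https/http, bare/www) of a hostname."""
    bare = hostname[4:] if hostname.startswith("www.") else hostname
    hosts = (bare, "www." + bare)
    return [f"{scheme}://{host}/" for scheme in ("https", "http") for host in hosts]

def missing_properties(hostname, verified):
    """List the variants of `hostname` that are absent from `verified`."""
    # Normalize so that a missing trailing slash does not cause a false miss.
    verified_set = {url.rstrip("/") + "/" for url in verified}
    return [url for url in property_variants(hostname) if url not in verified_set]

# Hypothetical list of properties already added to Search Console.
verified = ["https://www.example.com/", "https://example.com"]
print(missing_properties("example.com", verified))
```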

Review the Coverage Report at least once a week. Identify pages marked "Excluded" or "Error" and document the reasons. Don't stop at the overall numbers; dig into the details by exclusion type.

What common mistakes should you avoid with this tool?

Don't operate in isolation. GSC says a page is indexed? Verify manually with site: and an exact title search. If it doesn't appear, investigate: the cause may be content cannibalization, duplicate content, or simply a reporting lag on Google's side.

Another trap: ignoring discovery sources. If your critical pages are discovered only via sitemap and never through natural crawling, your internal linking is likely broken. GSC shows you this — if you bother to look.
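One way to spot this pattern outside GSC is to compare the URLs in your sitemap with the URLs a crawler reaches by following internal links. The sketch below assumes a standard sitemaps.org XML file and a set of internally linked URLs exported from a crawl; `sitemap_only` and the sample data are hypothetical names used for illustration.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}

def sitemap_urls(xml_text):
    """Extract the <loc> values from a sitemap document."""
    root = ET.fromstring(xml_text)
    return {
        loc.text.strip()
        for loc in root.findall("sm:url/sm:loc", SITEMAP_NS)
        if loc.text
    }

def sitemap_only(xml_text, internally_linked):
    """URLs listed in the sitemap that no internal link points to."""
    return sorted(sitemap_urls(xml_text) - set(internally_linked))

# Hypothetical sitemap and crawl export.
sample_sitemap = """
<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/</loc></url>
  <url><loc>https://example.com/pricing</loc></url>
  <url><loc>https://example.com/landing-2023</loc></url>
</urlset>
"""
linked = {"https://example.com/", "https://example.com/pricing"}

print(sitemap_only(sample_sitemap, linked))
```

Any URL in the result depends entirely on the sitemap for discovery, which is the internal linking gap described above.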

How do you combine GSC with other tools for complete diagnostics?

Use GSC as your starting point, then validate with server logs (actual Googlebot crawl), Screaming Frog crawl (perceived architecture), and manual checks (actual indexation). Each source brings a different angle.
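A simple way to frame this cross-check is as set differences between three URL lists: what GSC reports as indexed, what Googlebot requested according to your logs, and what an independent crawl found through links. The sketch below assumes you have already exported each list; the function name, bucket labels, and sample URLs are all hypothetical.

```python
def cross_reference(gsc_indexed, crawled_by_googlebot, found_by_crawler):
    """Bucket URLs by disagreement between GSC, server logs, and a site crawl."""
    gsc = set(gsc_indexed)
    logs = set(crawled_by_googlebot)
    crawl = set(found_by_crawler)
    return {
        # GSC says indexed, yet no Googlebot request in the log window.
        "indexed_not_in_logs": sorted(gsc - logs),
        # Googlebot fetched it, but GSC does not list it as indexed.
        "crawled_not_indexed": sorted(logs - gsc),
        # Indexed, but unreachable through internal links (possible orphan).
        "indexed_not_linked": sorted(gsc - crawl),
        # Reachable through links, but not indexed.
        "linked_not_indexed": sorted(crawl - gsc),
    }

# Hypothetical exports.
report = cross_reference(
    gsc_indexed=["/", "/pricing", "/old-campaign"],
    crawled_by_googlebot=["/", "/pricing", "/blog/draft"],
    found_by_crawler=["/", "/pricing", "/blog/draft", "/contact"],
)
for bucket, urls in report.items():
    print(bucket, urls)
```

Each bucket points to a different follow-up: log coverage windows, quality or canonical issues, orphan pages, or pages Google has not picked up yet.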

On a complex site (thousands of URLs, multilingual, e-commerce), this cross-analysis quickly becomes time-consuming. Setting up GSC alerts (sudden indexation drops, error spikes) helps, but interpretation stays manual.

- Verify all domains/subdomains are added to Search Console

- Review the Coverage Report at least once a week

- Cross-reference GSC with server logs and independent crawls

- Analyze discovery sources to spot internal linking gaps

- Never rely on GSC as your sole source of truth

- Set up automated alerts for indexation fluctuations
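The last item in this checklist can be approximated with a small script over indexed-page counts that you record yourself, for example by noting the figure from the report each day. The sketch below flags any day-over-day change of 20% or more, the order of magnitude mentioned earlier; the threshold, the `indexation_alerts` helper, and the sample counts are assumptions for illustration.

```python
def indexation_alerts(daily_counts, threshold=0.20):
    """Flag days where the indexed count moved by `threshold` or more.

    daily_counts: list of (date_string, indexed_count) in chronological order.
    Returns a list of (date_string, relative_change) tuples.
    """
    alerts = []
    for (_, previous), (day, current) in zip(daily_counts, daily_counts[1:]):
        if previous == 0:
            continue  # Relative change is undefined from a zero baseline.
        change = (current - previous) / previous
        if abs(change) >= threshold:
            alerts.append((day, round(change, 3)))
    return alerts

# Hypothetical counts recorded by hand.
history = [
    ("2023-06-20", 1000),
    ("2023-06-21", 990),
    ("2023-06-22", 780),
    ("2023-06-23", 800),
]
print(indexation_alerts(history))
```

A flagged day is a prompt to check logs and recent deployments, not a diagnosis in itself.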

❓ Frequently Asked Questions

Does Search Console completely replace server log analysis?

Why does the number of indexed pages vary so much from one day to the next in GSC?

Can you rely on the site: operator to check indexation?

What should you do if a page appears as indexed in GSC but is invisible in the SERPs?

How can you tell whether Google is discovering your new pages quickly enough?

Other SEO insights extracted from this same Google Search Central video · published on 22/06/2023
