Official statement

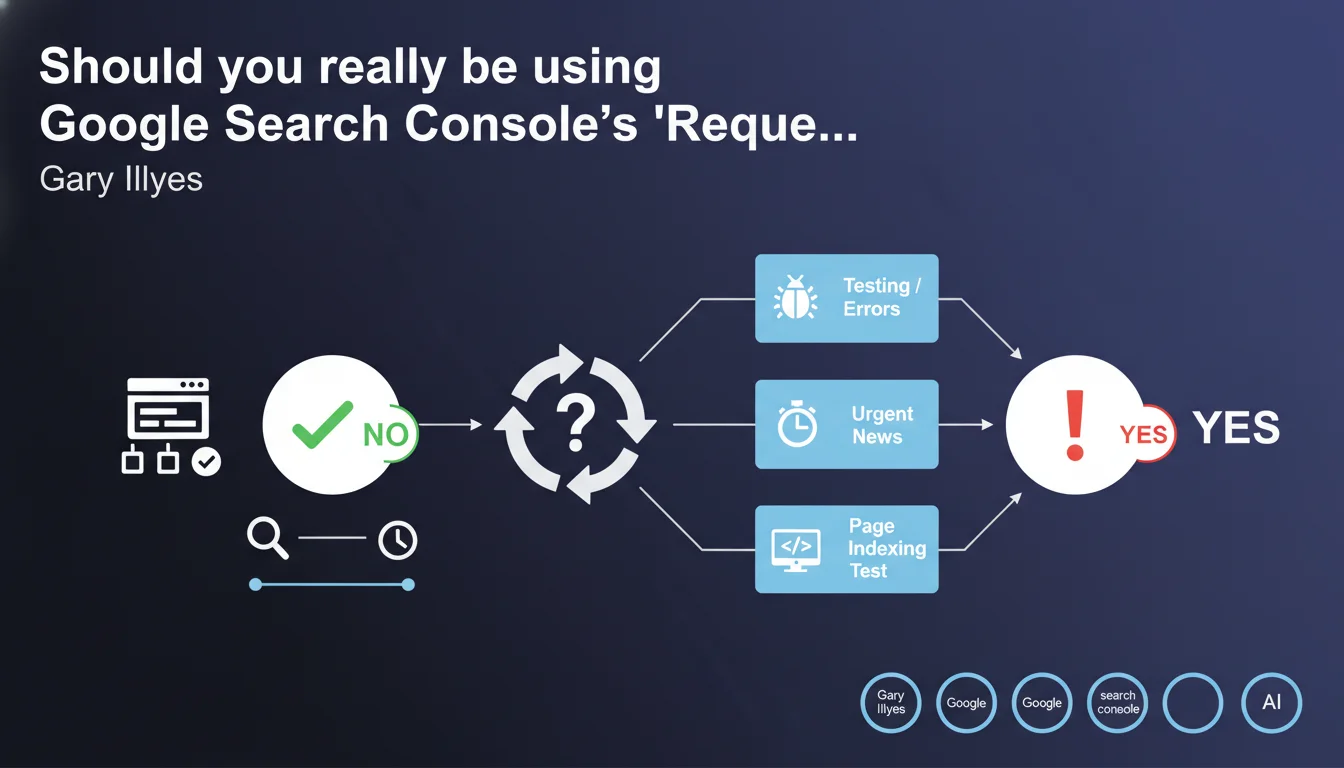

Gary Illyes states that the 'Request indexing' feature is generally unnecessary for normally built websites. It is primarily useful for diagnosing indexability issues or for handling urgent cases, such as breaking news that requires immediate indexing. For everything else, natural crawling is sufficient.

What you need to understand

Why does Google downplay the importance of this feature?

The 'Request indexing' function has existed in Search Console for years, and many SEOs use it systematically after every content update. Yet Google is clarifying here that it provides little added value for a well-built website.

The underlying message: if your technical architecture is solid (a working XML sitemap, no crawl budget wasted on low-value URLs, proper internal linking), Googlebot will discover and index your pages without manual intervention. The indexing request should only be used in specific cases.

In which cases does this feature remain relevant?

Gary Illyes mentions three scenarios: testing a page's indexability (verifying that no directive blocks crawling or indexing), detecting technical errors (robots.txt, robots meta tags, canonicals), and urgent news requiring immediate indexing.

Concretely, if you publish an exclusive scoop or critical information, the indexing request can accelerate the process. But for the vast majority of standard content, it's unnecessary.
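The indexability checks listed above can be approximated offline before opening Search Console. The following is a minimal sketch using only the Python standard library; the function name `indexability_issues` and the sample URLs are hypothetical, and it assumes you already have the page HTML and the robots.txt content as strings. It does not inspect the `X-Robots-Tag` HTTP header, which can also carry a `noindex`.

```python
from html.parser import HTMLParser
from urllib.robotparser import RobotFileParser


class _HeadSignals(HTMLParser):
    """Collects robots meta directives and the canonical URL from a page."""

    def __init__(self):
        super().__init__()
        self.robots = ""
        self.canonical = None

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        name = (attrs.get("name") or "").lower()
        rel = (attrs.get("rel") or "").lower()
        if tag == "meta" and name in ("robots", "googlebot"):
            self.robots += " " + (attrs.get("content") or "").lower()
        elif tag == "link" and rel == "canonical":
            self.canonical = attrs.get("href")


def indexability_issues(url, html, robots_txt, user_agent="Googlebot"):
    """Return a list of human-readable blockers found for `url` (hypothetical helper)."""
    issues = []

    # 1. robots.txt: is the crawler allowed to fetch this URL at all?
    rules = RobotFileParser()
    rules.parse(robots_txt.splitlines())
    if not rules.can_fetch(user_agent, url):
        issues.append("blocked by robots.txt")

    # 2. Robots meta tag and canonical declared in the HTML.
    signals = _HeadSignals()
    signals.feed(html)
    if "noindex" in signals.robots:
        issues.append("meta robots noindex")
    if signals.canonical and signals.canonical.rstrip("/") != url.rstrip("/"):
        issues.append(f"canonical points to {signals.canonical}")
    return issues
```

An empty list means none of these three blockers were found; it does not guarantee that Google will index the page.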

What does Google mean by 'a normally built website'?

The expression is intentionally vague. It likely refers to a site with clean technical architecture, regularly updated content, and correct quality signals (traffic, engagement, backlinks).

If Google already visits your site several times a day on its own, requesting indexing becomes redundant. Conversely, a site with low crawl budget or recurring technical issues gets at most a temporary benefit from this feature: you need to fix the root causes first.
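One way to check how often Googlebot visits is to count its requests in your server access logs. This is a rough sketch that assumes logs in the common "combined" format; the function name `googlebot_hits_per_day` is hypothetical. It matches on the user-agent string only, which can be spoofed, so treat the counts as an approximation rather than verified Googlebot traffic.

```python
import re
from collections import Counter
from datetime import datetime

# Assumed "combined" log format: ... [timestamp] "METHOD path PROTO" status size "referer" "user-agent"
LOG_LINE = re.compile(
    r'\[(?P<ts>[^\]]+)\] "(?:GET|HEAD) \S+[^"]*" \d{3} \S+ "[^"]*" "(?P<ua>[^"]*)"'
)


def googlebot_hits_per_day(lines):
    """Count requests per calendar day whose user agent mentions Googlebot."""
    hits = Counter()
    for line in lines:
        match = LOG_LINE.search(line)
        if match and "Googlebot" in match.group("ua"):
            stamp = datetime.strptime(match.group("ts"), "%d/%b/%Y:%H:%M:%S %z")
            hits[stamp.date().isoformat()] += 1
    return dict(hits)
```

Days with several hits on fresh URLs suggest that natural crawling is already doing the job.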

- The 'Request indexing' feature does not significantly speed up indexation on a well-optimized site

- It primarily serves to diagnose problems rather than force indexation

- Legitimate use cases: urgent news, technical testing, correcting critical errors

- A site with good crawl budget and solid architecture doesn't need this crutch

SEO Expert opinion

Is this statement consistent with real-world observations?

Let's be honest: most experienced SEOs have already noticed that manually requesting indexation brings only marginal gains. Important pages are usually crawled quickly if the site is well-structured.

Where it gets tricky is that Google doesn't specify how long natural crawling takes. Between a few hours and several weeks, the difference can be huge depending on the site. For a news outlet, waiting even 24 hours can be unacceptable. [To verify]: What is the average natural indexation timeframe for a 'normal' site?

Why is Google emphasizing this point now?

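Probably because too many SEOs overuse this function. Submitting every new or updated URL by hand burns through the tool's limited daily quota, and Google's own documentation notes that requesting a recrawl of the same URL several times will not get it crawled any faster.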

The implicit message: stop spamming this feature. If you need to use it systematically, it means your indexation strategy is built on shaky foundations — and that's what you need to fix first.

What are the unstated limitations of this statement?

Gary Illyes doesn't mention sites with low crawl budget, very new sites without history, or entire sections struggling to be discovered despite a correct sitemap. In these cases, requesting indexation can actually help — even if it's just a band-aid.

And that's where the statement becomes frustrating: it addresses 'normally built sites' but gives no way to determine if your site qualifies. No metrics, no thresholds, no objective criteria. [To verify]: How does Google concretely define this 'normal quality'?

Practical impact and recommendations

What should you actually do with this information?

First step: stop using this feature by default after every publication. Test whether your content is indexed naturally within an acceptable timeframe — 48 to 72 hours for a well-crawled site.

Use the feature only for documented emergency cases: critical news, major bug fixes, or indexability testing after technical changes. Document these cases to evaluate whether the request actually accelerates the process.

How do you know if your site needs this crutch?

Monitor the Page indexing report (formerly the Coverage report) in Search Console. If your new pages systematically appear under 'Discovered - currently not indexed', it's a signal that your crawl budget is insufficient or your internal linking isn't doing its job.

Compare publication date with first indexation date. A gap greater than a week on standard content reveals a structural problem — and manually requesting indexation won't solve it.
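The comparison between publication date and first indexation date can be tracked with a small script. This is a sketch under assumptions: the function name `indexation_report` is hypothetical, and it expects you to supply the first-indexed dates yourself (for example, noted by hand from the URL Inspection tool), with `None` for pages not yet indexed. The 7-day threshold mirrors the one-week gap mentioned above.

```python
from datetime import date
from statistics import median


def indexation_report(records, max_days=7):
    """Summarise indexation lag from (url, published, first_indexed) tuples."""
    lags = {}
    never_indexed = []
    for url, published, first_indexed in records:
        if first_indexed is None:
            never_indexed.append(url)
        else:
            lags[url] = (first_indexed - published).days
    return {
        # Median is less sensitive than the mean to one very slow page.
        "median_days": median(lags.values()) if lags else None,
        "slow": sorted(url for url, days in lags.items() if days > max_days),
        "never_indexed": never_indexed,
    }
```

If the "slow" and "never_indexed" lists keep growing, the problem is structural and manual requests are unlikely to fix it.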

What are more effective alternatives?

Focus on the technical fundamentals: optimize your XML sitemap, improve internal linking to new pages, reduce crawl depth, increase update frequency.
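As an illustration of the sitemap point, here is a minimal sketch that regenerates an XML sitemap from a list of pages and their last-modified dates. The function name `build_sitemap` is hypothetical, and where the page list comes from (a CMS query, a database, a file scan) is left as an assumption; the element names follow the sitemaps.org protocol.

```python
import xml.etree.ElementTree as ET
from datetime import date

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages):
    """Build sitemap XML from an iterable of (absolute_url, last_modified_date)."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, last_modified in pages:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        # lastmod uses the W3C date format (YYYY-MM-DD).
        ET.SubElement(url, "lastmod").text = last_modified.isoformat()
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body
```

Calling this at publish time, or from a scheduled job, keeps `lastmod` values accurate, which is the part of a "dynamic" sitemap that matters most for recrawling.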

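For urgent launches, IndexNow lets you push URLs to participating search engines such as Bing and Yandex, but Google has not adopted it to date. Google's Indexing API is officially reserved for eligible content, namely pages with job posting or livestream (BroadcastEvent) structured data, so it does not cover ordinary news articles. Where they apply, these solutions are more scalable than manual requests.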
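For reference, an IndexNow submission is a single JSON POST. The sketch below only builds the request and does not send it; the function name `build_indexnow_request` is hypothetical, and it assumes the key file is hosted at the root of your host as `<key>.txt`, which is one of the placements the protocol allows. Note that this reaches IndexNow participants, not Google.

```python
import json
from urllib.request import Request

INDEXNOW_ENDPOINT = "https://api.indexnow.org/indexnow"


def build_indexnow_request(host, key, urls):
    """Prepare (but do not send) an IndexNow bulk submission."""
    payload = {
        "host": host,
        "key": key,
        # Assumption: the key file sits at the site root.
        "keyLocation": f"https://{host}/{key}.txt",
        "urlList": list(urls),
    }
    return Request(
        INDEXNOW_ENDPOINT,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json; charset=utf-8"},
        method="POST",
    )
```

To actually submit, pass the returned object to `urllib.request.urlopen` and check the HTTP status of the response.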

- Stop systematically using 'Request indexing' after every publication

- Measure the natural indexation timeframe of your content over 30 days

- Reserve this feature for urgent news, technical testing, or critical fixes

- Audit your crawl budget and internal linking structure if delays exceed 72 hours

- Document each use to evaluate its real impact

- Implement a dynamic sitemap that updates automatically

- Eliminate orphaned pages and reduce navigation depth
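The last item in this checklist can be audited with a breadth-first traversal of your internal link graph. This is a sketch with hypothetical names (`crawl_depths`, `orphaned_pages`); it assumes you have already extracted the links, for example from a crawler export, into a dictionary mapping each page to the pages it links to.

```python
from collections import deque


def crawl_depths(links, home):
    """Return the minimum number of clicks from `home` to each reachable page."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, ()):
            if target not in depths:  # first visit is the shortest path in BFS
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


def orphaned_pages(links, home, known_pages):
    """Pages you know exist (e.g. from the sitemap) that no click path reaches."""
    reachable = crawl_depths(links, home)
    return sorted(set(known_pages) - set(reachable))
```

Pages with a large depth value, or pages returned by `orphaned_pages`, are the ones most likely to sit undiscovered regardless of how many indexing requests you send.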

❓ Frequently Asked Questions

Does the 'Request indexing' feature really speed up indexing?

How many indexing requests can you make per day?

When is using this feature actually justified?

What is a 'normal-quality site' according to Google?

What should you do if your pages are still not indexed after a request?

🎥 From the same video 17

Other SEO insights extracted from this same Google Search Central video · published on 22/06/2023
