Official statement

Other statements from this video 17 ▾

- □ Pourquoi votre site n'apparaît-il pas dans Google : indexation ou ranking ?

- □ Pourquoi Google pousse-t-il Search Console pour diagnostiquer l'indexation ?

- □ L'URL Inspection Tool de Search Console remplace-t-il vraiment le test d'indexation manuel ?

- □ Le rapport d'indexation de la Search Console suffit-il vraiment à diagnostiquer vos problèmes d'indexation ?

- □ Faut-il vraiment chercher à indexer 100% de ses pages ?

- □ Pourquoi Google indexe-t-il toujours la page d'accueil en premier sur un nouveau site ?

- □ Pourquoi la page d'accueil de votre nouveau site ne s'indexe-t-elle pas ?

- □ Pourquoi votre homepage n'apparaît-elle toujours pas dans l'index Google ?

- □ Votre site est-il vraiment absent de l'index Google ou juste victime de la canonicalisation ?

- □ Hreflang fausse-t-il vos rapports d'indexation dans Search Console ?

- □ Pourquoi vos pages 'site en construction' ne seront jamais indexées par Google ?

- □ Pourquoi certaines pages s'indexent en quelques secondes et d'autres jamais ?

- □ Google peut-il encore indexer l'intégralité du web ?

- □ Google applique-t-il vraiment un quota d'indexation par site ?

- □ Faut-il supprimer l'ancien contenu pour améliorer l'indexation du nouveau ?

- □ Faut-il vraiment utiliser la fonction 'Demander une indexation' de la Search Console ?

- □ Comment exploiter vraiment l'opérateur site: au-delà de la simple vérification d'indexation ?

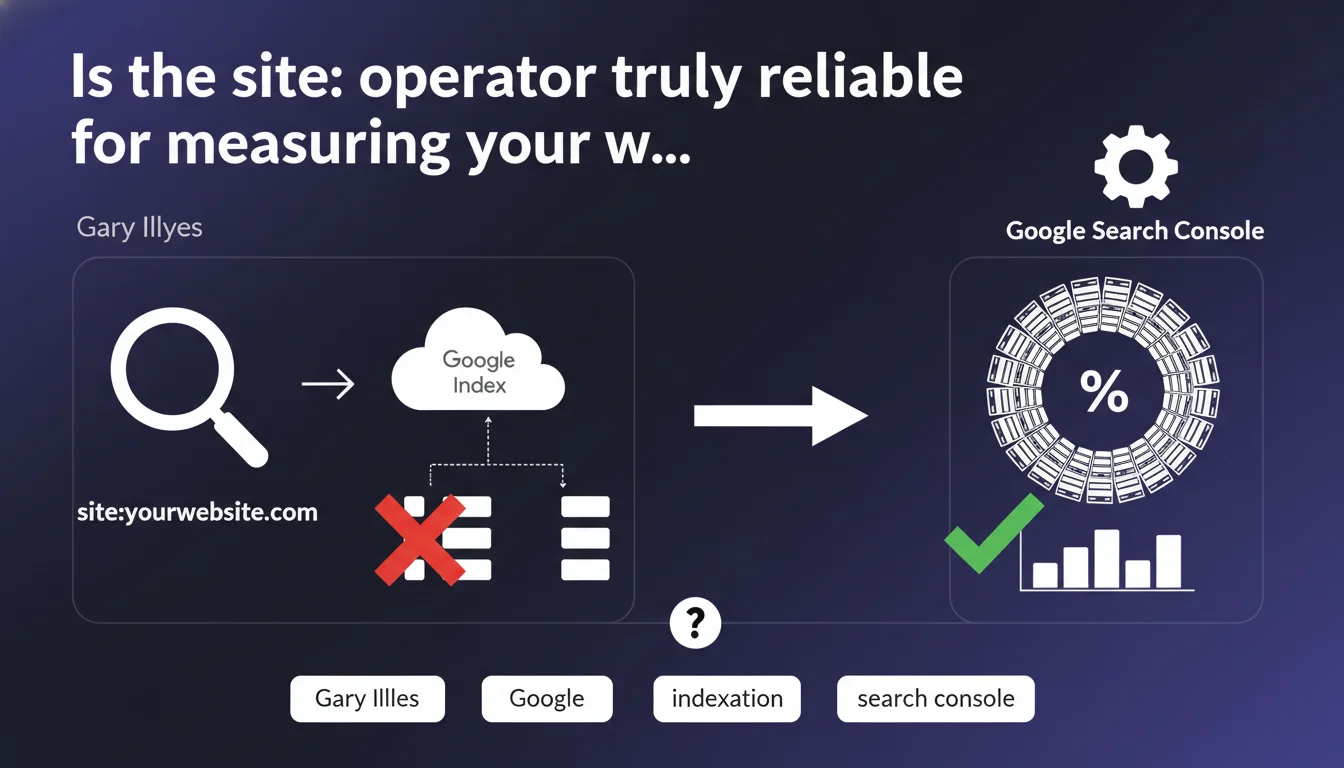

Google confirms that the site: command returns only a selection of indexed pages, not all of them. To know the real number of pages in the index, Search Console remains the only reliable source. This technical limitation calls into question an auditing practice that is still very widespread.

What you need to understand

Why can't the site: operator display everything?

Illyes' statement addresses a persistent belief: many SEO professionals still use site:example.com to quickly assess indexation. The problem? Google only loads a subset of results, never the complete set.

Technically, displaying millions of results through the search interface makes no sense for Google — it consumes resources without delivering user value. The site: operator was never designed as an exhaustive accounting tool, but rather as a search filter among others.

What's the difference with Search Console data?

Search Console taps directly into Google's indexation databases. No filtering, no sampling — just raw numbers. The coverage report gives you the exact count of indexed pages, excluded pages, and pages with errors.

Conversely, site: applies ranking mechanisms and deduplication that can hide pages that are technically indexed but deemed irrelevant for this display. You see 500 results? That absolutely doesn't mean you only have 500 pages in the index.

Is this limitation new?

No. Google has mentioned it before, but the practice persists. Illyes' reminder aims to definitively kill the myth: stop counting site: results as a reliable metric.

The real change is that Google is now communicating more directly about these technical limitations, whereas previously it left things vague.

- The site: operator returns a sample, never all indexed pages

- Search Console is the only reliable source for knowing the real indexation status

- This limitation is not a bug — it's an architectural constraint that Google accepts

- Site: results undergo filtering, ranking, and deduplication, which skews the count

SEO Expert opinion

Is this statement consistent with real-world observations?

Absolutely. For years, we've observed massive gaps between site: result counts and GSC data. Sites with 10,000 pages indexed in Search Console sometimes show only 1,200 results in site: — and vice versa.

The issue is that many clients, junior agencies, and even some SEO tools continue to rely on site: for their indexation reports. This official statement validates what we already know: as a way to count indexed pages, it's an unreliable metric.

What nuances should be applied to this claim?

Let's be honest: site: remains useful for spot-checks. Example: verifying that a new strategic page has been indexed, checking that a sensitive directory isn't accidentally indexed, spotting duplicate content by combining it with other operators.

But for global indexation reporting, it's dead. The gap between site: and GSC can reach 50 to 80% on some sites, and you can't build a strategy on sand.
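To make the size of such a gap concrete, here is a minimal sketch of the arithmetic in Python. The function name `indexation_gap` is ours, and the figures are the illustrative ones quoted earlier in this article (10,000 pages in Search Console, 1,200 results in site:), not measured data.

```python
def indexation_gap(gsc_indexed: int, site_results: int) -> float:
    """Share of GSC-indexed pages missing from the site: count, in percent.

    A negative value means site: reported more results than GSC.
    """
    if gsc_indexed <= 0:
        raise ValueError("gsc_indexed must be positive")
    return (gsc_indexed - site_results) * 100 / gsc_indexed


# Illustrative figures taken from the example in this article.
gap = indexation_gap(gsc_indexed=10_000, site_results=1_200)
print(f"site: under-reports the index by {gap:.0f}%")
```

A figure like this is only useful for showing a client why the two numbers disagree. It should not be tracked as a metric in its own right.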

[To verify]: Google doesn't explicitly clarify which criteria govern the selection of pages displayed via site:. We assume it's related to ranking, freshness, and perceived quality — but there's no official confirmation. Intentional vagueness or technical complexity? Probably both.

In which cases does this rule cause problems?

The sticking point is quick audits without GSC access. When you don't have Search Console rights (because the client won't grant them, because it's a competitor you're analyzing, or because it's a preliminary audit), you were already missing key data. Now it's official: you're working blind.

Second problem area: third-party SEO tools that scrape site: results to estimate indexation. This statement makes those metrics completely obsolete — yet some platforms continue to display them as flagship metrics.

Practical impact and recommendations

What should you do concretely to measure indexation?

First rule: Search Console becomes non-negotiable. If you manage a site without GSC access, you're not managing anything — you're guessing. Request read-only rights at minimum, set up a dedicated account if needed, but never work without this data again.

Second point: stop tracking site: variations in your dashboards. They measure nothing, create false alerts, and waste your time. Focus on the GSC coverage report: indexed pages, excluded pages, errors.

What errors should you avoid in tracking indexation?

Never rely on a single number. Indexation is a moving state: pages enter, exit, or move to a status such as "Discovered - currently not indexed". A 5-10% delta from week to week isn't necessarily alarming.
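One way to apply that tolerance is to flag only the swings that fall outside it. The sketch below assumes weekly indexed-page counts copied from the GSC report. The readings, the function names, and the 10% threshold are all hypothetical choices for illustration.

```python
def weekly_delta(previous: int, current: int) -> float:
    """Percent change in indexed pages between two weekly readings."""
    if previous <= 0:
        raise ValueError("previous must be positive")
    return (current - previous) * 100 / previous


def needs_review(previous: int, current: int, tolerance_pct: float = 10.0) -> bool:
    """Flag only swings strictly larger than the tolerated band (assumed 10%)."""
    return abs(weekly_delta(previous, current)) > tolerance_pct


# Hypothetical weekly indexed-page counts read from the GSC report.
readings = [9_800, 9_450, 9_600, 7_900]
for before, after in zip(readings, readings[1:]):
    flag = "review" if needs_review(before, after) else "ok"
    print(f"{before} -> {after}: {weekly_delta(before, after):+.1f}% ({flag})")
```

With these sample readings, only the last drop exceeds the band. A flag is a prompt to open the report and look, not a diagnosis.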

Classic mistake: panicking because site: shows fewer results than before. That means nothing. Check GSC, cross-reference with server logs if you have them, look at crawl rate and 4xx/5xx errors.
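The log cross-check mentioned above can be sketched as follows. This assumes access logs in the common "combined" format and identifies Googlebot by user agent only, which can be spoofed (a stricter check would verify the requesting IP). The sample lines and the helper name `googlebot_status_counts` are made up for illustration.

```python
import re
from collections import Counter

# Extracts path, status code and user agent from a combined-format log line.
LOG_LINE = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def googlebot_status_counts(lines):
    """Count Googlebot requests per status class and collect 4xx/5xx paths."""
    counts, failing = Counter(), []
    for line in lines:
        match = LOG_LINE.search(line)
        if not match or "Googlebot" not in match["agent"]:
            continue  # not a parsable line, or not a (claimed) Googlebot hit
        status = match["status"]
        counts[status[0] + "xx"] += 1
        if status[0] in "45":
            failing.append((match["path"], status))
    return counts, failing


# Hypothetical log excerpt, for illustration only.
GOOGLEBOT_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
sample = [
    f'66.249.66.1 - - [22/Jun/2023:10:00:00 +0000] "GET /guide HTTP/1.1" 200 5120 "-" "{GOOGLEBOT_UA}"',
    f'66.249.66.1 - - [22/Jun/2023:10:00:05 +0000] "GET /old-page HTTP/1.1" 404 310 "-" "{GOOGLEBOT_UA}"',
    '203.0.113.7 - - [22/Jun/2023:10:00:09 +0000] "GET /cart HTTP/1.1" 500 0 "-" "Mozilla/5.0 (X11; Linux x86_64)"',
]

counts, failing = googlebot_status_counts(sample)
print(dict(counts), failing)
```

Paths that Googlebot keeps requesting but that return errors are natural candidates to compare against the excluded pages listed in GSC.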

Another trap: using third-party tools that scrape site: to "estimate" indexation. This data is now officially recognized as inaccurate — stop trusting it.

How do you verify that your site is properly indexed?

- Connect your site to Google Search Console (validated property, sitemap submitted)

- Check the Coverage or Pages report (depending on GSC version) for the real count

- Identify excluded pages that are otherwise valid: they're technically indexable but Google chooses not to include them (weak content, duplication, canonicalization)

- Cross-reference with server logs to spot pages crawled but not indexed

- Monitor crawl errors and blocking directives (4xx, 5xx, robots.txt rules, noindex meta tags)

- Use site: only for spot-checks (presence of a specific page, detection of indexation leaks)

- Automate tracking via the Search Console API if you manage multiple sites or large volumes
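As a rough sketch of the automation step, the snippet below queries the URL Inspection method of the Search Console API for a list of URLs and tallies the coverage states it returns. It assumes `service` is an authenticated client, for example one built with `googleapiclient.discovery.build("searchconsole", "v1", credentials=...)`. The helper names are ours, and credential setup is left out. This inspects URLs one by one and is subject to API quotas, so it complements the Pages report and does not replace its total.

```python
from collections import Counter


def inspect_url(service, site_url: str, page_url: str) -> str:
    """Return the coverage state reported for one URL of a verified property.

    `service` is assumed to be a Search Console API client (see lead-in).
    """
    body = {"inspectionUrl": page_url, "siteUrl": site_url}
    response = service.urlInspection().index().inspect(body=body).execute()
    result = response.get("inspectionResult", {}).get("indexStatusResult", {})
    return result.get("coverageState", "unknown")


def coverage_summary(service, site_url: str, page_urls) -> Counter:
    """Tally coverage states across a list of strategic URLs."""
    return Counter(inspect_url(service, site_url, url) for url in page_urls)


# Hypothetical usage, once `service` has been built with valid credentials:
# summary = coverage_summary(service, "https://example.com/", strategic_urls)
# for state, count in summary.most_common():
#     print(f"{count:>5}  {state}")
```

Restricting the input to strategic URLs (key landing pages, recently published content) keeps the number of calls low and the output readable.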

In short: Search Console is your only source of truth for indexation. The site: operator can serve as a quick check, but never as a steering metric. Adjust your processes, reporting, and tools accordingly.

These strategic adjustments (notably fine-grained exploitation of Search Console data, correlation with server logs, and detection of weak indexation signals) require specialized technical expertise. If your team lacks the resources or skills to interpret these signals and continuously adjust your strategy, partnering with an SEO agency experienced in these issues can make the difference between approximate tracking and truly actionable management.

❓ Frequently Asked Questions

Can you still use the site: operator for quick audits?

What is the margin of error between site: and Search Console?

How do you explain to a client that their 50,000 pages only generate 8,000 site: results?

Do SEO tools that display a "number of indexed pages" rely on site:?

If I don't have access to Search Console, how can I measure indexation?

Other SEO insights extracted from this same Google Search Central video · published on 22/06/2023
