Official statement

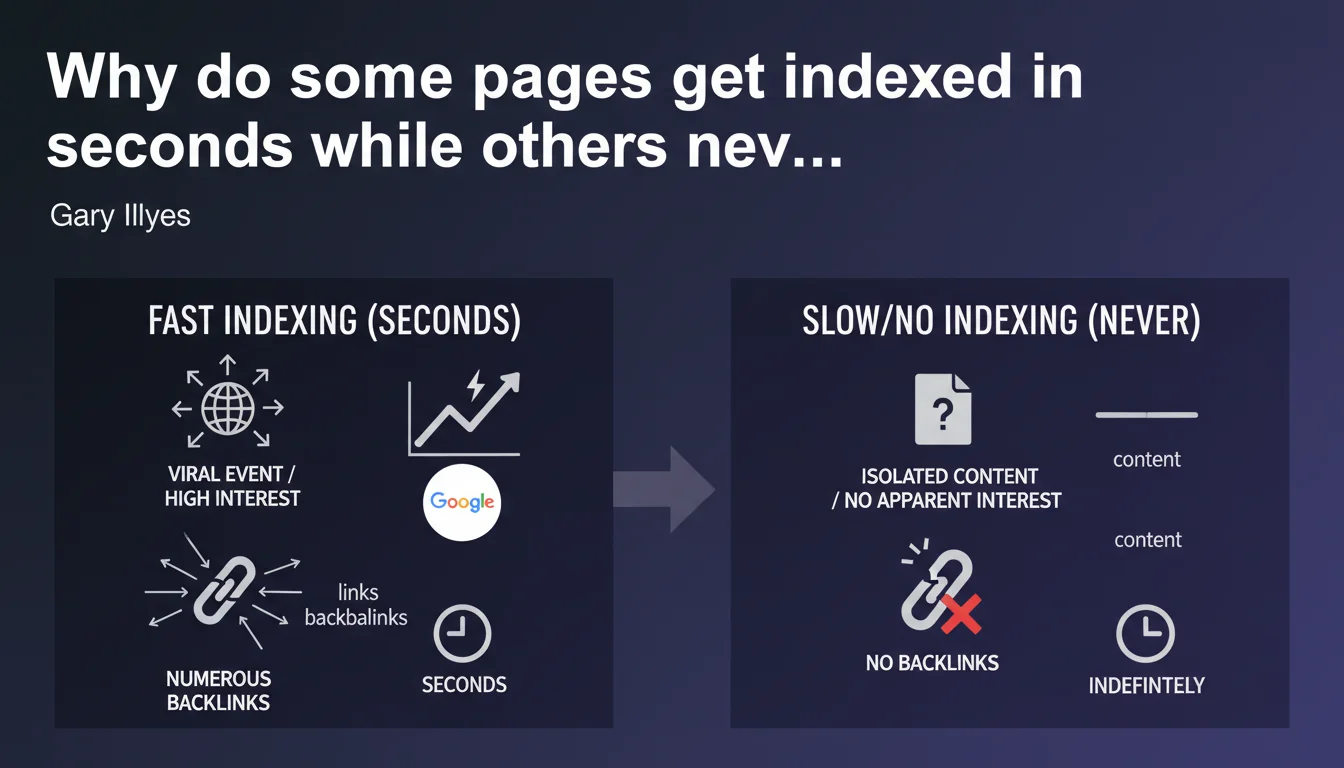

Gary Illyes confirms that indexing speed varies from a few seconds to indefinitely depending on the perceived interest of the content. A viral event with numerous backlinks (like Eurovision) gets indexed almost instantly, while an isolated page without interest signals may never be indexed. Link popularity and site structure remain the determining factors.

What you need to understand

What actually triggers fast indexing?

Gary Illyes points to two main criteria: virality and the volume of incoming backlinks. When an event generates a massive flow of backlinks in a short time, Google's algorithms detect the interest and prioritize indexing.

The Eurovision example is not random. We're talking about an event followed live by millions of people and covered simultaneously by hundreds of media outlets. Social signals, mentions, and editorial links arrive in real time, which is exactly the type of content Google wants to serve immediately.

Why do some pages never get indexed at all?

The word "indefinitely" is harsh but revealing. Google doesn't crawl the entire web out of charity. If a page has no incoming links, no popularity signals, no observable direct traffic, the algorithm considers it unworthy of attention.

This is where the concept of crawl budget becomes crucial. Google allocates limited resources to each site. If your orphaned pages or weak content generate no engagement signals, they get deprioritized — and in practice, they're never visited.

Does this logic apply to all types of sites?

Short answer: yes, but with nuances.

An established authority site benefits from a more generous crawl budget. Its new pages have a better chance of being discovered quickly, even without immediate external links. Conversely, a small site with no track record must trigger indexation through clear external signals.

- Immediate popularity (links, mentions, traffic) = indexation in seconds to minutes

- Isolated content without signals = risk of permanent non-indexation

- Limited crawl budget = strict prioritization based on perceived interest

- Domain authority = slight advantage but no absolute guarantee

SEO Expert opinion

Is this statement consistent with real-world observations?

Absolutely. We've observed for years that news sites index their articles within minutes, while product pages on low-authority e-commerce sites may wait days or even disappear from the radar.

The problem is that Gary Illyes oversimplifies. He doesn't mention the role of XML sitemaps, the Indexing API, or manual submissions via Search Console. These levers exist and work — but their effectiveness remains conditional on content quality and external signals. [To verify]: Does manual submission really accelerate indexation on a site without authority, or does it merely trigger a visit without guaranteeing indexation?

What nuances should be added to this statement?

Popularity isn't just about backlinks. Google plausibly also draws on behavioral signals, Chrome data, and direct traffic. Content can be indexed quickly if it attracts direct traffic before acquiring any links: think newsletters with large readerships or content shared internally within companies.

Another point: topical freshness. On trending topics (crypto, AI, political news), Google indexes faster even for mid-tier sites. The algorithm anticipates demand. On stable or older niches, the bar is higher.

In what cases does this rule not apply?

Sites eligible for the Indexing API (officially limited to job postings and livestream videos) partially circumvent this logic. A submitted URL is usually crawled within minutes, regardless of immediate popularity, although Google does not guarantee that it will be indexed.
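As a rough sketch of what an Indexing API notification looks like, the snippet below only builds the request body and sends nothing. The endpoint and the `URL_UPDATED` / `URL_DELETED` types come from Google's public documentation. The helper name `build_notification` and the example URL are illustrative assumptions, and a real call also needs an OAuth 2.0 service-account token, which is omitted here.

```python
import json

# Publish endpoint of the Google Indexing API (v3).
INDEXING_ENDPOINT = "https://indexing.googleapis.com/v3/urlNotifications:publish"

# Notification types accepted by the publish endpoint.
ALLOWED_TYPES = {"URL_UPDATED", "URL_DELETED"}


def build_notification(url: str, notification_type: str = "URL_UPDATED") -> str:
    """Return the JSON body for one URL notification (hypothetical helper)."""
    if notification_type not in ALLOWED_TYPES:
        raise ValueError(f"unsupported notification type: {notification_type}")
    return json.dumps({"url": url, "type": notification_type})


# Placeholder URL; nothing is sent over the network in this sketch.
body = build_notification("https://example.com/jobs/123")
print(INDEXING_ENDPOINT)
print(body)
```

Sending this body is a separate step. Even a successful notification is only a request to crawl, not a promise of indexing.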

Canonicalized or duplicate pages don't strictly follow this rule either. Google may crawl them quickly but choose not to index them due to duplication. Crawling ≠ indexing.

Practical impact and recommendations

What should you do concretely to accelerate indexation?

First priority: generate popularity signals as soon as you publish. This means quality editorial backlinks, targeted social sharing, mentions in high-circulation newsletters. Don't expect Google to magically discover your content.

Next, maximize internal discoverability. An orphaned page with no internal links is invisible. Integrate your new content into your existing structure, on your homepage if relevant, in thematic hubs, in related articles.
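One way to spot orphaned pages is to walk the internal link graph from the homepage and list every known URL that is never reached. The sketch below assumes you already have a mapping of each page to the internal links it contains (for example from a crawler export). The `find_orphans` function and the sample site are hypothetical.

```python
from collections import deque


def find_orphans(links: dict[str, list[str]], start: str) -> set[str]:
    """Return pages that cannot be reached from `start` via internal links."""
    seen = {start}
    queue = deque([start])
    # Breadth-first traversal of the internal link graph.
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in seen:
                seen.add(target)
                queue.append(target)
    # Every page we know about, whether as a link source or a link target.
    all_pages = set(links) | {t for targets in links.values() for t in targets}
    return all_pages - seen


# Hypothetical site: "/new-guide" exists but no other page links to it.
site_links = {
    "/": ["/blog", "/products"],
    "/blog": ["/blog/post-1"],
    "/products": [],
    "/blog/post-1": ["/"],
    "/new-guide": ["/blog"],
}
print(find_orphans(site_links, "/"))  # {'/new-guide'}
```

Any URL returned here needs at least one link from a page that is itself reachable from the homepage.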

Finally, leverage official tools: updated XML sitemap, Google Search Console to verify indexation, Indexing API if your site is eligible. These levers don't guarantee anything, but they improve your odds.
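A minimal sketch of the sitemap lever, assuming you maintain a list of URLs with their last modification dates. The `build_sitemap` helper and the example.com URLs are illustrative. The namespace is the one defined by the sitemaps.org protocol.

```python
import xml.etree.ElementTree as ET

# Namespace required by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(entries: list[tuple[str, str]]) -> str:
    """Build a sitemap from (url, lastmod in YYYY-MM-DD format) pairs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in entries:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        ET.SubElement(url, "lastmod").text = lastmod
    xml_body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + xml_body


# Placeholder URLs and dates, for illustration only.
sitemap_xml = build_sitemap([
    ("https://example.com/", "2023-06-22"),
    ("https://example.com/new-guide", "2023-06-22"),
])
print(sitemap_xml)
```

Keeping `lastmod` accurate matters more than regenerating the file often: the value is only useful as a hint if it reflects real content changes.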

What mistakes should you avoid at all costs?

Don't publish isolated content without a promotion plan. If you have no way to generate external signals, postpone publication or merge the content with a stronger existing page.

Stop spamming the "Request indexing" button in Search Console for every new page. It changes nothing if your content has no perceived interest. Google puts the URL in a queue and processes it when it decides to.

How do you verify your strategy is working?

Track the average indexation delay of your new pages. If you notice progressive improvement (from 7 days to 2 days, for example), it means your authority and external signals are strengthening.
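The delay can be computed from two dates per page: when it was published and when you first saw it indexed (for instance via the URL Inspection tool). The sketch below compares two periods. All dates are made up, and `average_delay_days` is a hypothetical helper.

```python
from datetime import date
from statistics import mean


def average_delay_days(pages):
    """Mean number of days between publication and first observed indexing."""
    return mean((indexed - published).days for published, indexed in pages)


# Hypothetical (published, first seen indexed) pairs for two periods.
previous_period = [
    (date(2023, 5, 1), date(2023, 5, 8)),
    (date(2023, 5, 10), date(2023, 5, 16)),
    (date(2023, 5, 20), date(2023, 5, 28)),
]
current_period = [
    (date(2023, 6, 1), date(2023, 6, 3)),
    (date(2023, 6, 10), date(2023, 6, 13)),
    (date(2023, 6, 15), date(2023, 6, 16)),
]

before = average_delay_days(previous_period)
after = average_delay_days(current_period)
print(f"average delay: {before} days -> {after} days")
```

With these sample dates the average drops from 7 days to 2 days, the kind of trend described above. Pages that were never indexed have no second date and need to be tracked separately.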

Analyze pages that never got indexed. If they represent more than 10% of your content, you probably have a perceived quality or crawl budget problem. Audit, consolidate, eliminate what's superfluous.
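The 10% check is simple arithmetic once you have an indexed / not indexed flag per URL. The sketch below assumes such a mapping exists (for example from a Search Console export). The 10% threshold is this article's rule of thumb rather than an official Google figure, and the URLs are placeholders.

```python
THRESHOLD = 0.10  # the article's rule of thumb, not a Google-published limit


def non_indexed_share(statuses: dict[str, bool]) -> float:
    """Fraction of URLs flagged as not indexed (value False)."""
    if not statuses:
        return 0.0
    missing = sum(1 for indexed in statuses.values() if not indexed)
    return missing / len(statuses)


# Hypothetical status per URL: True means indexed.
statuses = {
    "/": True,
    "/blog": True,
    "/blog/post-1": True,
    "/blog/post-2": False,
    "/products": True,
    "/products/a": True,
    "/products/b": False,
    "/about": True,
}

share = non_indexed_share(statuses)
to_audit = sorted(url for url, indexed in statuses.items() if not indexed)
print(f"{share:.0%} not indexed, audit needed: {share > THRESHOLD}")
print(to_audit)
```

Here 2 of 8 URLs are missing, so the share is 25% and the list of pages to audit or consolidate is `/blog/post-2` and `/products/b`.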

- Publish with an external promotion strategy (backlinks, shares, mentions)

- Integrate each new page into the internal linking structure on day one

- Submit an XML sitemap and verify that Search Console reads it properly

- Monitor average indexation delay over 30 days to detect trends

- Review pages reported as "Discovered - currently not indexed" every month and address them

- Prioritize quality over quantity: 10 strong pages beat 100 weak ones

❓ Frequently Asked Questions

Does submitting a page via Search Console guarantee its indexing?

How long should you wait before worrying that a page is not getting indexed?

Do small sites have a chance of getting their content indexed quickly?

Why are some pages crawled but never indexed?

Does the Indexing API work better than the XML sitemap?

🎥 From the same video 17

Other SEO insights extracted from this same Google Search Central video · published on 22/06/2023
