Official statement

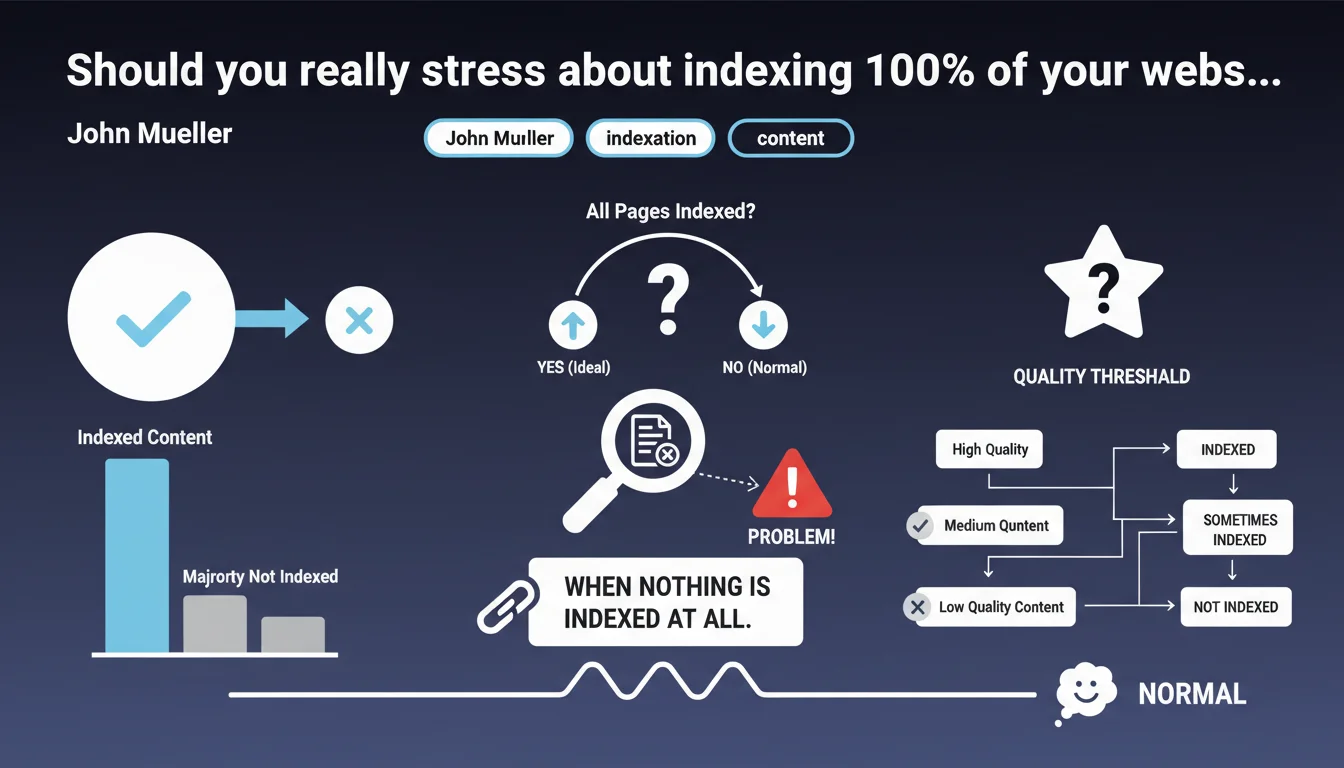

Google confirms it's perfectly normal for a significant portion of a site's pages not to be indexed. The only real problem: when nothing gets indexed at all. The underlying message? Not all your pages deserve to be in the index — and Google is the one making that call.

What you need to understand

Why doesn't Google want to index every page on a website?

Google manages hundreds of billions of web pages. Every indexed URL consumes resources: storage, processing of quality signals, regular updates. Google's systems therefore select strictly, based on perceived content value.

Here's the part that stings: a page that's technically accessible and crawlable can be deliberately excluded from the index if Google determines it adds nothing meaningful for users. It's not a penalty, just a resource decision on Google's end.

What determines whether a page has "sufficient quality"?

Google deliberately keeps this vague. We know several quality signals come into play: content uniqueness, depth of information, user engagement signals, and the domain's topical authority.

But let's be honest: Google doesn't provide a numbered evaluation grid. This statement confirms the existence of an implicit quality threshold, without revealing its exact boundaries. Frustrating? Yes. Surprising? No.

When should you actually start worrying?

According to Mueller, the only real alarm signal is when no pages are indexed at all. Between "normal partial indexation" and "structural problem," the line remains blurry.

A site with 30% of pages indexed can be in perfect health if the remaining 70% are secondary URLs (product filters, pagination pages, parameter variants). Conversely, a site indexing only 30% of its strategic content has a problem — even if technically "it's normal."

- Partial indexation is the norm for most medium and large websites

- Google applies a quality filter whose exact criteria aren't public

- The issue isn't the raw indexation rate, but which pages are excluded

- Only total absence of indexation constitutes a clear warning sign

SEO Expert opinion

Is this statement consistent with real-world observations?

Absolutely. For years, we've observed that sites generate massive amounts of crawled but non-indexed URLs in Search Console. This statement formalizes what we're seeing: Google indexes selectively, not exhaustively.

The problem is defining "sufficient quality." On e-commerce sites with thousands of products, you regularly see perfectly optimized product pages with unique content that remain permanently excluded from the index without apparent reason. [To verify]: Google claims it's "normal," but when strategic pages are ignored despite serious editorial work, it's hard not to see this as opaque priority management.

What nuances should be applied to this general rule?

Mueller's statement sets a framework, but says nothing about site types. A news outlet with only 80% of its articles indexed? That's concerning, because every article is meant to be found in search. A corporate site with 30% of pages indexed, where the rest are FAQs, legal notices, and internal pages? That might be perfectly healthy.

And that's where it breaks down: Google provides no industry benchmarks. You have 40% indexation? Is that normal for your niche or a sign of quality issues? No public data to settle it. We're flying blind.

When doesn't this rule apply?

If you notice your high-value pages — pillar content, strategic landing pages, flagship product pages — aren't indexed, that's definitely NOT normal. Mueller's statement shouldn't become a smokescreen.

Another problematic scenario: sites whose indexation rate drops sharply without technical or editorial changes. A drop from 60% to 30% in a few weeks isn't "just normal fluctuation." It's a signal that warrants investigation, whatever this general statement says.
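A drop like this is easier to catch when the indexation rate is tracked over time. The following is a minimal sketch, assuming you record the indexed and total URL counts yourself at regular intervals; the `sharp_drops` function, the snapshot labels, and the 15-point threshold are hypothetical choices for illustration, not values Google publishes.

```python
def indexation_rate(indexed, total):
    """Share of known URLs that are indexed (0.0 when there are no URLs)."""
    return indexed / total if total else 0.0


def sharp_drops(snapshots, min_drop=0.15):
    """Flag consecutive snapshots where the indexation rate falls sharply.

    snapshots: list of (label, indexed_count, total_count), oldest first.
    min_drop:  absolute drop in rate that triggers an alert (assumed value).
    Returns a list of (previous_label, label, previous_rate, rate).
    """
    alerts = []
    for (prev_label, prev_idx, prev_total), (label, idx, total) in zip(
        snapshots, snapshots[1:]
    ):
        prev_rate = indexation_rate(prev_idx, prev_total)
        rate = indexation_rate(idx, total)
        if prev_rate - rate >= min_drop:
            alerts.append((prev_label, label, prev_rate, rate))
    return alerts


if __name__ == "__main__":
    # Made-up counts mirroring the 60% to 30% scenario described above.
    history = [
        ("week 1", 600, 1000),
        ("week 2", 590, 1000),
        ("week 3", 300, 1000),
    ]
    for prev_label, label, prev_rate, rate in sharp_drops(history):
        print(f"{prev_label} -> {label}: {prev_rate:.0%} -> {rate:.0%}")
```

Small week-to-week movements stay below the threshold and are ignored; only the steep fall is reported for investigation.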

Practical impact and recommendations

How do you identify whether your indexation rate is healthy or problematic?

First step: segment your URLs by type (editorial content, products, categories, technical pages). Look at indexation rate per segment. If your strategic content is massively excluded, you have a problem.
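The segmentation step can be sketched in a few lines of Python. This is an illustrative example only: it assumes you already have a list of URLs with a known indexed/not-indexed flag (for example from a Search Console export), and the path prefixes in `SEGMENT_RULES` are hypothetical and need to be adapted to your own URL structure.

```python
from collections import defaultdict
from urllib.parse import urlparse

# Hypothetical path prefixes; adapt them to your own URL structure.
SEGMENT_RULES = [
    ("/blog/", "editorial"),
    ("/product/", "product"),
    ("/category/", "category"),
]


def segment_of(url):
    """Return the segment name for a URL, or 'other' if no rule matches."""
    path = urlparse(url).path
    for prefix, name in SEGMENT_RULES:
        if path.startswith(prefix):
            return name
    return "other"


def indexation_by_segment(pages):
    """Compute the indexation rate per segment.

    pages: iterable of (url, is_indexed) pairs.
    Returns {segment: (indexed_count, total_count, rate)}.
    """
    counts = defaultdict(lambda: [0, 0])  # segment -> [indexed, total]
    for url, is_indexed in pages:
        bucket = counts[segment_of(url)]
        bucket[0] += int(is_indexed)
        bucket[1] += 1
    return {seg: (idx, total, idx / total) for seg, (idx, total) in counts.items()}


if __name__ == "__main__":
    # Made-up sample data for illustration.
    sample = [
        ("https://example.com/blog/guide", True),
        ("https://example.com/blog/news", False),
        ("https://example.com/product/42", True),
        ("https://example.com/?color=red", False),
    ]
    for seg, (idx, total, rate) in sorted(indexation_by_segment(sample).items()):
        print(f"{seg:10s} {idx}/{total} indexed ({rate:.0%})")
```

A low rate in the "other" bucket (filters, parameters) is usually harmless; a low rate in the editorial or product bucket is the one to investigate.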

Next, analyze exclusion patterns in Search Console: "Crawled, currently not indexed," "Discovered, currently not indexed," "Alternate page with appropriate canonical tag." Each status has a different meaning and calls for a specific corrective action.
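To see which exclusion status dominates, a simple tally is often enough. The sketch below assumes you have the non-indexed URLs as `(url, status)` pairs, with the status labels written as in this article; how you obtain those pairs (manual export, spreadsheet) is left open, and the `exclusion_breakdown` function name is made up for this example.

```python
from collections import Counter


def exclusion_breakdown(rows):
    """Count non-indexed URLs per exclusion status.

    rows: iterable of (url, status) pairs for URLs that are not indexed.
    Returns a list of (status, count, share), most frequent status first.
    """
    counts = Counter(status for _url, status in rows)
    total = sum(counts.values())
    return [(status, n, n / total) for status, n in counts.most_common()]


if __name__ == "__main__":
    # Made-up sample rows for illustration.
    rows = [
        ("https://example.com/product/1", "Crawled, currently not indexed"),
        ("https://example.com/product/2", "Crawled, currently not indexed"),
        ("https://example.com/product/3", "Discovered, currently not indexed"),
        ("https://example.com/p?sort=asc", "Alternate page with appropriate canonical tag"),
    ]
    for status, n, share in exclusion_breakdown(rows):
        print(f"{n:4d}  {share:5.0%}  {status}")
```

Combining this tally with the URL segments lets you check whether a given status is concentrated on strategic pages or on secondary URLs.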

What concrete actions can improve indexation of strategic pages?

If Google thinks your pages lack quality, several levers are available:

- Enrich unique editorial content on currently excluded pages (goal: at least 500 words for strategic content)

- Strengthen internal linking to pages you want indexed — a strong priority signal for Google

- Eliminate redundant or low-value content polluting your index (unnecessary filters, parameter pages, pointless variants)

- Verify your strategic pages receive consistent crawl budget: are they accessible within 3 clicks from the homepage?

- Analyze engagement signals: if your pages have 90% bounce rate and 10-second average time on page, Google draws conclusions from that

- Use the "URL Inspection" feature then "Request indexing" for priority pages — without overdoing it
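The "within 3 clicks from the homepage" check in the list above can be approximated with a breadth-first search over your internal link graph. This is a minimal sketch, assuming you already have the graph as a dictionary mapping each page to the pages it links to (for example from a crawler export); the function names and sample paths are hypothetical.

```python
from collections import deque


def click_depths(links, home):
    """Breadth-first search from the homepage.

    links: dict mapping a page to the list of pages it links to.
    Returns {page: minimum number of clicks from home} for reachable pages.
    """
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, ()):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


def too_deep(links, home, strategic, max_clicks=3):
    """Return strategic pages deeper than max_clicks or unreachable from home."""
    depths = click_depths(links, home)
    return sorted(p for p in strategic if depths.get(p, float("inf")) > max_clicks)


if __name__ == "__main__":
    # Made-up internal link graph for illustration.
    links = {
        "/": ["/category/shoes"],
        "/category/shoes": ["/category/shoes/page-2"],
        "/category/shoes/page-2": ["/category/shoes/page-3"],
        "/category/shoes/page-3": ["/product/rare-boot"],
    }
    print(too_deep(links, "/", ["/product/rare-boot", "/product/orphan"]))
```

Pages returned by `too_deep` are candidates for stronger internal linking: either they sit too far down the click path or no internal link reaches them at all.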

What mistakes should you avoid when facing partial indexation?

Don't fall into the trap of forcing massive indexation via bloated sitemaps or repeated indexing requests. Google sees this as a spam or over-optimization signal.

Another common mistake: believing "all pages must be indexed." Wrong. Some URLs (filters, pagination, deprecated AMP mobile variants) have no business cluttering your index. Prioritize.

❓ Frequently Asked Questions

What is a normal indexation rate for a website?

How can you find out why Google isn't indexing certain pages?

Can you force Google to index a page?

Should you delete non-indexed pages?

Does partial indexation hurt the site's overall SEO?

🎥 From the same video 17

Other SEO insights extracted from this same Google Search Central video · published on 22/06/2023
