Official statement

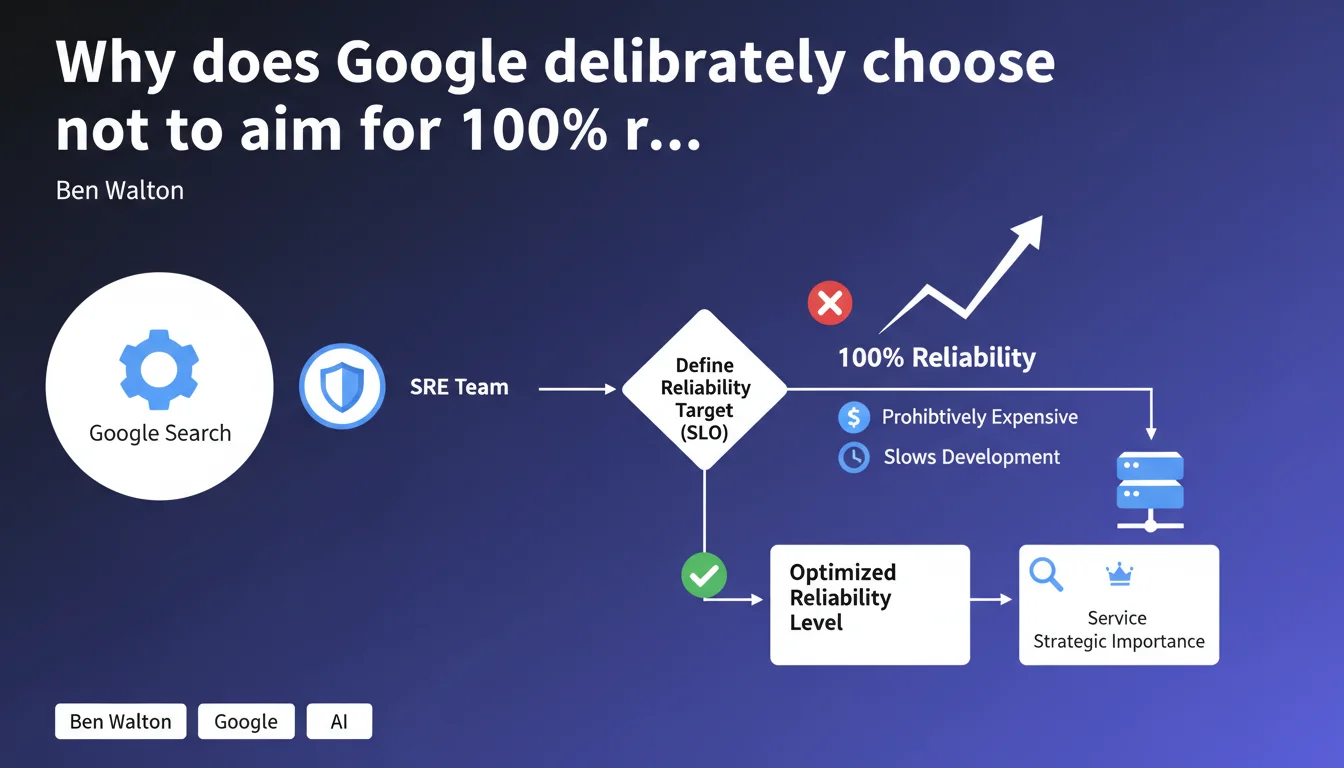

Google deliberately assumes a reliability level below 100% for Search. The SRE team sets reliability objectives (SLOs) based on a cost/benefit tradeoff, considering that maximum reliability would slow development too much and cost far more than what users actually need.

What you need to understand

What does "not aiming for 100% reliability" actually mean in practice?

Google uses Service Level Objectives (SLOs): reliability targets that the Site Reliability Engineering (SRE) team defines for each product. For Search, this means a certain rate of outages, bugs, or incidents is tolerated by design.

Pursuing absolute reliability would require massive investments in redundant infrastructure, extended testing cycles, and drastically reduced deployment velocity. Google prefers to iterate quickly, even if this means occasional micro-incidents.

How does Google determine what level of reliability is acceptable?

The tradeoff is based on three criteria: actual user needs, the strategic importance of the service, and the marginal cost of each additional increment of reliability (for example, going from 99.9% to 99.99%).

An SLO of 99.9% allows roughly 8 hours 46 minutes of downtime per year (0.1% of 8,760 hours), which is acceptable for Search. Moving to 99.99% would reduce that to about 53 minutes, but might require 10 times more resources for a minimal gain in overall user experience.
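The arithmetic behind these figures can be reproduced with a short calculation. This is a minimal sketch, assuming the SLO is a simple availability percentage measured over a 365-day year; the function name `downtime_budget_minutes` is made up for illustration, and Google has not published how it actually measures Search SLOs.

```python
HOURS_PER_YEAR = 365 * 24  # 8,760 hours, ignoring leap years


def downtime_budget_minutes(slo_percent: float) -> float:
    """Return the yearly downtime (in minutes) that an availability SLO permits."""
    if not 0 <= slo_percent <= 100:
        raise ValueError("SLO must be a percentage between 0 and 100")
    unavailable_fraction = 1 - slo_percent / 100
    return unavailable_fraction * HOURS_PER_YEAR * 60


# Print the yearly budget for the two SLO levels discussed above.
for slo in (99.9, 99.99):
    minutes = downtime_budget_minutes(slo)
    hours, rest = divmod(round(minutes), 60)
    print(f"{slo}% -> {hours} h {rest} min per year")
```

Each extra "nine" divides the tolerated downtime by ten, which is why the cost of the next increment tends to grow much faster than the benefit users perceive.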

What are the implications for your SEO work day-to-day?

This policy explains why you observe unexplained fluctuations, temporary indexing bugs, or discrepancies between Search Console reports and what your own data shows. These aren't necessarily critical failures: they're normal operation within the tolerated margin of error.

- Google accepts a planned error rate on Search rather than guaranteeing absolute reliability

- SLOs vary based on the strategic importance of each Search feature

- This approach prioritizes rapid product evolution over technical perfection

- Minor incidents are part of normal operation, not critical exceptions

SEO Expert opinion

Is this statement consistent with what we observe in the field?

Absolutely. SEO professionals regularly encounter unexplained anomalies: positions fluctuating for no apparent reason, pages indexed then deindexed within 24 hours, Search Console data inconsistent with server logs.

What Google is formalizing here is that these bugs are part of the implicit contract. The tricky part is that there's no transparency about Search's SLOs. We don't know what error rate Google considers "acceptable," or which features have stricter SLOs.

What are the gray areas in this statement?

[To verify] The notion of "user needs" remains vague. Does Google determine these needs through qualitative studies, quantitative metrics, or simply internal assumptions? No public data on this.

Another opaque point: how are SLOs distributed across different Search components? Does indexing have the same reliability target as ranking? What about featured snippets? [To verify] Probably not, but Google provides no figures.

In what cases does this logic not apply?

Manual actions and critical actions (deindexing for spam, copyright violations, etc.) likely have much stricter SLOs. Google can't afford to ban legitimate sites by mistake "in 0.1% of cases."

Similarly, sensitive features — medical results, financial content, YMYL in general — should theoretically have higher reliability requirements. But again, no public data on this.

Practical impact and recommendations

What should you do concretely in light of this reality?

Accept that your site will experience unexplained micro-incidents. A page disappearing from the index for 48 hours then returning? It might just be a bug within Google's tolerated margin, not necessarily a penalty.

On the monitoring side, multiplying sources of truth becomes essential. Don't rely solely on Search Console (GSC): cross-reference with your server logs, third-party tools (Screaming Frog, OnCrawl, Botify), and your analytics. If Search Console says "0 pages indexed" but your logs show Googlebot crawling normally and your organic traffic is unchanged, it's probably a GSC display bug.
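One way to get an independent signal from your server logs is to count Googlebot requests per day and compare that trend with what Search Console reports. The sketch below is illustrative only: it assumes access logs in the common "combined" format, the function name `googlebot_hits_per_day` is hypothetical, and the sample lines are made up. It also matches on the user-agent string alone, which can be spoofed, so treat the counts as indicative unless you additionally verify the requesting IPs.

```python
import re
from collections import Counter
from datetime import datetime

# Captures the bracketed timestamp and the last quoted field (the user agent)
# of a combined-log-format line. The log format is an assumption.
LOG_LINE = re.compile(r'\[(?P<ts>[^\]]+)\].*"(?P<agent>[^"]*)"\s*$')


def googlebot_hits_per_day(lines):
    """Count requests per calendar day whose user agent mentions Googlebot."""
    hits = Counter()
    for line in lines:
        match = LOG_LINE.search(line)
        if match is None or "Googlebot" not in match.group("agent"):
            continue
        # Example timestamp: 10/Mar/2024:06:25:14 +0000
        day = datetime.strptime(match.group("ts"), "%d/%b/%Y:%H:%M:%S %z").date()
        hits[day.isoformat()] += 1
    return hits


# Made-up sample lines for illustration; in practice, pass an open log file.
sample = [
    '66.249.66.1 - - [10/Mar/2024:06:25:14 +0000] "GET /page-a HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '203.0.113.7 - - [10/Mar/2024:06:26:02 +0000] "GET /page-a HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (Windows NT 10.0)"',
    '66.249.66.1 - - [11/Mar/2024:09:01:40 +0000] "GET /page-b HTTP/1.1" 200 2048 "-" "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
]
print(dict(googlebot_hits_per_day(sample)))
```

If the daily crawl counts stay stable while a Search Console report suddenly drops to zero, that points toward a reporting issue rather than a real change in how Google treats the site.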

What mistakes should you avoid in this context?

Don't panic at the first bug. Before redesigning your site because of an anomaly in GSC, wait at least 72 hours to see if it resolves itself, which it often does.

Also avoid over-optimizing to compensate for perceived bugs. If your rankings fluctuate by ±3 positions daily for no apparent reason, it's probably not a signal that your content is insufficient — it's just noise in the system.
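To make the "noise versus signal" distinction concrete, daily positions can be compared against a baseline. This is a rough sketch, not a statistical test: it assumes the median of the observed period is a fair baseline, reuses the ±3 figure from the paragraph above as the noise band, and uses invented sample data. The name `outside_noise_band` is hypothetical.

```python
from statistics import median

NOISE_BAND = 3  # positions; taken from the ±3 figure mentioned above


def outside_noise_band(daily_positions, band=NOISE_BAND):
    """Return (day_index, position) pairs deviating from the median by more than `band`."""
    baseline = median(daily_positions)
    return [
        (index, position)
        for index, position in enumerate(daily_positions)
        if abs(position - baseline) > band
    ]


# Made-up daily ranks for a single keyword over one week.
positions = [7, 9, 6, 8, 10, 7, 18]
print(outside_noise_band(positions))
```

Only the days flagged by such a check deserve a closer look, and even then a single outlier is weaker evidence than a deviation that persists.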

- Implement multi-source monitoring to distinguish real issues from temporary bugs

- Systematically document anomalies with timestamps and screenshots

- Define reasonable alert thresholds (e.g., alert only if an anomaly persists 72+ hours)

- Never modify your site in immediate response to a Search Console bug without external confirmation

- Archive your server logs for at least 6 months in case you need to escalate a dispute
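The persistence rule from the list above (alert only if an anomaly lasts 72+ hours) could be sketched as follows. The data structure is an assumption: each observation is a `(timestamp, is_anomalous)` pair produced by whatever monitoring you already run, and `should_alert` is a hypothetical helper, not part of any tool named in this article.

```python
from datetime import datetime, timedelta

PERSISTENCE_THRESHOLD = timedelta(hours=72)  # threshold suggested in the list above


def should_alert(observations, now, threshold=PERSISTENCE_THRESHOLD):
    """Return True if the anomaly has been continuously present for `threshold`.

    `observations` is a list of (timestamp, is_anomalous) tuples sorted by time.
    """
    anomaly_start = None
    for timestamp, is_anomalous in observations:
        if is_anomalous:
            if anomaly_start is None:
                anomaly_start = timestamp  # first sighting of the current anomaly
        else:
            anomaly_start = None  # anomaly cleared; reset the clock
    return anomaly_start is not None and now - anomaly_start >= threshold


# Made-up example: the anomaly is seen once a day for four consecutive days.
start = datetime(2024, 3, 10, 8, 0)
observations = [(start + timedelta(hours=24 * day), True) for day in range(4)]
print(should_alert(observations, now=start + timedelta(hours=72)))
```

Resetting the clock whenever a check comes back normal means a bug that disappears and reappears is treated as a new anomaly, which keeps short-lived glitches from triggering alerts.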

❓ Frequently Asked Questions

What is the exact SLO for Google Search?

Are the indexing bugs I'm seeing normal or critical?

Can Google invoke this SLO to ignore my bug reports?

Are manual penalties also subject to this error rate?

How can I tell whether a ranking fluctuation is a bug or a genuine algorithm change?

🎥 From the same video 8

Other SEO insights extracted from this same Google Search Central video · published on 03/10/2024
