Official statement

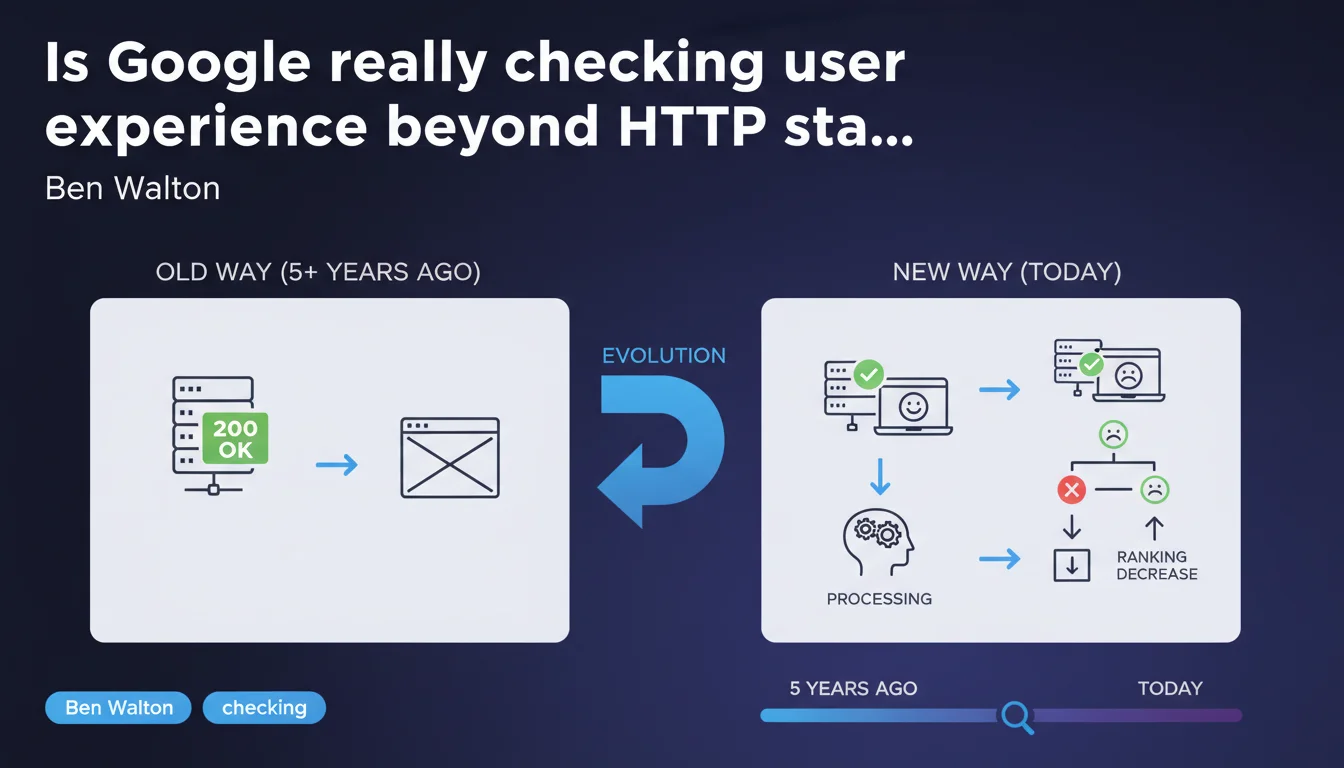

Google no longer simply verifies that your server returns a 200 code. The SRE team now monitors whether what is actually delivered to the user works correctly — a major shift that challenges traditional SEO monitoring practices. Bottom line: a clean HTTP status is no longer enough.

What you need to understand

How does this differ from traditional monitoring practices?

Historically, SEOs primarily monitored HTTP status codes: a 200 meant the page was accessible, a 404 that it was not found, a 503 that the server was temporarily unavailable. Google also reported mainly on these signals in Search Console.

But reality has always been more complex. A page can return a perfect 200 while displaying broken content, crashing JavaScript, blocked critical resources, or a completely misaligned layout. The user sees an unusable page, and that is precisely what Google now monitors systematically.
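To make the "200 but broken" case concrete, here is a minimal sketch of a check that looks past the status code. It is only an illustration and not Google's method: the function names, the `ERROR_MARKERS` phrases, and the 200-character threshold are all assumptions to be tuned per site.

```python
from html.parser import HTMLParser

# Hypothetical phrases that often signal a client-side failure; tune per site.
ERROR_MARKERS = ("something went wrong", "application error", "enable javascript")


class _TextExtractor(HTMLParser):
    """Collects visible text, skipping <script>, <style>, and <noscript> content."""

    SKIPPED = {"script", "style", "noscript"}

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIPPED and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        if self._skip_depth == 0 and data.strip():
            self.chunks.append(data.strip())


def visible_text(html):
    """Return the text a user could read, joined into one string."""
    parser = _TextExtractor()
    parser.feed(html)
    parser.close()
    return " ".join(parser.chunks)


def classify_page(status, html, min_chars=200):
    """Classify a response as 'http_error', 'soft_error', 'thin_or_empty', or 'ok'."""
    if status != 200:
        return "http_error"
    text = visible_text(html)
    lowered = text.lower()
    if any(marker in lowered for marker in ERROR_MARKERS):
        return "soft_error"  # clean status, but the page displays an error message
    if len(text) < min_chars:
        return "thin_or_empty"  # clean status, but almost nothing to read
    return "ok"
```

Fed with the HTML obtained after JavaScript execution, a check like this flags an empty application shell or a client-side error message that a status-only monitor would report as healthy.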

How can Google technically verify that the experience works?

The statement mentions the SRE (Site Reliability Engineering) team, which indicates an advanced systems engineering approach. Concretely, this means Google doesn't limit itself to simple HTTP crawling.

Google's systems likely evaluate full page rendering, JavaScript execution, availability of critical resources, and potentially signals like Core Web Vitals collected via Chrome. The statement does not list these checks, so treat them as informed assumptions. Taken together, they amount to multi-level monitoring that goes far beyond simply pinging the server.

Is this approach new or just officially disclosed?

The statement specifies that this evolution has developed over the past 5 years, so since around 2019-2020. It is not a breaking announcement but the formalization of a practice already in place.

Many SEOs have long observed that pages with clean HTTP status can underperform if the actual experience is degraded. What Google is doing here is officially confirming what the field already suggested: the engine goes well beyond basic server signals.

- Google no longer limits itself to traditional HTTP codes to assess page availability

- The SRE team monitors the complete product experience: rendering, JavaScript, critical resources

- This approach has developed gradually over 5 years; it is not sudden news

- A clean 200 code no longer guarantees that Google considers your page truly functional

- Classic SEO monitoring based solely on HTTP statuses is now insufficient

SEO Expert opinion

Is this statement consistent with field observations?

Let's be honest: yes, completely. For years, we've observed situations where pages that are technically "accessible" according to classic tools don't perform well in Google: sites with catastrophic load times, JavaScript errors blocking the main content, or critical resources returning a 404, all while serving a nice 200 to the crawler.

What's changing is that Google is formally publicizing what its algorithms were already doing implicitly. The SRE team mentioned in the statement suggests an advanced engineering approach — but remains vague on the precise criteria used to qualify an experience as "working correctly."

What grey areas remain in this statement?

The statement is deliberately vague on technical details. Google says it monitors "whether what is delivered to the user works correctly," but doesn't precisely define what "works correctly" means. [To be verified] What thresholds? What specific signals? What tolerance for partial errors?

We can assume that Core Web Vitals, critical JavaScript errors, blocking resources, and full rendering are in scope — but Google doesn't state it explicitly here. The lack of transparency on exact metrics complicates compliance.

Another point: Google talks about its internal SRE team, but does it apply exactly the same logic to crawling external sites? [To be verified] Probably yes, but nothing in this statement formally confirms it.

In what cases could this advanced monitoring cause problems?

For sites with conditional experiences (content that varies by geolocation, authentication, or device), this monitoring raises questions. If Google crawls from certain network points or with certain configurations, it may perceive a degraded experience that doesn't affect all real users.

Sites with complex progressive enhancements — where part of the experience depends on non-critical JavaScript — must ensure that Google doesn't consider these optional enhancements as "malfunctions." The risk: a page being penalized for a JS error that only affects a secondary module.

Practical impact and recommendations

What should you audit as a priority on your site?

First reflex: don't settle for tools that only check HTTP codes. You must now audit the real experience as Google perceives it — that is, full rendering with JavaScript executed.

Use tools like Google Search Console (the "Core Web Vitals" report), PageSpeed Insights, and monitoring solutions that test full rendering, not just server response time. Look at Core Web Vitals, critical JavaScript errors, and resources that are blocked by robots.txt or return a 404.

Also verify that your main content is visible when the page is crawled with JavaScript enabled. The "URL Inspection" tool in Search Console shows the version rendered by Google. Compare it with what your users actually see, and fix any discrepancies.
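As a rough illustration of what "looking at Core Web Vitals" can mean in a script, the sketch below rates measurements against the thresholds Google publishes for LCP, INP, and CLS. The function names and the input format (a dict of metric values per URL, for example 75th-percentile field data exported from your own tooling) are assumptions.

```python
# Published Core Web Vitals thresholds: (upper bound for "good", upper bound for
# "needs improvement"). LCP and INP are in milliseconds; CLS is unitless.
THRESHOLDS = {
    "LCP": (2500, 4000),
    "INP": (200, 500),
    "CLS": (0.1, 0.25),
}


def rate(metric, value):
    """Return 'good', 'needs improvement', or 'poor' for one metric value."""
    good, needs_improvement = THRESHOLDS[metric]
    if value <= good:
        return "good"
    if value <= needs_improvement:
        return "needs improvement"
    return "poor"


def degraded_pages(measurements):
    """Map each URL to its metrics that are not rated 'good'.

    `measurements` is a hypothetical structure: {url: {"LCP": ..., "INP": ..., "CLS": ...}}.
    """
    report = {}
    for url, metrics in measurements.items():
        issues = {name: rate(name, value) for name, value in metrics.items()
                  if rate(name, value) != "good"}
        if issues:
            report[url] = issues
    return report
```

A page only appears in the report when at least one metric falls outside the "good" range, which keeps the output focused on what needs fixing.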

What errors should you absolutely avoid?

Never assume that a 200 code means Google considers your page functional. A page can return a clean status but display a client-side error message, empty content caused by a JS error, or a completely broken layout.

Also avoid blocking critical resources via robots.txt — CSS, JavaScript, images essential to rendering. Google needs these elements to assess whether the experience works. If a critical resource fails, even with a 200 on the HTML, the page may be considered dysfunctional.
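One way to spot blocked rendering resources is to test each CSS, JavaScript, and image URL against your robots.txt rules. The sketch below uses Python's standard `urllib.robotparser`; the function name and the example URLs are illustrative. Note that this parser applies rules in file order and does not implement Google's wildcard or longest-match handling, so treat the result as a first pass rather than an exact replica of Googlebot's behavior.

```python
from urllib.robotparser import RobotFileParser


def blocked_resources(robots_txt, resource_urls, user_agent="Googlebot"):
    """Return the resource URLs that the given robots.txt content disallows."""
    parser = RobotFileParser()
    # parse() takes the file content as a list of lines, so no network call is needed.
    parser.parse(robots_txt.splitlines())
    return [url for url in resource_urls if not parser.can_fetch(user_agent, url)]


if __name__ == "__main__":
    robots = "User-agent: *\nDisallow: /assets/js/\n"
    resources = [
        "https://example.com/assets/js/app.js",
        "https://example.com/assets/css/site.css",
    ]
    for url in blocked_resources(robots, resources):
        print("Blocked for Googlebot:", url)
```

In practice the list of resource URLs would come from a rendering crawl of the page, so that every file needed to display the main content is covered.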

Watch out for redirects and complex conditional loading. If Google perceives an inconsistent or incomplete experience during crawl, it can affect indexing — even if technically your server responds correctly.

How do you verify that your site complies with this new logic?

Implement regular monitoring that simulates Googlebot behavior with JavaScript enabled. Tools like Screaming Frog (JavaScript rendering mode), Sitebulb, or SaaS solutions like OnCrawl or Botify allow you to audit full rendering.

Systematically check for JavaScript errors in the browser console, particularly those that block the display of the main content. A JS error that prevents a critical section from rendering can now be perceived by Google as a page malfunction.

Regularly compare the raw HTML version with the version rendered after JavaScript. If essential elements (titles, main content, internal links) only appear after JS execution, make sure Google actually sees them. Use the "URL Inspection" tool in Search Console to validate.
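The raw-versus-rendered comparison can be partly automated. The following sketch assumes you already have both versions as strings (the raw server response, and the DOM serialized by a headless browser or copied from the URL Inspection tool); the helper names are hypothetical, and only the title, `<h1>` headings, and link targets are compared.

```python
from html.parser import HTMLParser


class _KeyElements(HTMLParser):
    """Collects the <title> text, <h1> texts, and link targets of a document."""

    def __init__(self):
        super().__init__()
        self._current = None
        self.title = ""
        self.h1 = []
        self.links = set()

    def handle_starttag(self, tag, attrs):
        if tag in ("title", "h1"):
            self._current = tag
            if tag == "h1":
                self.h1.append("")
        elif tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.links.add(href)

    def handle_endtag(self, tag):
        if tag == self._current:
            self._current = None

    def handle_data(self, data):
        if self._current == "title":
            self.title += data
        elif self._current == "h1":
            self.h1[-1] += data


def extract(html):
    """Return the SEO-relevant elements found in an HTML string."""
    parser = _KeyElements()
    parser.feed(html)
    parser.close()
    return {
        "title": parser.title.strip(),
        "h1": [text.strip() for text in parser.h1],
        "links": parser.links,
    }


def js_only_elements(raw_html, rendered_html):
    """List what exists only after JavaScript execution."""
    raw, rendered = extract(raw_html), extract(rendered_html)
    return {
        "title_changed": raw["title"] != rendered["title"],
        "h1_added": [h for h in rendered["h1"] if h not in raw["h1"]],
        "links_added": sorted(rendered["links"] - raw["links"]),
    }
```

Anything listed under `h1_added` or `links_added` depends entirely on JavaScript, so those are the elements worth checking first in Search Console.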

- Audit the full rendering of your pages with JavaScript enabled, not just HTTP codes

- Check Core Web Vitals and fix pages with degraded metrics

- Identify and fix critical JavaScript errors that block content display

- Ensure that essential resources (CSS, JS, images) are not blocked and do not return errors

- Compare the raw HTML version with the version rendered by Google via Search Console

- Implement continuous monitoring simulating Googlebot crawl with JS enabled

- Test conditional experiences to verify they don't create inconsistencies during crawl

This Google evolution requires a paradigm shift in SEO monitoring. Traditional tools based on HTTP statuses are no longer sufficient — you must now audit the complete user experience as Google perceives it.

This involves mastering JavaScript rendering, Core Web Vitals, critical resource management, and conditional content behavior. For many sites, this technical complexity requires specialized support — SEO agencies that master these rendering and experience challenges can help you implement a monitoring and optimization strategy suited to these new requirements.

❓ Frequently Asked Questions

So do HTTP 200 codes no longer guarantee that a page is indexed correctly?

Which tools show what Google actually perceives during crawling?

Are Core Web Vitals part of this advanced monitoring?

Should you stop monitoring classic HTTP codes?

Does this monitoring apply only to new sites or to all existing sites?

🎥 From the same video 8

Other SEO insights extracted from this same Google Search Central video · published on 03/10/2024
