Official statement

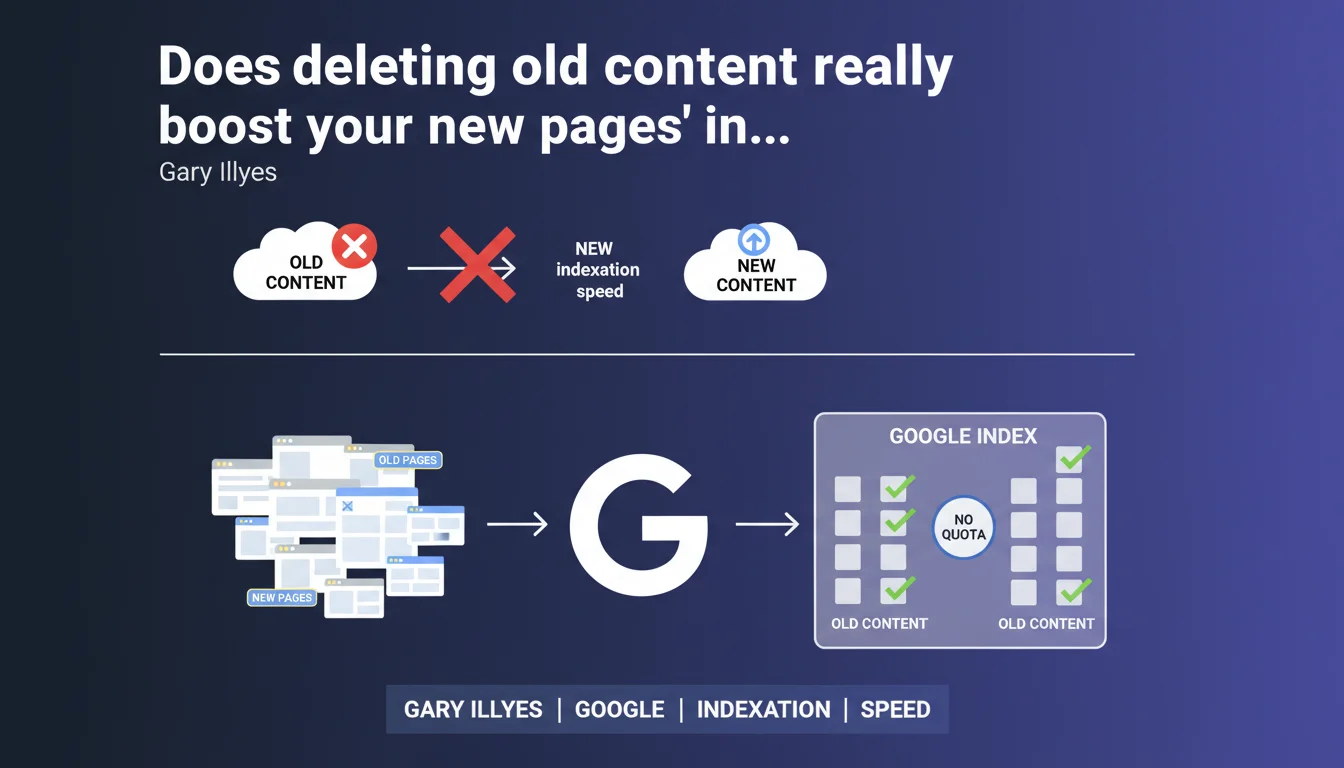

Google claims there is no indexing quota per site and that deleting old content does not improve the indexation of new pages. Old and new content can coexist without mutual interference according to the Mountain View firm.

What you need to understand

Why does this statement challenge a common SEO practice?

Many SEO professionals still believe that a site with too many old or irrelevant pages dilutes its "crawl budget" and penalizes the indexation of new URLs. This belief is based on the idea that an indexation quota exists per domain.

Gary Illyes directly dismantles this hypothesis. According to him, Google does not impose any indexation limit per site. A domain can host 100 pages or 100,000 without this negatively impacting the engine's ability to index new resources.

What is the difference between crawl budget and indexation quota?

The crawl budget corresponds to the number of pages that Googlebot will crawl on a site within a given period. It is a limit on resources allocated to crawling, not indexation.

The indexation quota, which Google formally denies here, would be a theoretical cap on the number of pages from a single domain that Google agrees to index. The two concepts are distinct but often confused in SEO discussions.

What does this statement imply for managing old content?

Practically speaking, keeping archives, obsolete product pages, or historical content would not harm the indexation of new articles or product sheets. According to Google, old and new pages coexist in the index without friction.

This does not mean that all content deserves to be kept — the question is rather one of user experience, business relevance, and editorial strategy, not pure indexation.

- No indexation quota per domain according to Google

- Crawl budget and indexation are two distinct mechanisms

- Deleting old content does not accelerate new content indexation

- The decision to keep or remove old content hinges on business and UX criteria, not technical indexation

SEO Expert opinion

Is this statement consistent with real-world observations?

In practice, some audits show that a large volume of low-quality pages can slow crawling and, indirectly, indexation. [To be verified] Note, however, that Google is speaking here of indexation in the strict sense, not of crawl prioritization or perceived site quality.

The nuance is crucial: if Googlebot spends its time crawling thousands of zombie pages, there is mechanically less time left to discover and quickly index fresh content. The indexation quota may not exist, but crawl time is finite.
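The "finite crawl time" argument can be illustrated with a deliberately simplistic model. The sketch below assumes a fixed number of Googlebot fetches per day and a fixed number of those fetches spent on zombie pages; both figures, and the function name, are hypothetical. Googlebot's real scheduling is not a fixed daily allowance, so this is an order-of-magnitude illustration only.

```python
def days_to_fetch_new_urls(daily_fetches: int, zombie_fetches: int, new_urls: int) -> int:
    """Toy model: days needed to fetch every new URL once.

    Assumes (hypothetically) that Googlebot makes `daily_fetches` requests per day
    and that `zombie_fetches` of them go to pages with no value.
    """
    useful_fetches = daily_fetches - zombie_fetches
    if useful_fetches <= 0:
        raise ValueError("no fetches left for new content in this model")
    # Ceiling division using integers only, to avoid floating-point rounding.
    return -(-new_urls // useful_fetches)


# Same site, same 500 new URLs, with and without zombie crawling.
clean_site = days_to_fetch_new_urls(daily_fetches=1000, zombie_fetches=0, new_urls=500)
cluttered_site = days_to_fetch_new_urls(daily_fetches=1000, zombie_fetches=800, new_urls=500)
print(clean_site, cluttered_site)
```

In this model, nothing is ever refused indexation; the new URLs are simply reached later, which is the distinction the paragraph above draws.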

In which cases does deleting old content remain relevant?

Removing or consolidating old content remains justified for several reasons, just not the quota argument that Google dismisses. Duplicate pages, thin content, and internal cannibalization are all situations where cleanup improves the semantic coherence of the site.

The positive effect observed after massive pruning likely does not come from a "freed quota," but from better concentration of internal link juice, reduction of semantic noise, and improved average quality as perceived by the algorithm.

What strategy should you adopt in light of this statement?

Let's be honest: Google benefits when publishers keep the maximum amount of indexable content — more pages means more potential advertising surface. This statement could aim to discourage aggressive pruning strategies.

The real question is not "can I keep everything?" but "does this content add value to my users and my overall SEO strategy?" If the answer is no, delete it — not for a hypothetical quota, but for editorial coherence.

Practical impact and recommendations

What should I concretely do with my site's old content?

Do not blindly delete old pages under the pretext of "freeing up indexation." Evaluate each piece of content based on business criteria: does it generate organic traffic? Does it serve a conversion or information objective? Does it participate in strategic internal linking?

If an old page still performs, keep it. If it is obsolete but historically important, consider a redesign or update rather than brutal deletion. Content strategy trumps pure technique.
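The evaluation criteria above can be turned into a simple triage rule. The sketch below is one possible encoding, not a standard method: the `Page` fields, the labels, and the "any organic visit in 12 months" threshold are all assumptions chosen for illustration, and the data would have to come from your own analytics and crawl exports.

```python
from dataclasses import dataclass


@dataclass
class Page:
    # All fields are hypothetical names for data you would export yourself.
    url: str
    organic_visits_12m: int        # organic sessions over the last 12 months
    serves_objective: bool         # conversion or information objective
    in_strategic_linking: bool     # part of deliberate internal linking
    historically_important: bool   # obsolete but worth preserving


def triage(page: Page) -> str:
    """Return 'keep', 'update', or 'review for removal' for an old page."""
    still_useful = (
        page.organic_visits_12m > 0
        or page.serves_objective
        or page.in_strategic_linking
    )
    if still_useful:
        return "keep"
    if page.historically_important:
        return "update"
    return "review for removal"


pages = [
    Page("/guide-2019", 120, False, False, False),
    Page("/press/2012-launch", 0, False, False, True),
    Page("/tag/misc", 0, False, False, False),
]
decisions = {p.url: triage(p) for p in pages}
print(decisions)
```

The last label is intentionally "review" rather than "delete": the decision stays editorial, which is the point of this section.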

What mistakes should you avoid after this statement?

The mistake would be interpreting this statement as permission to let editorial quality slip. A site filled with weak pages will not face indexation issues according to Google, but it will likely face ranking and user experience problems.

Another trap: confusing crawl budget with indexation. If your site suffers from slow crawl speed on new URLs, the problem may come from poor architecture, chained redirects, or excessive URL parameters, not an imaginary indexation quota.
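Two of the causes named above, chained redirects and excessive URL parameters, can be spotted offline from a crawl export. The sketch below assumes you already have a redirect map as a plain dictionary (source URL to target URL); the function names and sample URLs are hypothetical, and no network request is made.

```python
from urllib.parse import parse_qsl, urlsplit


def redirect_chain(start: str, redirects: dict[str, str], max_hops: int = 10) -> list[str]:
    """Follow `start` through the redirect map and return every URL visited."""
    chain = [start]
    seen = {start}
    current = start
    while current in redirects and len(chain) <= max_hops:
        current = redirects[current]
        chain.append(current)
        if current in seen:
            break  # redirect loop: stop instead of cycling forever
        seen.add(current)
    return chain


def count_query_params(url: str) -> int:
    """Count the query-string parameters in a URL, including empty ones."""
    return len(parse_qsl(urlsplit(url).query, keep_blank_values=True))


redirects = {"/old-page": "/interim-page", "/interim-page": "/new-page"}
chain = redirect_chain("/old-page", redirects)
hops = len(chain) - 1  # more than one hop means the redirect is chained
params = count_query_params("https://example.com/list?color=red&size=m&sort=price")
print(chain, hops, params)
```

Any chain with more than one hop is a candidate for pointing the first URL directly at the final destination.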

How can I verify that my site properly handles old and new content?

- In Search Console, compare the indexation rate of new pages with that of old pages

- Check through server logs that Googlebot properly crawls your new content within an acceptable timeframe

- Audit the average quality of your index: ratio of active pages to zombie pages (zero traffic over 12 months)

- Identify old pages that cannibalize traffic on queries where you published more recent and relevant content

- Implement a process for regularly updating evergreen content rather than a logic of systematic deletion
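Two items from this checklist, the server-log check and the zombie-page ratio, lend themselves to a short script. The sketch below assumes access logs in the common "combined" format and a dictionary of organic visits per URL over 12 months; the regular expression, function names, and sample data are illustrative assumptions. It matches Googlebot on the user-agent string only, which can be spoofed, so a real audit should also verify the requesting IP.

```python
import re
from datetime import datetime

# Assumes the "combined" access-log format; adjust the pattern to your server.
LOG_LINE = re.compile(
    r'\[(?P<ts>[^\]]+)\] "(?:GET|HEAD) (?P<path>\S+) [^"]*" \d{3} .*"(?P<ua>[^"]*)"$'
)


def first_googlebot_hits(lines):
    """Map each path to the earliest timestamp at which a Googlebot UA requested it."""
    first_seen = {}
    for line in lines:
        match = LOG_LINE.search(line.strip())
        if not match or "Googlebot" not in match["ua"]:
            continue
        ts = datetime.strptime(match["ts"], "%d/%b/%Y:%H:%M:%S %z")
        path = match["path"]
        if path not in first_seen or ts < first_seen[path]:
            first_seen[path] = ts
    return first_seen


def zombie_ratio(visits_12m):
    """Share of URLs with zero organic visits over the period (0.0 if no URLs)."""
    if not visits_12m:
        return 0.0
    zombies = sum(1 for visits in visits_12m.values() if visits == 0)
    return zombies / len(visits_12m)


sample_log = [
    '66.249.66.1 - - [22/Jun/2023:14:02:10 +0000] "GET /blog/new-post HTTP/1.1" 200 5123 "-" '
    '"Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '66.249.66.1 - - [22/Jun/2023:10:15:32 +0000] "GET /blog/new-post HTTP/1.1" 200 5123 "-" '
    '"Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '203.0.113.7 - - [22/Jun/2023:09:00:00 +0000] "GET /blog/new-post HTTP/1.1" 200 5123 "-" '
    '"Mozilla/5.0 (Windows NT 10.0; Win64; x64)"',
]
hits = first_googlebot_hits(sample_log)
ratio = zombie_ratio({"/a": 0, "/b": 5, "/c": 0, "/d": 1})
print(hits, ratio)
```

Comparing each first-hit timestamp with the page's publication date gives the crawl delay for new content, and tracking the zombie ratio over time shows whether the average quality of the site is drifting.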

❓ Frequently Asked Questions

Does deleting old pages really improve SEO?

Is there a limit to the number of pages a site can get indexed?

Are crawl budget and indexation quota the same thing?

Should I keep all my old pages online?

How can I tell whether my new pages are properly indexed?

🎥 From the same video 17

Other SEO insights extracted from this same Google Search Central video · published on 22/06/2023
