Official statement

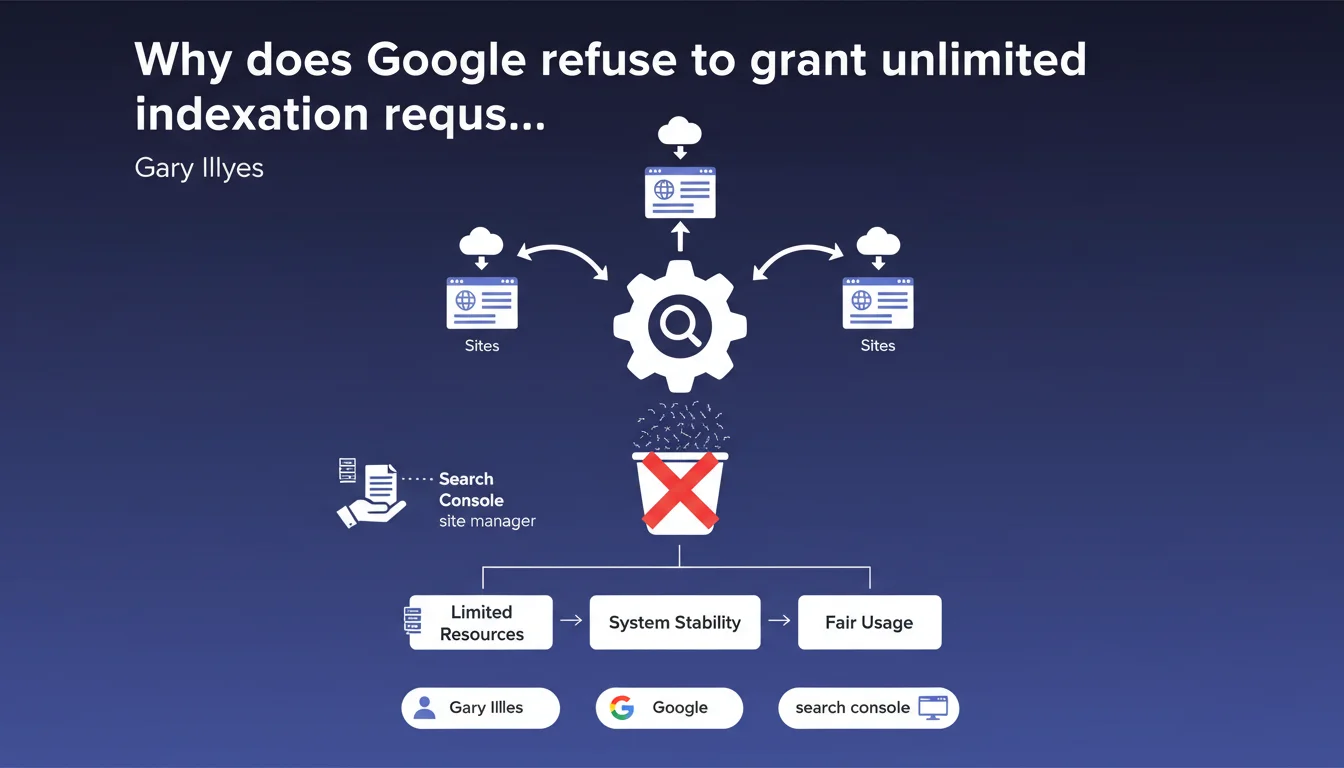

Gary Illyes confirms there is no way to obtain an unlimited quota of indexation requests in Search Console, even for owners managing multiple sites. This limit applies to everyone without exception or room for negotiation. Managers handling large volumes must work within the standard quotas imposed by Google.

What you need to understand

What is the current limit for indexation requests?

Google Search Console imposes a daily quota of indexation requests on each property. Google does not publish the figure; it appears to vary with factors such as site age, crawl frequency, and overall authority. In practice, most sites hover around 10 to 20 requests per day, sometimes fewer for new domains.

This quota resets every 24 hours. If you manage a site that adds hundreds of new pages daily (e-commerce with rotating products, a content aggregator, a classifieds portal), you'll quickly hit the ceiling.

Why does Google maintain such strict quotas?

The official answer revolves around protecting crawl resources. Google wants to prevent its infrastructure from being heavily solicited by indexation requests that bypass its natural prioritization algorithm.

Between you and me? It's also a way to force webmasters to optimize their architecture rather than compensate for structural weaknesses with manual requests. If you need to submit 200 URLs daily, it means your crawl budget or internal linking has a problem.

Are managers of multiple sites treated differently?

No. Gary Illyes is explicit: even if you manage 50 sites through an agency or SaaS platform, each property keeps its standard individual quota. No special access, no "premium" account with priority indexation.

Some publishers hoped to gain privileged status through commercial channels or partnerships. This statement ends any negotiation: the limits are technical and uniform.

- Limited daily quota: typically 10-20 requests/day depending on site authority

- No exceptions: even for multi-site managers or large platforms

- 24-hour reset: the counter resets to zero each day

- No negotiation: no commercial channel allows you to obtain more requests

- Warning signal: if you regularly max out your quota, your architecture needs an audit

SEO Expert opinion

Is this limitation technically justified?

Yes and no. Technically, Google is right to want to preserve its crawl resources and prevent the indexation request tool from becoming a magic button used excessively. Let's be honest: if this quota didn't exist, some webmasters would abuse it massively.

But, and this is where it gets sticky, Google remains extremely vague about the exact criteria that determine a site's quota. There are no public metrics and no dashboard showing where you stand; you discover the limit by hitting it. [To verify]: Gary doesn't specify whether this quota evolves automatically with site authority or remains fixed.

What concrete alternatives exist to work around this limit?

Let's be frank: you don't "work around" this limit, you optimize for it. The real question is reducing your dependence on manual requests by improving your natural discoverability.

Priority number one: internal linking. If Google discovers your new pages through internal links from sections it crawls daily, you don't need to submit them manually. Second lever: a dynamic XML sitemap, updated in real time with precise lastmod values. Third option for large volumes: reserve your manual requests for strategic pages and let the others follow the natural flow.

And this is where many fail: they use indexation requests to compensate for a structural problem instead of solving it. If your site produces 100 URLs/day and Googlebot naturally discovers only 10, it's not a quota problem, it's an architecture problem.

In what cases does this rule create a real business problem?

Three profiles are hit hardest: news sites covering breaking stories, fast-rotation classifieds platforms (jobs, real estate), and e-commerce sites with limited-time product launches. In these contexts, every hour counts, and waiting for Googlebot to visit naturally can cost traffic.

Google typically responds that these sites should be crawled frequently if they have sufficient authority. True in theory. In practice? Even established media outlets see indexation delays of several hours on hot content. [To verify]: no public data clearly correlates "crawl frequency" with "request quota". We assume a link exists, but Gary doesn't confirm it here.

Practical impact and recommendations

How should you prioritize your limited indexation requests?

First instinct: abandon the idea of submitting everything. Focus your manual requests on high-value URLs: strategic product launches, major editorial content, newly created transactional pages. Everything else should be discovered naturally.

Concretely? Implement an internal scoring system: priority 1 for pages generating direct revenue, priority 2 for editorial content with strong SEO potential, priority 3 for everything else. Use your 10-20 daily requests on priority 1 items, let the sitemap and internal linking handle the rest.
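The scoring idea above can be sketched in a few lines of Python. This is a minimal illustration, not a tool from the article: the `Candidate` class, the `split_by_quota` function, and the default quota of 10 are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    url: str
    priority: int  # 1 = direct revenue, 2 = strong SEO potential, 3 = everything else


def split_by_quota(candidates, daily_quota=10):
    """Split new URLs into (submit manually today, leave to natural discovery).

    Only priority 1 pages are eligible for manual submission, capped at the
    assumed daily quota. Everything else is left to the sitemap and internal links.
    """
    # sorted() is stable, so pages keep their input order within a priority tier.
    ranked = sorted(candidates, key=lambda c: c.priority)
    priority_one = [c.url for c in ranked if c.priority == 1]
    submit = priority_one[:daily_quota]
    rest = [c.url for c in ranked if c.url not in submit]
    return submit, rest


if __name__ == "__main__":
    pages = [
        Candidate("/blog/buying-guide", 2),
        Candidate("/products/launch-a", 1),
        Candidate("/tags/misc", 3),
    ]
    submit, rest = split_by_quota(pages, daily_quota=10)
    print("submit manually:", submit)
    print("leave to crawl:", rest)
```

Priority 1 pages that do not fit in today's quota fall into the second list, so they can be reconsidered the next day or left to natural discovery.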

What structural optimizations reduce dependence on manual requests?

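Your XML sitemap must be flawless: updated in real time, with precise lastmod values, and segmented by content type once you exceed 10,000 URLs (the sitemap protocol itself allows up to 50,000 URLs per file, so this is a manageability threshold rather than a hard limit). Google must be able to identify new content without ambiguity.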
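As an illustration of what a sitemap with precise lastmod values can look like, here is a minimal sketch using only the Python standard library. The `build_sitemap` function and the example URL are hypothetical; a real site would feed it from its CMS or database and call it once per content type to get segmented files.

```python
from datetime import datetime, timezone
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(entries):
    """Build sitemap XML from (absolute_url, last_modified) pairs.

    last_modified must be a timezone-aware datetime; it is written in UTC
    using the W3C datetime format that the sitemap protocol expects.
    """
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, modified in entries:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        stamp = modified.astimezone(timezone.utc).isoformat(timespec="seconds")
        ET.SubElement(url, "lastmod").text = stamp
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


if __name__ == "__main__":
    now = datetime.now(timezone.utc)
    print(build_sitemap([("https://example.com/products/new-item", now)]))
```

The important part is that lastmod reflects the real modification time of each page, not the time the sitemap file was regenerated.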

On the internal linking side: each new page should receive at least 3 internal links from daily-crawled pages. Ideally, integrate your new content into "Latest articles," "New products," "Recent updates" sections present on your homepage or main categories.
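The "at least 3 internal links" rule can be checked against a crawl export. The sketch below assumes you already have a list of (source, target) link pairs and a set of pages you consider crawled daily; the function name `underlinked_pages` and the sample data are hypothetical.

```python
def underlinked_pages(new_pages, links, daily_crawled, minimum=3):
    """Return {page: inbound_count} for new pages below the link minimum.

    Only links whose source is in `daily_crawled` are counted, and each
    source page counts once even if it links to the target several times.
    """
    sources_by_page = {page: set() for page in new_pages}
    for source, target in links:
        if target in sources_by_page and source in daily_crawled:
            sources_by_page[target].add(source)
    return {
        page: len(sources)
        for page, sources in sources_by_page.items()
        if len(sources) < minimum
    }


if __name__ == "__main__":
    links = [
        ("/", "/products/a"),
        ("/new", "/products/a"),
        ("/category/shoes", "/products/a"),
        ("/", "/products/b"),
        ("/archive/2019", "/products/b"),  # not a daily-crawled source
    ]
    daily = {"/", "/new", "/category/shoes"}
    print(underlinked_pages(["/products/a", "/products/b"], links, daily))
```

Which pages count as "daily-crawled" is something you would derive from your own server logs; the sketch takes that set as given.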

One often overlooked point: verify that your robots.txt and crawl directives aren't accidentally blocking Googlebot on sections you later try to submit for indexation. It seems obvious, but we still see this type of inconsistency on medium-sized sites.
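A quick consistency check before submitting URLs can be sketched with Python's standard `urllib.robotparser`. The function name `blocked_for_googlebot` and the sample rules are assumptions for the example. Note that this parser only approximates Googlebot's behavior: it does not implement Google's wildcard or longest-match rules, so treat the result as a first filter rather than a verdict.

```python
from urllib.robotparser import RobotFileParser


def blocked_for_googlebot(robots_txt, urls):
    """Return the URLs that the given robots.txt content disallows for Googlebot."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch("Googlebot", url)]


if __name__ == "__main__":
    robots = "User-agent: *\nDisallow: /drafts/\n"
    to_submit = [
        "https://example.com/drafts/launch-page",
        "https://example.com/products/new-item",
    ]
    for url in blocked_for_googlebot(robots, to_submit):
        print("blocked by robots.txt, do not submit:", url)
```

The sketch covers robots.txt only; noindex meta tags and X-Robots-Tag headers would need a separate check on the fetched pages.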

What if your production volume structurally exceeds the quota?

Let's be realistic: if you publish 200 URLs/day and Google naturally indexes only 50, you have three options. One, reduce volume and prioritize quality. Two, radically improve your architecture so Googlebot discovers everything itself. Three, accept that part of your content won't be indexed immediately.

Many sites overproduce out of fear of missing out, without analyzing what share of their indexed pages actually generates traffic. If 70% of your URLs never receive organic visits, why force their indexation? Focus your efforts and your quota on the 30% that performs.
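The share of indexed pages that generate traffic is simple arithmetic once you have two exports: the list of indexed URLs and the organic clicks per URL. The sketch below assumes both are already loaded into Python; `traffic_share` and the sample numbers are hypothetical.

```python
def traffic_share(indexed_urls, clicks_by_url):
    """Return (share of indexed URLs with at least one click, URLs with none)."""
    indexed = set(indexed_urls)
    if not indexed:
        return 0.0, []
    performing = {url for url in indexed if clicks_by_url.get(url, 0) > 0}
    return len(performing) / len(indexed), sorted(indexed - performing)


if __name__ == "__main__":
    indexed = ["/a", "/b", "/c", "/d"]
    clicks = {"/a": 12, "/c": 0, "/d": 3}
    share, dead = traffic_share(indexed, clicks)
    print(f"{share:.0%} of indexed pages receive organic clicks")
    print("no clicks:", dead)
```

Pages in the "no clicks" list are candidates for consolidation or for being left out of manual submissions altogether.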

- Score new URLs by strategic value before submitting

- Audit your XML sitemap: structure, lastmod, update frequency

- Map internal linking to your new pages (minimum 3 links)

- Verify consistency between robots.txt / crawl directives / submitted pages

- Analyze the rate of indexed pages actually generating traffic

- Implement "new content" sections on high-crawl pages

- Test natural discovery speed before manual submission

- Document your daily quota usage to identify patterns

❓ Frequently Asked Questions

Can you increase your indexation request quota by contacting Google?

Does the request quota evolve with the site's authority?

How many indexation requests can I make per day?

If I manage several sites, do I have a global quota or one per site?

Is Google's Indexing API a viable alternative?

🎥 From the same video 19

Other SEO insights extracted from this same Google Search Central video · published on 18/07/2024

