Official statement

Other statements from this video (19)

- Should you panic if your hreflang temporarily disappears during a migration?

- Should you block GoogleOther, at the risk of affecting Google services?

- Do local domains (ccTLDs) really offer an SEO advantage for local search?

- Why does Google treat a site that has expanded massively as a brand-new website?

- Why does Google keep showing your site's old name after a rebranding?

- How can you use the Google Search Status Dashboard API in your SEO tools?

- Why doesn't your product structured data show up in rich results?

- Why does Google refuse unlimited indexing requests in Search Console?

- Brand confused with a common word: do you really have to wait months without doing anything?

- How do you hide text from Google by blocking the JavaScript that contains it?

- Can you really use Recipe schema for any type of recipe?

- Can Google transfer your SEO rankings during a domain migration?

- How does the noindex tag really work, page by page?

- Do you really have to fill in every structured data field for Google to take it into account?

- Does Google really use RSS feeds for crawling and indexing?

- Why does your new favicon take so long to appear in Google results?

- Does the order of H1, H2 and H3 tags really influence Google rankings?

- Do links on pages blocked from crawling really lose all their SEO value?

- Do sitemaps really have to follow precise structuring rules, or can you do whatever you want?

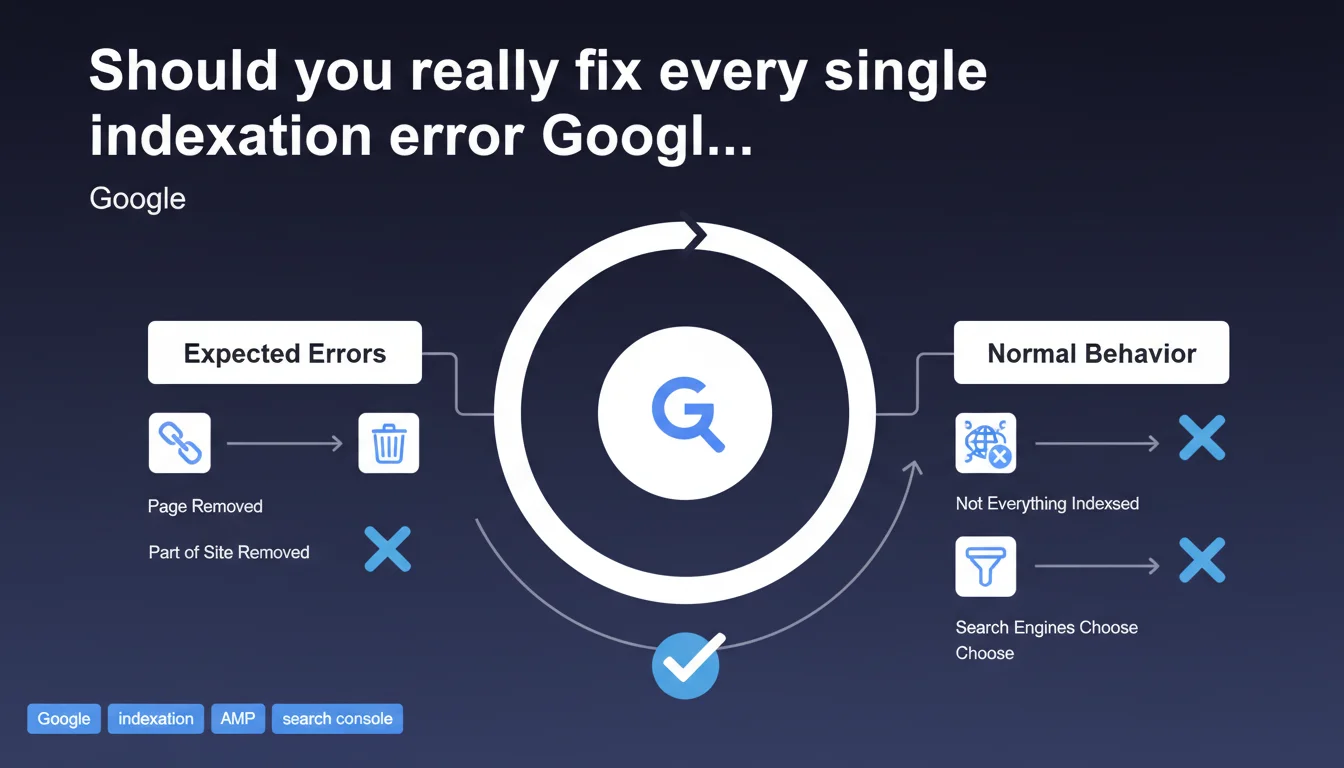

Google says you don't need to fix every indexing error flagged in Search Console. Some errors are normal and expected, especially after content removal, and search engines don't index every page of a site anyway. The takeaway: stop panicking at the first red alert.

What you need to understand

Why does Google say some errors are "expected"?

Search Console lists many indexing exclusions that are not necessarily problems to fix. When you delete an entire section of your site, the resulting 404s are perfectly normal. The same goes for URLs excluded by robots.txt, a noindex tag, or a canonical pointing to another page.
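To make these exclusion reasons concrete, here is a minimal sketch that checks a page you have already fetched for the signals listed above: a 404/410 status, a `noindex` in the `X-Robots-Tag` header or a robots meta tag, a canonical pointing to another URL, and, optionally, a robots.txt rule. It assumes you pass in the status code, headers, HTML and robots.txt text yourself; `exclusion_reasons` and `HeadSignals` are hypothetical names, and this is a simplified illustration, not how Google actually evaluates pages.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin
from urllib.robotparser import RobotFileParser


class HeadSignals(HTMLParser):
    """Collects robots meta directives and the canonical link from a page."""

    def __init__(self):
        super().__init__()
        self.robots = []        # contents of <meta name="robots|googlebot">
        self.canonical = None   # href of <link rel="canonical">

    def handle_starttag(self, tag, attrs):
        attrs = {name: (value or "") for name, value in attrs}
        if tag == "meta" and attrs.get("name", "").lower() in ("robots", "googlebot"):
            self.robots.append(attrs.get("content", "").lower())
        elif tag == "link" and "canonical" in attrs.get("rel", "").lower().split():
            self.canonical = attrs.get("href")


def exclusion_reasons(url, status, headers, html, robots_txt=""):
    """List simplified reasons why `url` would stay out of the index (hypothetical helper)."""
    reasons = []
    if robots_txt:
        rules = RobotFileParser()
        rules.parse(robots_txt.splitlines())
        if not rules.can_fetch("Googlebot", url):
            reasons.append("blocked by robots.txt")
    if status in (404, 410):
        reasons.append(f"not found ({status})")
    elif status >= 500:
        reasons.append(f"server error ({status})")
    # HTTP header names are case-insensitive, so normalize them first.
    header_robots = {k.lower(): v for k, v in headers.items()}.get("x-robots-tag", "")
    page = HeadSignals()
    page.feed(html)
    if "noindex" in header_robots.lower() or any("noindex" in d for d in page.robots):
        reasons.append("noindex")
    # A canonical that resolves to a different URL consolidates this page elsewhere.
    if page.canonical and urljoin(url, page.canonical) != url:
        reasons.append("canonical points to another URL")
    return reasons
```

An empty list means none of these signals is present; it does not guarantee the page will be indexed, since Google also applies its own quality and duplication filters.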

The issue is that many site owners panic when faced with a report full of red alerts. Here Google is trying to defuse that anxiety by reminding everyone that a search engine cannot, and does not want to, index everything.

What does Google mean by "search engines don't index everything"?

Googlebot crawls within a limited crawl budget, and Google applies quality filters. Some pages may be deemed too weak, redundant, or irrelevant to users. Others are deliberately blocked by the webmaster. Google will never give you a precise figure, but it's clear that part of the web remains out of the index, intentionally or for technical reasons.

In practice, a site with 10,000 URLs might have only 7,000 indexed, and that's not necessarily a disaster if the missing 3,000 are outdated pagination archives or faceted-filter pages with no SEO value.
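A global coverage rate hides where the missing pages are. The sketch below computes coverage per page type on a scaled-down version of that example (7 product URLs indexed, 3 archive URLs not). It assumes you have a list of known URLs (from your sitemaps or a crawl) and a list of indexed URLs; the rule in the hypothetical `page_type` function, which uses the first path segment as the page type, is only an illustration.

```python
from collections import defaultdict
from urllib.parse import urlparse


def page_type(url):
    """Hypothetical rule: the first path segment identifies the page type."""
    first = urlparse(url).path.strip("/").split("/")[0]
    return first or "home"


def coverage_by_type(known_urls, indexed_urls):
    """Share of known URLs that are indexed, per page type."""
    indexed = set(indexed_urls)
    totals, hits = defaultdict(int), defaultdict(int)
    for url in known_urls:
        kind = page_type(url)
        totals[kind] += 1
        if url in indexed:
            hits[kind] += 1
    return {kind: hits[kind] / totals[kind] for kind in totals}


known = [f"https://example.com/products/{i}" for i in range(7)] + \
        [f"https://example.com/archive/page/{i}" for i in range(3)]
print(coverage_by_type(known, known[:7]))  # {'products': 1.0, 'archive': 0.0}
```

Overall coverage is 70% here, but the per-type view shows that every product page is indexed and only the archives are missing, which is the reassuring case described above.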

Which errors really deserve intervention?

Not all errors are equal. A strategic URL blocked by an accidental noindex is critical. An old product page that returns a 404 is less so. Google invites you to prioritize rather than blindly fix everything.

- Critical errors: strategic pages excluded (unintentional noindex, robots.txt blocking, redirect loops)

- Normal errors: 404s after content removal, intentional exclusions, canonicals consolidating variants

- Errors to watch: soft 404s, recurring crawl anomalies, server timeouts

- The key is to understand why a page isn't indexed before deciding whether to act
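One way to apply these three buckets to an exported list of excluded URLs is a small triage function, sketched below. The reason labels are assumed to match the wording of Search Console's page indexing report (check them against your own export), and the `strategic` and `intentional` flags, like the `triage` name, are hypothetical inputs you would derive from your own knowledge of the site.

```python
# Reason labels assumed to match Search Console's page indexing report.
NORMAL_REASONS = {
    "Not found (404)",
    "Alternate page with proper canonical tag",
}
WATCH_REASONS = {
    "Soft 404",
    "Server error (5xx)",
}


def triage(reason, strategic, intentional=False):
    """Sort one excluded URL into the buckets listed above."""
    if strategic and not intentional:
        return "critical"   # a page that matters is out of the index
    if reason in WATCH_REASONS:
        return "watch"      # may reveal weak content or server trouble
    if intentional or reason in NORMAL_REASONS:
        return "normal"     # expected: removal, deliberate exclusion, consolidation
    return "review"         # unclear: understand why before acting
```

The final `"review"` bucket reflects the last point of the list: when the reason isn't obviously expected, look before you fix.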

SEO Expert opinion

Is this statement consistent with real-world practices?

Yes and no. In principle, Google is right: too many junior SEOs waste enormous amounts of time chasing every minor error. But the phrasing remains dangerously vague. What exactly is an "expected" error? Google provides no threshold, no precise typology.

On high-turnover e-commerce sites, seeing 20% 404 errors might be perfectly healthy. On a static blog, 5% should already raise concerns. [Worth checking]: Google provides no objective framework to distinguish normal from pathological.

What nuances should be added to this claim?

Saying "you don't need to fix everything" doesn't mean "ignore your reports". A site accumulating 5xx server errors or massive timeouts has a structural problem, even if Google downplays it. Soft 404s, in particular, often signal weak content that Google politely declines to index.

Moreover, some errors are symptoms of other dysfunctions. A sudden explosion of pages excluded by canonical might reveal a technical bug or CMS misconfiguration. Not fixing something "because Google says it's normal" sometimes means missing a real issue.

In what cases does this rule absolutely not apply?

If strategic pages with high traffic or conversion value are excluded, Google's statement no longer holds. A flagship product page blocked by an accidental noindex must be fixed immediately, regardless of Mountain View's zen philosophy.

Similarly, a site experiencing a sudden drop in indexation rate without apparent cause can't simply shrug it off. Google's statement mainly aims to calm neuroses about residual and old errors, not to justify inaction in the face of serious anomalies.

Practical impact and recommendations

What should you concretely do when facing indexation errors?

Start by segmenting your errors. Separate critical URLs (active products, strategic content) from secondary ones (archives, filters, old campaigns). Focus your efforts on the first group.
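This segmentation can be sketched as a split of excluded URLs by path pattern. The patterns below (`/products/`, `/category/`, `/guides/`) and the `split_by_priority` name are hypothetical; replace them with the sections that carry traffic or conversions on your own site.

```python
import re
from urllib.parse import urlparse

# Hypothetical patterns for strategic sections; adapt to your site structure.
STRATEGIC_PATTERNS = [re.compile(p) for p in (r"^/products/", r"^/category/", r"^/guides/")]


def split_by_priority(excluded_urls):
    """Split excluded URLs into (critical, secondary), keeping input order."""
    critical, secondary = [], []
    for url in excluded_urls:
        path = urlparse(url).path
        if any(p.match(path) for p in STRATEGIC_PATTERNS):
            critical.append(url)
        else:
            secondary.append(url)
    return critical, secondary
```

Working through the `critical` list first keeps the effort on active products and strategic content, as recommended above.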

Next, verify the context of each exclusion. A 404 on a page removed six months ago? Ignore it. A noindex on a main category? Maximum urgency. Search Console shows you the status, but it's up to you to determine the severity.

What errors should you avoid when reacting to this statement?

Don't overcorrect into complacency. Just because Google says "you don't need to fix everything" doesn't mean you should let errors that actually affect crawling and indexing slide. A server timing out on 15% of requests is never "normal".
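To check whether your server is in that situation, you can measure how often Googlebot receives a server error. The sketch below assumes access logs in the Combined Log Format and treats 5xx responses as a proxy for timeouts; depending on your stack, a timeout may be logged as a 503/504 or not logged at all. It matches Googlebot by user-agent string only, which spoofed bots can fake; `googlebot_5xx_rate` is a hypothetical name.

```python
import re

# Combined Log Format tail: "request" status bytes "referer" "user-agent"
LOG_TAIL = re.compile(r'" (?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"$')


def googlebot_5xx_rate(log_lines):
    """Share of Googlebot requests answered with a 5xx status."""
    hits = errors = 0
    for line in log_lines:
        m = LOG_TAIL.search(line)
        if not m or "Googlebot" not in m.group("agent"):
            continue  # unparseable line or another client
        hits += 1
        if m.group("status").startswith("5"):
            errors += 1
    return errors / hits if hits else 0.0
```

A rate that stays close to zero is what you want; a persistent double-digit share is the structural problem described above.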

Conversely, don't panic over a colorful report. Residual 404s, properly configured canonical exclusions, old campaigns blocked by robots.txt: none of this deserves a week of technical sprints.

How do you verify your site is in good shape?

Monitor evolution over time rather than absolute figures. A stable site showing 200 errors for months is probably not in danger. A site jumping from 50 to 500 errors in a week has a real problem.
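Watching the trend rather than the absolute count can be reduced to comparing each week with the previous one. The sketch assumes you already collect weekly error counts (for example from regular Search Console exports); the doubling ratio and the minimum increase of 100 errors are arbitrary illustrative thresholds, not values Google recommends, and `flag_spikes` is a hypothetical name.

```python
def flag_spikes(weekly_counts, ratio=2.0, min_increase=100):
    """Return indices of weeks whose error count jumped versus the previous week.

    ratio and min_increase are illustrative thresholds; tune them to your site.
    """
    flagged = []
    for i in range(1, len(weekly_counts)):
        prev, cur = weekly_counts[i - 1], weekly_counts[i]
        # Require both an absolute and a relative jump to ignore small noise.
        if cur - prev >= min_increase and cur >= ratio * max(prev, 1):
            flagged.append(i)
    return flagged


print(flag_spikes([200, 205, 198, 201]))  # [] : stable, even if not zero
print(flag_spikes([50, 48, 500, 510]))    # [2] : the week that jumped to 500
```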

Cross-reference the data: indexed pages vs. URLs submitted in your sitemaps, coverage rate by page type, and server logs to identify crawled but non-indexed URLs. This multi-source analysis is what reveals true anomalies.
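The cross-referencing step boils down to set comparisons. This sketch assumes a regular XML sitemap (sitemap index files are not handled), a list of indexed URLs taken from a Search Console export, and a list of crawled URLs extracted from your server logs; the function names are hypothetical.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_urls(xml_text):
    """Return the <loc> values of a regular (non-index) XML sitemap."""
    root = ET.fromstring(xml_text)
    return {loc.text.strip() for loc in root.findall("sm:url/sm:loc", SITEMAP_NS)}


def cross_check(xml_text, indexed_urls, crawled_urls):
    """Compare sitemap, index and crawl data to surface anomalies."""
    submitted = sitemap_urls(xml_text)
    indexed, crawled = set(indexed_urls), set(crawled_urls)
    return {
        "submitted_not_indexed": submitted - indexed,  # you want these, Google doesn't have them
        "crawled_not_indexed": crawled - indexed,      # Googlebot visited but did not index
        "indexed_not_submitted": indexed - submitted,  # indexed pages missing from your sitemap
    }
```

Each of the three sets is a starting point for the "why" question, not a to-do list in itself.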

- Segment your errors by strategic priority (critical pages vs. secondary)

- Analyze the context of each exclusion before deciding on action

- Monitor temporal variations rather than absolute values

- Cross-reference Search Console data with server logs and analytics

- Prioritize errors impacting high-traffic or high-conversion pages

- Ignore residual 404s on intentionally removed content

- Document your intentional exclusions to prevent false alerts
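Documenting intentional exclusions works best as a small register that your alerting can read. The sketch below keeps hypothetical patterns with a written reason for each and filters an exclusion list down to the paths nothing accounts for; the patterns and the `unexplained` name are illustrations, not a standard format.

```python
from fnmatch import fnmatchcase

# Hypothetical register of deliberate exclusions: pattern -> documented reason.
# In fnmatch syntax "*" matches any run of characters (including "/")
# and "[?]" matches a literal question mark.
INTENTIONAL_EXCLUSIONS = {
    "/search/*": "internal search results, noindex by design",
    "/archive/*": "old campaigns, removed on purpose",
    "/*[?]sort=*": "sort parameters, canonicalized to the base listing",
}


def unexplained(excluded_paths):
    """Keep only the excluded paths that no documented exclusion accounts for."""
    return [
        path for path in excluded_paths
        if not any(fnmatchcase(path, pattern) for pattern in INTENTIONAL_EXCLUSIONS)
    ]
```

Only what `unexplained` returns needs a human look, which keeps false alerts out of the way of real ones.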

❓ Frequently Asked Questions

Do I need to fix every 404 flagged in Search Console?

What indexing error rate is acceptable?

How do you tell a normal error from a problematic one?

What should I do if my indexing rate drops suddenly?

Are soft 404s really harmless?


Other SEO insights extracted from this same Google Search Central video · published on 18/07/2024
