Official statement

Other statements from this video (19)

- Should you panic if your hreflang temporarily disappears during a migration?

- Should you block GoogleOther, or does that risk affecting its Google services?

- Do local domains (ccTLDs) really offer an SEO advantage for local search?

- Why does Google treat a site that has expanded massively as a brand-new website?

- Why does Google keep showing your site's old name after a rebrand?

- Do you really need to fix every indexing error reported in Search Console?

- How can you use the Google Search status dashboard API in your SEO tools?

- Why doesn't your product structured data appear in rich results?

- Why does Google refuse unlimited indexing requests in Search Console?

- Brand confused with a common word: do you really have to wait months without doing anything?

- How do you hide text from Google by blocking the JavaScript that contains it?

- Can you really use Recipe schema for any type of recipe?

- Can Google transfer your SEO rankings during a domain migration?

- How does the noindex tag really work, page by page?

- Do you really need to fill in every structured data field for Google to take it into account?

- Does Google really use RSS feeds for crawling and indexing?

- Why does your new favicon take so long to appear in Google results?

- Does the order of H1, H2 and H3 tags really influence Google rankings?

- Do sitemaps really need to follow specific structuring rules, or can you do whatever you want?

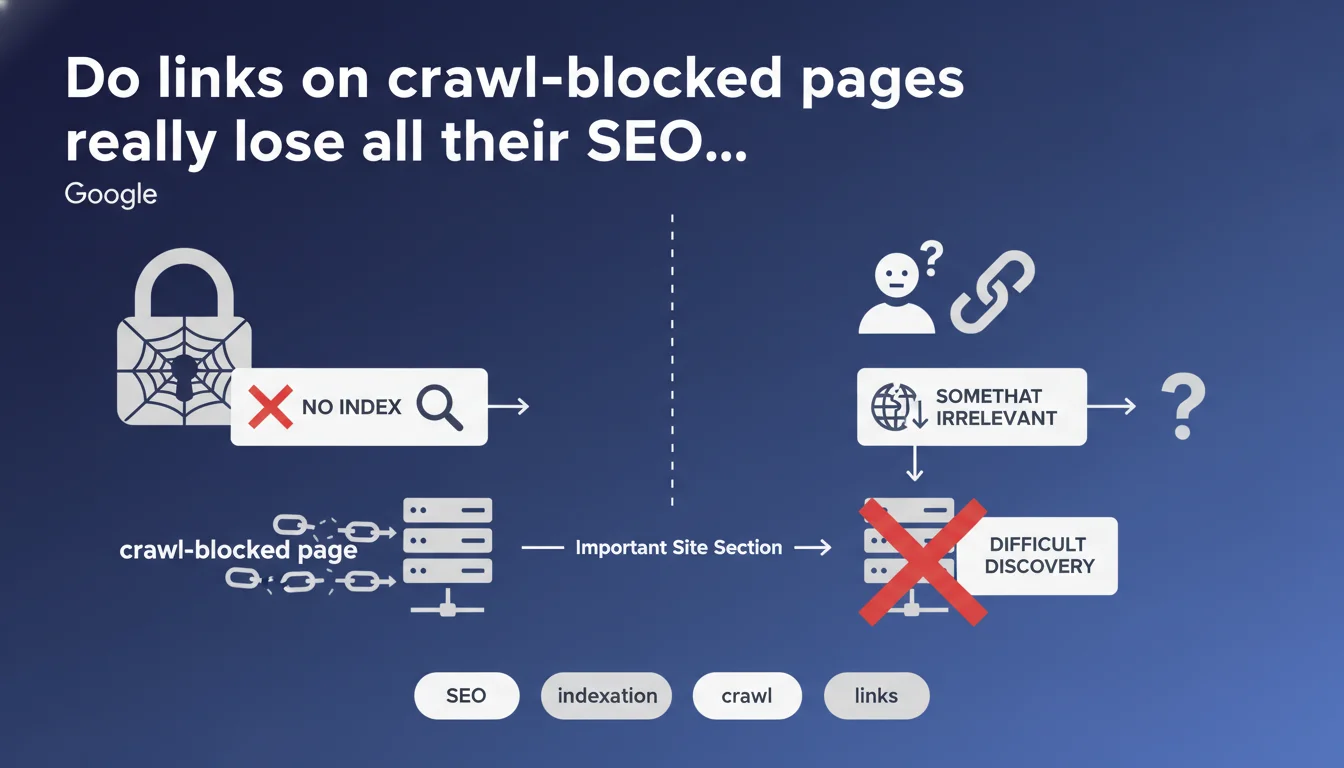

Google considers a page that is blocked from crawling or indexing to be equivalent, from the user's point of view, to a page that does not exist. Links on such pages therefore lose their relevance and pass no value. If a significant portion of your site is reachable only through blocked pages, search engines will have great difficulty discovering it.

What you need to understand

Why does Google equate a blocked page with a non-existent page?

The logic is straightforward: if a user cannot access a page, it doesn't matter whether it technically exists on the server. Google takes a user-centered perspective here.

A page blocked via robots.txt or placed behind authentication cannot be fetched by Googlebot, and a noindex page, although it can be fetched, is kept out of the index. In every case, the links it contains cannot be relied on for discovery, and their referral value disappears. This is consistent with the principle that Google crawls and ranks the web as a user would experience it.
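For the robots.txt case, this can be made concrete with Python's standard `urllib.robotparser`: any link whose source page Googlebot is not allowed to fetch is simply dropped, as if the page did not exist. The robots.txt rules, URLs and the `followable_links` helper below are hypothetical, noindex and authentication are not modelled, and note that `urllib.robotparser` only does prefix matching (it does not implement Google's `*` and `$` wildcard extensions).

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt rules for an example site.
ROBOTS_TXT = """\
User-agent: *
Disallow: /account/
Disallow: /filter/
"""

def build_parser(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt rules from a string instead of fetching them."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser

def followable_links(links, parser, user_agent="Googlebot"):
    """Keep only (source, target) links whose source page the crawler may fetch.

    A link sitting on a disallowed page is never seen by the crawler,
    so it is discarded here.
    """
    return [(src, dst) for src, dst in links if parser.can_fetch(user_agent, src)]

links = [
    ("https://example.com/", "https://example.com/blog/"),
    ("https://example.com/account/", "https://example.com/guide/"),
    ("https://example.com/filter/red/", "https://example.com/product/42"),
]
parser = build_parser(ROBOTS_TXT)
print(followable_links(links, parser))
```

Only the link from the home page survives; `/guide/` and `/product/42` lose their only inbound link.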

What does this concretely mean for your internal linking?

If your strategic pages receive internal links only from blocked pages, they become orphaned from Google's perspective. An XML sitemap can still surface their URLs, but without links from accessible pages their discovery and indexing remain compromised.
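One way to spot this situation is to compare the URLs declared in the sitemap with the targets of links found on accessible pages. A minimal sketch, assuming you already have the (source, target) links collected from crawlable, indexable pages; the sitemap content and helper names are illustrative.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}

def sitemap_urls(xml_text: str) -> set[str]:
    """Return every <loc> URL listed in a sitemap <urlset>."""
    root = ET.fromstring(xml_text)
    return {loc.text.strip() for loc in root.findall("sm:url/sm:loc", SITEMAP_NS)}

def sitemap_only_urls(sitemap: set[str], accessible_links, home: str) -> list[str]:
    """Sitemap URLs that no link on an accessible page points to.

    `accessible_links` is assumed to hold only (source, target) pairs found
    on crawlable, indexable pages. The home page is excluded because it is
    the crawl entry point.
    """
    linked = {target for _, target in accessible_links}
    return sorted(sitemap - linked - {home})

# Hypothetical example data.
SITEMAP_XML = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/</loc></url>
  <url><loc>https://example.com/blog/</loc></url>
  <url><loc>https://example.com/guide/</loc></url>
</urlset>"""

accessible_links = [("https://example.com/", "https://example.com/blog/")]
print(sitemap_only_urls(sitemap_urls(SITEMAP_XML), accessible_links, "https://example.com/"))
```

Here `/guide/` is listed in the sitemap but receives no link from an accessible page, so it is flagged.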

The problem worsens when an entire section of the site depends on these invisible links. Google may then underestimate the importance of this content, or even never crawl it properly.

Does this rule apply to all types of blocking?

In this statement, Google does not distinguish between robots.txt blocking, noindex tags and pages behind authentication. The effect is the same in every case: the links become irrelevant.

This is a simplification that raises questions, particularly for crawlable noindex pages, whose links Googlebot can in principle still follow. But the official position remains firm: no user access means no link value.
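Because crawlable noindex pages behave differently from robots.txt-blocked pages in practice, an audit should at least tell them apart. Googlebot has to fetch a page to see its noindex directive, which can appear in a robots meta tag or in an `X-Robots-Tag` HTTP header (`none` is equivalent to `noindex, nofollow`). The sketch below uses Python's `html.parser`; the helper names are hypothetical and it is deliberately simplified, for example it ignores user-agent-prefixed header values such as `googlebot: noindex`.

```python
from html.parser import HTMLParser

class RobotsMetaParser(HTMLParser):
    """Collects directives from <meta name="robots"> and <meta name="googlebot">."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attributes = dict(attrs)
        name = (attributes.get("name") or "").lower()
        if name in ("robots", "googlebot"):
            content = attributes.get("content") or ""
            self.directives.update(
                d.strip().lower() for d in content.split(",") if d.strip()
            )

def is_noindex(html: str, x_robots_tag: str = "") -> bool:
    """True if the page carries noindex (or none) in a meta tag or the header.

    Simplification: user-agent-prefixed header values are not parsed.
    """
    parser = RobotsMetaParser()
    parser.feed(html)
    parser.close()
    header = {d.strip().lower() for d in x_robots_tag.split(",") if d.strip()}
    return bool({"noindex", "none"} & (parser.directives | header))

print(is_noindex('<meta name="robots" content="noindex, follow">'))
print(is_noindex("<p>Public article</p>", x_robots_tag="noindex"))
print(is_noindex('<meta name="robots" content="index, follow">'))
```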

- A blocked page is equivalent to a non-existent page for Google

- Links on blocked pages lose their ability to pass recommendation value

- Orphaned pages (accessible only through blocked links) risk never being indexed

- This rule applies regardless of the type of blocking (robots.txt, noindex, authentication)
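The second point can be illustrated with the textbook PageRank formulation (power iteration with a damping factor). This is not Google's current ranking system; it only shows the mechanism in the robots.txt case, where a blocked page's outlinks are simply dropped. The page names, links and the `blocked` parameter are hypothetical.

```python
def pagerank(pages, links, blocked=frozenset(), damping=0.85, iterations=50):
    """Textbook PageRank by power iteration.

    Outlinks of pages in `blocked` are dropped, modelling links that pass
    no value. Pages left without outlinks (dangling pages) spread their
    score evenly over all pages, so the scores always sum to 1.
    """
    n = len(pages)
    out = {p: [] for p in pages}
    for src, dst in links:
        if src not in blocked:
            out[src].append(dst)
    rank = {p: 1.0 / n for p in pages}
    for _ in range(iterations):
        dangling = sum(rank[p] for p in pages if not out[p])
        new = {p: (1 - damping) / n + damping * dangling / n for p in pages}
        for src in pages:
            for dst in out[src]:
                new[dst] += damping * rank[src] / len(out[src])
        rank = new
    return rank

pages = ["home", "members", "guide", "blog"]
links = [("home", "members"), ("home", "blog"), ("members", "guide"), ("blog", "home")]
open_rank = pagerank(pages, links)
blocked_rank = pagerank(pages, links, blocked={"members"})
print(round(open_rank["guide"], 3), round(blocked_rank["guide"], 3))
```

With `members` blocked, `guide` keeps only the uniform share every page receives, because its single inbound link sits on the blocked page.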

SEO Expert opinion

Is this statement entirely consistent with real-world observations?

Broadly speaking, yes, but with important nuances. On sites I've audited, pages reachable only through links on blocked pages do indeed show catastrophic indexing rates.

Where it gets tricky: Google doesn't specify whether a link from a crawlable noindex page retains any value. Technically, Googlebot can follow that link. In practice, tests suggest that PageRank can still flow through it, but in a significantly weakened form. This needs to be verified for each specific configuration.

What are the real consequences on crawl budget?

If Google considers links irrelevant, it won't allocate resources to actively following them. On a large site, this can create a major bottleneck.

The problem becomes critical when important pages sit many clicks away from the home page and are reachable only through blocked areas. Google may take weeks, or even months, to discover them, if it discovers them at all.
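Click depth under these conditions can be measured with a breadth-first search from the home page that refuses to follow links sitting on blocked pages. A minimal sketch; the URLs and the `blocked` set are hypothetical, and real audits would feed in links from a crawl export.

```python
from collections import deque

def click_depths(home, links, blocked):
    """Breadth-first click depth from `home`.

    Links that sit on pages in `blocked` are not followed, mirroring the idea
    that a blocked page behaves like a page that does not exist. Pages that
    are never reached are absent from the result.
    """
    graph = {}
    for src, dst in links:
        graph.setdefault(src, []).append(dst)
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        if page in blocked:
            continue  # the URL is known, but its outgoing links are ignored
        for nxt in graph.get(page, []):
            if nxt not in depths:
                depths[nxt] = depths[page] + 1
                queue.append(nxt)
    return depths

links = [
    ("/", "/category/"), ("/category/", "/category/sub/"),
    ("/category/sub/", "/product-1"), ("/", "/members/"),
    ("/members/", "/product-2"),
]
depths = click_depths("/", links, blocked={"/members/"})
for page in ["/product-1", "/product-2"]:
    print(page, depths.get(page, "unreachable"))
```

`/product-1` sits three clicks deep, while `/product-2` is unreachable because its only path runs through the blocked member area.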

In which cases does this rule cause specific problems?

E-commerce sites that block their navigation facets are particularly affected. If your product pages are reachable only through these facets, they become invisible.

The same applies to sites with member areas. If public articles are only linked from authentication-required pages, their organic discovery collapses. Let's be honest: many sites make this mistake without realizing it.

Practical impact and recommendations

How do you identify orphaned pages caused by blocking?

Start by crawling your site under the same restrictions as Googlebot. Screaming Frog and Sitebulb can be configured to respect robots.txt and noindex directives.

Then compare this crawl with an unrestricted crawl. Pages that appear in the second but not in the first are potentially orphaned from Google's point of view. Check whether they receive links from indexable pages.
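The comparison itself is a set difference between the two crawl exports. A minimal sketch, assuming both crawls were exported to CSV with the page URL in a column named `Address` (adjust the column name to your tool's export); the sample data is invented.

```python
import csv
import io

def urls_from_export(csv_text: str, column: str = "Address") -> set[str]:
    """Read the URL column of a crawl export into a set."""
    reader = csv.DictReader(io.StringIO(csv_text))
    return {row[column].strip() for row in reader if row.get(column)}

def potentially_orphaned(restricted_csv: str, unrestricted_csv: str,
                         column: str = "Address") -> list[str]:
    """URLs found by the unrestricted crawl but missed by the Googlebot-like one."""
    return sorted(urls_from_export(unrestricted_csv, column)
                  - urls_from_export(restricted_csv, column))

# Invented sample exports.
restricted = """Address,Status Code
https://example.com/,200
https://example.com/blog/,200
"""
unrestricted = """Address,Status Code
https://example.com/,200
https://example.com/blog/,200
https://example.com/guide/,200
"""
print(potentially_orphaned(restricted, unrestricted))
```

In practice you would read both exports from disk; passing strings keeps the sketch self-contained.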

What errors must you absolutely avoid?

Never block a page that serves as a critical navigation hub, even if it seems unimportant to users. The cascading consequences can be devastating.

Also avoid creating structural dependencies. If an entire category only receives internal links from noindex pages, you're sabotaging your own indexation.

What should you concretely implement?

Ensure that every strategic page receives at least one link from a crawlable, indexable page, ideally from the home page or from a page one click away from it.

For complex sites, create redundant internal linking: multiple access paths to each important page. This reduces the risk of accidental orphaning.
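Both checks can be expressed as one count: the number of distinct indexable pages linking to each strategic URL. Zero means the page is orphaned for Google, one means a single access path, two or more means the linking is redundant. A minimal sketch; the URL lists, the `indexable` set and the helper names are hypothetical.

```python
def indexable_inlink_counts(strategic, links, indexable):
    """Count distinct indexable pages linking to each strategic URL (self-links ignored)."""
    sources = {url: set() for url in strategic}
    for src, dst in links:
        if dst in sources and src in indexable and src != dst:
            sources[dst].add(src)
    return {url: len(srcs) for url, srcs in sources.items()}

def classify(count: int) -> str:
    """0 = orphaned for Google, 1 = single access path, 2+ = redundant paths."""
    if count == 0:
        return "orphaned"
    if count == 1:
        return "single path"
    return "redundant"

strategic = ["/pricing/", "/guide/", "/category/shoes/"]
indexable = {"/", "/blog/", "/category/shoes/"}
links = [
    ("/", "/pricing/"),
    ("/blog/", "/pricing/"),
    ("/", "/category/shoes/"),
    ("/account/", "/guide/"),  # the source page is not indexable
]
counts = indexable_inlink_counts(strategic, links, indexable)
for url, count in counts.items():
    print(url, count, classify(count))
```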

- Crawl your site with Googlebot restrictions to identify orphaned pages

- Verify that each strategic page receives at least one link from an indexable page

- Eliminate structural dependencies on blocked pages

- Create redundant navigation paths for priority content

- Document blocking rules and their impact on internal linking

- Regularly audit server logs to detect under-crawled areas
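For the log audit, a first pass can count Googlebot requests per top-level section and flag sections that receive few or no hits. A minimal sketch, assuming logs in the Apache/Nginx "combined" format; the sample lines and helper names are invented. Matching on the user-agent string alone is a simplification: it can be spoofed, and Google recommends verifying Googlebot with a reverse DNS lookup, which is not done here.

```python
import re
from collections import Counter

# Apache/Nginx "combined" log format (assumed; adapt the pattern to your server).
LOG_LINE = re.compile(
    r'^(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ '
    r'"[^"]*" "(?P<agent>[^"]*)"'
)

def section_of(path: str) -> str:
    """Map /blog/post-1?x=1 to /blog/ and / to /."""
    first = path.split("?", 1)[0].strip("/").split("/", 1)[0]
    return f"/{first}/" if first else "/"

def googlebot_hits_by_section(lines) -> Counter:
    """Count requests whose user agent mentions Googlebot, per section."""
    counts = Counter()
    for line in lines:
        match = LOG_LINE.match(line)
        if match and "Googlebot" in match.group("agent"):
            counts[section_of(match.group("path"))] += 1
    return counts

def under_crawled(counts: Counter, sections, min_hits: int = 1) -> list[str]:
    """Sections with fewer Googlebot hits than `min_hits` over the log window."""
    return [s for s in sections if counts[s] < min_hits]

GB = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
logs = [
    f'66.249.66.1 - - [01/Jul/2024:10:00:00 +0000] "GET /blog/post-1 HTTP/1.1" 200 5120 "-" "{GB}"',
    f'66.249.66.1 - - [01/Jul/2024:10:05:00 +0000] "GET /blog/post-2 HTTP/1.1" 200 4096 "-" "{GB}"',
    f'66.249.66.1 - - [01/Jul/2024:10:10:00 +0000] "GET / HTTP/1.1" 200 8192 "-" "{GB}"',
    '203.0.113.7 - - [01/Jul/2024:10:12:00 +0000] "GET /guides/setup HTTP/1.1" 200 2048 "-" "Mozilla/5.0 (Windows NT 10.0)"',
]
counts = googlebot_hits_by_section(logs)
print(under_crawled(counts, ["/", "/blog/", "/guides/"]))
```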

❓ Frequently Asked Questions

Does a link from a crawlable noindex page pass PageRank?

Will pages listed only in the XML sitemap be indexed if they have no internal links?

Should you block filter and facet pages in e-commerce?

How should you handle pages behind authentication that contain links to public content?

Does a temporary robots.txt block have the same impact as a permanent noindex?

🎥 From the same video 19

Other SEO insights extracted from this same Google Search Central video · published on 18/07/2024
