Official statement

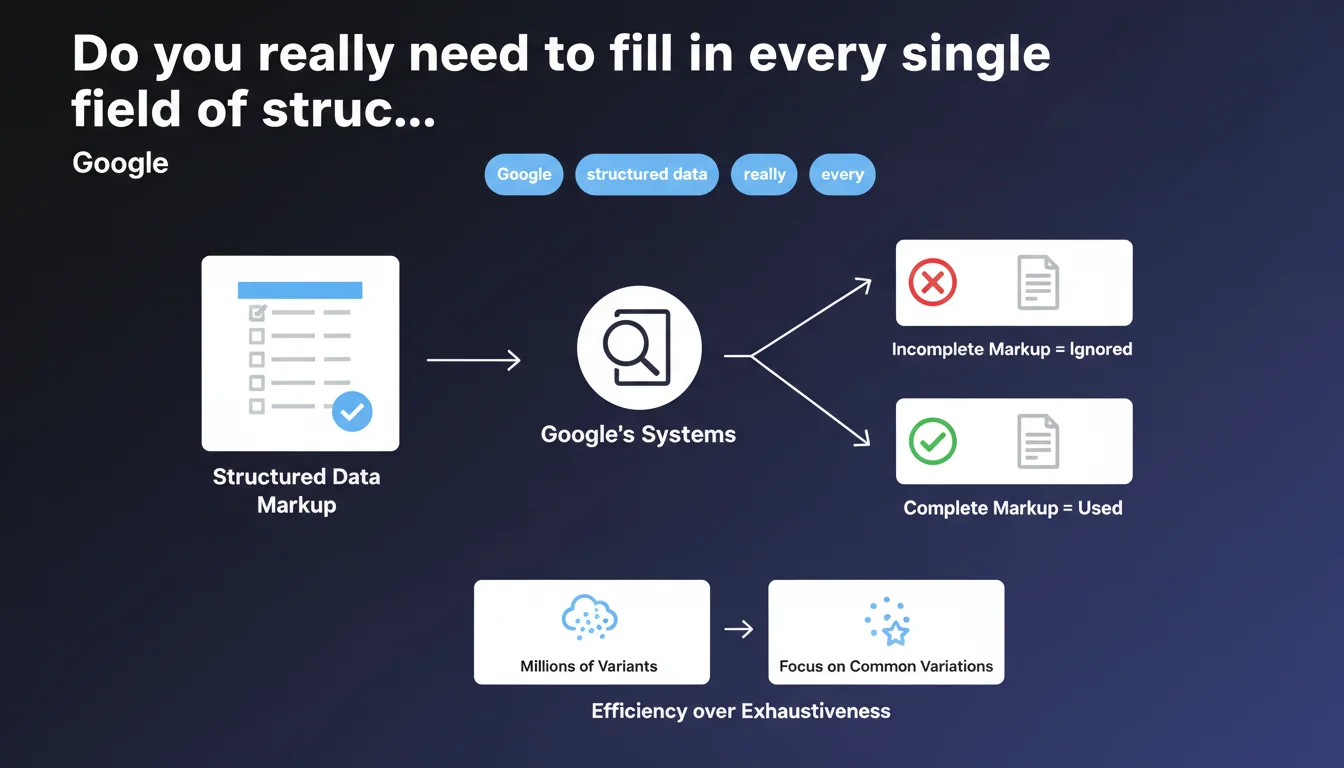

Google simply ignores incomplete structured data, even if it's technically valid. For catalogs with millions of product variants, it's better to focus markup on your best-selling variations rather than chase completeness at all costs.

What you need to understand

What exactly does "incomplete structured data" mean?

Google is referring to properties marked as "required" in its own structured data documentation, not in the Schema.org vocabulary, which does not make any property mandatory. If a markup type requires a specific field (say, a price inside a Product's offer or a name for a Recipe) and that field is missing, the markup is considered incomplete.

Google's testing tools will flag errors or warnings, yet the JSON-LD itself remains syntactically valid. On Google's end, however, the outcome is the same as if you had never added any markup at all.
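To make the distinction between "valid" and "complete" concrete, here is a minimal sketch of a completeness check. The `ASSUMED_REQUIRED` map and the helper names are hypothetical and chosen for illustration only; the authoritative list of required properties is the one in Google's documentation for each type.

```python
import json

# Hypothetical required-property map, for illustration only. Check Google's
# structured data documentation for the real requirements of each type.
ASSUMED_REQUIRED = {
    "Product": ["name", "offers.price"],
}

def get_path(node, dotted):
    """Follow a dotted path such as 'offers.price' through nested dicts."""
    for key in dotted.split("."):
        if not isinstance(node, dict) or key not in node:
            return None
        node = node[key]
    return node

def missing_required(markup):
    """Return the assumed-required properties that are absent or empty."""
    required = ASSUMED_REQUIRED.get(markup.get("@type"), [])
    return [p for p in required if get_path(markup, p) in (None, "", [])]

# This JSON-LD parses without error, but the offer carries no price.
snippet = json.loads("""
{
  "@context": "https://schema.org",
  "@type": "Product",
  "name": "Trail running shoe",
  "offers": {"@type": "Offer", "priceCurrency": "EUR"}
}
""")

print(missing_required(snippet))  # ['offers.price']
```

The snippet is syntactically fine JSON-LD, yet under the assumed rules it would count as incomplete, which is exactly the situation described above.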

Why does Google take such a radical stance on this?

The answer comes down to one word: reliability. Google's systems use this data to generate rich results — rich snippets, knowledge panels, product carousels. If the information is incomplete, there's no way to guarantee a coherent user experience.

Rather than trying to work with truncated data, Google prefers to ignore the markup entirely and rely on the visible content on the page. It's harsh but logical: a missing rich snippet is better than a misleading or incomplete one.

What's the logic behind the recommendation for millions of variants?

Google is implicitly admitting that marking up every variation in a giant catalog is counterproductive. For an e-commerce site with 50,000 products and 10 sizes per product, that would mean 500,000 Product entities to mark up.

The recommendation is pragmatic: focus on the most common variations, the ones that drive traffic and conversions. Everything else can remain unmarked without penalizing the site as a whole.

- The "required" fields in Google's documentation are truly mandatory — there's no negotiation

- Incomplete markup = ignored markup, even if it's syntactically correct

- For large catalogs, prioritization beats spreading yourself thin across every variant

- Google values data quality over the quantity of marked-up pages

SEO Expert opinion

Is this stance consistent with what we observe in practice?

Yes and no. In reality, some sites with incomplete structured data do get rich snippets — but it's unpredictable. Google seems to tolerate certain omissions depending on context or content type.

The problem is we never know when that tolerance applies. Counting on it is a gamble, not a strategy. [Needs verification]: Google doesn't publish a matrix showing which "required" fields are truly blocking for each Schema type.

Does the recommendation about product variants hide a confession of limitation?

Let's be honest: reading between the lines, Google is telling you that its systems struggle to process millions of structured entities per site. The recommendation to prioritize common variants sounds like an admission of technical limitation.

It's also a signal that Google doesn't need to know about every size/color combination to properly index a catalog. Structured markup isn't a prerequisite for indexing — it's a bonus for rich results.

What are the risks of following this logic to the letter?

If you only mark up 20% of your products because they're your bestsellers, you risk creating internal competitive distortion. Products without rich snippets will be structurally disadvantaged in SERPs against those that have them.

And be careful: what's "common" today may change tomorrow. Your markup strategy needs to be dynamic, not frozen at a snapshot of product popularity.

Practical impact and recommendations

How do I identify the truly required fields for my markup?

First step: consult Google's official documentation for the structured data type you're interested in (Product, Article, Recipe, etc.). Don't rely solely on Schema.org — Google has its own requirements.

Next, use Google's Rich Results Test, a standalone tool that is separate from Search Console. It distinguishes critical errors (missing required fields) from warnings (recommended but non-blocking fields).
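Before comparing a page against the documentation, it helps to see which properties the page actually emits. The following is a rough sketch using only Python's standard library; the `JsonLdExtractor` class and the sample page are made up for illustration, and the code assumes each JSON-LD block is a single JSON object.

```python
import json
from html.parser import HTMLParser

class JsonLdExtractor(HTMLParser):
    """Collect the parsed contents of <script type="application/ld+json"> tags."""

    def __init__(self):
        super().__init__()
        self._in_jsonld = False
        self._buffer = []
        self.blocks = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_jsonld = True
            self._buffer = []

    def handle_data(self, data):
        if self._in_jsonld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_jsonld:
            self._in_jsonld = False
            try:
                self.blocks.append(json.loads("".join(self._buffer)))
            except json.JSONDecodeError:
                pass  # Malformed JSON-LD is skipped in this sketch.

# Made-up sample page; in practice you would feed the fetched HTML.
page = """
<html><head>
<script type="application/ld+json">
{"@context": "https://schema.org", "@type": "Product",
 "name": "Trail running shoe",
 "offers": {"@type": "Offer", "priceCurrency": "EUR"}}
</script>
</head><body><h1>Trail running shoe</h1></body></html>
"""

extractor = JsonLdExtractor()
extractor.feed(page)
for block in extractor.blocks:
    props = sorted(k for k in block if not k.startswith("@"))
    print(block.get("@type"), props)  # Product ['name', 'offers']
```

The printed property list can then be checked by hand against the required and recommended properties in Google's documentation for that type.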

What strategy should I adopt for an e-commerce catalog with thousands of variants?

Start with an analysis of your sales data and organic traffic. Identify the 20-30% of products that generate 80% of your revenue or organic visits.
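As a rough illustration of that analysis, the sketch below picks the smallest set of best-selling products that reaches a target share of revenue. The function name, the 80% default, and the sample figures are assumptions for the example, not values from Google.

```python
def top_products_by_share(revenue_by_sku, target_share=0.80):
    """Return the best sellers, in descending order, needed to reach target_share."""
    total = sum(revenue_by_sku.values())
    selected, running = [], 0.0
    ranked = sorted(revenue_by_sku.items(), key=lambda kv: kv[1], reverse=True)
    for sku, revenue in ranked:
        # Stop as soon as the products already selected cover the target share.
        if total == 0 or running / total >= target_share:
            break
        selected.append(sku)
        running += revenue
    return selected

# Made-up revenue figures per product identifier.
sales = {"A": 500, "B": 250, "C": 150, "D": 60, "E": 40}
print(top_products_by_share(sales))  # ['A', 'B', 'C']
```

The same function works with organic visits instead of revenue; only the input dictionary changes.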

Prioritize complete markup on those products. For the rest, you have two options: either minimal markup (without rich snippets), or markup at the generic product page level without spelling out every variant.
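One possible way to implement the second option is sketched below: priority products get one Offer per variant, while the rest get a single product-level entity with a schema.org `AggregateOffer` summarizing the price range. Using `AggregateOffer` for this purpose is an assumption made for the example, as are the function name and the input structure; verify the chosen representation against Google's Product documentation before relying on it.

```python
import json

def build_product_jsonld(product, detailed):
    """Build a schema.org Product dict, with per-variant offers only if detailed."""
    prices = [v["price"] for v in product["variants"]]
    if detailed:
        # Priority product: spell out every variant as its own Offer.
        offers = [
            {"@type": "Offer", "sku": v["sku"], "price": v["price"],
             "priceCurrency": product["currency"]}
            for v in product["variants"]
        ]
    else:
        # Long-tail product: one summary entity instead of one per variant.
        offers = {
            "@type": "AggregateOffer",
            "lowPrice": min(prices),
            "highPrice": max(prices),
            "priceCurrency": product["currency"],
            "offerCount": len(prices),
        }
    return {"@context": "https://schema.org", "@type": "Product",
            "name": product["name"], "offers": offers}

# Made-up product with two size variants.
shoe = {
    "name": "Trail running shoe",
    "currency": "EUR",
    "variants": [{"sku": "TRS-42", "price": 89.0}, {"sku": "TRS-43", "price": 95.0}],
}

print(json.dumps(build_product_jsonld(shoe, detailed=False), indent=2))
```

Whichever representation you choose, the point from the statement still applies: each entity you do emit should carry every property Google lists as required.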

Also think about automation: if your catalog changes, your markup needs to change too. A script that updates markup priorities based on quarterly performance can make all the difference.
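Such a script could be as simple as comparing last quarter's priority list with the new one. The sketch below is a hypothetical starting point; the function name and the "upgrade"/"downgrade"/"keep" labels are invented for the example.

```python
def plan_markup_changes(previous_priority, current_priority):
    """Compare two priority lists and say which products change markup tier."""
    prev, curr = set(previous_priority), set(current_priority)
    return {
        "upgrade": sorted(curr - prev),    # newly popular: add complete markup
        "downgrade": sorted(prev - curr),  # no longer a priority
        "keep": sorted(prev & curr),       # still a priority: leave as is
    }

# Made-up product identifiers for two consecutive quarters.
last_quarter = ["A", "B", "C"]
this_quarter = ["A", "C", "F"]
print(plan_markup_changes(last_quarter, this_quarter))
# {'upgrade': ['F'], 'downgrade': ['B'], 'keep': ['A', 'C']}
```

Running this on a schedule keeps the markup aligned with current product popularity rather than with a one-off snapshot.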

How do I verify that Google is actually using my structured data?

The "Enhancements" report in Search Console is your best friend. It shows you how many marked-up items were detected, how many have errors, and how many are valid and therefore eligible for rich results.

But watch out: a page that validates in Search Console doesn't guarantee it will show a rich snippet. Google reserves the right not to display enriched results even if the markup is perfect — it's eligibility, not a guarantee.

- Audit your structured data pages via Search Console and identify "required field missing" errors

- Fix critical errors first on high-traffic or high-potential pages

- For massive catalogs, segment by product popularity and mark up the top 20% first

- Automate markup updates if your catalog changes frequently

- Monitor the Enhancements report monthly to catch any regression or new errors

- Always test new markup with Google's test tool before deploying to production

❓ Frequently Asked Questions

If my Product markup is missing the "availability" field, will Google ignore it completely?

Do I lose rankings if my structured data is incomplete?

Do I need to mark up every color and size of my products to get rich snippets?

How can I tell whether a field is "required" by Google or merely recommended?

If Google ignores my incomplete markup, can it still use that data for other purposes?

🎥 From the same video 19

Other SEO insights extracted from this same Google Search Central video · published on 18/07/2024
