Official statement

Other statements from this video (17)

- Why isn't your site showing up in Google: indexation or ranking?

- Why does Google push Search Console for diagnosing indexation?

- Does Search Console's URL Inspection Tool really replace manual indexation testing?

- Is the Search Console indexing report really enough to diagnose your indexation problems?

- Should you really aim to get 100% of your pages indexed?

- Why does Google always index the homepage first on a new site?

- Why isn't your new site's homepage getting indexed?

- Why is your homepage still not showing up in Google's index?

- Is your site really missing from Google's index, or just a victim of canonicalization?

- Does hreflang skew your indexation reports in Search Console?

- Why will your "site under construction" pages never be indexed by Google?

- Why do some pages get indexed in seconds and others never?

- Does Google really apply a per-site indexation quota?

- Should you delete old content to improve indexation of new content?

- Should you really use Search Console's "Request indexing" feature?

- Is the site: operator really reliable for measuring your site's indexation?

- How can you make real use of the site: operator beyond simply checking indexation?

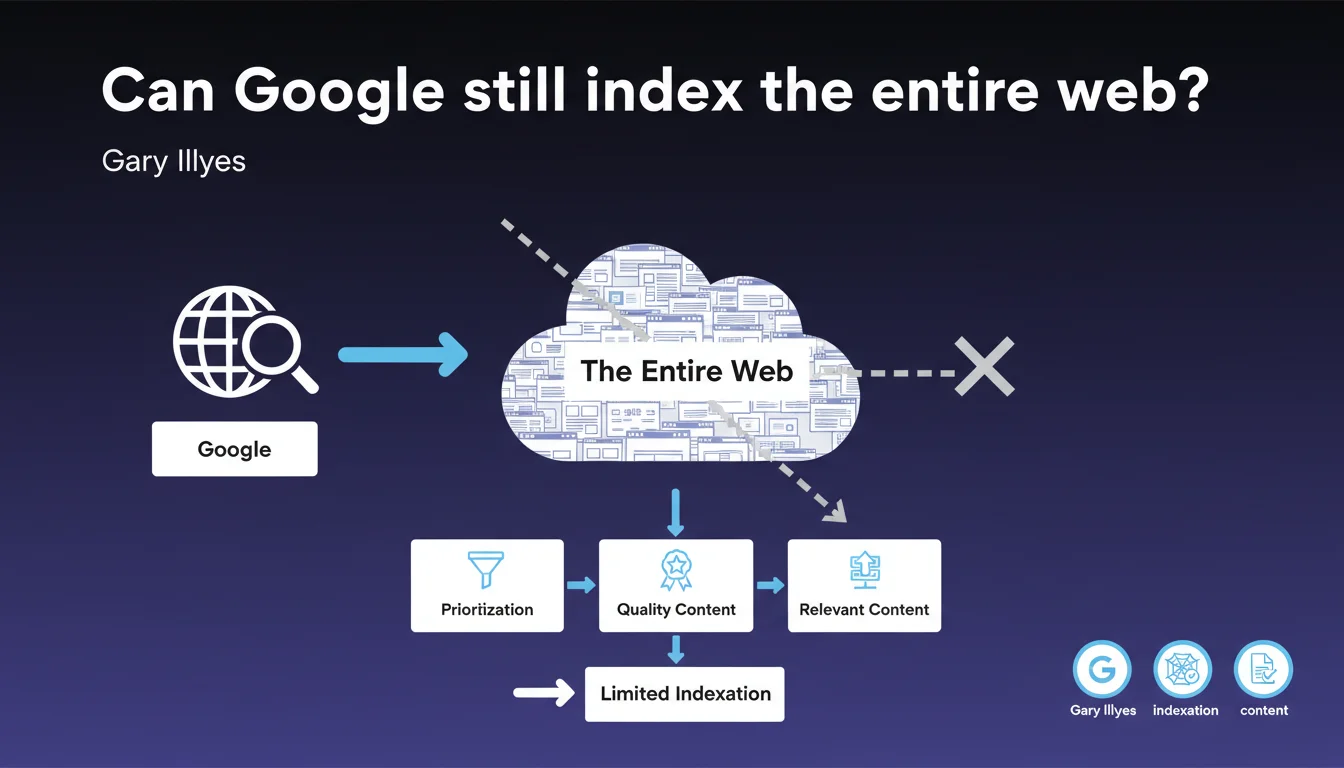

Google officially acknowledges that the internet has become too vast to be indexed in its entirety. Search engines now operate strict selection processes, prioritizing content deemed relevant and high quality. For SEO practitioners, this means that being technically crawlable no longer guarantees indexation — you must stand out to enter the index.

What you need to understand

Why is Google making this statement now?

Here, Gary Illyes formalizes what many SEO professionals have suspected for years: exhaustive indexation is dead. The explosion of online content (duplicate pages, automatically generated spam, low-quality sites) makes an "index everything" approach economically and technically absurd.

Google is not saying it lacks resources. It is saying that indexing everything that exists would make no sense for search result quality. The filtering happens upstream, at crawl time and when pages are selected for the index.

What does this change in practical terms?

This statement confirms that crawl budget and indexation are two separate battles. An engine can crawl a page without ever indexing it. It can also decide to remove it from the index afterward if it no longer meets quality or relevance criteria.

Expectations must be recalibrated — especially for large sites or niche content. Just because a page is accessible and technically correct does not mean it deserves a place in Google's index.
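To tell a selection decision apart from a technical block, it helps to first rule out the obvious technical reasons a page cannot be indexed. The sketch below is a minimal offline check that assumes you already have the page's HTTP status, response headers and HTML. Fetching them is left out. `technical_blockers` and `RobotsMetaParser` are hypothetical names. The check covers only the status code, `noindex` directives and a canonical pointing to another URL. It does not cover robots.txt, rendering or redirect chains. If it finds nothing and the page is still not indexed, selection becomes a more plausible explanation.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collects robots meta directives and the rel=canonical href from an HTML document."""

    def __init__(self):
        super().__init__()
        self.robots_directives = []
        self.canonical = None

    def handle_starttag(self, tag, attrs):
        # HTMLParser already lowercases tag and attribute names; attribute values may be None.
        values = {name: (value or "") for name, value in attrs}
        if tag == "meta" and values.get("name", "").lower() in ("robots", "googlebot"):
            self.robots_directives += [d.strip().lower() for d in values.get("content", "").split(",")]
        elif tag == "link" and "canonical" in values.get("rel", "").lower().split():
            self.canonical = values.get("href") or None


def technical_blockers(url, status, headers, html):
    """List technical reasons the page may not be indexable; an empty list means none were found."""
    blockers = []
    if status != 200:
        blockers.append(f"HTTP status {status}")
    # HTTP header names are case-insensitive, so normalise them before the lookup.
    header_values = {name.lower(): value for name, value in headers.items()}
    if "noindex" in header_values.get("x-robots-tag", "").lower():
        blockers.append("noindex in X-Robots-Tag header")
    parser = RobotsMetaParser()
    parser.feed(html)
    parser.close()
    # "none" in a robots meta tag is shorthand for "noindex, nofollow".
    if {"noindex", "none"} & set(parser.robots_directives):
        blockers.append("noindex in meta robots")
    # Assumes absolute canonical URLs; relative hrefs would need urllib.parse.urljoin first.
    if parser.canonical and parser.canonical.rstrip("/") != url.rstrip("/"):
        blockers.append(f"canonical points elsewhere: {parser.canonical}")
    return blockers
```

For example, `technical_blockers("https://example.com/a", 404, {}, html)` reports the 404 status before any content-level question arises.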

On what criteria does Google select what to index?

Google remains vague about the precise criteria, but several signals are widely believed to play a role: overall site authority, editorial quality, user behavior and content freshness. In practice, orphaned pages, thin content, duplicates and low-traffic sections tend to be the first to be dropped.

New or low-authority sites must prove their value quickly. Average content on an average site now has little chance of staying indexed over the long term.

- Exhaustive web indexation is technically and economically obsolete

- Being crawlable no longer guarantees indexation — selection is tightening

- Quality and relevance criteria become strict filters upstream

- Weak-authority or low-engagement sites face even more severe selection

- Google does not communicate a precise list of selection criteria for the index

SEO Expert opinion

Is this statement consistent with field observations?

Absolutely. For several years now, SEO practitioners have reported massive indexation issues on technically flawless sites: thousands of orphaned pages, content properly submitted via Search Console that never makes it into the index, and unexplained deindexations.

This statement formalizes what we were already seeing: Google no longer plays the exhaustiveness game. The question is no longer "is my site indexable?" but "does my site deserve to be indexed?".

What gray areas remain in this announcement?

Gary Illyes remains extremely vague about selection criteria. What is "relevant" content? At what threshold is a site deemed too weak to deserve complete indexation? [To verify] — Google provides no metrics, thresholds, or concrete examples.

We also don't know how this selection applies to different types of content. Are product pages treated like blog articles? Do deep pages on an authority site receive preferential treatment compared to a new site?

In what cases does this logic pose problems?

Niche sites, large knowledge bases and e-commerce sites with thousands of SKUs are hit first. A site can have real value for a specific user segment but remain invisible to Google if it does not generate massive engagement signals.

The risk is a self-reinforcing loop in which only already-popular content keeps getting indexed, stifling new entrants and emerging niches.

Practical impact and recommendations

What should you do concretely to maximize your chances of indexation?

First, stop publishing everything. Quantity no longer compensates for quality — on the contrary, it can harm the overall perception of your site. Each page must provide real differentiated value, otherwise it dilutes your signal.

Then focus efforts on strategic pages: solid internal linking, clear engagement signals, in-depth content regularly updated. You must prove to Google that these pages deserve their place in the index.

What mistakes should you avoid at all costs?

Do not leave thousands of orphaned or low-quality pages lingering in your architecture. Google may read this as a sign of low overall site value. Clean up, merge or redirect them, or accept that not everything will be indexed.

Also avoid trying to force indexation through mass submissions in Search Console. If Google judges that a page does not deserve its place, insisting will not change that verdict and may reinforce the negative signal. Improve the page first, then request its indexation.
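Finding orphaned pages usually means comparing the URLs you want indexed with the internal links a crawler actually found. Here is a minimal sketch under two assumptions: you have the sitemap URLs as a list, and you have a crawl export converted into a dict that maps each page to the internal URLs it links to. The data shapes and the `find_orphans` name are illustrative and do not match any specific crawler's format. URLs must be normalised the same way on both sides.

```python
from collections import Counter


def find_orphans(sitemap_urls, internal_links):
    """Return sitemap URLs that no other crawled page links to."""
    inbound = Counter()
    for source, targets in internal_links.items():
        for target in set(targets):
            if target != source:  # a page linking to itself does not rescue it
                inbound[target] += 1
    # Counter returns 0 for URLs never seen as a link target.
    return sorted(url for url in sitemap_urls if inbound[url] == 0)
```

Each URL returned is a candidate to either link from a relevant hub page or merge and redirect.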

How can you verify that your site is aligned with this selection logic?

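Regularly check the actual index with site:mydomain.com and compare it with the submitted sitemap. Keep in mind that site: results are only an approximation; the Page indexing report in Search Console gives a more reliable count. Large discrepancies should trigger an investigation: why is Google refusing these pages?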
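The sitemap-versus-index comparison can be scripted. This is a minimal sketch. It assumes a standard `urlset` sitemap (sitemap index files are not handled) and a set of indexed URLs obtained separately, for instance from an export of the Search Console Page indexing report. `sitemap_urls` and `indexation_gap` are hypothetical helper names.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_urls(sitemap_xml):
    """Extract the <loc> values from a standard urlset sitemap."""
    root = ET.fromstring(sitemap_xml)
    return {loc.text.strip() for loc in root.findall("sm:url/sm:loc", SITEMAP_NS) if loc.text}


def indexation_gap(submitted, indexed):
    """Report submitted URLs missing from the index and the share that is indexed."""
    missing = sorted(submitted - indexed)
    ratio = len(submitted & indexed) / len(submitted) if submitted else 0.0
    return {"missing": missing, "indexed_ratio": ratio}
```

Tracking `indexed_ratio` over time matters more than any single value. A sudden drop is the signal worth investigating.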

Also monitor engagement signals: click-through rate in Search Console, and engagement time and bounce rate in Google Analytics. If a page generates little interest even when indexed, it is a candidate for deindexation.
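One way to surface such pages is to flag URLs that get impressions but almost no clicks. This minimal sketch assumes a CSV export with columns named `Page`, `Clicks` and `Impressions`; adjust the names to your actual export. The thresholds are illustrative defaults, not values published by Google, and `low_ctr_pages` is a hypothetical name.

```python
import csv
import io


def low_ctr_pages(performance_csv, min_impressions=100, max_ctr=0.01):
    """Return (page, ctr) pairs for pages with enough impressions but a very low CTR."""
    flagged = []
    for row in csv.DictReader(io.StringIO(performance_csv)):
        impressions = int(row["Impressions"])
        clicks = int(row["Clicks"])
        if impressions < min_impressions:
            continue  # too little data to judge this page
        ctr = clicks / impressions
        if ctr <= max_ctr:
            flagged.append((row["Page"], round(ctr, 4)))
    # Worst performers first.
    return sorted(flagged, key=lambda item: item[1])
```

A low CTR alone does not prove a page lacks value. Treat the output as a review list, not a deletion list.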

- Audit the entire site to identify low-value or duplicate pages

- Prioritize strategic content with reinforced internal linking

- Improve engagement signals on key pages (CTR, time spent, navigation depth)

- Clean up or redirect obsolete or underperforming sections

- Only submit truly differentiating pages for indexation

- Regularly monitor the gap between submitted pages and actually indexed pages

- Regularly update existing content to maintain its relevance
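The first item on this checklist, the audit for low-value or duplicate pages, can start with a crude automated pass. This sketch assumes you already have each page's main text keyed by URL. It flags pages under a word-count threshold and groups exact duplicates after normalising case and whitespace. Word count is only a rough proxy for thin content. Near-duplicates would need a different technique, such as shingling or SimHash. `audit_pages` and the 300-word default are hypothetical choices.

```python
import hashlib
import re
from collections import defaultdict


def normalise(text):
    """Lowercase and collapse whitespace so trivial formatting differences do not hide duplicates."""
    return re.sub(r"\s+", " ", text).strip().lower()


def audit_pages(pages, min_words=300):
    """pages maps URL -> main text. Returns thin pages and groups of exact duplicates."""
    thin = sorted(url for url, text in pages.items() if len(normalise(text).split()) < min_words)
    by_hash = defaultdict(list)
    for url, text in pages.items():
        digest = hashlib.sha256(normalise(text).encode("utf-8")).hexdigest()
        by_hash[digest].append(url)
    duplicates = sorted(sorted(urls) for urls in by_hash.values() if len(urls) > 1)
    return {"thin": thin, "duplicates": duplicates}
```

Duplicate groups are natural candidates for merging with a redirect. Thin pages need a manual look before anything is removed.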

❓ Frequently Asked Questions

Can all the pages on my site still be indexed?

How can I tell whether my pages are being rejected because of this selection or because of a technical problem?

Can manually submitting my pages via Search Console force their indexation?

Are new sites at a disadvantage under this selection logic?

Should I reduce the number of pages on my site to improve indexation?

🎥 From the same video 17

Other SEO insights extracted from this same Google Search Central video · published on 22/06/2023
