Official statement

Other statements from this video 8 ▾

- □ La balise meta rating est-elle vraiment utile pour signaler du contenu explicite ?

- □ Faut-il vraiment isoler le contenu adulte dans un sous-domaine ou un dossier séparé ?

- □ Faut-il autoriser Googlebot à récupérer vos fichiers vidéo pour améliorer leur visibilité ?

- □ Faut-il vraiment désactiver la vérification d'âge pour Googlebot ?

- □ Comment SafeSearch filtre-t-il vraiment le contenu explicite dans les résultats de recherche ?

- □ Comment vérifier si SafeSearch filtre votre site avec l'opérateur site: ?

- □ Pourquoi Google impose-t-il un délai de 2 à 3 mois avant de réexaminer une classification SafeSearch ?

- □ Les politiques de contenu Google sont-elles vraiment un levier de visibilité organique ?

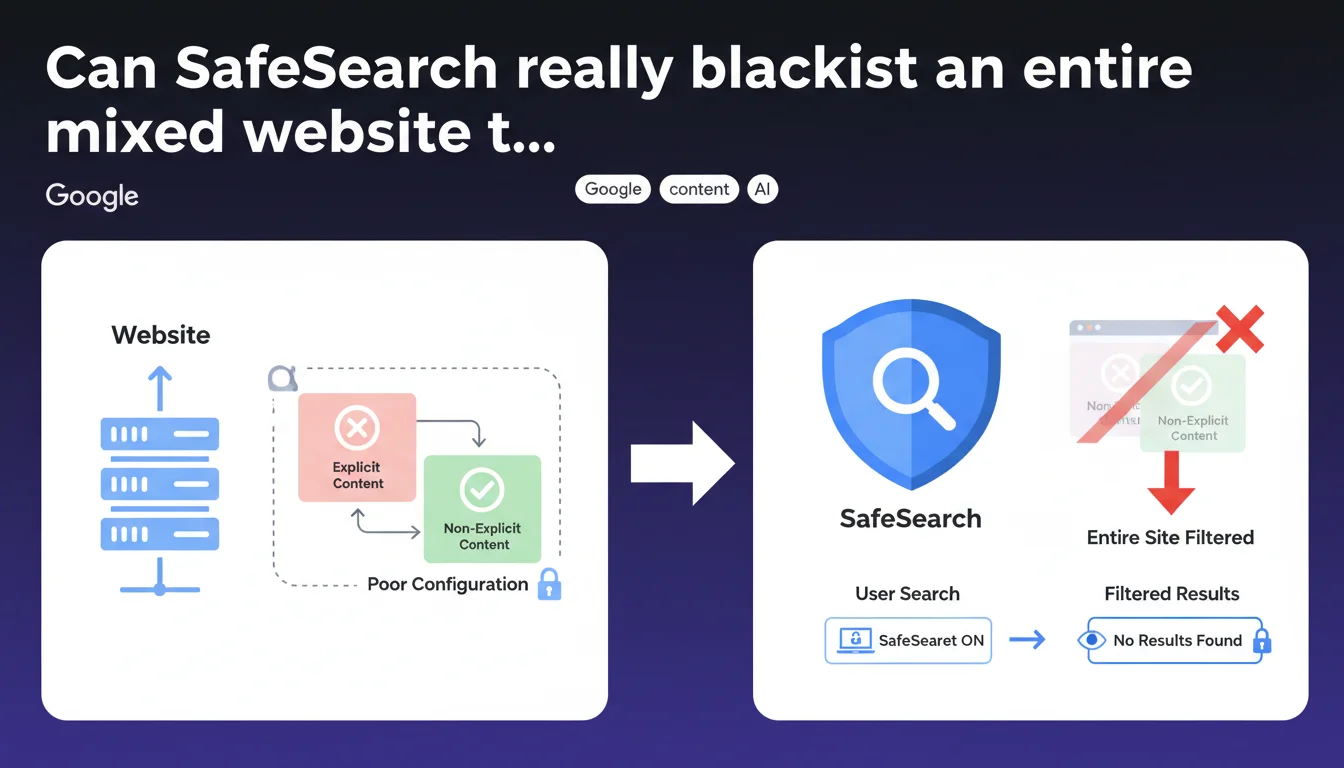

Google confirms that if a website hosts both explicit and non-explicit content without following SafeSearch recommendations, the entire domain can be filtered for users who have SafeSearch enabled, including completely neutral pages. An inadequate technical configuration alone can get your entire site filtered, with no page-by-page distinction.

What you need to understand

What exactly is SafeSearch and how does it work?

SafeSearch is a filter that users can enable in their Google search settings. It removes explicit content (pornography, graphic violence, etc.) from search results.

It is commonly active in professional, educational, and family environments, where network administrators or parents may enforce it. For a mixed editorial or e-commerce site, ignoring SafeSearch means accepting the loss of a significant portion of your potential audience.

Why would Google filter an entire site rather than page by page?

Google falls back on domain-level logic when technical signals are absent or inconsistent. If the site does not implement the recommended tags and mechanisms that distinguish explicit content from neutral content, the algorithms apply a precautionary principle.

In practice, rather than risk showing a minor inappropriate content because a page was miscategorized, Google switches the entire site to filtered mode. It's brutal, but consistent with Google's risk-averse approach to sensitive content.

Which sites are really affected by this rule?

All websites that mix explicit and general-audience content without a clear technical separation: video streaming platforms, community forums, dating sites, art galleries, lingerie or adult product stores that also sell regular products, and user-generated content aggregators.

Even a lifestyle blog occasionally hosting articles about sexuality can be affected if classification tags are not rigorously implemented.

- Domain-level filtering: if configuration is absent or faulty, the entire site can disappear for SafeSearch-active users

- No automatic granularity: Google doesn't guess the intent of each page without explicit technical signals

- Audience impact: potential traffic loss of roughly 30 to 60% in our experience, depending on the target demographic

- Mandatory prevention: implementing SafeSearch recommendations is not optional for mixed sites

SEO Expert opinion

Is this statement consistent with real-world observations?

Completely. I've seen several lifestyle and e-commerce sites lose 40% of their traffic overnight after a Google crawl detected untagged explicit content. The filter kicks in without warning and without any message in Search Console.

What's frustrating: Google never clearly communicates that a site is filtered for this reason. SEO teams discover the problem by analyzing traffic curves and manually testing with SafeSearch enabled. [To verify]: no official documentation details tolerance thresholds or the reassessment timeline after correction.

What nuances should be applied to this rule?

Google speaks of "SafeSearch recommendations" without ever publishing an exhaustive, up-to-date checklist. The official documentation refers to meta tags (rating, content-rating) and to the possibility of manually submitting pages via a form, but that form seems abandoned or at least poorly maintained.

In practice, combining structured data (isFamilyFriendly: false), classic meta tags, and above all a clear URL separation (subdomain or dedicated directory) improves filtering granularity. But nothing is 100% guaranteed.

In what cases does this rule not really apply?

If your site is 100% explicit content (a pure adult site), SafeSearch filters it in its entirety anyway, which is not a problem since your target audience has the filter turned off. No gray area.

Likewise, a 100% family-friendly site with no borderline content has nothing to fear. The real danger concerns only poorly structured hybrid sites, where technical ambiguity creates an over-filtering risk.

Practical impact and recommendations

What concrete steps should you take to avoid global filtering?

First action: audit your entire site to identify pages containing explicit or potentially sensitive content. This includes images, videos, text, and user-generated comments. An SEO crawler configured to detect sensitive keywords can help, but a human eye remains essential.
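
As a first pass before the human review, a keyword scan can triage pages. Below is a minimal Python sketch using only the standard library; the term list, the threshold, and the function names are hypothetical placeholders, and keyword matching will miss images, videos, and context that only a human reviewer catches.

```python
import re
from html.parser import HTMLParser

# Hypothetical starter list: build your own per language and niche.
SENSITIVE_TERMS = {"explicit", "nude", "nsfw", "xxx"}


class TextExtractor(HTMLParser):
    """Collects page text, skipping the contents of <script> and <style>."""

    def __init__(self):
        super().__init__()
        self._skip = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip:
            self._skip -= 1

    def handle_data(self, data):
        if not self._skip:
            self.chunks.append(data)


def sensitive_hits(html: str, terms=SENSITIVE_TERMS) -> dict[str, int]:
    """Count whole-word, case-insensitive occurrences of each term in the text."""
    parser = TextExtractor()
    parser.feed(html)
    text = " ".join(parser.chunks).lower()
    hits = {}
    for term in terms:
        count = len(re.findall(rf"\b{re.escape(term)}\b", text))
        if count:
            hits[term] = count
    return hits


def needs_review(html: str, threshold: int = 1) -> bool:
    """Flag a page for human review when the total hit count reaches the threshold."""
    return sum(sensitive_hits(html).values()) >= threshold
```

For example, sensitive_hits("<p>NSFW gallery</p><script>var nsfw=1</script>") counts only the visible occurrence, because script contents are skipped.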

Next, implement the appropriate technical tags. On each explicit page, add <meta name="rating" content="adult"> and the schema.org property "isFamilyFriendly": false in JSON-LD. Verify that these tags are crawlable: the pages must not be blocked by robots.txt, and the tags should be present in the server-rendered HTML rather than injected late by JavaScript, which Googlebot may not process reliably.
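
Generating both tags from the same template helper keeps them consistent. This is a minimal sketch, assuming a Python-based templating step; the function name and the choice of the schema.org WebPage type are illustrative assumptions, not a Google-mandated format.

```python
import json


def explicit_page_head(title: str, url: str) -> str:
    """Return a <head> fragment for a page that hosts explicit content.

    Emits the rating meta tag plus a schema.org WebPage object with
    isFamilyFriendly set to false, serialized as JSON-LD.
    """
    data = {
        "@context": "https://schema.org",
        "@type": "WebPage",
        "name": title,
        "url": url,
        "isFamilyFriendly": False,
    }
    # json.dumps produces valid JSON; escaping "</" prevents a value
    # such as a title from closing the <script> element early.
    payload = json.dumps(data, ensure_ascii=False).replace("</", "<\\/")
    return (
        '<meta name="rating" content="adult">\n'
        f'<script type="application/ld+json">{payload}</script>'
    )
```

Call it only for pages your audit classified as explicit, and render its output server-side; neutral pages get neither tag.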

What mistakes should you absolutely avoid?

Never mix explicit and neutral content in the same directory structure without a technical distinction. Avoid site-wide tags (for example, a rating meta tag hard-coded in a shared template) that would mark your entire domain as adult: that is counterproductive if you also host general-audience content.

Another common pitfall: relying on SafeSearch's image classification alone while leaving text content untagged. Google analyzes the overall context of a page, not just its media, so a very explicit product description is enough to trigger the filter.
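
A simple consistency check catches both structural mistakes: a template that leaks the adult rating onto neutral pages, and explicit pages living outside the dedicated directory. This sketch assumes you already have each page's HTML and the classification from your audit; the /adult/ prefix and the function names are hypothetical.

```python
from html.parser import HTMLParser


class RatingParser(HTMLParser):
    """Captures the value of <meta name="rating" content="..."> if present."""

    def __init__(self):
        super().__init__()
        self.rating = None

    def handle_starttag(self, tag, attrs):
        if tag == "meta":
            attr = {k: (v or "") for k, v in attrs}
            if attr.get("name", "").lower() == "rating":
                self.rating = attr.get("content", "").strip().lower()


def rating_of(html: str):
    parser = RatingParser()
    parser.feed(html)
    return parser.rating


def tagging_problems(pages: dict[str, tuple[bool, str]],
                     adult_prefix: str = "/adult/") -> list[str]:
    """pages maps URL path -> (is_explicit, html); returns readable issues."""
    issues = []
    for path, (is_explicit, html) in pages.items():
        tagged_adult = rating_of(html) == "adult"
        if is_explicit and not tagged_adult:
            issues.append(f"{path}: explicit but missing rating=adult")
        if not is_explicit and tagged_adult:
            issues.append(f"{path}: neutral page tagged adult (template leak?)")
        if is_explicit and not path.startswith(adult_prefix):
            issues.append(f"{path}: explicit page outside {adult_prefix}")
    return issues
```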

How can you verify your site is properly configured?

Manually test your sensitive URLs: enable SafeSearch in your Google search settings, then run targeted queries (site:yourdomain.com plus a specific keyword). If explicit pages still appear with SafeSearch active, your tagging is insufficient. Conversely, if neutral pages disappear as well, the whole domain may be filtered.
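
To make these checks repeatable, you can generate paired query URLs with SafeSearch off and on for each keyword. The sketch assumes Google still honours the safe=off and safe=active URL parameters; treat that as an assumption to verify, since a SafeSearch setting locked by a network administrator or parent overrides them. The function name is hypothetical, and the URLs are meant to be opened in a browser, not scraped.

```python
from urllib.parse import urlencode


def safesearch_test_urls(domain: str, keywords: list[str]) -> list[tuple[str, str]]:
    """Build (SafeSearch off, SafeSearch on) Google search URLs per keyword.

    Assumption: the safe=off / safe=active query parameters are honoured.
    """
    base = "https://www.google.com/search?"
    pairs = []
    for kw in keywords:
        q = f"site:{domain} {kw}".strip()
        off = base + urlencode({"q": q, "safe": "off"})
        on = base + urlencode({"q": q, "safe": "active"})
        pairs.append((off, on))
    return pairs
```

For each pair, explicit pages should vanish from the SafeSearch-on results while neutral pages remain visible in both.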

Also use Google Search Console to monitor sudden fluctuations in clicks/impressions. An abrupt drop on certain queries may signal recent filtering.
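
To spot such a drop without eyeballing charts, you can run a simple check on a daily clicks series, for example one exported from the Search Console Performance report for a given query or page. The 7-day and 28-day windows, the 30% threshold, and the function name are arbitrary starting values chosen for illustration, not Google guidance.

```python
from statistics import mean


def abrupt_drop(daily_clicks: list[int], recent: int = 7, baseline: int = 28,
                threshold: float = 0.3) -> bool:
    """Return True if the mean of the last `recent` days is at least
    `threshold` (as a fraction) below the mean of the preceding `baseline` days.
    """
    if len(daily_clicks) < recent + baseline:
        raise ValueError("not enough history")
    before = mean(daily_clicks[-(recent + baseline):-recent])
    after = mean(daily_clicks[-recent:])
    if before == 0:
        return False  # no baseline traffic, so a drop is meaningless
    return (before - after) / before >= threshold
```

A flagged drop is only a prompt to run the manual SafeSearch test; seasonality and ranking changes produce the same signal.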

- Identify and catalog all pages containing explicit or sensitive content

- Implement meta rating tags and schema.org isFamilyFriendly granularly

- Physically separate adult content from neutral content (subdomain or dedicated directory if large volume)

- Verify tag crawlability (test with Google Search Console and an external crawler)

- Manually test with SafeSearch enabled on a representative sample of URLs

- Monitor traffic variations in GSC to detect unforeseen filtering

- Document the configuration to facilitate future audits and content updates
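
For the crawlability item of the checklist, Python's standard robots.txt parser gives a quick first check that Googlebot may fetch the pages carrying your tags. A minimal sketch; the function name and example URLs are hypothetical.

```python
from urllib.robotparser import RobotFileParser


def blocked_for_googlebot(robots_txt: str, urls: list[str]) -> list[str]:
    """Return the URLs that robots.txt forbids Googlebot from fetching.

    A blocked page cannot have its rating meta tag or JSON-LD read.
    """
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [u for u in urls if not parser.can_fetch("Googlebot", u)]
```

urllib.robotparser matches rules by simple path prefix in file order and does not fully implement Google's wildcard and longest-match rules, so confirm edge cases in Search Console.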

❓ Frequently Asked Questions

Is SafeSearch enabled by default for all users?

Can an entire site really disappear from Google because of SafeSearch?

Exactly which technical tags does Google recommend?

If I fix my tagging, how long before Google readjusts the filtering?

Is a separate subdomain enough to isolate explicit content?

Source: Google Search Central video, published on 01/11/2023.
