Official statement

Other statements from this video (20)

- How does Google actually index iframe content?

- Should large sites really favor a hierarchical structure?

- Blocking crawling via robots.txt: a miracle cure for toxic links?

- Should you translate your URLs to improve international SEO?

- Why do site migrations fail so often despite SEO preparation?

- Are double slashes in URLs a problem for SEO?

- Why does Google penalize videos outside the viewport, and how can you fix it?

- How do you effectively transfer your images' rankings to new URLs?

- Should you really worry about 404 errors on your site?

- HTTP 200 on a 404 page: soft 404 or cloaking?

- Should you force indexing of your sitemap file in Google?

- Should you worry if Googlebot crawls your API endpoints and generates 404s?

- Is web accessibility really a Google ranking factor, or a smokescreen?

- Is buying links still really penalized by Google?

- Should you still report bad backlinks to Google?

- Why does blocking crawling via robots.txt prevent Google from seeing your noindex directive?

- Why does Google reject the idea of a magic formula for ranking?

- Why does Google display your special characters incorrectly in its results?

- Google Analytics and Search Console: why do these data discrepancies cause problems?

- Should you really aim for perfect SEO?

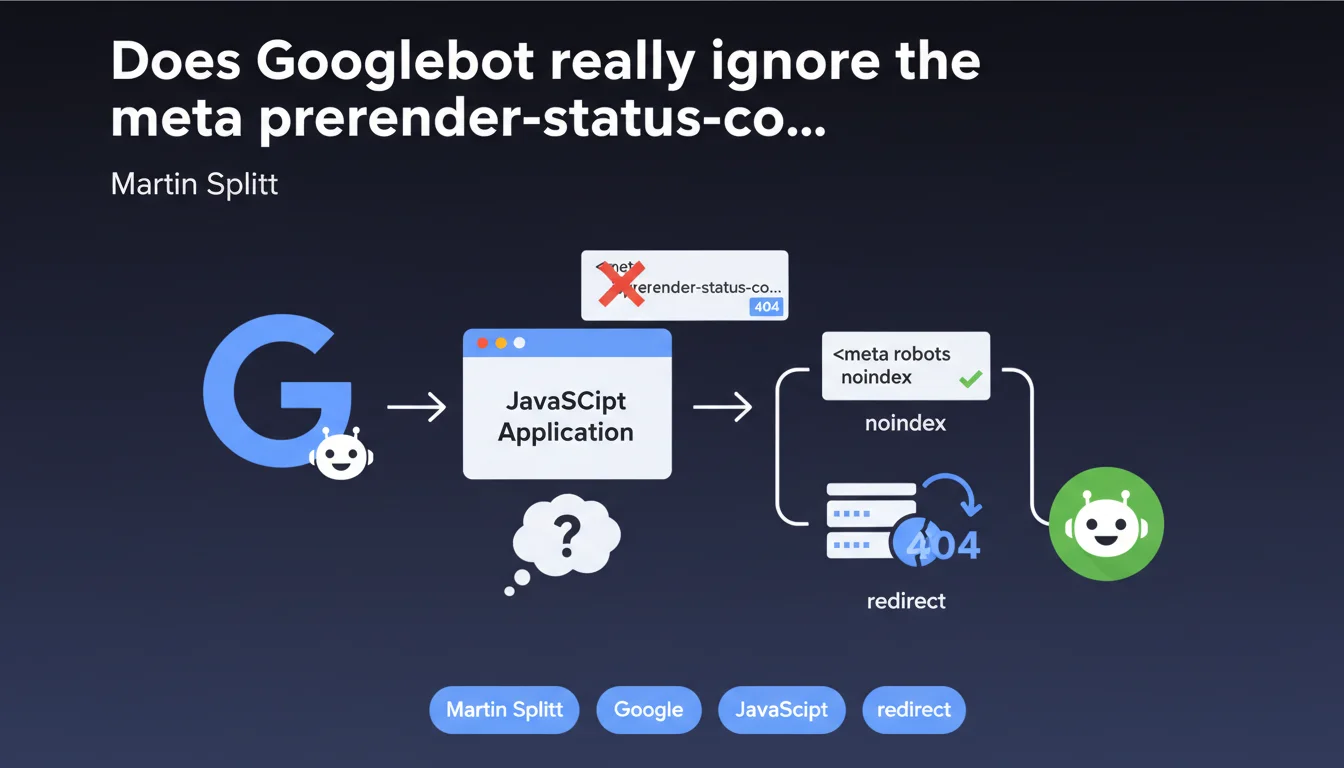

Googlebot does not process the <meta name="prerender-status-code" content="404"> tag that some JavaScript applications use to simulate HTTP status codes. Result: your client-side rendered 404 pages are still served with a 200 status, so they risk being treated as soft 404s, or even indexed, even though the content doesn't exist. The solution? Return a real HTTP 404 status from the server, or add a noindex directive via meta robots.

What you need to understand

What exactly is the meta prerender-status-code tag?

This tag was designed for pre-rendering services, chiefly Prerender.io (Rendertron reads a similar tag of its own, render:status_code). In a single-page application (SPA), the server typically answers every route with 200 OK and the page is built in the browser; the tag lets the application tell the pre-rendering service which HTTP status code it should return instead.

The original idea? Tell the service "this page is actually a 404, treat it as such". Some developers believed Googlebot would read this tag directly, without going through an intermediary service. Spoiler: it doesn't.
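
For contrast, here is roughly what the intermediary does with the tag: it renders the page, reads <meta name="prerender-status-code" content="404">, and uses that value as the status of the response it sends to the crawler. The TypeScript below is a simplified sketch, not Prerender.io's actual code; the function name is hypothetical and the regex assumes the name attribute comes before content. Googlebot fetching the SPA directly never goes through this translation step.

```typescript
// Simplified model of what a pre-rendering proxy does with the tag.
// Hypothetical helper; not taken from any real pre-rendering service.
// Assumes name="prerender-status-code" appears before content="...".
const PRERENDER_STATUS_META =
  /<meta\s+name=["']prerender-status-code["']\s+content=["'](\d{3})["'][^>]*>/i;

// Returns the status code the proxy would send to the crawler.
function statusFromPrerenderMeta(renderedHtml: string): number {
  const code = PRERENDER_STATUS_META.exec(renderedHtml)?.[1];
  // No tag: the proxy keeps the default 200 OK.
  return code !== undefined ? Number(code) : 200;
}

const notFoundView =
  '<html><head><meta name="prerender-status-code" content="404"></head>' +
  "<body><h1>Page not found</h1></body></html>";

// Via the proxy: the crawler receives a real 404.
console.log(statusFromPrerenderMeta(notFoundView)); // 404
// Without a proxy, the SPA server answers 200 for this same URL,
// and Googlebot does not read the meta tag to correct it.
```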

So why does Googlebot ignore it?

Googlebot renders JavaScript and analyzes the final DOM, so content generated client-side is treated the same way as content generated server-side. But it does not process custom meta directives invented for third-party services.

The prerender-status-code tag is not part of any HTML standard, and search engines do not recognize it. Google expects either a real HTTP 404 status sent by the server or an explicit directive via meta robots. Everything else? Just noise.

What are the concrete risks of this misunderstanding?

If your SPA dynamically generates a 404 page with this tag without sending a real HTTP 404 code, Google sees an "empty" or thin page that responds with a 200. Result: soft 404.

- Unwanted indexing of error pages

- Wasted crawl budget on non-existent URLs

- Degraded quality signals if the volume of soft 404s is significant

- Confusion in Search Console with recurring soft 404 warnings

SEO Expert opinion

Is this statement consistent with real-world practices?

Yes, absolutely. I've seen dozens of misconfigured SPAs where the server systematically returns a 200 OK even for broken URLs. The developer thinks their meta tag "does the job". Except Google doesn't care.

The problem is that some monitoring tools (like Screaming Frog in JavaScript mode) may not detect this type of faulty configuration if they don't precisely simulate Googlebot's behavior. Result: you discover the problem months later, when Search Console fills up with soft 404s.

In which cases could this rule cause problems?

If you use an intermediary pre-rendering service that actually converts this tag into a real HTTP 404 code before serving the content to Googlebot, it works. But that's one more external dependency, with added cost and latency.

For small teams or internal projects without a large infrastructure budget, relying on a third-party service to "translate" your status codes is architecturally flawed. Better to fix the problem at its source: server-side, or via in-house dynamic rendering.

Should Google have been clearer about this earlier?

Honestly, yes. This tag has been floating around in the documentation of Prerender.io and other pre-rendering solutions for years. Google never officially said "we support this", but its silence created a gray area that many interpreted their own way.

Martin Splitt is right to finally clarify, but it deserved a detailed article or an explicit update to the official documentation. [To verify]: Has Google planned to properly document all the meta tags it actively ignores? That would help.

Practical impact and recommendations

What should you concretely do if you're using this tag?

First option: configure your server to return a real HTTP 404 code when the resource doesn't exist. This is the cleanest solution. It often involves implementing server-side rendering (SSR) or a server-side routing system that detects invalid URLs.
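
A minimal sketch of that server-side check in TypeScript, assuming a hypothetical SPA with two kinds of routes (the home page and /products/:id) and an in-memory set of existing product IDs standing in for a real database or API lookup. The server still sends the same SPA shell so the client can render its error view; only the status code changes.

```typescript
// Hypothetical stand-in for the data source the SPA itself uses.
const existingProductIds = new Set<string>(["42", "1337"]);

// The same client-side app shell is served for every route;
// the browser-side router decides what to display.
const SPA_SHELL =
  '<!doctype html><html><head></head><body><div id="app"></div>' +
  '<script src="/app.js"></script></body></html>';

interface ServerResponsePlan {
  status: 200 | 404;
  body: string;
}

// Mirrors the client-side routes on the server so unknown URLs get a real 404.
function planResponse(pathname: string): ServerResponsePlan {
  if (pathname === "/") {
    return { status: 200, body: SPA_SHELL };
  }
  const productId = /^\/products\/([^/]+)$/.exec(pathname)?.[1];
  if (productId !== undefined && existingProductIds.has(productId)) {
    return { status: 200, body: SPA_SHELL };
  }
  // Unknown route or missing product: same shell, but an honest status code.
  return { status: 404, body: SPA_SHELL };
}

console.log(planResponse("/products/42").status); // 200
console.log(planResponse("/products/999").status); // 404
```

In a Node.js http handler, you would then set res.statusCode = plan.status before calling res.end(plan.body). The main maintenance cost of this approach is keeping the server's route table in sync with the client-side router.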

Second option: have your SPA add a meta robots noindex tag to the DOM of its 404 views. Google won't index those pages, even if the server responds with 200. Warning: this doesn't fully solve the wasted crawl budget problem, since Google still has to crawl and render those URLs to see the tag, but it prevents unwanted indexing.
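
Google's JavaScript SEO documentation describes this pattern for SPAs: once the app determines that the requested resource doesn't exist, it injects the robots meta tag with JavaScript. Below is a TypeScript sketch with hypothetical names. The DOM is reached through a minimal, hypothetical interface so the logic can be exercised outside a browser; in the app, it would be backed by document.createElement and document.head.appendChild.

```typescript
// Only the DOM operations this sketch needs (hypothetical abstraction).
interface MetaLike {
  name: string;
  content: string;
}
interface DocLike {
  createElement(tagName: "meta"): MetaLike;
  appendToHead(node: MetaLike): void;
}

// Injects <meta name="robots" content="noindex"> into the document head.
function addNoindex(doc: DocLike): MetaLike {
  const meta = doc.createElement("meta");
  meta.name = "robots";
  meta.content = "noindex";
  doc.appendToHead(meta);
  return meta;
}

// Hypothetical view logic: `product` is whatever the SPA's data layer
// returned for the requested URL, or undefined if it does not exist.
function renderProductView(
  product: { title: string } | undefined,
  doc: DocLike,
): string {
  if (product !== undefined) {
    return `Product: ${product.title}`;
  }
  addNoindex(doc); // error view: keep it out of the index
  return "Page not found";
}
```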

How do you verify your site is properly configured?

Test your non-existent URLs with the URL Inspection tool in Search Console. Check the HTTP status code returned and the rendered HTML. If you see a 200 with an empty or error page and no noindex, you have a problem.
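
The same check can be scripted over a crawl export. A rough TypeScript sketch, assuming each row carries the URL, the HTTP status and the rendered HTML; all names are hypothetical, and the "error page" heuristics (an error phrase, or fewer than 200 characters of visible text) are assumptions to tune to your own templates. Regex-based HTML inspection is a shortcut; a thorough audit would use an HTML parser.

```typescript
interface CrawledPage {
  url: string;
  status: number;
  renderedHtml: string; // HTML after JavaScript rendering
}

// Matches <meta name="robots" content="...noindex..."> (name before content only).
const ROBOTS_NOINDEX =
  /<meta\s+name=["']robots["']\s+content=["'][^"']*\bnoindex\b[^"']*["'][^>]*>/i;

// Hypothetical "error page" heuristics; adapt them to your own templates.
const ERROR_PHRASE = /\b(page not found|404)\b/i;
const MIN_VISIBLE_CHARS = 200;

// Crude text extraction: drops scripts/styles and tags, collapses whitespace.
function visibleText(html: string): string {
  return html
    .replace(/<(script|style)\b[\s\S]*?<\/\1>/gi, " ")
    .replace(/<[^>]+>/g, " ")
    .replace(/\s+/g, " ")
    .trim();
}

// A 200 that looks empty or like an error, without noindex: likely soft 404.
function isSoft404Candidate(page: CrawledPage): boolean {
  if (page.status !== 200) return false;
  if (ROBOTS_NOINDEX.test(page.renderedHtml)) return false;
  const text = visibleText(page.renderedHtml);
  return ERROR_PHRASE.test(text) || text.length < MIN_VISIBLE_CHARS;
}

// URLs to prioritize when fixing status codes.
function urlsToFix(pages: CrawledPage[]): string[] {
  return pages.filter(isSoft404Candidate).map((page) => page.url);
}
```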

Crawl your site with a tool that renders JavaScript (for example Screaming Frog with JavaScript rendering enabled, or Oncrawl with JS enabled). Identify every page that returns 200 but is actually an error page, and prioritize fixing them.

- Audit all potential 404 URLs via Search Console and a JavaScript crawler

- Verify your server returns a real HTTP 404 code for non-existent pages

- If SSR/dynamic rendering is impossible: add meta robots noindex on error pages

- Remove any reference to the meta prerender-status-code tag if it's not coupled with a functional pre-rendering service

- Regularly monitor soft 404s in Search Console

Managing HTTP status codes in SPAs is a technical topic that touches on frontend, backend, and SEO architecture. If your dev team lacks the expertise or time to properly implement SSR or dynamic rendering, support from a specialized SEO agency can save months and prevent costly mistakes. An in-depth technical audit often quickly unblocks this type of configuration issue.

❓ Frequently Asked Questions

Can you use JavaScript code to send a 404 to Google?

Does a pre-rendering service like Prerender.io solve the problem?

What is the difference between a soft 404 and a real 404 for Google?

Is noindex enough to replace a server-side 404 code?

Should older pages that use this tag be fixed?

From the same video (20)

Other SEO insights extracted from this same Google Search Central video · published on 18/12/2023
