Official statement

What you need to understand

What is the prerender-status-code tag and why was it created?

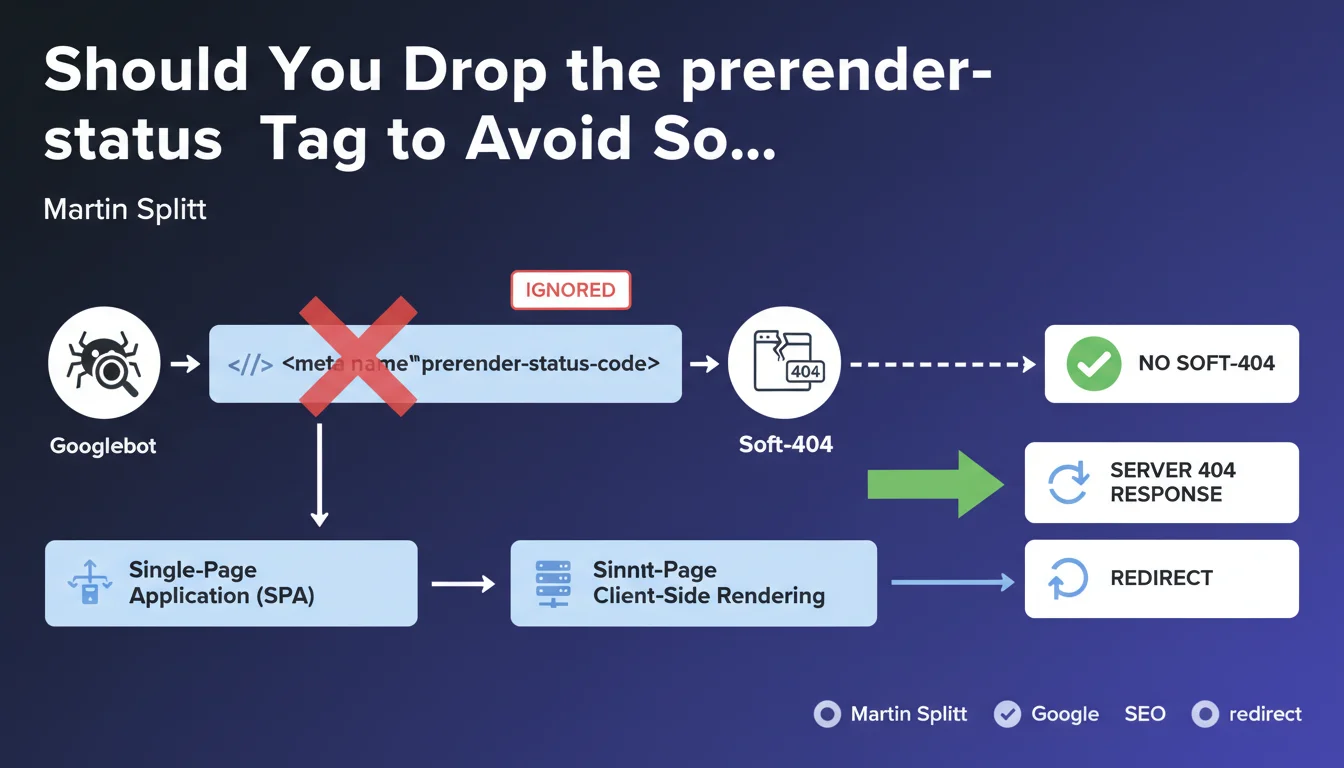

The prerender-status-code meta tag was developed to solve a specific problem with single-page applications (SPAs). These applications, built with React, Vue.js, or Angular, generate content client-side via JavaScript.

When a page doesn't exist in an SPA, the server often returns 200 OK even though the content is missing, because every URL is answered with the same application shell. The tag was meant to tell crawlers the page's true status, thereby avoiding the soft-404s that pollute Google's index.
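To make the mechanism concrete, here is a minimal Python sketch that looks for the tag in a page's HTML. The sample markup and the helper name `find_prerender_status` are assumptions made for illustration. The point is that the "404" lives only in the markup, while the HTTP response itself can still be 200 OK.

```python
from html.parser import HTMLParser
from typing import Optional


class PrerenderStatusFinder(HTMLParser):
    """Collects the content of <meta name="prerender-status-code"> if present."""

    def __init__(self) -> None:
        super().__init__()
        self.status: Optional[str] = None

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attributes = dict(attrs)
        # Attribute values can be None when the attribute has no value.
        if (attributes.get("name") or "").lower() == "prerender-status-code":
            self.status = attributes.get("content")


def find_prerender_status(html: str) -> Optional[str]:
    """Return the declared status (e.g. "404"), or None if the tag is absent."""
    finder = PrerenderStatusFinder()
    finder.feed(html)
    return finder.status


# Hypothetical "not found" view of an SPA: only the markup claims a 404.
sample = (
    '<html><head><meta name="prerender-status-code" content="404"></head>'
    "<body><div id='root'></div></body></html>"
)
print(find_prerender_status(sample))  # prints: 404
```

A helper like this can also be used to locate leftover tags in templates before removing them.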

Why does Google ignore this meta tag?

Google has clearly stated that Googlebot does not take this tag into account when crawling and indexing. This is consistent with Google's preference for authentic server signals over metadata added after the fact.

The search engine expects native HTTP status codes sent directly by the server. Any attempt to work around this via meta tags is considered unreliable and therefore ignored.

What is a soft-404 and why is it problematic?

A soft-404 occurs when a non-existent or empty page returns a 200 (success) code instead of a 404 (not found). Google detects that the page has no value but receives a contradictory signal from the server.

The consequences include a waste of crawl budget, index pollution with useless pages, and potentially a degradation of the site's perceived quality. Google may then reduce the crawl frequency of your domain.
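The definition above can be turned into a rough detection heuristic. The sketch below is only an approximation: the phrase list and the length threshold are assumptions chosen for illustration, and Google does not publish the rules it actually uses to classify soft-404s.

```python
import re

# Assumed phrases and threshold, for illustration only.
NOT_FOUND_PHRASES = ("page not found", "no longer available", "does not exist")
MIN_VISIBLE_CHARS = 200


def visible_text(html: str) -> str:
    """Very rough text extraction: drop scripts, styles and tags."""
    html = re.sub(r"(?is)<(script|style)\b.*?</\1>", " ", html)
    text = re.sub(r"(?s)<[^>]+>", " ", html)
    return re.sub(r"\s+", " ", text).strip()


def looks_like_soft_404(status: int, html: str) -> bool:
    """Flag 200 responses whose body is nearly empty or reads like an error."""
    if status != 200:
        return False  # a real 404 or 410 is not a *soft* 404
    text = visible_text(html).lower()
    if len(text) < MIN_VISIBLE_CHARS:
        return True  # e.g. an empty SPA shell with no rendered content
    return any(phrase in text for phrase in NOT_FOUND_PHRASES)
```

Run against the raw server response, an unrendered SPA shell will often be flagged as empty, which is the contradictory signal described above: a success code attached to a page with nothing in it.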

- Googlebot completely ignores the prerender-status-code meta tag

- Soft-404s harm crawl budget and indexing quality

- Google favors native HTTP codes returned by the server

- This issue mainly concerns client-side JavaScript applications

- The recommended solution involves proper server configuration

SEO Expert opinion

Is this statement consistent with practices observed in the field?

Absolutely. For years, empirical testing has shown that Google places paramount importance on authentic HTTP signals. Attempts at manipulation via meta tags are systematically ignored or devalued.

This position aligns with Google's overall strategy of favoring server-side rendering (SSR) or static generation for sites requiring good organic visibility. Modern frameworks like Next.js or Nuxt.js have largely adopted these approaches.

What important nuances should be added to this recommendation?

The recommendation from Martin Splitt of Google remains general and must be adapted to context. For sites with thousands of dynamic pages, the technical solution can vary significantly depending on the existing architecture.

Several valid approaches exist: full SSR, static pre-rendering, or using dynamic rendering for Googlebot only. Each solution has its advantages and constraints in terms of performance and development cost.

In what specific cases does this issue become critical?

E-commerce sites with thousands of dynamically generated product pages are particularly vulnerable. If the server returns 200 for out-of-stock or deleted products, the index fills with worthless pages.

Content platforms like blogs, news sites, or marketplaces must also be vigilant. Poor management of obsolete URLs can quickly create hundreds of soft-404s that dilute the domain's authority.

Practical impact and recommendations

What should you do concretely to solve this problem?

The solution recommended by Google is to implement a server-side check that returns a true 404 code when content doesn't exist. For SPAs, this generally requires a layer of server-side rendering.

Technically, you can implement full Server-Side Rendering (SSR), use Static Site Generation (SSG) for pages known in advance, or configure middleware that checks for resource existence before rendering.
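The middleware option can be sketched as a small WSGI application in Python. Everything named here (`KNOWN_PRODUCTS`, `resource_exists`, the `/products/` URL scheme) is hypothetical, and a real site would query its database or CMS instead of a hard-coded set. The idea is simply that the existence check happens before the SPA shell is sent, so the status code is decided by the server.

```python
from typing import Callable, Iterable

# Hypothetical catalogue standing in for a database lookup.
KNOWN_PRODUCTS = {"blue-mug", "red-mug"}

SPA_SHELL = b"<!doctype html><div id='root'></div><script src='/app.js'></script>"
NOT_FOUND_PAGE = b"<!doctype html><h1>Page not found</h1>"


def resource_exists(path: str) -> bool:
    """Decide server-side whether the URL maps to real content."""
    if path == "/":
        return True
    prefix = "/products/"
    if path.startswith(prefix):
        return path[len(prefix):] in KNOWN_PRODUCTS
    return False


def app(environ: dict, start_response: Callable) -> Iterable[bytes]:
    """Serve the SPA shell only for URLs that exist, otherwise a true 404."""
    path = environ.get("PATH_INFO", "/")
    if resource_exists(path):
        status, body = "200 OK", SPA_SHELL
    else:
        status, body = "404 Not Found", NOT_FOUND_PAGE
    headers = [
        ("Content-Type", "text/html; charset=utf-8"),
        ("Content-Length", str(len(body))),
    ]
    start_response(status, headers)
    return [body]
```

For a local test, the app can be served with the standard library, for example `wsgiref.simple_server.make_server("", 8000, app).serve_forever()`. The same check fits equally well in an SSR framework's routing layer.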

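An intermediate alternative is dynamic rendering: serve pre-rendered HTML to crawlers only while keeping the SPA for users. Google tolerates this as long as both versions carry equivalent content, so that it does not amount to cloaking, but its documentation now describes dynamic rendering as a workaround rather than a long-term solution.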
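As a rough illustration of how dynamic rendering selects a variant, the sketch below routes on the User-Agent header. The token list is an assumption and far from exhaustive, and the function names are hypothetical. Whatever variant is chosen, the content and the HTTP status code should be the same for crawlers and users.

```python
# Assumed, non-exhaustive list of crawler user-agent substrings.
CRAWLER_TOKENS = ("googlebot", "bingbot", "duckduckbot")


def is_crawler(user_agent: str) -> bool:
    """Case-insensitive substring match against known crawler tokens."""
    ua = user_agent.lower()
    return any(token in ua for token in CRAWLER_TOKENS)


def choose_variant(user_agent: str) -> str:
    """Return which variant to serve: 'prerendered' or 'spa'."""
    return "prerendered" if is_crawler(user_agent) else "spa"


googlebot_ua = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
print(choose_variant(googlebot_ua))  # prints: prerendered
```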

What common mistakes should absolutely be avoided?

The most frequent mistake is to ignore the problem on the assumption that the meta tag will suffice. As Google has confirmed, the tag has no effect on Googlebot and leaves the problem intact.

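Another pitfall: returning 404 codes for temporarily unavailable pages. If a product is out of stock but will be restocked, a 404 will eventually drop the page from the index, and its rankings with it. Instead, keep the page active with a clear availability status, or use a 503 code for short interruptions only, since a 503 that persists can also lead to the page being dropped.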
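One way to keep these cases apart is to make the policy explicit in code. The mapping below is an assumed policy, not a rule stated by Google: it takes the "keep the page active" option for out-of-stock products and reserves 503 for short outages. The state names are hypothetical.

```python
from enum import Enum


class ContentState(Enum):
    AVAILABLE = "available"
    OUT_OF_STOCK = "out_of_stock"   # temporarily unavailable, will return
    DISCONTINUED = "discontinued"   # permanently removed
    UNKNOWN_URL = "unknown_url"     # never existed
    MAINTENANCE = "maintenance"     # short, server-wide outage


def status_for(state: ContentState) -> int:
    """Assumed policy: keep temporary gaps indexable, signal permanent ones."""
    if state in (ContentState.AVAILABLE, ContentState.OUT_OF_STOCK):
        return 200  # page stays live; the template shows the stock status
    if state is ContentState.MAINTENANCE:
        return 503  # ideally sent with a Retry-After header
    return 404      # discontinued or unknown: a true "not found"
```

Writing the policy down this way also covers the documentation step: the table of states and codes becomes the reference for how temporarily unavailable pages are handled.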

Finally, avoid redirect chains or JavaScript redirects that aren't always correctly followed by Googlebot. Favor 301/302 redirects at the server level.
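Redirect chains are easy to detect if the redirect rules are available as a simple mapping. The sketch below assumes such a mapping (source path to target path) and uses hypothetical function names. It follows each chain, rejects loops, and produces a flattened map where every source points straight to its final destination.

```python
from typing import Dict, List


def redirect_chain(start: str, redirects: Dict[str, str], max_hops: int = 10) -> List[str]:
    """Return every URL visited from `start` to the final destination."""
    seen = [start]
    current = start
    while current in redirects:
        current = redirects[current]
        if current in seen or len(seen) > max_hops:
            raise ValueError(f"redirect loop or chain too long from {start}")
        seen.append(current)
    return seen


def flatten(redirects: Dict[str, str]) -> Dict[str, str]:
    """Point every source directly at its final target (one hop each)."""
    return {src: redirect_chain(src, redirects)[-1] for src in redirects}


# Hypothetical rules: /old-page takes two hops to reach /new-page.
rules = {"/old-page": "/older-page", "/older-page": "/new-page"}
print(redirect_chain("/old-page", rules))
# prints: ['/old-page', '/older-page', '/new-page']
print(flatten(rules))
# prints: {'/old-page': '/new-page', '/older-page': '/new-page'}
```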

How can you verify that your site properly handles status codes?

Use Google Search Console to identify detected soft-404s. The "Pages" indexing report (formerly "Coverage") lists them under "Soft 404": pages that return 200 but that Google considers empty or worthless.

Test your URLs with the URL Inspection tool to see exactly what Googlebot perceives. Pay particular attention to the HTTP status reported for the fetched page and to the rendered HTML.

- Audit HTTP codes returned for non-existent or deleted pages

- Implement SSR or pre-rendering for SEO-critical pages

- Configure the server to return true 404 codes when appropriate

- Remove any references to the now-useless prerender-status-code tag

- Check Search Console for reported soft-404s

- Test Googlebot rendering with the URL Inspection tool

- Set up continuous monitoring of status codes via monitoring tools

- Document the strategy for managing temporarily unavailable pages
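The audit step in the checklist above can be sketched with the Python standard library. The idea is to request URLs that should not exist (deleted products, random slugs) and list those that fail to answer 404 or 410. The function names and the User-Agent string are assumptions. Note that `urllib` follows redirects, so the status reported is that of the final response, and network failures (`URLError`) are deliberately left unhandled in this sketch.

```python
import urllib.error
import urllib.request
from typing import Callable, Dict, Iterable, List


def fetch_status(url: str, timeout: float = 10.0) -> int:
    """Return the final HTTP status for a URL (redirects are followed)."""
    request = urllib.request.Request(url, headers={"User-Agent": "status-audit-sketch"})
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as error:
        return error.code  # urllib raises on 4xx/5xx; the code is what we want


def audit_missing_urls(
    urls: Iterable[str], fetch: Callable[[str], int] = fetch_status
) -> Dict[str, int]:
    """Map each URL that should not exist to the status it actually returns."""
    return {url: fetch(url) for url in urls}


def suspicious(results: Dict[str, int]) -> List[str]:
    """URLs expected to be missing that do not answer 404 or 410."""
    return [url for url, status in results.items() if status not in (404, 410)]
```

A typical call would be `suspicious(audit_missing_urls(["https://example.com/products/does-not-exist"]))`, where the URL is a placeholder. Passing a custom `fetch` function makes it possible to test the logic without network access or to swap in another HTTP client.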
