Official statement

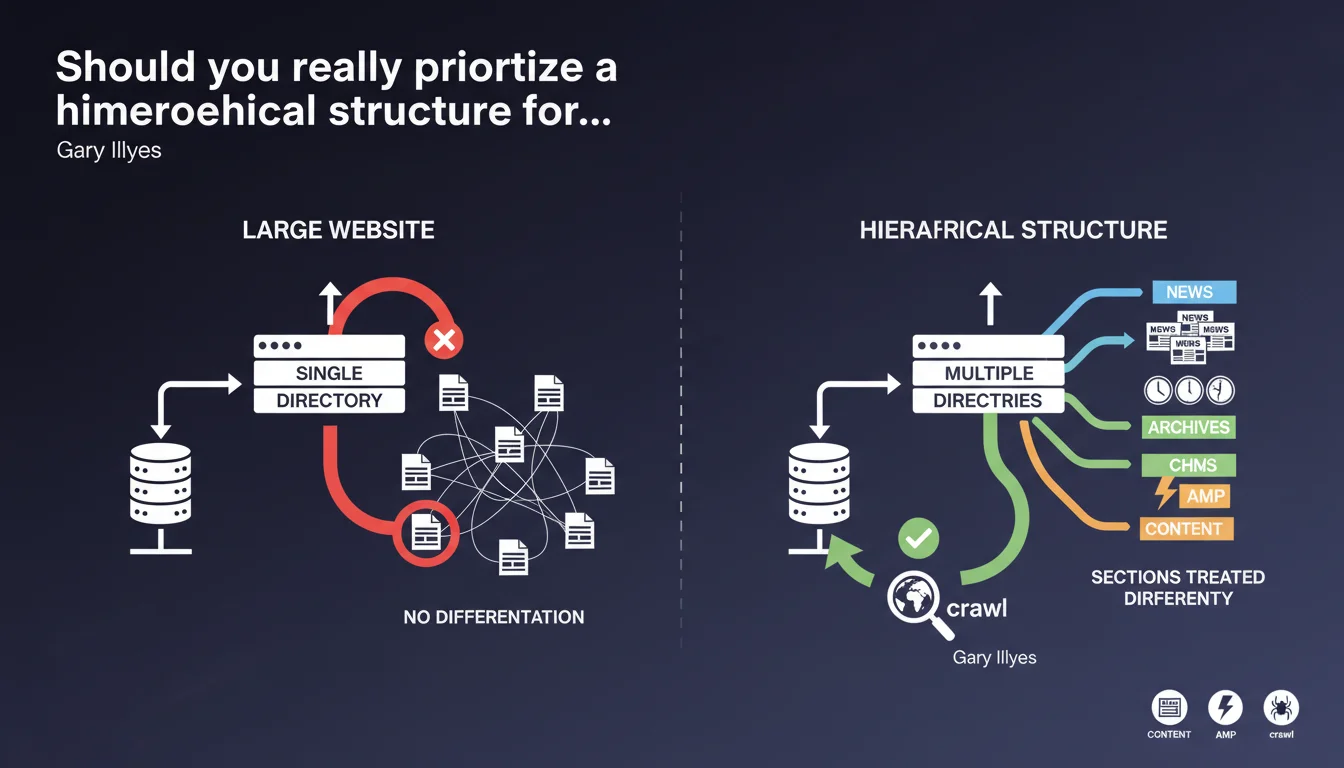

Google explicitly recommends a hierarchical structure for large websites because it allows differentiated crawl treatment by section. Without this organization into distinct directories (e.g., /news/ vs /archives/), it's impossible to optimize crawl frequency by content type.

What you need to understand

Why does Google insist on hierarchical structure for large websites?

Gary Illyes' answer is clear: hierarchical structure provides a crawl control lever that flat structure does not allow. In practical terms, by isolating your content in dedicated directories (/news/, /blog/, /products/), you give structural clues to Googlebot to adapt its crawl strategy.

The example of the 'news' directory is revealing. If your news content is located in /news/, Google can crawl these URLs more frequently than your static archives. If everything is at the same level (flat structure), this differentiation becomes impossible — or at least much more complex to manage through other signals.

What characterizes a flat vs hierarchical structure?

Flat structure: all pages at the same level (example.com/page1, example.com/page2, example.com/page3). No visible logical organization in the URL. Relevant for small websites of 20-50 pages maximum where this distinction has no measurable impact.

Hierarchical structure: organization into directories and subdirectories that reflects the logic of content (example.com/category/subcategory/page). This approach facilitates crawl budget management and thematic understanding by search engines.
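To make the distinction concrete, here is a minimal Python sketch that reads the top-level directory from a URL. The function name `top_level_section` and the sample URLs are illustrative assumptions, not something Google provides; the rule used here (a page directly under the root counts as "flat") is just one reasonable way to draw the line.

```python
from typing import Optional
from urllib.parse import urlparse


def top_level_section(url: str) -> Optional[str]:
    """Return the first directory of a URL, or None if the page sits at the root."""
    path = urlparse(url).path
    segments = [seg for seg in path.split("/") if seg]
    if not segments:
        return None  # homepage
    # A single segment without a trailing slash is a page directly under the root.
    if len(segments) == 1 and not path.endswith("/"):
        return None
    return segments[0]


# Hypothetical sample URLs, mirroring the examples in the text.
for url in [
    "https://example.com/page1",
    "https://example.com/news/2023/launch",
    "https://example.com/category/subcategory/page",
]:
    print(url, "->", top_level_section(url) or "(flat, no section)")
```

Running this over a full URL export gives a quick picture of how much of a site sits at the root versus inside identifiable sections.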

In which cases does this recommendation really apply?

Google explicitly mentions "large websites". Let's be honest: if you manage 100 pages, this issue probably doesn't concern you. The critical threshold is closer to 1000+ pages, or whenever your content types have different lifecycles (news, products, archives, blog, etc.).

E-commerce sites, media outlets, content platforms and multi-section corporate websites are directly concerned. For a 30-page brochure website, this optimization remains anecdotal.

- Hierarchical structure = granular crawl control by section

- Flat structure = no differentiation possible based on architecture

- The critical threshold is around 1000+ pages or multi-type content

- The /news/ example illustrates differentiated crawl frequency optimization

- Without hierarchy, Google must rely solely on other signals (freshness, popularity, etc.)

SEO Expert opinion

Is this statement consistent with real-world observations?

Largely, yes. In practice, Google appears to crawl URLs differently depending on their depth and location. Directories identified as "news" or "blog" tend to benefit from higher crawl frequency, provided the content is actually fresh and regularly updated.

But be careful — and this is where it gets tricky. Creating a /news/ directory is not enough: if you publish one article per month there, Google will adjust its frequency downward. The structure provides the clue, but it's the editorial behavior that validates or invalidates this clue.

What nuances should be added to this recommendation?

Gary Illyes doesn't specify a crucial point: the hierarchy must remain logical and shallow. A structure like /category/subcategory/sub-subcategory/sub-sub-subcategory/page becomes counterproductive. Beyond 3-4 levels of depth, you dilute crawl budget and complicate indexing.
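A depth check like this is easy to automate. The sketch below counts the directory levels in front of the final path segment and flags URLs above a limit. The default of 3 levels is an assumption drawn from the lower end of the 3-4 range above, and `directory_depth` and `too_deep` are hypothetical helper names.

```python
from urllib.parse import urlparse

MAX_LEVELS = 3  # assumption: lower end of the 3-4 level guideline


def directory_depth(url: str) -> int:
    """Count the directories that precede the final path segment."""
    segments = [seg for seg in urlparse(url).path.split("/") if seg]
    return max(len(segments) - 1, 0)


def too_deep(urls, max_levels=MAX_LEVELS):
    """Return the URLs nested under more than max_levels directories."""
    return [url for url in urls if directory_depth(url) > max_levels]


urls = [
    "https://example.com/news/article",
    "https://example.com/category/subcategory/sub-subcategory/sub-sub-subcategory/page",
]
for url in too_deep(urls):
    print("Too deep:", url)
```

Note that this measures URL depth only. Click depth (how many links separate a page from the homepage) is a separate measurement that requires a crawl of the internal link graph.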

Another limitation: this approach assumes your sections are clearly defined. If you have hybrid content (a blog article that is also a product update), structuring becomes more delicate. [To verify] Google has never specified how it handles cross-section content in this context.

In which cases does this rule not apply?

For websites with fewer than 500 pages, the impact remains marginal. Flat structure can even be preferable if it reduces crawl depth — a product accessible in 1 click rather than 3 clicks through a complex hierarchy.

Monolingual sites with high internal PageRank can also afford a flat structure: if each page receives enough juice through linking, Googlebot will crawl them frequently regardless of their position. But let's be clear: this is a minority scenario.

Practical impact and recommendations

What should you do concretely on a large website?

Audit your current architecture. List your content types (news, products, in-depth articles, corporate pages) and verify if they are isolated in distinct directories. If everything is mixed at the same level, you're leaving optimization on the table.

Define a coherent directory strategy: /news/ for daily-updated news content, /blog/ for evergreen content, /products/ for catalog, etc. Each directory should correspond to an editorial reality and homogeneous update frequency.

How can you verify that your structure is effective?

Analyze server logs or Google Search Console to identify crawl frequency by directory. If /news/ is crawled as rarely as /archives/, either your publication rhythm doesn't justify this section, or Google hasn't yet understood its nature.
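For the server-log route, a rough Python sketch follows. It assumes access logs in the common "combined" format and identifies Googlebot by user-agent string only, which can be spoofed (verifying the requesting IP, for example by reverse DNS, is the more reliable check). The regular expression, the function name and the sample log lines are illustrative assumptions.

```python
import re
from collections import Counter

# Request and user-agent fields of a combined-format access log line.
LINE_RE = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) [^"]*" \d{3} \S+ "[^"]*" "(?P<ua>[^"]*)"'
)


def googlebot_hits_by_section(lines):
    """Count requests whose user agent mentions Googlebot, per top-level directory."""
    hits = Counter()
    for line in lines:
        match = LINE_RE.search(line)
        if not match or "Googlebot" not in match.group("ua"):
            continue
        path = match.group("path").split("?", 1)[0]  # drop the query string
        segments = [seg for seg in path.split("/") if seg]
        # Pages directly under the root are grouped under "/".
        section = f"/{segments[0]}/" if len(segments) > 1 else "/"
        hits[section] += 1
    return hits


# Hypothetical log lines for illustration.
GOOGLEBOT_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
sample = [
    f'66.249.66.1 - - [18/Dec/2023:10:00:00 +0000] "GET /news/a HTTP/1.1" 200 512 "-" "{GOOGLEBOT_UA}"',
    f'66.249.66.1 - - [18/Dec/2023:10:05:00 +0000] "GET /archives/2010/b HTTP/1.1" 200 512 "-" "{GOOGLEBOT_UA}"',
]
for section, count in googlebot_hits_by_section(sample).most_common():
    print(section, count)
```

Comparing these counts over the same time window, and relative to the number of URLs in each section, shows whether /news/ really is crawled more often than /archives/.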

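Use differentiated XML sitemaps: one sitemap for /news/, another for archives, each with accurate <lastmod> dates. Note that Google states it ignores the <changefreq> and <priority> values, so declaring a "daily" or "monthly" frequency has no effect on its own. What separate sitemaps do give you is a per-section view of indexing in Search Console and a clean list of recently modified URLs, which complements the structural signals given by your URLs.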
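As an illustration, per-section sitemaps can be generated with Python's standard library. This sketch writes `<lastmod>` rather than `<changefreq>`, because Google's sitemap documentation says it ignores `<changefreq>` and `<priority>`. The function names, file names and page data are assumptions made for the example.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(entries):
    """Build a sitemap XML string from (url, lastmod) pairs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in entries:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        ET.SubElement(url, "lastmod").text = lastmod  # W3C date, e.g. 2023-12-18
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


def sitemaps_by_section(pages_by_section):
    """Return one sitemap document per section, keyed by a file name."""
    return {
        f"sitemap-{section}.xml": build_sitemap(entries)
        for section, entries in pages_by_section.items()
    }


# Hypothetical pages, grouped by the directory they live in.
pages = {
    "news": [("https://example.com/news/launch", "2023-12-18")],
    "archives": [("https://example.com/archives/2010/recap", "2010-06-01")],
}
for filename, xml in sitemaps_by_section(pages).items():
    print(filename)
    print(xml)
```

The `lastmod` values should reflect real content changes; dates that are bumped without an actual modification are less likely to be trusted.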

What mistakes should you avoid when overhauling architecture?

Don't create artificial hierarchy just to "look good". If your categories don't have strong business or editorial logic, you're complicating the structure without gain. The structure must reflect actual usage, not a theoretical ideal.

Avoid over-segmentation: 15 root directories for 200 total pages makes no sense. Focus on large content masses (100+ pages per section) that justify clear separation.
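A quick way to spot over-segmentation is to count pages per root directory. The sketch below does this from a plain list of URLs. The threshold of 100 comes from the paragraph above, while the function names and the generated sample URLs are illustrative assumptions.

```python
from collections import Counter
from urllib.parse import urlparse

MIN_PAGES_PER_SECTION = 100  # threshold suggested in the text above


def section_sizes(urls):
    """Count the pages found under each root directory."""
    counts = Counter()
    for url in urls:
        segments = [seg for seg in urlparse(url).path.split("/") if seg]
        if len(segments) > 1:  # ignore pages sitting directly at the root
            counts[segments[0]] += 1
    return counts


def undersized_sections(urls, min_pages=MIN_PAGES_PER_SECTION):
    """Return the root directories holding fewer than min_pages pages."""
    sizes = section_sizes(urls)
    return sorted(name for name, count in sizes.items() if count < min_pages)


# Hypothetical URL list: 150 news pages and 5 press pages.
urls = [f"https://example.com/news/article-{i}" for i in range(150)]
urls += [f"https://example.com/press/release-{i}" for i in range(5)]
print(undersized_sections(urls))
```

Sections that show up in this list are candidates for merging into a larger directory, unless they have a clear editorial reason to stand alone.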

- Audit current architecture and identify content types

- Create distinct directories for each editorial type (/news/, /blog/, /products/)

- Limit depth to 3-4 levels maximum

- Analyze logs to verify crawl frequency by directory

- Use differentiated XML sitemaps per section, with accurate lastmod dates

- Avoid over-segmentation (too many directories for few pages)

- Ensure URL structure reflects editorial reality (update frequency)

- Implement clean 301 redirects in case of redesign
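For the redirect step, a small sketch of how a redirect map from flat URLs to sectioned URLs might be built and sanity-checked. The slug-to-section mapping, the paths and the function names are hypothetical; the actual 301 rules would still need to be implemented in your server or CMS.

```python
def build_redirect_map(old_paths, section_of):
    """Map each old flat path to its new path under the assigned section."""
    redirects = {}
    for old in old_paths:
        slug = old.strip("/")
        redirects[old] = f"/{section_of[slug]}/{slug}"
    return redirects


def find_chains(redirects):
    """Return sources whose target is itself redirected (a redirect chain)."""
    return [src for src, dst in redirects.items() if dst in redirects]


# Hypothetical flat pages and the section each one should move to.
section_of = {"winter-sale": "news", "runner-x": "products"}
redirects = build_redirect_map(["/winter-sale", "/runner-x"], section_of)
for src, dst in redirects.items():
    print(f"301 {src} -> {dst}")
print("Chains:", find_chains(redirects))
```

Checking for chains before deployment keeps every old URL one hop away from its final destination.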

❓ Frequently Asked Questions

Does a 300-page site necessarily have to adopt a hierarchical structure?

Can you change your structure without losing traffic?

How does Google know that a /news/ directory contains news?

Can a flat structure perform better in some cases?

Should you create a separate directory for each product category?

🎥 From the same video 20

Other SEO insights extracted from this same Google Search Central video · published on 18/12/2023
