Official statement

Other statements from this video (13)

- Why does Google prefer structured data over machine learning to understand your pages?

- Is structured data still worth the effort if machine learning does the job?

- Does structured data really give webmasters control over how Google displays their pages?

- Does Google actually verify the accuracy of your structured data?

- Why does Google recommend starting with generic structured data?

- Why can valid Schema.org markup be rejected by Google?

- Should you implement structured data even if Google doesn't use it yet?

- Does structured data really influence Google's understanding of a page's topic?

- Is structured data really useful if Google already understands your page?

- Do you really need to stuff your pages with structured data to rank better?

- Should you drop JSON-LD in favor of Microdata for structured data?

- Does external JSON-LD really cause synchronization problems for Google?

- Must structured data always reflect the page's visible content?

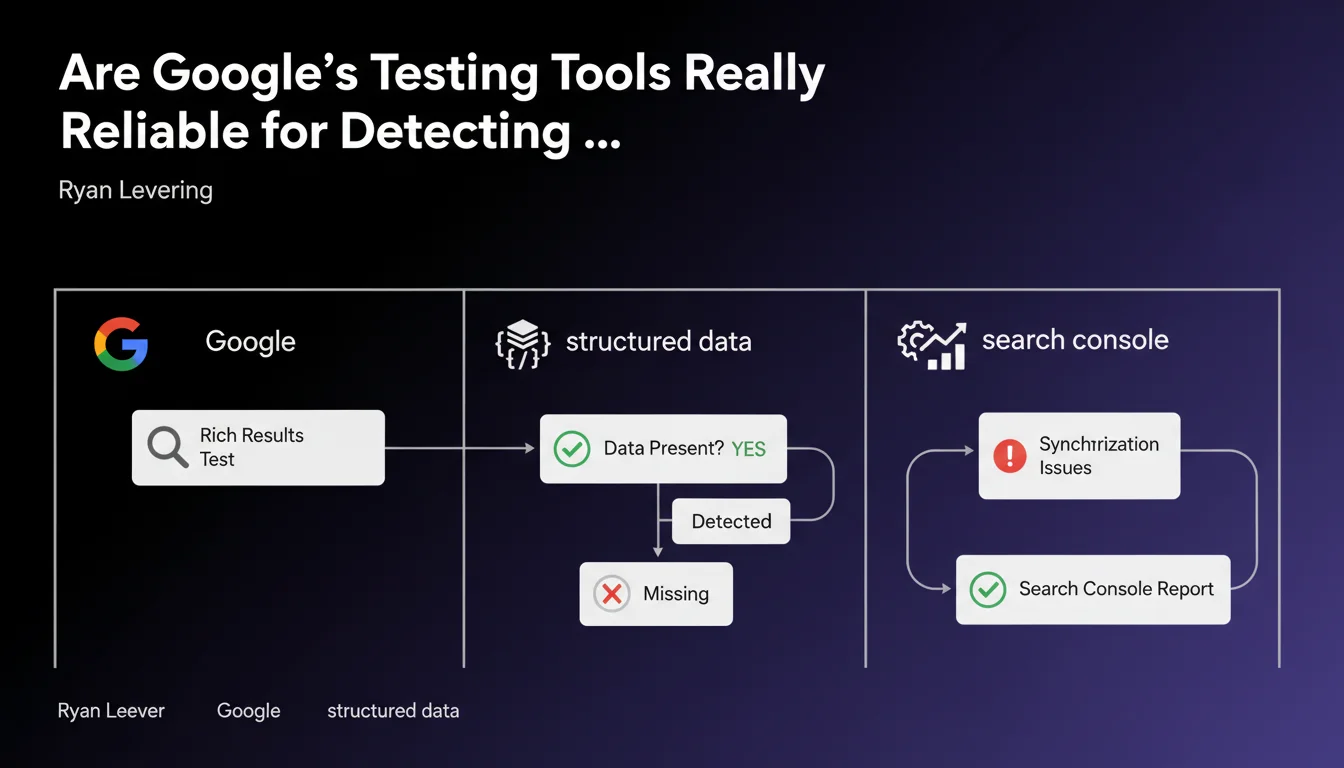

Google confirms that the Rich Results Test and other diagnostic tools accurately detect the presence or absence of structured data. Synchronization issues between the tools and the actual index will appear in Search Console. In other words: if the tool doesn't see your schema, Google probably doesn't see it either.

What you need to understand

Why is Google communicating about the reliability of its testing tools?

This statement answers a recurring question among practitioners: can we really trust the Rich Results Test to validate our structured data implementation? Google's Ryan Levering answers unambiguously: these tools are accurate.

The underlying message is simple: if your schema doesn't appear in the Rich Results Test, there is a real technical problem. It is not a tool bug; your implementation is broken.
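Before pasting a URL into the Rich Results Test, a quick local pre-check can catch the most common "schema not seen" causes: no `application/ld+json` script at all, or JSON that does not parse. The sketch below is a minimal, hypothetical helper built only on Python's standard library (`schema_types` and `JsonLdExtractor` are illustrative names). It reads static HTML only, so it will not see JSON-LD injected by JavaScript, and it knows nothing about Google's eligibility rules; the Rich Results Test remains the reference.

```python
import json
from html.parser import HTMLParser


class JsonLdExtractor(HTMLParser):
    """Collects the raw text of every <script type="application/ld+json"> element."""

    def __init__(self):
        super().__init__()
        self._inside = False
        self.blocks = []

    def handle_starttag(self, tag, attrs):
        script_type = (dict(attrs).get("type") or "").strip().lower()
        if tag == "script" and script_type == "application/ld+json":
            self._inside = True
            self.blocks.append("")

    def handle_endtag(self, tag):
        if tag == "script":
            self._inside = False

    def handle_data(self, data):
        if self._inside:
            self.blocks[-1] += data


def schema_types(html):
    """Return (types, errors): @type values found and JSON parse error messages."""
    parser = JsonLdExtractor()
    parser.feed(html)
    parser.close()
    types, errors = [], []
    for raw in parser.blocks:
        try:
            data = json.loads(raw)
        except json.JSONDecodeError as exc:
            errors.append(exc.msg)
            continue
        # A block may contain one object, a list of objects, or an @graph container.
        if isinstance(data, dict):
            items = data.get("@graph", [data])
        else:
            items = data if isinstance(data, list) else []
        for item in items:
            if not isinstance(item, dict):
                continue
            value = item.get("@type")
            if isinstance(value, list):
                types.extend(value)
            elif value:
                types.append(value)
    return types, errors


if __name__ == "__main__":
    sample = '<script type="application/ld+json">{"@type": "Product", "name": "Mug"}</script>'
    print(schema_types(sample))  # (['Product'], [])
```

An empty type list on a page that should carry markup points to the implementation, which is exactly the situation described above. For client-side injected markup, run the same check on rendered HTML or rely on the Rich Results Test, which renders JavaScript.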

What is this "synchronization" that Google mentions?

Google mentions synchronization issues, meaning gaps between what the testing tools see and what is actually in the index, and says they surface in Search Console. Concretely, this is the delay between the moment you deploy your structured data and the moment Google crawls it, indexes it, and then uses it to generate rich snippets.

This point matters: even when your markup is technically valid, the tools and the index can be out of step. Testing tools work in real time on your current HTML, while Search Console reflects the state of the index, sometimes several days behind.

Are testing tools the final word on my rich snippets?

No, and that's where it gets complicated. Passing the Rich Results Test doesn't guarantee that a rich snippet will be displayed in the SERPs. Google may choose not to display it for reasons of relevance or content quality, or simply because the algorithm deems another format more suitable for the query.

A failed test, on the other hand, is decisive: if the tool detects nothing, you will not get a rich snippet. Detection is a necessary condition, not a sufficient one.

- The Rich Results Test accurately detects the presence or absence of structured data in your HTML

- Gaps between tools and the actual index appear in Search Console in the form of errors or warnings

- A positive test doesn't guarantee display in SERPs, but a negative test guarantees no display

- Synchronization issues are normal and temporary: give Google time to crawl your changes

- If Search Console reports schema errors after several days, it's an implementation problem, not a tool bug
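The rule of thumb in this list can be written down as a small decision function. This is a sketch, not a Google procedure: the three inputs come from you (did the live Rich Results Test detect the markup, does Search Console still report an error, when did you deploy), and `grace_days=10` is an assumption borrowed from the 7-10 day window suggested later in this article, not a figure published by Google.

```python
from datetime import date


def diagnose(live_test_detects, search_console_error, deployed_on, today, grace_days=10):
    """Classify a schema problem using the live tool first, then the age of the report.

    grace_days is an assumed synchronization window, not an official value.
    """
    if not live_test_detects:
        # The real-time tool sees nothing: treat it as an implementation problem.
        return "implementation problem"
    if not search_console_error:
        return "ok"
    if (today - deployed_on).days < grace_days:
        # Live markup is fine but the index may not have caught up yet.
        return "probably synchronization lag: wait and recheck"
    # Live markup is detected but the error persists past the window: investigate.
    return "implementation problem"


if __name__ == "__main__":
    print(diagnose(True, True, date(2022, 4, 1), date(2022, 4, 3)))
```

The order of the checks mirrors the article's logic: a negative live test ends the diagnosis immediately, and only when the live markup is fine does the age of the Search Console report matter.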

SEO Expert opinion

Is this statement consistent with real-world observations?

Yes, overall. For years, the Rich Results Test has proven relatively reliable at detecting syntax errors, missing properties, or poorly implemented schema types. When the tool detects a problem, there's indeed a technical issue to fix.

But there is a significant caveat. Google's wording remains vague on one crucial point: how long a "normal" synchronization delay lasts. I've seen cases where Search Console took two weeks to update its reports after a schema deployment; other times, the update was nearly instantaneous. [To verify] Google provides no clear figures on these delays.

What nuances must be added to this statement?

First point: Google only talks about detection, not eligibility. Your structured data can be detected and technically valid, yet Google may decide not to use it. The quality criteria for displaying rich snippets are not fully public, and they vary by schema type.

Second point: testing tools fetch and render your page as Googlebot in real time, but they don't reproduce real crawling conditions such as crawl budget, rendering of heavy JavaScript on large sites, or timeouts. A positive lab test doesn't guarantee that Googlebot will get the same result when it crawls a site under load.

In what cases does this rule not fully apply?

On sites with highly dynamic content, such as e-commerce sites with thousands of product pages updated in real time or news sites with rapid turnover, synchronization delays can produce stale reports. Search Console may report errors on pages that no longer exist, or whose markup changed between the crawl and the moment you check the report.
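On large, fast-moving sites it helps to triage reported URLs before fixing anything. A minimal sketch with hypothetical inputs: `reported` maps each URL flagged in Search Console to the last crawl time you can see for it (for example in URL Inspection), and `live_modified` maps each page that still exists to the time its markup last changed, taken from your own CMS or deploy logs. Both data sources and the function name `triage` are assumptions for illustration.

```python
from datetime import datetime


def triage(reported, live_modified):
    """Split URLs flagged in Search Console into three buckets.

    reported: {url: last crawl datetime} for URLs listed with errors (hypothetical export)
    live_modified: {url: datetime the markup last changed} for pages that still exist
    """
    gone, changed_since_crawl, to_fix = [], [], []
    for url, crawled_at in reported.items():
        if url not in live_modified:
            # Page removed: the stale error should eventually drop out as Google recrawls.
            gone.append(url)
        elif live_modified[url] > crawled_at:
            # Markup changed after the crawl: re-test live before acting.
            changed_since_crawl.append(url)
        else:
            # Markup unchanged since the crawl: the error reflects the current page.
            to_fix.append(url)
    return gone, changed_since_crawl, to_fix


if __name__ == "__main__":
    reported = {"/a": datetime(2022, 4, 1), "/b": datetime(2022, 4, 1)}
    live = {"/b": datetime(2022, 4, 3)}
    print(triage(reported, live))
```

Only the third bucket clearly calls for a code fix; the first two are the temporary mismatches described above.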

Another edge case: hybrid implementations (a mix of JSON-LD and microdata). Testing tools generally detect both, but inconsistencies between the two formats can lead to unpredictable behavior, and Google doesn't clearly document how it arbitrates when they conflict.
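Since the arbitration rules are undocumented, a pragmatic step is to find pages where the same schema.org type is declared in both formats and review them by hand. The sketch below is a regex heuristic, not a real HTML or RDF parser; the pattern and function names are hypothetical, and it only recognizes microdata `itemtype` values on `schema.org` URLs.

```python
import json
import re

# Heuristic patterns, not a full HTML parser.
JSONLD_BLOCK = re.compile(
    r'<script[^>]*type\s*=\s*["\']application/ld\+json["\'][^>]*>(.*?)</script>',
    re.IGNORECASE | re.DOTALL,
)
MICRODATA_TYPE = re.compile(
    r'itemtype\s*=\s*["\']https?://schema\.org/([A-Za-z]+)', re.IGNORECASE
)


def jsonld_types(html):
    """Top-level @type values declared in JSON-LD blocks."""
    found = set()
    for raw in JSONLD_BLOCK.findall(html):
        try:
            data = json.loads(raw)
        except json.JSONDecodeError:
            continue  # syntax errors are a separate problem
        for item in data if isinstance(data, list) else [data]:
            if not isinstance(item, dict):
                continue
            value = item.get("@type")
            if isinstance(value, list):
                found.update(value)
            elif isinstance(value, str):
                found.add(value)
    return found


def types_in_both_formats(html):
    """schema.org types declared in JSON-LD and in microdata on the same page."""
    return jsonld_types(html) & set(MICRODATA_TYPE.findall(html))
```

A non-empty result does not prove a conflict; it marks pages where the two declarations should be compared property by property.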

Practical impact and recommendations

What should you concretely do to verify your structured data?

First, systematically run the Rich Results Test before any deployment. Test a representative sample of pages: homepage, product page, blog article, category page. Don't rely on a single URL.
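Picking "a representative sample" can be automated by grouping URLs by template and keeping a few from each group. A minimal sketch: `template_from_path` is a hypothetical mapping that assumes templates are identifiable from the first path segment (`/product/...`, `/blog/...`); adapt it to however your site distinguishes templates.

```python
from urllib.parse import urlparse


def template_from_path(url):
    """Hypothetical template mapping: homepage, else the first path segment."""
    path = urlparse(url).path.strip("/")
    if not path:
        return "homepage"
    return path.split("/")[0]


def sample_per_template(urls, template_of, per_template=3):
    """Keep up to `per_template` URLs for each template, in input order."""
    samples = {}
    for url in urls:
        bucket = samples.setdefault(template_of(url), [])
        if len(bucket) < per_template:
            bucket.append(url)
    return samples


if __name__ == "__main__":
    urls = [
        "https://example.com/",
        "https://example.com/product/mug",
        "https://example.com/blog/launch",
    ]
    print(sample_per_template(urls, template_from_path, per_template=2))
```

Each resulting URL can then be run through the Rich Results Test by hand; the point is coverage of every template, not volume.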

Next, after deployment, track progress in the "Enhancements" reports in Search Console. Errors and warnings that persist beyond 7-10 days signal a real problem, not just a synchronization delay.

What diagnostic errors should you avoid with your schemas?

Don't panic if Search Console shows errors 24 hours after your deployment. Google needs to recrawl your pages, and that never happens instantly. First check that the URL Inspection tool's live test ("Test live URL"), which fetches the page in real time, correctly detects your schemas.

Another classic mistake: relying solely on third-party validators. Tools such as the Schema Markup Validator (validator.schema.org) or generic JSON-LD validators check syntax and vocabulary, but they don't test Google eligibility. Only Google's tools have the final say on whether your data can trigger rich snippets.
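To see why syntax validation is not enough, it helps to separate two layers of checking. The first layer (does the JSON parse?) is what a generic validator gives you; the second (are the properties a given rich result needs present?) depends on feature-specific rules. In this sketch the rule table `REQUIRED_PROPERTIES` is a hypothetical, deliberately incomplete simplification, and passing both layers still says nothing about Google eligibility.

```python
import json

# Hypothetical, deliberately incomplete rule table for illustration only.
# Google's real requirements live in its per-feature structured data documentation.
REQUIRED_PROPERTIES = {
    "Product": {"name"},
    "Event": {"name", "startDate", "location"},
}


def check_jsonld(raw):
    """Return a list of problems: a syntax error, or missing required properties."""
    try:
        item = json.loads(raw)
    except json.JSONDecodeError as exc:
        return [f"syntax error: {exc.msg}"]
    if not isinstance(item, dict):
        return ["expected a single JSON object"]
    kind = item.get("@type")
    required = REQUIRED_PROPERTIES.get(kind, set()) if isinstance(kind, str) else set()
    missing = sorted(required - set(item))
    return [f"{kind}: missing '{prop}'" for prop in missing]


if __name__ == "__main__":
    print(check_jsonld('{"@type": "Event", "name": "Gig"}'))
```

The Event example parses cleanly, so a pure syntax validator would accept it, yet it lacks properties the (hypothetical) rule table expects.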

How do you build an effective monitoring workflow?

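Set up automated monitoring. Search Console can email you when it detects new structured data issues, but its API does not expose the Enhancements reports directly; the URL Inspection API can, however, return the rich results detected on the indexed version of individual URLs, within a daily quota. Alert yourself as soon as errors spike on your critical schema types (Product, Article, FAQ, etc.). Reactive monitoring lets you fix issues before they affect your SEO.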
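A minimal sketch of the "alert on error spikes" idea, assuming you already collect a daily error count per schema type from some source (a scheduled export, or aggregated URL Inspection API results for a fixed URL sample). The data source, the 7-day window, and both thresholds are assumptions. The function flags the latest day only when it is both well above the trailing average and large in absolute terms, so a jump from 0 to 1 does not trigger an alert.

```python
from statistics import mean


def error_spike(daily_errors, window=7, factor=2.0, min_increase=5):
    """Return True if the latest count is well above the trailing average.

    daily_errors: oldest-to-newest error counts for one schema type
    (hypothetical data source; thresholds are arbitrary defaults).
    """
    if len(daily_errors) <= window:
        return False  # not enough history to compare against
    baseline = mean(daily_errors[-window - 1:-1])
    latest = daily_errors[-1]
    return latest >= baseline * factor and latest - baseline >= min_increase


if __name__ == "__main__":
    product_errors = [2, 3, 2, 2, 3, 2, 2, 40]
    if error_spike(product_errors):
        print("Product: error spike, check the latest deployment")
```

The absolute `min_increase` guard matters on low-traffic schema types, where a purely relative threshold would fire on noise.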

Supplement this with regular post-deployment tests: after each major template update, each redesign, and each migration. Structured data is fragile: moving a div or deleting an itemscope attribute can be enough to break it.
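One way to run these post-deployment checks is to snapshot the schema types detected on each URL before and after a template change and diff the two. In this sketch the snapshots are plain dictionaries you would fill with any extractor or crawler (hypothetical here); the function only reports types that disappeared.

```python
def schema_regressions(before, after):
    """Map each URL to the schema types it lost between two snapshots.

    before/after: {url: set of detected @type values} (hypothetical snapshots).
    A URL missing from `after` counts as having lost all its types.
    """
    lost = {}
    for url, types_before in before.items():
        missing = types_before - after.get(url, set())
        if missing:
            lost[url] = missing
    return lost


if __name__ == "__main__":
    before = {"/p/1": {"Product", "BreadcrumbList"}}
    after = {"/p/1": {"BreadcrumbList"}}
    print(schema_regressions(before, after))  # {'/p/1': {'Product'}}
```

Diffing types rather than raw HTML keeps the check focused on what matters for rich results, so cosmetic template changes do not produce noise.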

- Systematically test with the Rich Results Test before deployment on a representative sample of pages

- Use the URL Inspection tool's live test in Search Console to verify what Googlebot actually renders after deployment

- Wait 7-10 days before considering a Search Console error as definitive (normal synchronization delay)

- Monitor the "Enhancements" reports in Search Console to detect error spikes quickly (the API does not expose these reports directly, but the URL Inspection API can check key URLs)

- Specifically test JavaScript rendering if your schemas are injected client-side

- Never rely solely on third-party validators: only Google's tools have the final say on eligibility

- Document your schema implementations to facilitate future diagnostics during redesigns or migrations
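For the client-side injection case in this checklist, one simple test is to compare the JSON-LD types in the raw HTML your server returns with those in the rendered HTML (for example, copied from "View tested page" in the Rich Results Test, or produced by a headless browser). Any type present only after rendering depends on JavaScript. The regexes and function names below are hypothetical heuristics, and `types_in` also picks up nested types such as an `Offer` inside a `Product`.

```python
import re

JSONLD_SCRIPT = re.compile(
    r'<script[^>]*application/ld\+json[^>]*>(.*?)</script>', re.IGNORECASE | re.DOTALL
)
# Matches every "@type": "X" string, including nested objects.
JSONLD_TYPE = re.compile(r'"@type"\s*:\s*"([^"]+)"')


def types_in(html):
    """All @type string values found inside JSON-LD script blocks (heuristic)."""
    found = set()
    for block in JSONLD_SCRIPT.findall(html):
        found.update(JSONLD_TYPE.findall(block))
    return found


def js_only_types(raw_html, rendered_html):
    """Types that appear only after JavaScript rendering."""
    return types_in(rendered_html) - types_in(raw_html)
```

A non-empty result is not an error in itself, since Google can render JavaScript, but it identifies exactly the pages where rendering delays or failures could hide your markup.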

❓ Frequently Asked Questions

If the Rich Results Test validates my schemas, why don't my rich snippets appear in the SERPs?

How long does it take for Search Console to reflect my schema changes?

Are JSON-LD schemas injected via JavaScript detected by Google?

Should I fix warnings or only errors in Search Console?

Can you mix JSON-LD and microdata on the same page without risk?

🎥 From the same video (13)

Other SEO insights extracted from this same Google Search Central video · published on 07/04/2022
