Official statement

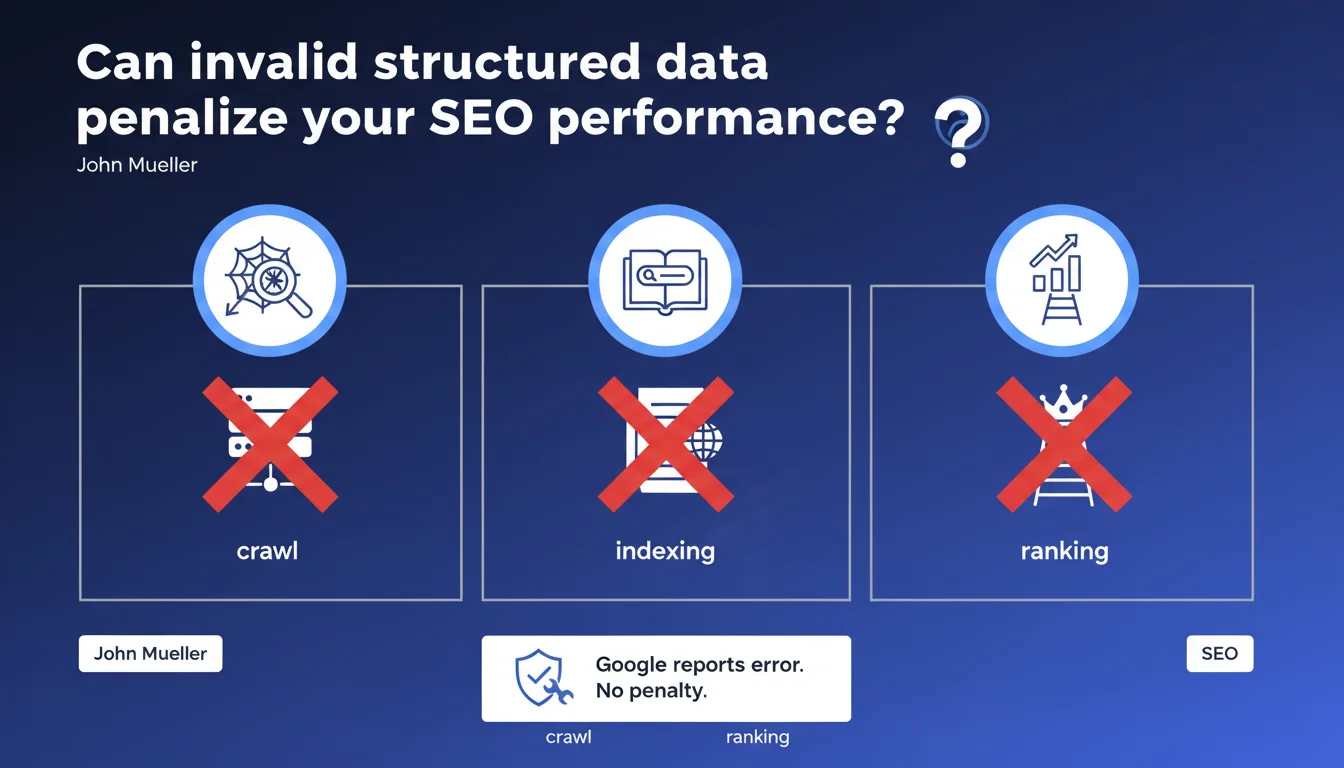

Invalid structured data does not penalize the crawling, indexing, or ranking of your pages. Google simply reports the error in Search Console so that you can fix it if you want the markup to qualify for rich results. In other words: a markup error will not cause your rankings to drop.

What you need to understand

Why does Google clarify that markup errors do not impact SEO?

Historically, many SEO practitioners feared that a faulty structured data implementation could harm a page's ranking. This statement from Google's John Mueller removes the ambiguity: a syntax error in your JSON-LD, a poorly defined Schema type, or a missing property will not trigger an algorithmic penalty.

Google treats structured data as an optional enrichment layer. If the markup is invalid, the search engine simply ignores this layer and continues to crawl, index, and rank the page normally — as if the markup did not exist.

What is the difference between "invalid" and "unrecognized"?

Structured data that is invalid contains syntax errors or does not meet the requirements of the rich result it targets (missing required property, incorrect type, poorly formatted value). Data that is unrecognized, on the other hand, is technically correct but covers a Schema.org type or property that Google does not use to generate rich results.

In both cases, the organic SEO impact is zero. The only difference: valid but unrecognized data generates neither an error in Search Console nor a rich result. Invalid data generates an alert but remains without consequence for ranking.
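The distinction can be made concrete with a minimal Python sketch. The `RICH_RESULT_REQUIRED` table and the `classify_markup` function are hypothetical names introduced here for illustration, and the table is deliberately tiny: the authoritative list of supported types and required properties is in Google's rich results documentation and changes over time.

```python
import json

# Hypothetical, deliberately incomplete table: which Schema.org types feed a
# rich result, and which properties that rich result is assumed to require.
RICH_RESULT_REQUIRED = {
    "Recipe": {"name", "image"},
    "Event": {"name", "startDate", "location"},
}


def classify_markup(raw_json_ld: str) -> str:
    """Return 'invalid', 'unrecognized', or 'eligible' for one JSON-LD block."""
    try:
        data = json.loads(raw_json_ld)
    except json.JSONDecodeError:
        return "invalid"  # syntax error: the block cannot be parsed at all

    schema_type = data.get("@type") if isinstance(data, dict) else None
    if not isinstance(schema_type, str):
        return "invalid"  # no usable type declared

    required = RICH_RESULT_REQUIRED.get(schema_type)
    if required is None:
        # Well-formed markup, but no rich result is driven by this type.
        return "unrecognized"

    missing = required - set(data)
    return "invalid" if missing else "eligible"
```

For example, `classify_markup('{"@type": "Volcano", "name": "Etna"}')` returns `"unrecognized"`: the type exists in Schema.org, but this sketch assumes no rich result uses it. None of the three outcomes says anything about ranking; they only describe eligibility for rich results.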

What are the key takeaways?

- Structured data errors do not affect crawling, indexing, or ranking of your pages.

- Google reports these errors in Search Console only to allow you to correct the markup and access rich results.

- A page without structured data and a page with invalid data are treated the same way by the ranking algorithm.

- Valid structured data can improve SERP visibility (rich snippets, carousels, knowledge panels) but is not a direct ranking factor.

SEO Expert opinion

Is this statement consistent with real-world observations?

In principle, yes. I have never observed a drop in rankings directly correlated with markup errors. Sites that correct their Schema data errors generally do not gain organic positions — they gain SERP visibility through rich snippets, which is different.

One caveat, however: if your markup is so broken that it triggers critical JavaScript errors that block page rendering, then yes, you will see an SEO impact. But that is no longer a matter of invalid structured data; it is a problem of content being technically unavailable.

What nuances should we add to this claim?

Mueller is talking about structured data in isolation. Let's be honest: in reality, a markup error often reveals a broader quality issue. A site that accumulates hundreds of Schema.org errors generally has other concerns (malformed HTML, poorly managed JavaScript, degraded UX).

What affects ranking is not the Schema errors themselves, but the degraded technical context in which they appear. [To be verified]: it would be interesting to measure whether Google considers overall markup quality as an indirect technical quality signal — but nothing suggests that this is the case today.

When does this rule not apply?

Mueller's statement covers passive markup errors. It does not apply if you attempt to manipulate rich results with misleading or spam structured data (fake reviews, fictitious prices, non-existent events).

In these cases, Google can apply a manual action targeting rich results or, in extreme cases, a broader penalty if the spam is systematic. This is no longer a matter of technical validity, but of editorial compliance.

Practical impact and recommendations

What should you do in practice about structured data errors?

Prioritize based on your business objective. If you are targeting rich results (review stars, FAQs, recipes, events), correct all errors reported in Search Console. If these rich snippets are not strategic for you, the errors can wait: they do not harm your organic search rankings.

Use Google's Rich Results Test and Search Console to identify blocking errors. Focus first on markup types that have a proven SERP impact in your sector (Product, Review, FAQ, HowTo, Recipe, Event).

What errors should you avoid when managing structured data?

Do not fall into the opposite trap: implementing structured data everywhere "just in case". Poorly thought-out or generic markup brings nothing and dilutes your semantic signal. Better to have 3 perfectly implemented Schema types than 15 poorly done ones.

Also avoid correcting errors without understanding their root cause. If your CMS automatically generates invalid markup, fix the source, not the symptoms page by page. Otherwise, you will enter an endless correction cycle with every publication.
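One way to look for a root cause is to group reported errors by page template rather than reading them URL by URL. The sketch below assumes you have exported errors as `(url, message)` pairs, and it approximates the template by the first path segment; both the input shape and the `template_of` heuristic are assumptions to adapt to your own site.

```python
from collections import Counter
from urllib.parse import urlparse


def template_of(url: str) -> str:
    """Approximate the page template by the first path segment (an assumption)."""
    segments = [s for s in urlparse(url).path.split("/") if s]
    return "/" + segments[0] + "/" if segments else "/"


def errors_by_template(error_rows):
    """Count (template, error message) pairs from an iterable of (url, message)."""
    return Counter((template_of(url), message) for url, message in error_rows)


# Illustrative data only; example.com and the messages are made up.
rows = [
    ("https://example.com/products/a", "Missing field 'price'"),
    ("https://example.com/products/b", "Missing field 'price'"),
    ("https://example.com/blog/post-1", "Invalid date"),
]
for (template, message), count in errors_by_template(rows).most_common():
    print(f"{count:>3}  {template:<12} {message}")
```

If one template accounts for most occurrences of the same message, the fix most likely belongs in that template or in the CMS plugin that generates it, not in the individual pages.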

How can you verify that your implementation is optimal?

- Audit your structured data with the Rich Results Test and the Search Console URL Inspection tool.

- Verify that the Schema types used match your SERP objectives (no unnecessary markup).

- Check consistency between visible content and structured data — any divergence can be interpreted as misleading.

- Set up automatic monitoring of Schema errors via the Search Console API to detect regressions after each deployment.

- Test the real SERP impact of corrections: some corrected errors will never activate a rich snippet if Google does not deem the content relevant.
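As a complement to the checks above, a small script run before each deployment can catch the most basic regression: a JSON-LD block that no longer parses. The sketch below uses only the Python standard library; `JsonLdExtractor` and `json_ld_syntax_errors` are names introduced here for illustration. It checks syntax only and does not replace the Rich Results Test, which also validates the properties Google expects.

```python
import json
from html.parser import HTMLParser


class JsonLdExtractor(HTMLParser):
    """Collect the raw text of every <script type="application/ld+json"> block."""

    def __init__(self):
        super().__init__()
        self.blocks = []
        self._in_json_ld = False

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_json_ld = True
            self.blocks.append("")

    def handle_data(self, data):
        # Script content may arrive in several chunks, so append to the block.
        if self._in_json_ld:
            self.blocks[-1] += data

    def handle_endtag(self, tag):
        if tag == "script":
            self._in_json_ld = False


def json_ld_syntax_errors(html: str) -> list[str]:
    """Return one message per JSON-LD block that fails to parse as JSON."""
    parser = JsonLdExtractor()
    parser.feed(html)
    parser.close()
    errors = []
    for index, block in enumerate(parser.blocks):
        try:
            json.loads(block)
        except json.JSONDecodeError as exc:
            errors.append(f"block {index}: {exc.msg}")
    return errors
```

Calling `json_ld_syntax_errors(page_html)` on rendered templates in a CI job and failing the build when the list is non-empty is one possible setup; how you obtain `page_html` depends on your stack.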

❓ Frequently Asked Questions

Can a structured data error cause my Google rankings to drop?

Should I fix every error reported in Search Console?

Can you be penalized for misleading structured data?

Is structured data a ranking factor?

Is it better to have invalid structured data or none at all?

🎥 From the same video 20

Other SEO insights extracted from this same Google Search Central video · published on 21/01/2022
