Official statement

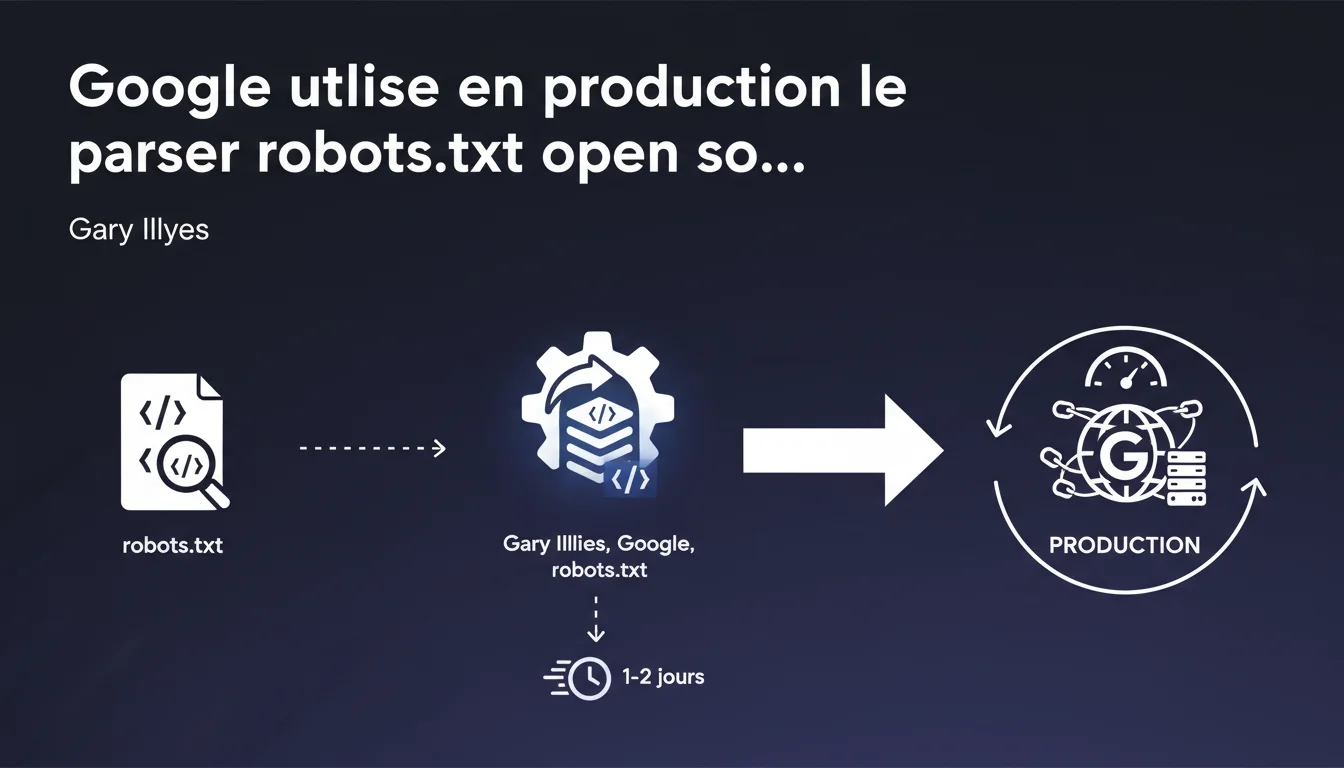

Google confirms that the open source robots.txt parser it released is exactly the same code that runs in production. Changes made to the GitHub repository are deployed to production within 1 to 2 days. This level of transparency is unusual, and it lets site owners anticipate changes in crawler behavior.

What you need to understand

Why did Google make its robots.txt parser open source?

Google released the source code of its robots.txt parser on GitHub to promote standardization. For years, each search engine interpreted the robots.txt file in its own way, creating inconsistencies.

Publishing the code lets developers test locally how Googlebot will interpret their directives. It also sends a strong signal to the industry: this is how Google does it, so align with it if you want consistency.

What does "the same code in production" really mean?

Gary Illyes states that this is not a simplified or watered-down version. It is the exact code that analyzes robots.txt files from millions of sites every day. When Googlebot encounters a robots.txt file, the file is run through this parser.

Validated changes in the GitHub repository are deployed to production within 1 to 2 days. This means you can track changes in Google's behavior by monitoring the commits. This level of transparency is unprecedented.

What are the key points to remember?

- The open source parser is not a demo: it's the real production code

- Code updates are deployed within 1 to 2 days after validation

- Behavior changes can be anticipated by monitoring the GitHub repo

- Developers can test locally how Googlebot will interpret their robots.txt

- This is a step towards standardization of the robots.txt protocol interpretation

SEO Expert opinion

Is this transparency consistent with observed practices in the field?

Yes, and that's precisely what makes this statement credible. Since the code was released, several developers have compared the observed behavior of Googlebot with the rules set in the parser. The results match.

This consistency is not trivial. Google could have published a "marketing" parser that looks like the real thing without actually being it. The fact that the code is actually used in production changes the game for testing and predictability.

What nuances should be added to this statement?

The robots.txt parser is one component among others in Google's crawling system. It determines what Googlebot is allowed to crawl, but not what it will actually crawl or when.

Crawl budget decisions, prioritization, and crawl frequency all remain opaque. The parser only answers "allowed" or "blocked" for a given URL and user agent. The rest of the crawling machinery is not open source.

Can we really trust the announced deployment delay?

The 1-to-2-day delay between commit and production is technically plausible: it is a typical CI/CD cycle for critical code. But that speed also means bugs can reach production quickly.

This is why monitoring the GitHub repo becomes relevant. If a major change is pushed, you can expect it to be active within 48 hours, which gives you a chance to detect potential regressions before they impact your crawl.

Practical impact and recommendations

What should you concretely do with this information?

First, install the parser locally if you manage sites with complex robots.txt rules. The GitHub repository provides a command-line tool that allows you to test your directives before deploying them in production.
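A pre-deployment check can be sketched as a small table of URLs and the verdict you expect for each. The sketch below is an illustration under clear assumptions: it uses Python's standard `urllib.robotparser` as a stand-in, which is not Google's parser and differs from it (plain prefix matching, no wildcard support, first matching rule wins). The `check_expectations` helper, the sample rules, and the `example.com` URLs are hypothetical. For authoritative verdicts, run the same cases through the tool built from Google's repository.

```python
from urllib.robotparser import RobotFileParser


def check_expectations(robots_txt, expectations, user_agent="Googlebot"):
    """Return every case whose verdict differs from the expected one.

    Each failure is a tuple: (url, expected_allowed, actual_allowed).
    """
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    failures = []
    for url, should_be_allowed in expectations:
        allowed = parser.can_fetch(user_agent, url)
        if allowed != should_be_allowed:
            failures.append((url, should_be_allowed, allowed))
    return failures


# Hypothetical rules: simple prefixes only, since the stand-in parser
# does not understand wildcards.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
Disallow: /cart
"""

# Hypothetical expectations: (URL, should Googlebot be allowed?)
EXPECTATIONS = [
    ("https://example.com/", True),
    ("https://example.com/admin/settings", False),
    ("https://example.com/cart", False),
    ("https://example.com/blog/post", True),
]

failures = check_expectations(ROBOTS_TXT, EXPECTATIONS)
print(failures or "all expectations met")
```

Keeping the expectations in a list makes it cheap to rerun them every time the robots.txt file changes.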

Then, set up monitoring of the GitHub repo. Changes to the parser can reveal changes in behavior before they are officially documented. That early warning is a strategic advantage.
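One possible way to monitor the repository is to poll GitHub's public commits endpoint and flag anything committed within the last two days. This is a minimal sketch, assuming the parser lives in the `google/robotstxt` repository and that unauthenticated API access (which is rate limited) is enough for your needs. The function names and the two-day window are illustrative choices, not something the source prescribes.

```python
import json
import urllib.request
from datetime import datetime, timedelta, timezone

# Assumption: the parser is hosted in the public google/robotstxt repository.
COMMITS_URL = "https://api.github.com/repos/google/robotstxt/commits"


def fetch_commits(url=COMMITS_URL):
    """Download the most recent commits as a list of dicts (network call)."""
    request = urllib.request.Request(
        url, headers={"Accept": "application/vnd.github+json"}
    )
    with urllib.request.urlopen(request, timeout=10) as response:
        return json.load(response)


def recent_commits(commits, now, window=timedelta(days=2)):
    """Keep commits whose committer date falls within `window` before `now`.

    Returns tuples of (short sha, commit datetime, first line of the message).
    """
    recent = []
    for item in commits:
        raw_date = item["commit"]["committer"]["date"]  # e.g. 2021-12-20T12:00:00Z
        committed = datetime.fromisoformat(raw_date.replace("Z", "+00:00"))
        if now - committed <= window:
            message_lines = item["commit"]["message"].splitlines() or [""]
            recent.append((item["sha"][:7], committed, message_lines[0]))
    return recent


# Example usage (performs a network request):
# for sha, when, title in recent_commits(fetch_commits(), datetime.now(timezone.utc)):
#     print(sha, when.isoformat(), title)
```

Run on a schedule, a script like this can feed an alert channel so that parser changes are reviewed before they are likely to be live.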

What mistakes should be avoided with the robots.txt file?

Don't confuse robots.txt with indexing management. The robots.txt file blocks crawling, not indexing. If a URL is blocked in robots.txt but has backlinks, Google can still index it without crawling it.

Avoid overly complex patterns. The parser supports wildcards (*) and the end-of-URL anchor ($), but the more convoluted your rules are, the higher the risk of error. Test systematically with the parser before deploying.
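To make the wildcard semantics concrete, here is a simplified illustration of how `*` and `$` patterns can be matched, with the most specific (longest) matching rule winning and `allow` winning a tie. This is not Google's code: it is a sketch written for this article, the function names and sample rules are hypothetical, and it ignores details such as percent-encoding and user-agent groups.

```python
import re


def pattern_to_regex(pattern):
    """Translate a robots.txt path pattern into a regex anchored at the start.

    '*' matches any run of characters; a trailing '$' pins the end of the URL.
    Without '$', the pattern behaves as a prefix match.
    """
    anchored = pattern.endswith("$")
    if anchored:
        pattern = pattern[:-1]
    body = ".*".join(re.escape(part) for part in pattern.split("*"))
    return re.compile("^" + body + ("$" if anchored else ""))


def is_allowed(rules, path):
    """Decide a path against (directive, pattern) pairs.

    The longest matching pattern wins; on equal length, 'allow' wins.
    A path that matches no rule is allowed.
    """
    best = None  # (pattern length, is_allow)
    for directive, pattern in rules:
        if not pattern:
            continue  # an empty pattern matches nothing
        if pattern_to_regex(pattern).match(path):
            candidate = (len(pattern), directive == "allow")
            if best is None or candidate > best:
                best = candidate
    return True if best is None else best[1]


# Hypothetical rules for illustration.
RULES = [
    ("disallow", "/*.pdf$"),
    ("allow", "/public/*.pdf$"),
    ("disallow", "/private"),
]

for path in ["/docs/report.pdf", "/public/report.pdf",
             "/docs/report.pdf?x=1", "/private/area", "/blog"]:
    print(path, "allowed" if is_allowed(RULES, path) else "blocked")
```

Note how `/docs/report.pdf?x=1` escapes the `/*.pdf$` rule because of the `$` anchor. This is the kind of surprise that makes systematic testing worthwhile.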

How can I check if my robots.txt is interpreted correctly?

- Use the robots.txt testing tool in Google Search Console

- Clone the GitHub parser and test your complex rules locally

- Compare the observed behavior in logs with the defined directives

- Ensure that critical directives (admin, sensitive areas) are correctly applied

- Monitor commits in the GitHub repo to detect future developments
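The log comparison in the checklist above can be sketched as follows: scan access log lines, keep requests whose user agent mentions Googlebot, and report those that hit a path your rules block. The sketch assumes logs in the common "combined" format and again uses Python's `urllib.robotparser` as a stand-in for Google's parser (prefix rules only). The sample log lines, the rules, and the `example.com` host are made up for illustration. Keep in mind that user-agent strings can be spoofed, so a hit is a lead to investigate rather than proof.

```python
import re
from urllib.robotparser import RobotFileParser

# Assumption: "combined" log format, where the request line and the
# user agent are quoted fields and the user agent comes last.
LOG_LINE = re.compile(
    r'"(?:GET|HEAD|POST) (?P<path>\S+) [^"]*" (?P<status>\d{3}) .*"(?P<agent>[^"]*)"\s*$'
)


def googlebot_hits_on_blocked_paths(log_lines, robots_txt, site="https://example.com"):
    """Return paths requested by a Googlebot user agent that the rules disallow."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    hits = []
    for line in log_lines:
        match = LOG_LINE.search(line)
        if not match or "Googlebot" not in match["agent"]:
            continue  # unparseable line, or not a Googlebot request
        if not parser.can_fetch("Googlebot", site + match["path"]):
            hits.append(match["path"])
    return hits


# Hypothetical sample data.
SAMPLE_ROBOTS = """\
User-agent: *
Disallow: /admin/
"""

GOOGLEBOT_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
SAMPLE_LOG = [
    f'66.249.66.1 - - [21/Dec/2021:10:00:00 +0000] "GET /admin/login HTTP/1.1" 200 512 "-" "{GOOGLEBOT_UA}"',
    f'66.249.66.1 - - [21/Dec/2021:10:00:05 +0000] "GET /blog/ HTTP/1.1" 200 2048 "-" "{GOOGLEBOT_UA}"',
    '203.0.113.7 - - [21/Dec/2021:10:00:09 +0000] "GET /admin/ HTTP/1.1" 403 128 "-" "Mozilla/5.0 (X11; Linux x86_64)"',
]

print(googlebot_hits_on_blocked_paths(SAMPLE_LOG, SAMPLE_ROBOTS))
```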

The fact that Google uses the same parser in production and in open source changes the game for testing and predictability. You can now anticipate how Googlebot will interpret your directives before deploying them. However, fine-grained management of robots.txt, especially on complex architectures with conditional rules or during migrations, demands sharp expertise and regular monitoring. If your site relies on critical rules, or if you fear a costly crawl budget error, help from a specialized SEO agency can prevent mistakes that are hard to correct later.

🎥 From the same video 11

Other SEO insights extracted from this same Google Search Central video · published on 21/12/2021
