Official statement

Other statements from this video 11 ▾

- □ Le fichier robots.txt empêche-t-il réellement l'indexation de vos pages ?

- □ Votre outil de test SEO est-il vraiment un crawler aux yeux de Google ?

- □ Googlebot suit-il vraiment les liens ou fonctionne-t-il autrement ?

- □ Le parser robots.txt open source de Google est-il vraiment utilisé en production ?

- □ Pourquoi Google abandonne-t-il les directives d'indexation dans robots.txt ?

- □ Publier un site web équivaut-il juridiquement à autoriser Google à le crawler ?

- □ Comment Googlebot ajuste-t-il sa fréquence de crawl pour ne pas faire planter vos serveurs ?

- □ Pourquoi Google refuse-t-il des directives robots.txt trop granulaires ?

- □ Le robots.txt est-il vraiment suffisant pour contrôler le crawl de votre site ?

- □ Qui a vraiment créé le parser robots.txt de Google ?

- □ Pourquoi Google refuse-t-il catégoriquement de moderniser le format robots.txt ?

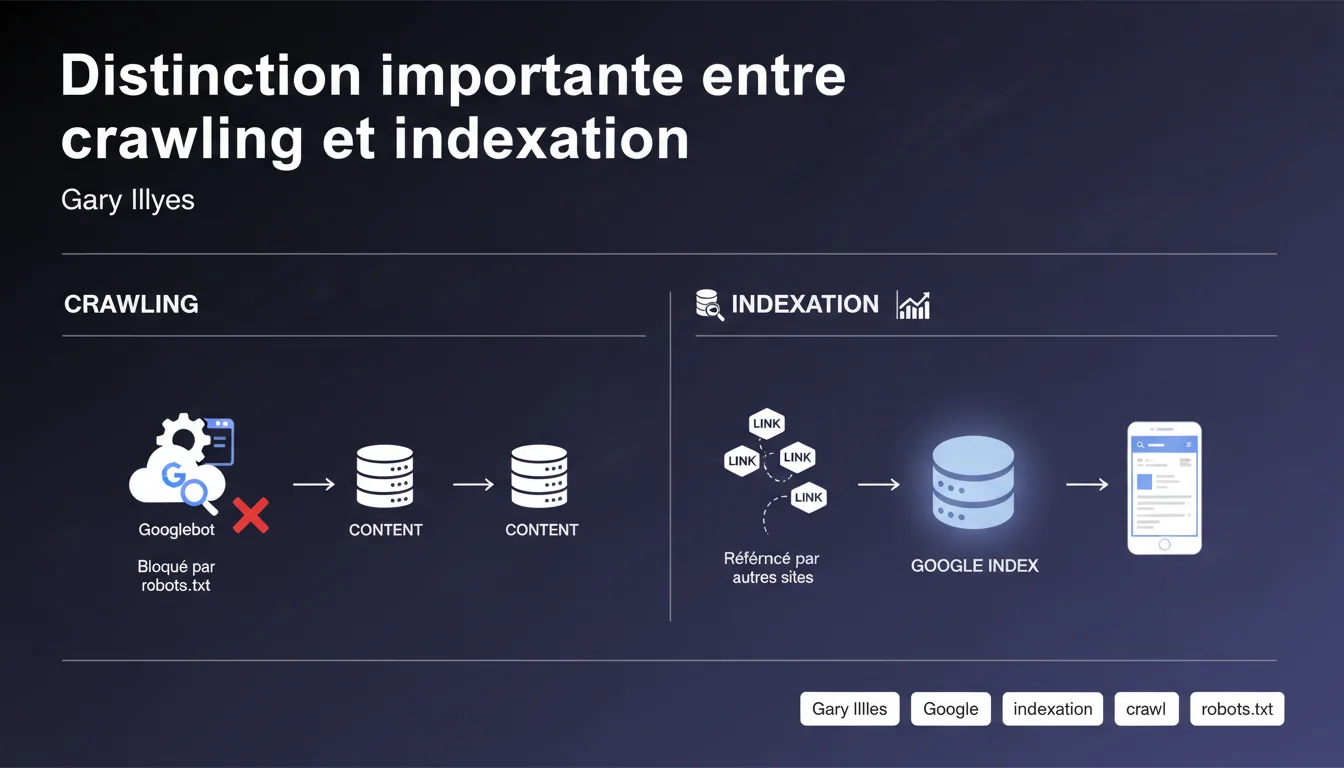

Google indexes URLs without crawling their content if they are blocked by robots.txt but referenced by backlinks. This mechanism creates ‘empty’ index entries — without title, description, or usable content. In practical terms: blocking a page from crawling doesn't guarantee it disappears from the index.

What you need to understand

Why does Google index URLs it cannot crawl?

The engine distinguishes between two separate processes: crawling (retrieving HTML) and indexing (storing in the database). When a URL is blocked by robots.txt, Googlebot cannot access it. However, if other sites link to that page, Google knows of its existence.

In this case, the URL can appear in search results — but without a title or usable meta description. The index entry remains skeletal, based solely on external link anchors and off-page signals.

What are the practical consequences for a site blocked from crawling?

A page blocked by robots.txt but indexed appears in Google with a generic notice such as “No information available for this page.” The CTR is disastrous, and the user experience is non-existent. Worse yet, you have no control over the displayed title or description.

This situation frequently occurs with internal PDFs, poorly configured back offices, or member areas inadvertently referenced. Blocking robots.txt does not protect them from indexing — it just makes them invisible to the crawler.

How can I check if my site is affected?

In Search Console, look for indexed URLs that have not been crawled. Filter by “Blocked by robots.txt.” If you find results, it means Google has indexed these pages without accessing the content — probably via backlinks or an old sitemap.

- Crawling and indexing are two distinct processes — one does not mechanically depend on the other

- A URL blocked by robots.txt can remain indexable if it receives external backlinks

- The index entry will be empty: no title, no description, no usable content

- To truly de-index, use noindex (but beware: robots.txt prevents it from being seen)

- Search Console allows you to identify indexed URLs that are blocked from crawling

SEO Expert opinion

Is this distinction really applied in the field?

Yes, we regularly observe URLs in “Blocked by robots.txt” that remain indexed. Typically: a PDF linked by an external directory, a product page referenced by a partner, a customer area mentioned in a forum. Google sees the link, knows the URL, but cannot crawl the content.

The issue — and this is where Gary's statement becomes interesting — is that many SEOs still think that robots.txt = de-indexation. False. Robots.txt blocks access, but does not prevent indexing if external signals exist.

What nuances should be added to this rule?

In practice, a URL blocked from crawling has very little chance to rank. No content = no thematic relevance. It may appear in SERPs, but rarely beyond the 10th page. Except in very specific cases: strong domain authority + ultra-optimized link anchors.

Another nuance: if a page has already been crawled before being blocked, Google keeps the old version in cache. Indexing does not restart from scratch — it freezes. The title and meta remain those from before the block, until Google decides to purge the entry. [To verify]: the retention duration varies according to the authority of the page and the frequency of historical updates.

In what cases does this mechanism really cause problems?

When you block a sensitive area — back office, customer space, staging environment — thinking it will be rendered invisible. If an external link points to it (an employee accidentally sharing the URL, a leak in a GitHub changelog), Google can index it. Result: a sensitive URL appears in search results, even without accessible content.

Practical impact and recommendations

What should you actually do to avoid this trap?

If you want a page to disappear from the index, do not use robots.txt. Place a noindex tag in the HTML and let Google crawl the page to read the directive. Once de-indexed, you can then block crawling if you want to save budget.

For already blocked and indexed content, there are two options: either temporarily unblock the crawl with a noindex, or use the URL removal tool in Search Console. The latter method is faster but temporary (6 months). The former is permanent.

How can I check that my robots.txt isn't preventing de-indexation?

Audit your robots.txt file: look for Disallow that block entire sections. Cross-check with the indexed URLs in Search Console. If you find pages blocked from crawling but present in the index, it means backlinks are keeping them active.

Use a tool like Screaming Frog in “List” mode to check that sensitive pages have a noindex and are crawlable. A noindex on a blocked page serves absolutely no purpose — Google will never see it.

What mistakes should be absolutely avoided?

- Never block crawling on a page you want to de-index — let Google read the noindex

- Do not confuse robots.txt (crawling control) and noindex (indexing control)

- Regularly check Search Console to identify “Blocked by robots.txt” URLs that are indexed

- Properly de-index with noindex before blocking crawl if necessary

- Never rely solely on robots.txt to protect sensitive content

- Monitor external backlinks pointing to non-public areas

❓ Frequently Asked Questions

Peut-on forcer la désindexation d'une page bloquée par robots.txt ?

Si une page a déjà été crawlée avant d'être bloquée, que devient son indexation ?

Un noindex sur une page bloquée au crawl est-il utile ?

Comment repérer les pages indexées sans contenu dans la Search Console ?

Robots.txt protège-t-il réellement les contenus sensibles ?

🎥 From the same video 11

Other SEO insights extracted from this same Google Search Central video · published on 21/12/2021

🎥 Watch the full video on YouTube →

💬 Comments (0)

Be the first to comment.