Official statement

Other statements from this video 11 ▾

- □ Le fichier robots.txt empêche-t-il réellement l'indexation de vos pages ?

- □ Votre outil de test SEO est-il vraiment un crawler aux yeux de Google ?

- □ Googlebot suit-il vraiment les liens ou fonctionne-t-il autrement ?

- □ Le parser robots.txt open source de Google est-il vraiment utilisé en production ?

- □ Pourquoi Google abandonne-t-il les directives d'indexation dans robots.txt ?

- □ Publier un site web équivaut-il juridiquement à autoriser Google à le crawler ?

- □ Comment Googlebot ajuste-t-il sa fréquence de crawl pour ne pas faire planter vos serveurs ?

- □ Peut-on indexer une page sans la crawler ?

- □ Le robots.txt est-il vraiment suffisant pour contrôler le crawl de votre site ?

- □ Qui a vraiment créé le parser robots.txt de Google ?

- □ Pourquoi Google refuse-t-il catégoriquement de moderniser le format robots.txt ?

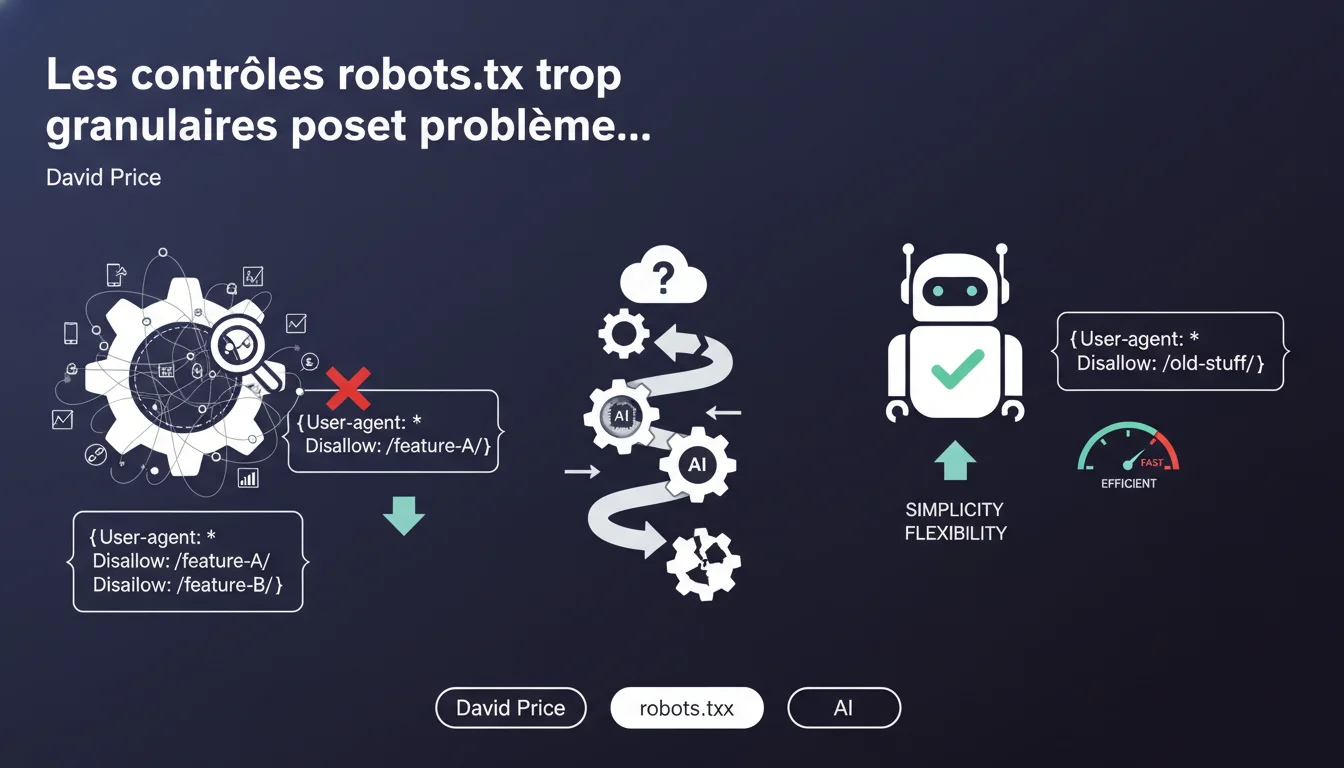

Google explains that overly specific robots.txt directives create interpretation problems as search engine features evolve. This raises the question: is it really for our benefit, or to simplify Google's work?

What you need to understand

David Price from Google weighs in on a technical debate: should we refine robots.txt directives as far as possible to control precisely what Googlebot can crawl?

His answer is clear — no. And the explanation boils down to one word: maintenance.

What does

SEO Expert opinion

Does this justification really hold up?

Partly, yes. The long-term maintenance argument is valid: I’ve seen 200-line robots.txt files become unmanageable after a migration or redesign, with forgotten rules blocking critical resources and no one able to say why.

But let’s be honest: this position also suits Google. A simple robots.txt means fewer edge cases to handle, less support, and fewer bugs to fix on its side.

In what cases is this recommendation insufficient?

On sites with massive dynamic content generation — marketplaces, directories, aggregators — it is sometimes necessary to block precise patterns to avoid crawling millions of unnecessary pages. A simple Disallow: /search? might be too blunt.

In these cases, “granular” directives are unavoidable. But Google is right on one point: document them, maintain them, and plan for regular reviews. [To verify] We lack documented feedback on the real impact of a Googlebot change on complex robots.txt files, and Google does not publish a detailed changelog with each crawler update.
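To make the granular case concrete, here is a minimal Python sketch of how wildcard rules can be evaluated. It assumes the commonly documented behavior: `*` matches any run of characters, a trailing `$` anchors the end of the URL, the longest matching pattern wins, and an allow rule wins a tie. The rule set and the helper names `pattern_to_regex` and `is_allowed` are hypothetical, and this is not Google's actual parser.

```python
import re

def pattern_to_regex(pattern: str) -> re.Pattern:
    """Translate a robots.txt path pattern into a compiled regex.

    Only two wildcards are handled: '*' (any run of characters) and a
    trailing '$' (end of URL). Everything else is matched literally.
    """
    anchored = pattern.endswith("$")
    if anchored:
        pattern = pattern[:-1]
    body = ".*".join(re.escape(part) for part in pattern.split("*"))
    return re.compile(body + ("$" if anchored else ""))

def is_allowed(rules: list[tuple[str, str]], path: str) -> bool:
    """Apply longest-match precedence; on a tie, 'allow' wins."""
    best_len, allowed = -1, True  # no matching rule means the path is allowed
    for kind, pattern in rules:
        if not pattern:
            continue  # an empty pattern matches nothing
        if pattern_to_regex(pattern).match(path):
            is_allow = kind == "allow"
            if len(pattern) > best_len or (len(pattern) == best_len and is_allow):
                best_len, allowed = len(pattern), is_allow
    return allowed

# Hypothetical marketplace rules: block internal search except one sort order,
# and block any URL carrying a session identifier.
rules = [
    ("disallow", "/search?"),
    ("allow", "/search?sort=popular$"),
    ("disallow", "/*?sessionid="),
]

for path in ["/search?q=shoes", "/search?sort=popular",
             "/item/42?sessionid=abc", "/item/42"]:
    print(path, "->", "allowed" if is_allowed(rules, path) else "blocked")
```

Even with three rules, the outcome for a given URL depends on pattern length and rule type rather than on line order, which is the kind of interaction that becomes hard to reason about in a 200-line file.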

What is the proposed concrete alternative?

Google says: meta robots, X-Robots-Tag, canonical, Search Console parameters. True, these tools offer finer control. But they require Googlebot to crawl the page first to read the instructions, which consumes crawl budget.

So, if the goal is to save server resources or prevent access even before crawling, robots.txt remains essential. The contradiction is there: Google says “keep it simple” while knowing that some sites have no choice.
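The trade-off can be seen in a small sketch. The WSGI app below (standard library conventions only, with a hypothetical `/search` path and a hypothetical `headers_for` helper) attaches `X-Robots-Tag: noindex` to internal search pages. The header only exists in the HTTP response, so a crawler has to request the URL to see it; if the same path were disallowed in robots.txt, the header would never be read.

```python
def app(environ, start_response):
    """Minimal WSGI app that marks internal search pages as noindex."""
    path = environ.get("PATH_INFO", "/")
    headers = [("Content-Type", "text/html; charset=utf-8")]
    if path.startswith("/search"):
        # The page stays crawlable, but crawlers are asked not to index it.
        headers.append(("X-Robots-Tag", "noindex"))
    start_response("200 OK", headers)
    return [b"<html><body>ok</body></html>"]

def headers_for(path):
    """Call the app once and return the response headers as a dict."""
    captured = {}

    def start_response(status, headers):
        captured.update(headers)

    app({"PATH_INFO": path}, start_response)
    return captured

print(headers_for("/search"))
print(headers_for("/products/1"))
```

Each request that reaches this code costs one crawl, which is why the header approach gives finer control but does not save server resources.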

Practical impact and recommendations

What should you practically do with your robots.txt?

Audit your current file. If you have dozens of lines targeting specific endpoints, API versions, ultra-specific GET parameters — ask yourself: are they still needed?

Favor broad and stable rules. For example, block /admin/ instead of /admin/dashboard/v2/. If you go to v3 tomorrow, the rule remains valid.
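The `/admin/` example can be checked with Python's standard `urllib.robotparser`. This is a sketch only: the standard library parser is not Google's parser (it does simple prefix matching and no wildcards), but for plain prefix rules like these the behavior is the same. The `example.com` URLs and the `build_parser` helper are placeholders.

```python
from urllib.robotparser import RobotFileParser

def build_parser(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt content from a string instead of fetching it."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser

broad = build_parser("User-agent: *\nDisallow: /admin/")
narrow = build_parser("User-agent: *\nDisallow: /admin/dashboard/v2/")

for url in ["https://example.com/admin/dashboard/v2/",
            "https://example.com/admin/dashboard/v3/"]:
    print(url,
          "| broad rule allows:", broad.can_fetch("Googlebot", url),
          "| narrow rule allows:", narrow.can_fetch("Googlebot", url))
```

The narrow rule stops protecting the dashboard as soon as the path changes to v3, while the broad rule keeps covering it.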

What mistakes should be absolutely avoided?

Never block critical resources (CSS, JS, images) with overly precise rules. Google may misinterpret them, especially as its rendering evolves. Always test your changes with the robots.txt test tool in Search Console.

Avoid complex or nested wildcard patterns. Robots.txt does not support full regular expressions, only the * and $ wildcards, and even when a pattern is technically valid it can become ambiguous during a Google parser update.

How can you check that your configuration is compliant?

- Open Search Console → Robots.txt Test Tool → verify that critical URLs are not blocked.

- List all Disallow directives and ask yourself: “If Google changes its crawler tomorrow, does this rule remain relevant?”

- Document every complex rule: why it exists, what it blocks, who added it.

- Review your robots.txt at least every 6 months, especially after a migration or redesign.

- Use meta robots or X-Robots-Tag for anything requiring fine control (noindex, nofollow, indexIfEmbedded, etc.).

- If you block dynamic patterns, test them regularly with third-party tools (Screaming Frog, Botify, OnCrawl).
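The "list all Disallow directives" step from the checklist above can be partly automated. The sketch below flags rules that look overly specific. The thresholds (more than two path segments, more than one wildcard, a named query parameter) are arbitrary assumptions for illustration, not Google guidance, and the sample file and the `audit_disallow_rules` helper are hypothetical.

```python
def audit_disallow_rules(robots_txt: str, max_segments: int = 2):
    """Return (line_number, path, reasons) for Disallow rules worth reviewing."""
    findings = []
    for number, raw in enumerate(robots_txt.splitlines(), start=1):
        line = raw.split("#", 1)[0].strip()  # drop trailing comments
        key, sep, value = line.partition(":")
        if not sep or key.strip().lower() != "disallow":
            continue
        path = value.strip()
        reasons = []
        # Count path segments, ignoring any query string.
        segments = [s for s in path.split("?")[0].split("/") if s]
        if len(segments) > max_segments:
            reasons.append(f"{len(segments)} path segments")
        if path.count("*") > 1:
            reasons.append("multiple wildcards")
        if "?" in path and "=" in path:
            reasons.append("targets a specific query parameter")
        if reasons:
            findings.append((number, path, reasons))
    return findings

sample = """\
User-agent: *
Disallow: /admin/
Disallow: /admin/dashboard/v2/
Disallow: /*/filter/*?color=
Disallow: /api/v1/internal/export  # why does this exist?
"""

for number, path, reasons in audit_disallow_rules(sample):
    print(f"line {number}: {path} ({', '.join(reasons)})")
```

A flagged rule is not necessarily wrong; it is a candidate for the documentation and six-month review described in the checklist.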

Robots.txt should remain a coarse and stable blocking tool. Anything requiring granularity should pass through meta robots, canonical, or Search Console. If your file becomes complex, it is probably a sign to rethink your site architecture rather than adding patches.

These technical optimizations — especially on high-volume sites — require specialized expertise and continuous monitoring. If your robots.txt exceeds 50 lines or if you manage millions of indexable pages, consulting a specialized SEO agency can help you avoid costly mistakes and ensure a sustainable configuration, even when Google evolves its crawlers.

❓ Frequently Asked Questions

Does Google give notice when it changes Googlebot's behavior?

Can you block Googlebot-Image differently from Googlebot while staying “simple”?

If I block parameters via robots.txt, does Google ignore them completely?

What is the recommended maximum size for a robots.txt file?

Should I remove all my complex directives immediately?

🎥 From the same video 11

Other SEO insights extracted from this same Google Search Central video · published on 21/12/2021
