Official statement

Other statements from this video (11)

- Does robots.txt really prevent your pages from being indexed?

- Is your SEO testing tool really a crawler in Google's eyes?

- Does Googlebot really follow links, or does it work differently?

- Is Google's open-source robots.txt parser really used in production?

- Why is Google dropping indexing directives from robots.txt?

- Does publishing a website legally amount to authorizing Google to crawl it?

- How does Googlebot adjust its crawl rate to avoid crashing your servers?

- Can a page be indexed without being crawled?

- Why does Google reject overly granular robots.txt directives?

- Is robots.txt really enough to control how your site is crawled?

- Why does Google flatly refuse to modernize the robots.txt format?

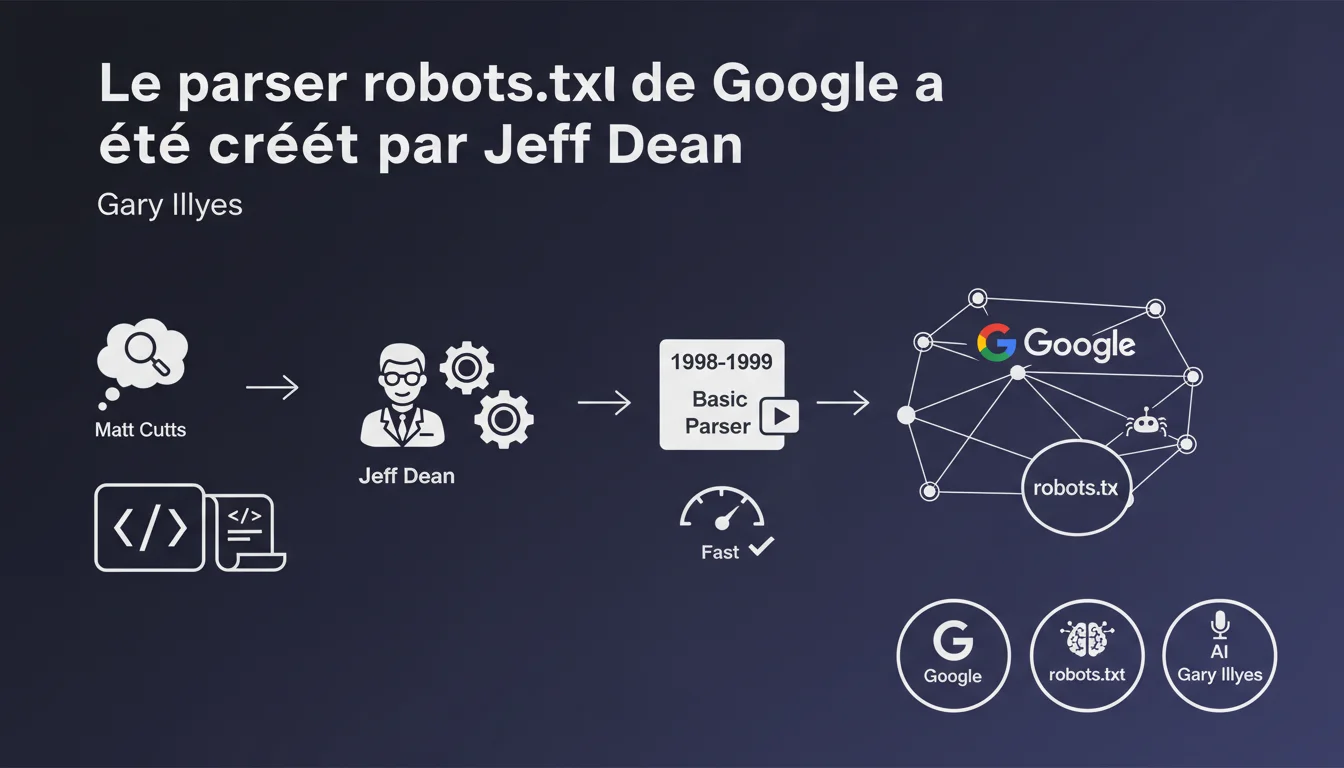

Jeff Dean wrote Google's robots.txt parser in 1998-1999, after Matt Cutts suggested that Google support the protocol. The initial code was minimalist: only a few lines long. This anecdote sheds light on the origins of a fundamental component of modern crawling.

What you need to understand

Why does this historical revelation still matter?

The robots.txt parser is the first line of defense between a website and Google's bots. The fact that it was designed by Jeff Dean, a legendary figure in software engineering at Google, and that its first version was very simple, is a reminder of a key principle: efficiency trumps complexity.

Matt Cutts proposed the idea of implementing the robots.txt protocol, which Google then adopted. The robots.txt file has become the standard mechanism for controlling crawler access to a site's resources.

What does this reveal about the evolution of Google’s crawler?

The initial parser consisted of just a few lines of code. Today, Google's crawling system is a complex ecosystem that manages billions of pages, conditional rules, and advanced patterns.

This original simplicity explains why some behaviors of the parser sometimes seem rigid: the foundations laid in 1998-1999 have influenced the current architecture, even after many iterations.

Is the robots.txt protocol still relevant today?

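Absolutely. Despite its age, robots.txt is still the first file Googlebot fetches before crawling a site. If it is misconfigured, it can block crawling of entire sections of a site, a common mistake in production. Note that blocking crawling is not the same as blocking indexing: a disallowed URL can still appear in search results, without its content, if other pages link to it.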
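Because robots.txt is fetched first, it helps to know exactly which file governs a given URL: rules apply per scheme, host and port, and the file always sits at the fixed path `/robots.txt` on that origin. Below is a minimal Python sketch of that lookup; the helper name `robots_url_for` is hypothetical and not part of any Google tool.

```python
from urllib.parse import urlsplit, urlunsplit


def robots_url_for(page_url: str) -> str:
    """Return the robots.txt URL that governs page_url (hypothetical helper).

    robots.txt applies per scheme + host + port and always lives at the
    top-level path "/robots.txt"; the page's own path, query and fragment
    play no role in locating it.
    """
    parts = urlsplit(page_url)
    if not parts.scheme or not parts.netloc:
        raise ValueError(f"not an absolute URL: {page_url!r}")
    # Keep scheme and netloc (host[:port]); swap the path, drop query/fragment.
    return urlunsplit((parts.scheme, parts.netloc, "/robots.txt", "", ""))
```

For example, `robots_url_for("https://www.example.com/shop/item?id=3")` gives `https://www.example.com/robots.txt`, while a page on `http://example.com:8080` is governed by a different file, `http://example.com:8080/robots.txt`.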

Google also open-sourced its parser in 2019, which helped standardize behavior across implementations and lets developers test their configurations locally.

- Jeff Dean coded the original robots.txt parser between 1998 and 1999

- The initial code was very basic, with only a few lines

- Matt Cutts proposed integrating the robots.txt protocol at Google

- The parser has evolved but retains an architecture inherited from this initial design

- Google opened the source code of the parser in 2019, standardizing implementations

SEO Expert opinion

Does this statement bring any technical value?

Honestly, it’s more of a historical anecdote than a strategic revelation. Knowing who wrote the initial code doesn’t change how we should configure a robots.txt today.

Still, it confirms an often-underestimated point: Google's foundations rest on simple engineering choices, with no over-optimization. What matters is that the parser works predictably and quickly.

Is the current parser still as basic?

Absolutely not, although the exact figure is [To be verified]: it would be interesting to know how many lines the modern parser contains. What SEO professionals regularly run into are subtle behaviors: wildcard handling, how conflicting Allow/Disallow rules are resolved, and non-standard directives that Google does not honor (Googlebot ignores crawl-delay, for example).
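The two behaviors that most often surprise people, wildcards and Allow/Disallow conflicts, follow rules Google documents publicly: `*` matches any sequence of characters, a trailing `$` anchors the end of the URL, the longest matching rule wins, and on a tie the less restrictive Allow wins. The Python sketch below models just those rules; it is not Google's C++ parser, the names `pattern_to_regex` and `is_allowed` are hypothetical, and it skips details such as percent-encoding normalization.

```python
import re
from typing import Iterable, Tuple

Rule = Tuple[str, str]  # ("allow" | "disallow", path pattern)


def pattern_to_regex(pattern: str) -> "re.Pattern[str]":
    """Translate a robots.txt path pattern into a regex anchored at the start.

    '*' matches any sequence of characters; a trailing '$' anchors the
    pattern to the end of the URL. Everything else is matched literally.
    """
    anchored = pattern.endswith("$")
    body = pattern[:-1] if anchored else pattern
    regex = ".*".join(re.escape(chunk) for chunk in body.split("*"))
    return re.compile(regex + ("$" if anchored else ""))


def is_allowed(rules: Iterable[Rule], path: str) -> bool:
    """Decide whether `path` may be crawled under one group's rules.

    The longest matching pattern wins; on equal length, allow beats
    disallow. If no rule matches, the path is allowed.
    """
    best_len = -1
    best_allow = True
    for kind, pattern in rules:
        if not pattern:
            continue  # an empty "Disallow:" matches nothing
        if pattern_to_regex(pattern).match(path):  # re.match anchors at start
            allow = kind == "allow"
            if len(pattern) > best_len or (len(pattern) == best_len and allow):
                best_len, best_allow = len(pattern), allow
    return best_allow
```

With `Allow: /page` and `Disallow: /*.htm`, the URL `/page.htm` is blocked because `/*.htm` is the longer pattern; with `Allow: /folder` and `Disallow: /folder`, `/folder/page` stays crawlable because the tie goes to Allow. Pass the path together with its query string (for example `/search?q=shoes`), since Google matches rules against both.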

The fact that the initial code was minimal suggests that some current limitations are not bugs but inherited architectural choices. Google appears to have consistently favored execution speed over syntactic flexibility.

Should we be concerned about the original simplicity?

No. The robustness of a system does not depend on its initial complexity. The robots.txt parser has proven its reliability for over two decades — that’s what matters.

Problems tend to arise when SEOs rely on exotic, undocumented patterns. Google's parser follows the Robots Exclusion Protocol (REP), standardized as RFC 9309 in 2022, and anything outside that framework should be treated as unguaranteed behavior.
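Following the REP starts with picking the right group of rules for a crawler. The sketch below assumes RFC 9309-style grouping: consecutive User-agent lines share one group, groups naming the same agent are merged, agent matching is case-insensitive, and a crawler without its own group falls back to `*`. Google's crawlers go one step further and fall back from a specialized token (such as Googlebot-Image) to a broader matching group (such as Googlebot); this simplified sketch does not model that. `parse_groups` and `rules_for` are hypothetical names.

```python
from typing import Dict, List, Tuple

Rule = Tuple[str, str]  # ("allow" | "disallow", path pattern)


def parse_groups(robots_txt: str) -> Dict[str, List[Rule]]:
    """Map each lower-cased user-agent token to the rules of its group(s)."""
    groups: Dict[str, List[Rule]] = {}
    current_agents: List[str] = []
    last_was_agent = False
    for raw in robots_txt.splitlines():
        line = raw.split("#", 1)[0].strip()  # drop comments and whitespace
        if ":" not in line:
            continue  # blank or unparseable line
        field, value = [part.strip() for part in line.split(":", 1)]
        field = field.lower()
        if field == "user-agent":
            if not last_was_agent:
                current_agents = []  # a new group starts here
            agent = value.lower()
            current_agents.append(agent)
            groups.setdefault(agent, [])  # repeated agents merge into one list
            last_was_agent = True
        elif field in ("allow", "disallow"):
            for agent in current_agents:
                groups[agent].append((field, value))
            last_was_agent = False
        # Other fields (Sitemap, Crawl-delay, ...) are ignored in this sketch.
    return groups


def rules_for(robots_txt: str, user_agent: str) -> List[Rule]:
    """Return the rules for `user_agent`, falling back to the '*' group."""
    groups = parse_groups(robots_txt)
    return groups.get(user_agent.lower(), groups.get("*", []))
```

Merging matters in practice: if a file declares `User-agent: googlebot` twice in different places, both sets of rules apply to Googlebot, which is easy to miss when editing a long file by hand.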

Practical impact and recommendations

What should you concretely do with your robots.txt?

Keep it simple and clear. No convoluted patterns that could be misinterpreted. Test each modification in Google Search Console before deploying it to production.

Avoid blocking critical resources (CSS, JS) unless you know exactly what you're doing. Google has explicitly recommended not to block assets necessary for page rendering.

What mistakes should you absolutely avoid?

Accidentally blocking the entire site via a misplaced Disallow: / — this happens more often than one might think, especially after a migration or a CMS change.
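Since one stray line can cut off crawling of a whole site after a migration, a pre-deployment check is cheap insurance. The sketch below (`sitewide_blocks` is a hypothetical helper, not a Google tool) scans a robots.txt body and reports which user-agent groups contain a bare `Disallow: /`. It is deliberately conservative: an Allow rule in the same group could still re-open some paths, so treat a hit as a warning to review rather than a verdict.

```python
from typing import List


def sitewide_blocks(robots_txt: str) -> List[str]:
    """Return the user agents whose group contains a bare 'Disallow: /'."""
    flagged: List[str] = []
    agents: List[str] = []
    in_agent_run = False
    for raw in robots_txt.splitlines():
        line = raw.split("#", 1)[0].strip()  # ignore comments
        field, sep, value = line.partition(":")
        if not sep:
            continue  # blank or unparseable line
        field, value = field.strip().lower(), value.strip()
        if field == "user-agent":
            if not in_agent_run:
                agents = []  # consecutive User-agent lines form one group
            agents.append(value)
            in_agent_run = True
        elif field in ("allow", "disallow"):
            in_agent_run = False
            if field == "disallow" and value == "/":
                for agent in agents:
                    if agent not in flagged:
                        flagged.append(agent)
    return flagged
```

Run against the file you are about to deploy (for example, in a CI step), an unexpected `["*"]` result is exactly the staging leftover this paragraph warns about, while an empty `Disallow:` line, which allows everything, is correctly not flagged.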

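Using robots.txt to hide sensitive content. This file is public and readable by anyone, and its Disallow lines can even point malicious actors to where to look. For genuinely private content, use server-side authentication. To keep a page out of Google's index, use a noindex robots meta tag, and leave the page crawlable: if robots.txt blocks it, Googlebot never sees the noindex.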

How do you check if everything is working correctly?

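Use the robots.txt testing tool in Google Search Console. It shows you exactly how Googlebot interprets your file, URL by URL. (Google has since replaced that tester with a robots.txt report in Search Console; its open-source parser can also be run locally to check individual URLs.)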

Regularly check the coverage reports to detect any unintentional blockages. A sudden spike in pages excluded by robots.txt often signals a configuration error.

- Test each modification of robots.txt in Google Search Console before deployment

- Never block CSS and JavaScript necessary for rendering

- Monitor coverage reports for unintentional blockages

- Do not use robots.txt to hide sensitive content — the file is public

- Prioritize simplicity: fewer lines = less risk of error

- Document each non-standard directive to understand its future impact

- Check syntax with validators compliant with the REP standard

robots.txt remains an essential tool for crawl control, but managing it requires diligence and vigilance. A configuration error can cost you organic visibility.

For complex sites or multi-domain architectures, fine-tuning crawl budget and managing robots.txt strategically require deep expertise. Support from a specialized SEO agency helps avoid common pitfalls and implement crawl strategies tailored to your technical context.

❓ Frequently Asked Questions

Who proposed integrating the robots.txt protocol at Google?

Is Google's robots.txt parser code still that simple?

Has Google made its robots.txt parser public?

Can CSS/JS resources be blocked via robots.txt?

How do you test a robots.txt file before putting it into production?


Other SEO insights extracted from this same Google Search Central video · published on 21/12/2021

