Official statement

Other statements from this video 16 ▾

- □ Le balisage Local Business doit-il vraiment se limiter à une seule ville ?

- □ Faut-il vraiment migrer 1:1 sans rien changer lors d'un changement de domaine ?

- □ Schema.org : pourquoi Google ignore-t-il une partie de vos balises structurées ?

- □ Faut-il vraiment rédiger du texte descriptif autour de vos illustrations pour ranker dans Google Images ?

- □ Faut-il publier tous les jours pour améliorer son référencement Google ?

- □ Le nombre de mots est-il vraiment sans importance pour le référencement ?

- □ Les mots-clés dans les URLs ont-ils encore un impact en SEO ?

- □ Peut-on vraiment lancer deux sites quasi-identiques sans risquer de pénalité Google ?

- □ Pourquoi vos liens JavaScript doivent absolument utiliser des balises A avec href valide ?

- □ L'audio sur une page influence-t-il réellement le classement Google ?

- □ Faut-il vraiment éviter de modifier les balises meta avec JavaScript ?

- □ Les mises à jour algorithmiques de Google sont-elles vraiment différentes des pénalités ?

- □ Pourquoi Google ne communique-t-il que sur une fraction de ses mises à jour d'algorithme ?

- □ Les données structurées améliorent-elles vraiment votre classement dans Google ?

- □ Faut-il vraiment éviter d'utiliser noindex et canonical sur la même page ?

- □ Les données structurées vidéo servent-elles uniquement à l'indexation ?

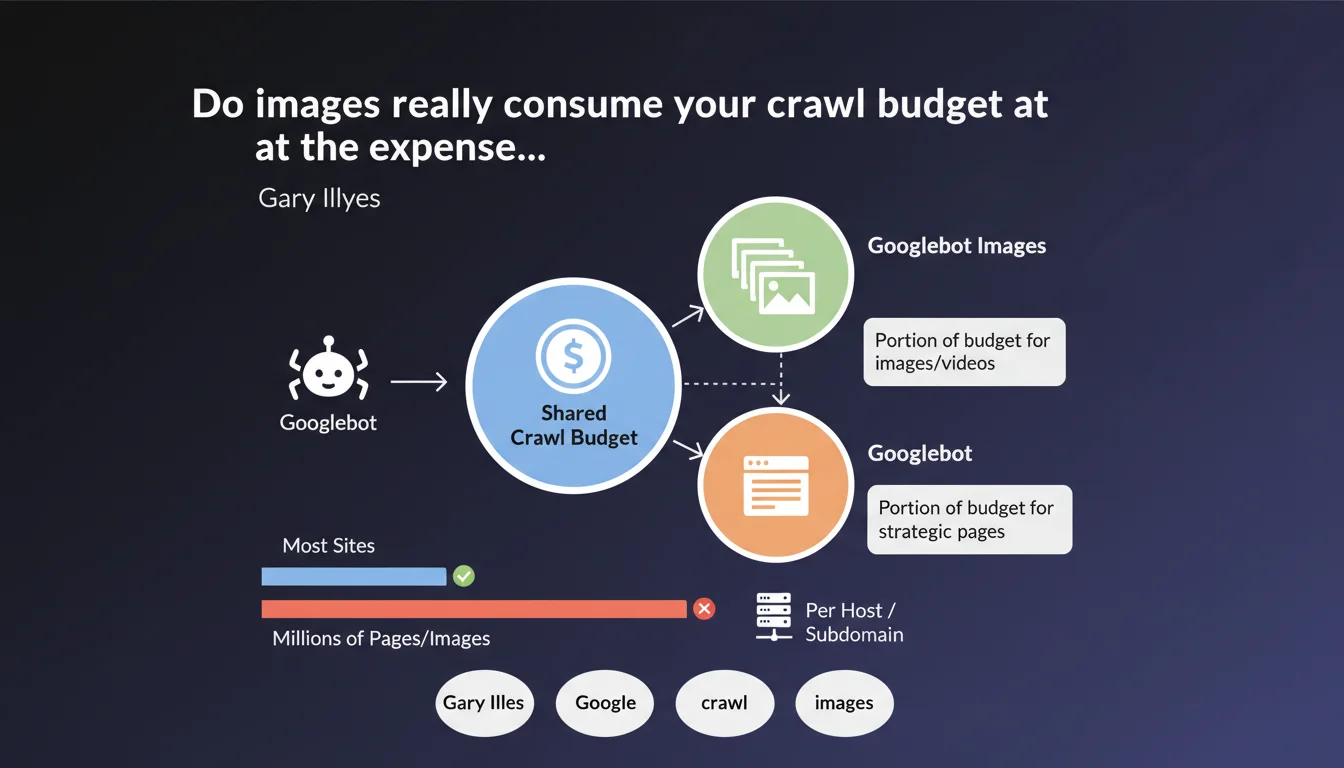

Googlebot and its variants (Images, News, etc.) share a single crawl budget per host. If your site hosts millions of images, Googlebot Images can consume a significant portion of the budget that could have been allocated to crawling your HTML pages. Each subdomain has its own budget, which opens up architectural optimization possibilities.

What you need to understand

What does this shared crawl budget really mean in concrete terms?

When Gary Illyes talks about a shared crawl budget, he confirms that Google doesn't segment crawl resources by content type. Whether Googlebot explores your HTML pages or Googlebot Images scans your JPGs, everything draws from the same pool.

For an average site with a few hundred or thousand pages, this notion remains theoretical. Crawl budget isn't the limiting factor — your server capacity, content quality, and technical structure matter more. But when you manage millions of resources (massive e-commerce, media platform, image-heavy sites), the game changes.

Why does this statement specifically target large sites?

Small and medium-sized sites generally benefit from a crawl budget that exceeds their actual needs. Google can explore 10,000 URLs per day while you publish 50 per month — no risk of saturation.

On the other hand, a site with 5 million images produced faces permanent tradeoffs. If Googlebot Images mobilizes 60% of the daily budget to scan redundant or low-value visuals, your new product pages or strategic articles may wait days before being crawled.

What does the subdomain specification bring to the table?

The key information here: each subdomain has its own crawl budget. It's not a revelation in itself, but it's the official confirmation that a subdomain architecture can serve as an optimization lever.

If you isolate your millions of images on cdn.yoursite.com or img.yoursite.com, you split the problem. The main subdomain keeps its budget intact for priority content, while the CDN handles visual resource crawling without cannibalizing high-ROI pages.

- Single crawl budget per host, shared between all Googlebots (standard, Images, News, etc.)

- This issue only matters for sites with very high volume (millions of resources)

- Each subdomain has its own distinct and independent crawl budget

- Small sites don't need to worry — their budget far exceeds their needs

SEO Expert opinion

Is this statement aligned with what we observe in the field?

Yes, overall. Log audits on high-volume platforms show that Googlebot Images can actually represent 30 to 50% of total crawling on certain e-commerce or visually rich media sites. This isn't anecdotal.

Where it gets tricky is that Google remains vague about exact thresholds. "Millions of pages" — OK, but at what point precisely does budget become a limiting factor? 500,000 URLs? 2 million? 10 million? [To be verified] because Google doesn't provide exploitable numerical data.

What nuances should be added to this advice?

First nuance: not all Googlebots are equal in terms of resource consumption. Googlebot Images can technically crawl faster than standard Googlebot because images don't require complex JavaScript rendering or heavy semantic analysis.

Second nuance: crawl budget isn't fixed. Google adjusts it dynamically based on server health, site popularity, content freshness. If your server handles the load well and your pages generate traffic, Google naturally increases your budget — within certain limits.

In which cases does this rule become critical?

Let's be honest: for 95% of sites, this is a non-issue. Even an e-commerce site with 50,000 products and 200,000 associated images will probably never encounter real friction.

It becomes critical when you combine: massive volume (millions of resources), high publication frequency (thousands of new content pieces per day), and suboptimal technical architecture (slow server response times, poorly managed infinite pagination, duplication). There, crawl budget becomes a measurable bottleneck.

Practical impact and recommendations

What should you concretely do if you manage a large site?

First step: audit your server logs. Identify the crawl distribution between standard Googlebot, Googlebot Images, and other variants. If Googlebot Images consumes more than 40% of your budget while your images don't bring significant SEO traffic, you have an optimization lever.

Second action: prioritize strategic content. Use robots.txt to block crawling of redundant or low-value images (thumbnails, multiple versions of the same visual). Leverage noindex directives to prevent Google from spending time on non-indexable resources.

Is a subdomain architecture the silver bullet?

Not necessarily. Moving your images to a dedicated subdomain can indeed isolate their crawl budget, but it introduces technical complexities: CORS management, potential SSL certificate duplication, impact on load time if the CDN isn't properly configured.

It's a relevant strategy for platforms hosting tens of millions of resources and experiencing abnormal crawl delays on priority pages. For others, optimizing internal structure and server response time will have far greater impact.

How do you measure the real impact on your site?

Set up a server log monitoring system (Oncrawl, Botify, or in-house solutions via ELK/Splunk). Track daily crawl volume by Googlebot type, cross-reference with Google Search Console data (pages crawled vs pages indexed).

If you detect an abnormal gap between publishing priority content and its appearance in the index, and your logs show budget saturation by Googlebot Images, then you've confirmed the problem — and it's time to act.

- Analyze crawl distribution between different Googlebots via your server logs

- Block or deprioritize low-value visual resources with

robots.txt - Consider a subdomain architecture only if you manage several million resources

- Optimize server response time and internal link structure before blaming crawl budget

- Monitor delays between publication and indexation to detect bottlenecks

❓ Frequently Asked Questions

À partir de combien de pages le budget de crawl devient-il un vrai problème ?

Si je bloque mes images dans robots.txt, est-ce que Google Image Search les indexera quand même ?

Dois-je créer un sous-domaine dédié pour mes images même si j'ai « seulement » 100 000 visuels ?

Est-ce que le budget de crawl d'un sous-domaine peut être transféré au domaine principal ?

Comment savoir si Googlebot Images consomme trop de mon budget de crawl ?

🎥 From the same video 16

Other SEO insights extracted from this same Google Search Central video · published on 07/09/2022

🎥 Watch the full video on YouTube →

💬 Comments (0)

Be the first to comment.